Navigating the Path to CyberPeace: Insights and Strategies

Featured #factCheck Blogs

Executive Summary

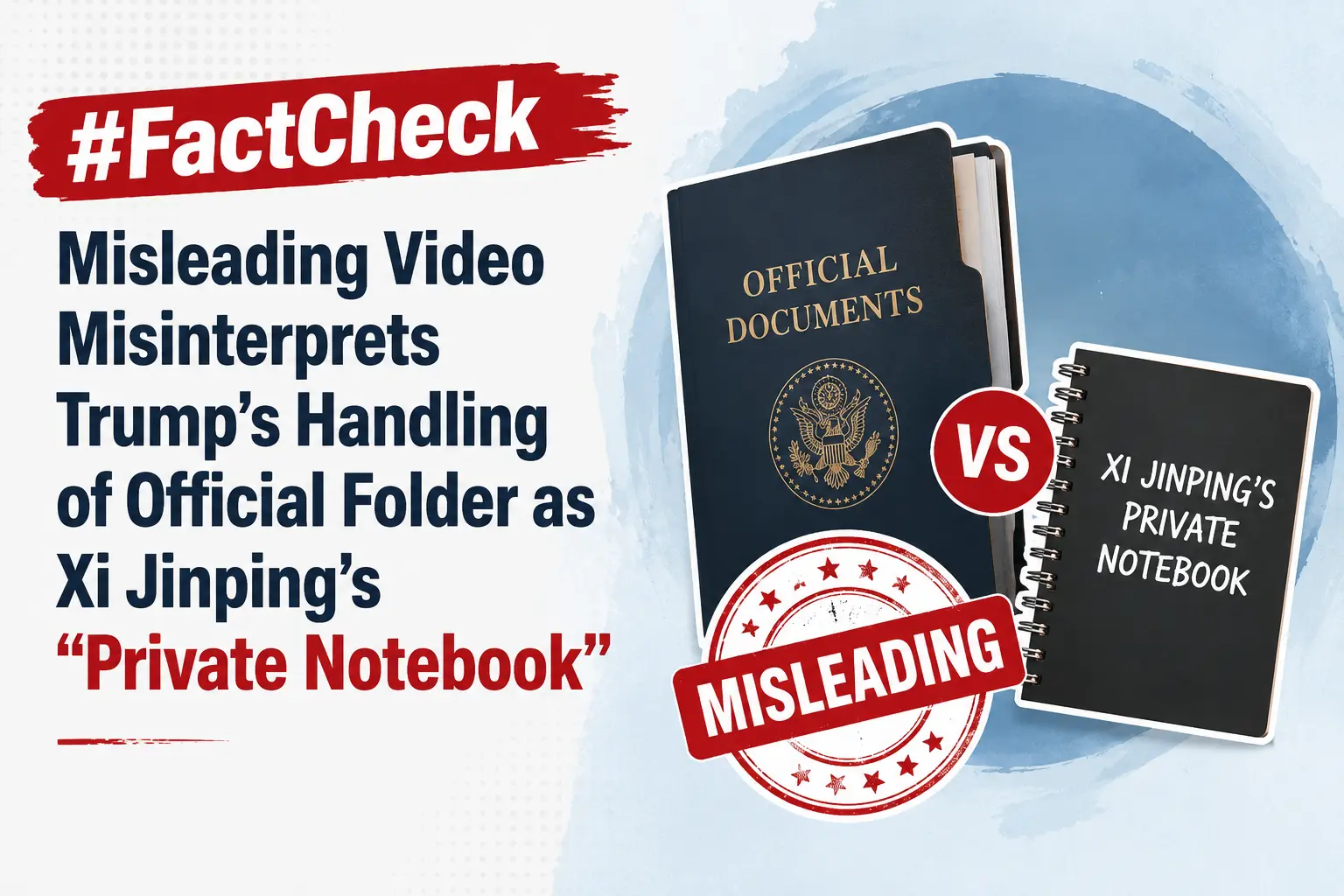

A video circulating on social media claims that during a summit in Beijing, Donald Trump was seen peeking into Chinese President Xi Jinping’s “private notebook” while Xi briefly stepped away. However, a fact-check by CyberPeace Research Wing found the claim to be baseless. A review of the full event footage clearly shows that the folder in question belonged to Donald Trump himself, not Xi Jinping. The viral interpretation is therefore misleading.

Claim

An X user shared the clip alleging, “Trump caught sneaking a peek at Xi Jinping’s private notebook during a Beijing banquet while Xi stepped away.”

Fact Check

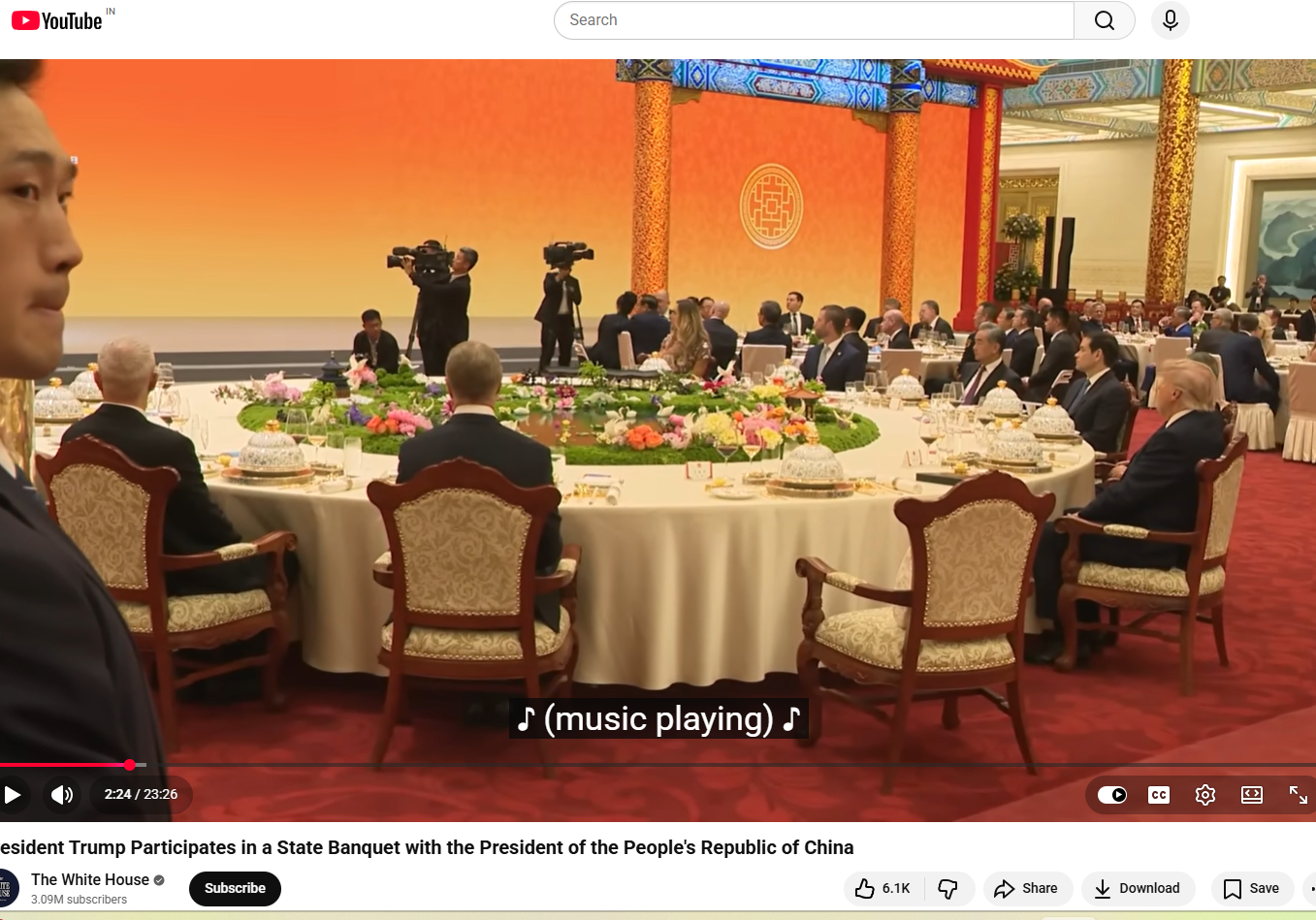

A longer version of the video, shared by NBC News on May 14, shows the state banquet held at the Great Hall of the People in Beijing. Around the 1-minute-50-second mark, Xi Jinping, seated to Trump’s left, gets up and walks to the podium. The viral clip follows shortly after, showing Trump opening the folder placed to his left and flipping through its pages.

The White House also uploaded the full footage on its official YouTube channel, showing wider, uninterrupted shots of the event. Around the two-minute mark, the announcer says, “And now a toast by President Xi,” after which Xi Jinping stands up. Immediately after, Trump is seen opening the folder on his left and reading from it.

Later in the video, around the 12-minute mark, when Xi returns to his seat, Trump is seen standing up, taking the folder with him to the podium, turning pages, and reading from it. The same sequence can also be seen in the NBC News footage at around 11 minutes and 50 seconds. This clearly indicates that the folder belonged to the U.S. President and not Xi Jinping, and that Trump was not peeking into any private notebook. Another key detail is the embossed emblem on the folder, which closely resembles the Seal of the President of the United States. The American bald eagle, the national bird of the United States, is clearly visible at the centre. A comparison between the viral screenshot and the official seal shows they are nearly identical.

Conclusion

The viral claim is misleading and taken out of context. A detailed review of the full footage, including official recordings from NBC News and the White House, clearly shows that the folder in question belonged to Donald Trump and not Chinese President Xi Jinping. At multiple points in the video, Trump is seen opening, handling, and reading from the same folder, including while Xi Jinping is away from his seat and later after he returns. The visual evidence from the event also supports this conclusion. The embossed seal on the folder matches the official Seal of the President of the United States, further confirming that it was part of Trump’s official briefing material and not any private document belonging to Xi Jinping. Taken together, the full sequence of events and official video sources make it clear that the viral narrative has been incorrectly framed. There is no evidence to suggest that Trump was peeking into Xi Jinping’s personal notebook.

Executive Summary

A graphic widely circulating on social media claims that Union Home Minister Amit Shah has warned, “A major crisis is coming; if possible, skip one meal a day.” The claim has been found to be false in a fact-check conducted by CyberPeace Research Wing. The research revealed that Amit Shah has not made any such statement.

Claim

A Facebook user shared the viral graphic on May 17, 2026, claiming that BJP leader and Home Minister Amit Shah issued a “warning” to the public, allegedly saying people should be prepared for a major crisis and consider skipping one meal a day. The post has been widely circulated on social media, drawing significant attention and discussion.

- https://www.facebook.com/photo.php?fbid=1509406197622070&set=pb.100056581115590.-2207520000&type=3

- https://archive.ph/Z9Tle

Fact Check

A keyword-based search on Google did not return any credible news reports supporting the claim. Further scrutiny of the official account of the Ministry of Home Affairs on X also found no mention or statement matching the viral claim.

A separate review of the official X account of Home Minister Amit Shah also did not show any such statement or post confirming the viral claim.

Conclusion

The viral claim is false. Union Home Minister Amit Shah has not made any such statement.

Executive Summary:

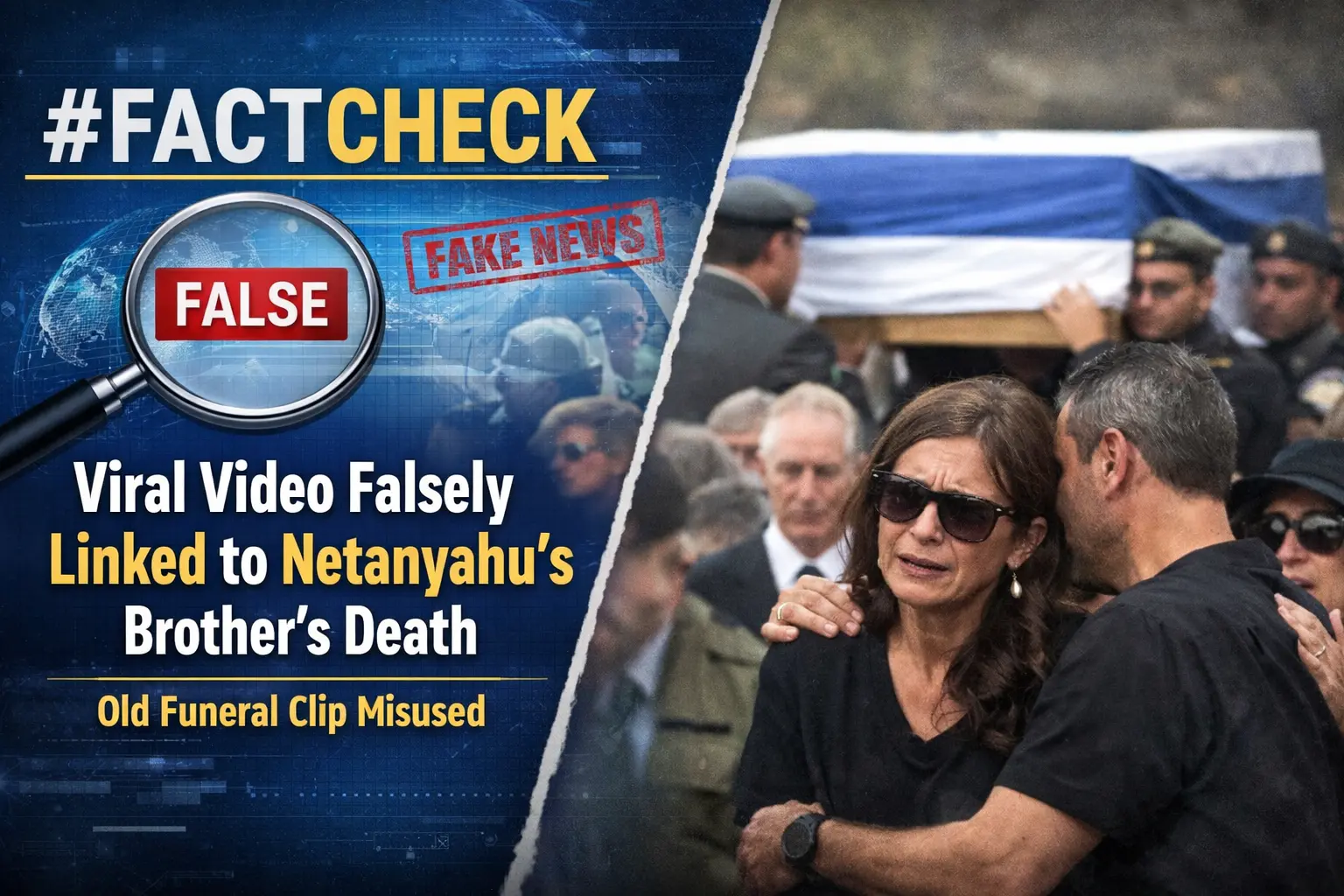

A video is going viral on social media claiming to show family members mourning the death of Iddo Netanyahu, brother of Israeli Prime Minister Benjamin Netanyahu. However, research by CyberPeace found that the claim being shared with the video is false. The video has been available on the internet since 2024. According to available information, it shows the funeral of an Israeli soldier who was killed in an attack in the Jabalia area of northern Gaza. Moreover, no credible news reports were found confirming the death of Iddo Netanyahu.

Claim:

An Instagram user shared the viral video with an English caption stating, “Family members are crying after the death of Iddo Netanyahu was confirmed.”

Fact Check:

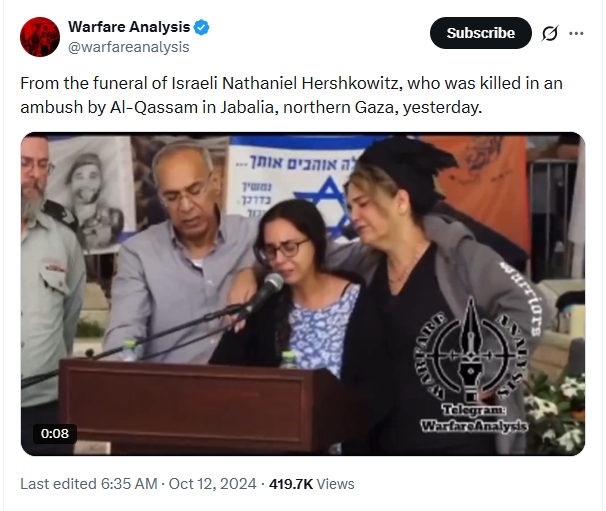

During the investigation, we found the original video on an X (formerly Twitter) account named Warfare Analysis. The video was posted on October 12, 2024, confirming that it predates the recent Iran-Israel conflict. Notably, the “Warfare Analysis” logo is also visible in the viral video. According to the caption, the footage shows the funeral of Israeli soldier Netanel Hershkovit, who was killed on October 11, 2024, in an attack by Al-Qassam in Jabalia, northern Gaza.

A report published by VIN News on October 12, 2024, also covered the funeral of Netanel Hershkovit and included statements from his family members.

Conclusion:

Our research found that the claim shared with the video is false. The video has been online since 2024 and shows the funeral of an Israeli soldier killed in northern Gaza. Additionally, no credible reports confirm the death of Iddo Netanyahu.

Executive Summary:

A video of former Army Chief General Manoj Pande is going viral on social media with the claim that he attacked the Modi government, saying that supporting Israel is causing significant harm to the Indian Army. The research by CyberPeace revealed that the audio present in the viral video is AI-generated. No such statement was made in the original video.

Claim:

On social media platform X, while sharing the viral video, users wrote, “Delhi: Former Army Chief General Manoj Pande (Retd.) said, ‘Do you know what the biggest loss of supporting Israel is? Our Indian Army was always trained as a moral force, but the current situation is turning it into an ethnic force. Remember my words, this situation is moving towards a complete rebellion. We have all seen what is happening in Assam.’ ‘The Israeli army stands against humanity, and brutality has become its identity. Our army is becoming like them due to its association. The Modi government and the Sangh Parivar are responsible for this. For both, Israel is an ideal country, and they are running an agenda to turn India into Israel.’”

Fact Check:

While researching the viral video claiming that former Army Chief General Manoj Pande attacked the Modi government, we extracted keyframes and conducted a reverse image search. During this process, we found a video uploaded on March 14 on the X account of the news agency Press Trust of India (PTI).

The visuals present in the video matched those in the viral video.

In this video, former Army Chief General Manoj Pande is seen delivering a speech in Marathi and English. He talks about building new kinds of capabilities in view of the current situation and does not mention Israel, as claimed in the viral video. Nowhere in the approximately 1-minute-15-second video does he make the statement heard in the viral video.

Continuing the research, we found a report published on March 15, 2026, on the website of ThePrint. This report covered the speech delivered by former Army Chief General Manoj Pande, but no report mentioned the statement shown in the viral video.

Conclusion:

Our research found that the audio present in the viral video is AI-generated. In the original video, he did not make any such statement.

Executive Summary

A video is being widely shared on social media and linked to protests that allegedly took place in Lucknow after the reported killing of Iran’s Supreme Leader Ali Khamenei. Users claim that police in the capital of Uttar Pradesh baton-charged people who were protesting against the United States and Israel. However, research by CyberPeace found the claim to be false. Our verification revealed that the video is not from Lucknow but from Bareilly, and it is related to an incident that took place on September 26, 2025, when Uttar Pradesh Police baton-charged protesters during a rally held in support of the “I Love Mohammad” campaign.

Claim Post:

On March 3, 2026, an X (formerly Twitter) user shared the viral video claiming that the Uttar Pradesh Police took action against people blocking roads in Lucknow and creating unrest in support of Ali Khamenei.

Fact Check

To verify the claim, we extracted key frames from the viral video and conducted a reverse image search using Google Lens. During the search, we found a similar video posted on Instagram on September 26, 2025, indicating that the footage predates the current claim.

Further research led us to the same video on the website of Aaj Tak, where it was published on September 26, 2025.

According to the report, protests erupted in Bareilly after Friday prayers over a controversy related to “I Love Mohammad” posters. Hundreds of people took to the streets carrying banners and posters. The report further stated that protesters, responding to a call by cleric Maulana Tauqeer Raza, attempted to break police barricades and move forward. Police initially tried to persuade the crowd to disperse, but when the situation escalated and the crowd refused to back down, officers resorted to baton-charging to control the situation. The incident reportedly led to tension in the area.

Conclusion:

Our research found that the viral video being shared as police action on protesters in Lucknow after the alleged killing of Ali Khamenei is misleading. The footage is actually from Bareilly and shows a police baton-charge during a protest rally held on September 26, 2025 in support of the “I Love Mohammad” campaign.

Executive Summary

A dispute over water balloons on the day of Holi in the Uttam Nagar area of Delhi reportedly turned violent, resulting in the brutal murder of a young man named Tarun Khatik. Following the incident, a video is being widely shared on social media and linked to the murder case. In the viral video, Yogi Adityanath, Chief Minister of Uttar Pradesh, can be seen walking alongside Ravi Kishan, Member of Parliament from Gorakhpur. People standing along the route are seen showering flowers on them. Several users claim that the video shows the chief minister visiting the house of Tarun Khatik to meet his family.

However, research by CyberPeace found the viral claim to be misleading. Our research revealed that the video has no connection to the Tarun Khatik murder case. In fact, the video is from a Holika Dahan celebration held in Gorakhpur, which is now being shared on social media with a misleading claim.

Claim Post:

An Instagram user shared the viral video on March 10, 2026, writing in Hindi (translated): “Honourable Chief Minister Shri Yogi Adityanath ji has come forward to get justice for Tarun bhai.”

Fact Check:

To verify the claim, we extracted several key frames from the viral video and conducted a reverse image search using Google Lens. During the search, we found the same video posted on a Facebook account on March 2, 2026.

According to the caption of the Facebook post, the video shows a grand procession organised by the Shri Shri Holika Dahan Utsav Samiti, Pandeyhata in Gorakhpur. Yogi Adityanath attended the procession and was seen celebrating Holi with people by playing with flowers and coloured powder. During the procession, flower petals were showered on devotees, and the entire area witnessed a festive atmosphere filled with colours, devotion, and enthusiasm. A large number of people participated in the celebration, and the festival was celebrated with traditional drums and music. During further research, we also found images related to the viral video on the official X (formerly Twitter) account of Ravi Kishan. These images were shared on March 2, 2026, and the caption confirmed that they were taken during the Holika Dahan celebration in Gorakhpur.

At the end of the research, we also found the same video uploaded on March 3, 2026 on the Instagram page Local News Gorakhpur. According to the information in the post, Yogi Adityanath and several other leaders participated in the grand procession organised by the Shri Shri Holika Dahan Utsav Samiti, Pandeyhata. People celebrated Holi with flowers and coloured powder during the event.

Conclusion:

Our research found that the viral video has no connection with the Tarun Khatik murder case in Uttam Nagar, Delhi. The video actually shows Yogi Adityanath participating in a Holika Dahan celebration in Gorakhpur. Therefore, the video is being shared on social media with a misleading claim.

Executive Summary:

Amid the ongoing conflict between the United States, Israel, and Iran, a video circulating widely on social media claims to show American soldiers kneeling and surrendering to Iranian forces. In the clip, several soldiers appear to be sitting on their knees in front of armed personnel, creating the impression that they have been captured on the battlefield.

The video is being shared with the claim that the Iranian military has taken US soldiers prisoner during the war.

However, research by CyberPeace found that the claim is false. The viral clip is not authentic and has been generated using artificial intelligence. There is no credible evidence to support the claim that American soldiers have been captured by Iranian forces.

Claim

A Facebook user named “News Tick” shared the video on March 12, 2026, claiming that Iran had released footage of captured US soldiers. In the clip, the soldiers can be seen kneeling while armed personnel stand around them, giving the scene a highly dramatic appearance.

Fact Check

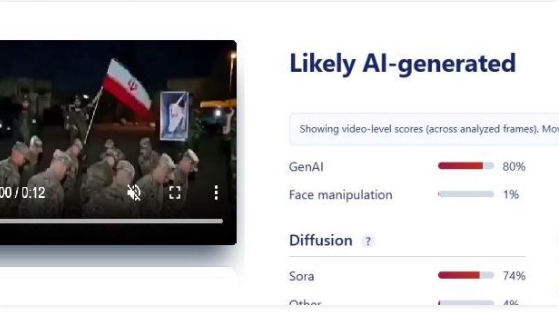

To verify the claim, we first searched the internet using relevant keywords. We found no credible reports from reputable news organizations confirming that US soldiers had been captured by Iran during the conflict. A closer examination of the video revealed several visual inconsistencies. The weapons carried by the soldiers appear unclear and oddly shaped. Additionally, the background looks unusually blurred and overly dramatic. The lighting and textures in the footage also appear artificial—common indicators of AI-generated visuals.

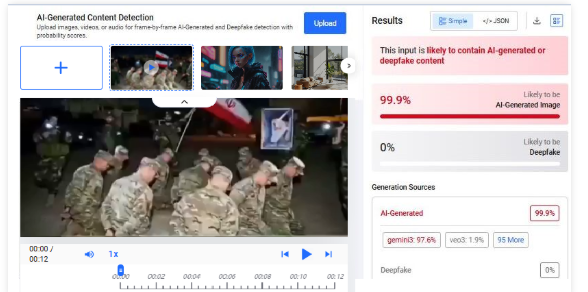

To confirm this suspicion, we analyzed the clip using multiple AI detection tools. The tool Hive Moderation indicated a 99% probability that the video was created using artificial intelligence.

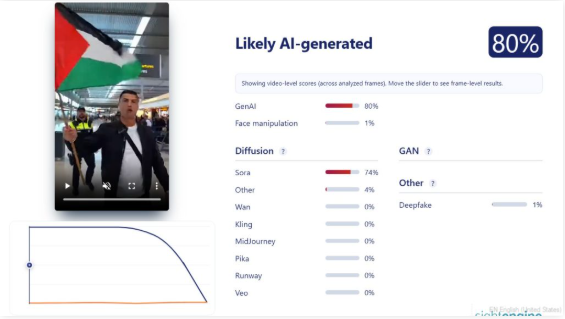

Further analysis using Sightengine also suggested that the video was likely AI-generated, estimating an 80% probability of AI creation.
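As a rough illustration of how the two detector readings above can be considered together, the sketch below tabulates the reported scores and checks whether both tools cross a cutoff. The 0.5 cutoff and the `summarize` helper are assumptions made for this example only; neither Hive Moderation nor Sightengine prescribes them, and the code does not call either service.

```python
from statistics import mean

# Scores reported in this fact check, written as probabilities.
detector_scores = {
    "Hive Moderation": 0.99,
    "Sightengine": 0.80,
}

# Assumed cutoff for illustration; not a value published by either tool.
THRESHOLD = 0.5


def summarize(scores, threshold=THRESHOLD):
    """Return which detectors flag the clip and whether they all agree."""
    flagged = [name for name, p in scores.items() if p >= threshold]
    return {
        "flagged_by": flagged,
        "all_agree": len(flagged) == len(scores),
        "mean_score": round(mean(scores.values()), 3),
    }


summary = summarize(detector_scores)
print(summary)
```

Agreement between independent detectors is supporting evidence rather than proof, which is why the keyword search and the visual inconsistencies described above remain part of the assessment.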

Conclusion

Our research shows that the viral video claiming to depict American soldiers surrendering and being captured by Iranian forces is fake. The footage has been generated using AI and does not represent a real incident.

Executive Summary:

Amid the ongoing conflict involving the United States, Israel, and Iran, a video showing a building engulfed in flames is being widely circulated on social media. In the clip, a large fire can be seen inside a building while several people appear to be running in panic. The video is being shared with the claim that Iran fired a hypersonic missile targeting a ceremony in Tel Aviv, Israel, allegedly killing several Israeli military generals and other prominent figures.

However, research by CyberPeace found that the claim is false. The video being circulated as footage of an attack in Israel actually predates the current conflict and shows a fire that broke out during a wedding ceremony.

Claim

A Facebook user named “Syed Asif Raza Jafri” shared the video on March 13, 2026, claiming that an Iranian hypersonic missile had struck a grand ceremony in Tel Aviv, where several Israeli military officers, generals, soldiers, and other important personalities were present. According to the post, the attack resulted in multiple casualties.

Source:

- https://www.facebook.com/reel/902182825912364

- https://ghostarchive.org/archive/rZryr

Fact Check

To verify the claim, we began our research using the Google Lens reverse image search tool. Several key frames from the viral video were extracted and searched online.

During the search, we found the same video shared earlier on multiple foreign social media accounts. A Facebook user named “Es de Bombero” from Chile had posted the video on January 17, 2026, describing it in Spanish as footage of a fire that broke out during a wedding celebration.

Our research shows that the viral video had been circulating on social media since at least January 15, 2026, well before the escalation of the current conflict. According to a report published on March 1, 2026, by BBC, the large-scale attacks on Iran by the United States and Israel began on February 28, 2026, after which Iran’s Supreme Leader Ali Khamenei was reported dead.
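The timeline argument above comes down to a date comparison, which the following minimal sketch makes explicit. The dates are the ones stated in this fact check; the variable names and the `predates` helper are hypothetical and serve only to illustrate the reasoning.

```python
from datetime import date

# Dates taken from this fact check.
earliest_sighting = date(2026, 1, 15)  # earliest known circulation of the video
conflict_start = date(2026, 2, 28)     # start of large-scale attacks, per the BBC report
claim_posted = date(2026, 3, 13)       # date of the viral Facebook post


def predates(sighting, event):
    """True if the footage was already online before the event it is linked to."""
    return sighting < event


gap_days = (conflict_start - earliest_sighting).days
claim_lag_days = (claim_posted - earliest_sighting).days

print(predates(earliest_sighting, conflict_start))  # True
print(gap_days)        # 44
print(claim_lag_days)  # 57
```

Because the video was online 44 days before the attacks began, it cannot show an event from the current conflict, whatever its actual origin.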

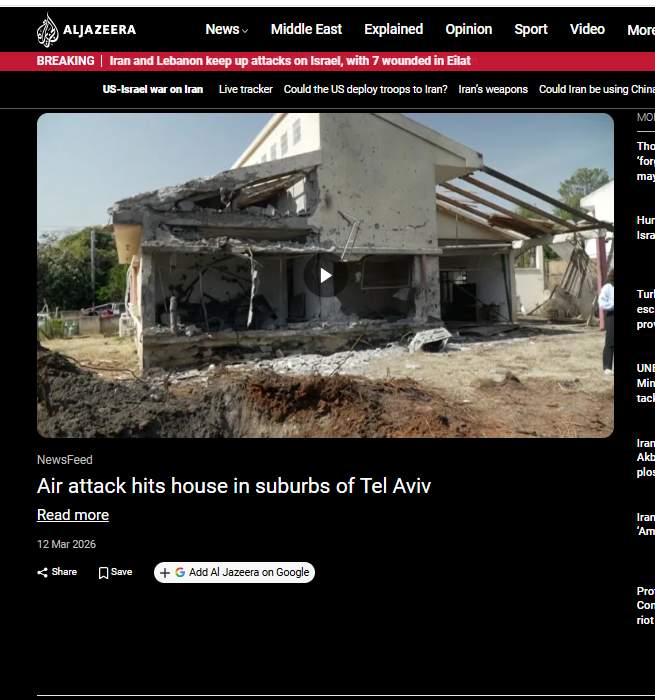

Additionally, a March 12, 2026 report by Al Jazeera stated that a house near Tel Aviv in central Israel was damaged by a rocket reportedly fired by Hezbollah, which has previously carried out joint attacks in coordination with Iran.

Conclusion

The viral video being shared as footage of an Iranian hypersonic missile strike in Tel Aviv is misleading. The clip is an older video of a fire that reportedly broke out during a wedding ceremony and was circulating online before the current conflict began.

While the exact location of the incident shown in the video cannot be independently verified, it is clear that the footage has no connection to the ongoing war between the United States, Israel, and Iran.

Executive Summary:

Amid the ongoing tensions and conflict involving the United States, Israel, and Iran, an image of a heavily damaged industrial facility is circulating widely on social media. Several users are sharing the picture claiming that it shows an Iranian water treatment or desalination plant destroyed in a US–Israel attack. Some media reports have also used the same image while reporting on the alleged attack on a freshwater desalination plant in Iran.

However, research by CyberPeace found that the claim is misleading. The viral image is not from Iran. It actually shows the aftermath of a drone attack on a warehouse belonging to a US company in Basra, Iraq.

Claim

X user “Shashank Shekhar Jha” shared the image on March 8, 2026, claiming that a freshwater desalination plant in Qeshm, Iran, had been destroyed.

Fact Check

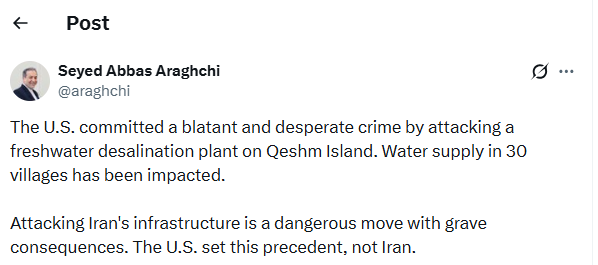

To verify the claim, we conducted a reverse image search using Google Lens. During the search, we found a report published on March 7, 2026, on the website of Asian News International (ANI). The report stated that Iran’s Foreign Minister Seyed Abbas Araghchi condemned a US attack on a freshwater desalination plant on Qeshm Island, calling it a “blatant and desperate crime.”

The report used the same viral image; however, the caption clearly mentioned that it was a representational image credited to Reuters.

https://www.aninews.in/news/world/middle-east/blatant-and-desperate-crime-irans-fm-condemns-us-attack-on-qeshms-freshwater-desalination-plant-warns-of-grave-consequences20260307212645/

To further confirm the claim, we checked the official X account of Seyed Abbas Araghchi. In a post on March 7, he condemned the alleged attack on the desalination plant in Qeshm and stated that the strike had disrupted water supply to around 30 villages. However, the post did not include any image of the incident.

Conclusion

The viral image being shared as evidence of a US–Israel attack on Iran’s water treatment plant is misleading. The photo actually shows the aftermath of a drone strike on a warehouse belonging to a US company in Basra, Iraq, and has been wrongly linked to the situation in Iran.

Executive Summary:

After the reported attacks by Israel and the United States on Iran, a video allegedly showing footballer Cristiano Ronaldo has been widely circulated on social media. In the clip, Ronaldo appears to be holding a Palestinian flag and chanting “Free Palestine.” Several users are sharing the video with the claim that Ronaldo waved the Palestinian flag and raised “Free Palestine” slogans after the death of Iran’s Supreme Leader Ali Khamenei. However, research by CyberPeace found that the claim is false. The viral clip does not depict a real event and has been generated using artificial intelligence. The fabricated video is being shared online with misleading claims.

Claim

An Instagram user “ham_313_ka_admi” shared the viral video on March 2, 2026. The text on the video reads: “Cristiano Ronaldo waved the Palestinian flag after Khamenei’s death. Mashallah. Free Palestine.”

Fact Check:

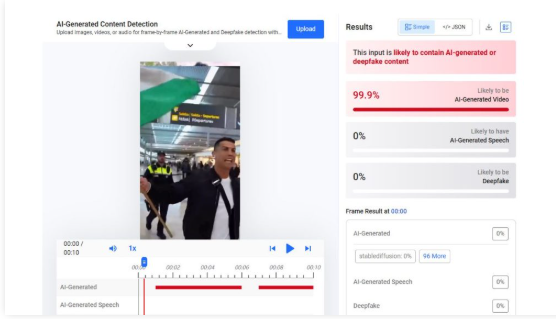

To verify the claim, we searched Google using relevant keywords but found no credible news reports supporting the viral claim. We also reviewed the official social media accounts of Cristiano Ronaldo, where no such video or statement was posted. This raised suspicion that the clip might be AI-generated.

To further examine the video, we analyzed it using AI detection tools. The tool Hive Moderation indicated a 99.9% probability that the video was created using artificial intelligence.

We also analyzed the footage using the Sightengine AI detection tool. The results suggested an 80% likelihood that the video was AI-generated. The tool also indicated that the clip may have been created using Sora, an AI video-generation tool.

Conclusion

The viral video claiming that Cristiano Ronaldo waved the Palestinian flag and chanted “Free Palestine” after the death of Ali Khamenei is AI-generated. It does not depict a real incident and is being shared with a misleading claim.

Executive Summary

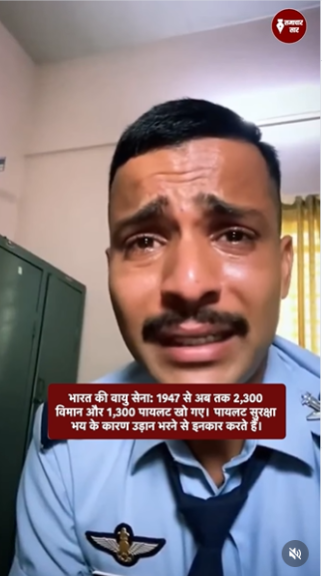

A video circulating widely on social media claims to show a pilot of the Indian Air Force (IAF) crying and expressing fear about flying fighter jets, allegedly citing poor maintenance and frequent crashes. The clip is being linked to the crash of an IAF Sukhoi-30 fighter jet in Assam on March 5, in which two pilots lost their lives. In the viral video, a man dressed like a pilot is seen speaking emotionally, saying that flying fighter jets has become frightening due to lack of maintenance and repeated accidents. Several users are sharing the clip claiming that the man in the video is an IAF pilot revealing the reality behind aircraft crashes. However, research by CyberPeace found the claim to be false. The video does not depict a real pilot or an actual incident. Instead, it appears to be an AI-generated clip created and circulated with the intent to spread misinformation.

Claim:

An Instagram user, ‘samacharsaar0’, shared the viral video on March 10, 2026, with the English caption: “2300 aircraft crashes, 1300 pilots dead: A major challenge before the IAF.”

- Source: https://www.instagram.com/reel/DVqa4lNiYJQ

- Archived link: https://perma.cc/EUZ8-DHE3

Fact Check:

The claim was also debunked by PIB Fact Check. While verifying the viral video, PIB clarified that the clip is artificially generated and not related to any real IAF personnel.

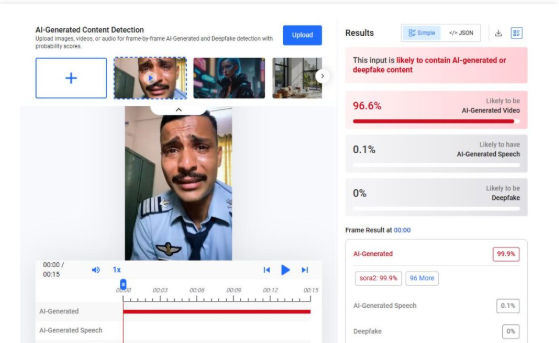

To further verify the authenticity of the video, we analyzed it using AI detection tools. The tool Hive Moderation indicated a 99.9% probability that the video was generated using artificial intelligence.

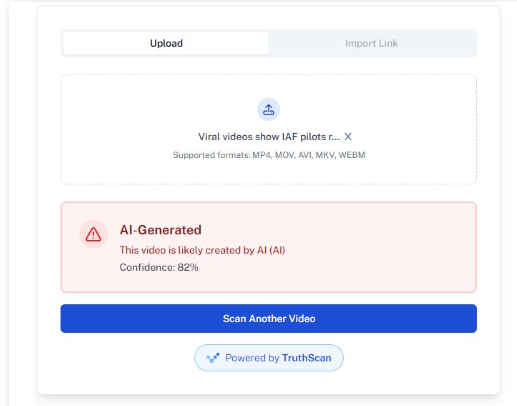

We also examined the clip using another AI detection platform, Undetectable. The analysis suggested an 82% likelihood that the video was created with AI tools. The tool also indicated the possibility that the footage may have been generated using the Sora AI video generation tool.

Conclusion

Our research concludes that the viral video of a crying “pilot” is not authentic. The clip has been created using artificial intelligence and is being misleadingly shared as a real Indian Air Force pilot speaking about aircraft crashes. The government has also denied the claim associated with the video.

Executive Summary:

Amid the ongoing war involving the United States, Israel, and Iran, a video clip circulating on social media claims to show Iran unveiling a drone resembling the US B-2 stealth bomber. In the viral clip, an aircraft-like object can be seen emerging from a cave before taking off. Several users are sharing the video with the claim that Iran has deployed a B-2-style drone in the conflict.

However, research by CyberPeace found that the viral video is not real and was generated using artificial intelligence. While the United States has reportedly used B-2 stealth bombers in strikes against Iran during the conflict, the viral clip does not show an actual Iranian drone.

Claim

X user “Muslim_Voice_Space” posted the video on March 3, 2026, claiming that Iran had rolled out a drone resembling the B-2 bomber for use in the war.

Fact Check

To verify the claim, we first closely examined the viral video. In the opening moments of the clip, the wing of the alleged drone appears to hit the side of the cave while exiting. Despite the apparent collision, the aircraft continues flying smoothly without any visible damage. This unusual detail raised doubts about the authenticity of the footage.

We then analyzed the video using the AI detection tool Hive Moderation, which flagged the clip as likely AI-generated.

Further analysis using the Sightengine AI detection tool also suggested that the video was artificially created. The tool estimated a 75% probability that the footage was generated using AI. It also indicated a 70% likelihood that the clip may have been created using Sora, an AI video-generation tool.

Conclusion

The viral video claiming to show an Iranian drone resembling the US B-2 stealth bomber emerging from a cave is not authentic. Analysis indicates that the clip was created using AI tools and is being misleadingly shared in the context of the ongoing conflict.

Executive Summary

Amid the ongoing tensions between the United States, Israel, and Iran, a video circulating on social media claims that Israeli Prime Minister Benjamin Netanyahu was seen running after Iran launched an attack on Israel. However, research by CyberPeace found the viral claim to be misleading. Our research revealed that the video has no connection with the current tensions between the United States, Israel, and Iran. In reality, the clip dates back to 2021, when Netanyahu was rushing inside Israel’s parliament to cast his vote after arriving late.

Claim:

On the social media platform X (formerly Twitter), a user shared the video on March 5, 2026, claiming that Netanyahu had fled and gone into hiding due to fear of Iran. The post included inflammatory remarks suggesting that Iran had demonstrated its power and that Netanyahu had abandoned his country out of fear.

Fact Check

To verify the authenticity of the video, we extracted several keyframes and conducted a reverse image search on Google. During the research, we found the same video on the official X account of Benjamin Netanyahu, posted on December 14, 2021. Netanyahu’s Hebrew post translates to: “I am always proud to run for you. Photographed half an hour ago in the Knesset.”

Further research also led us to a Hebrew news website where the same video was published.

According to the report, voting in the Knesset (Israel’s parliament) continued throughout the night, and an explosives-related bill was passed by a very narrow margin. At the time, opposition leader Benjamin Netanyahu was in his room inside the Knesset building. When he was called for the vote, he hurried through the parliament corridors to reach the chamber in time to cast his vote.

Conclusion:

Our research found that the viral video is unrelated to the ongoing tensions involving the United States, Israel, and Iran. The footage is from 2021 and shows Benjamin Netanyahu rushing inside the Knesset to participate in a parliamentary vote after being called in at the last moment.

Executive Summary

A 57-second video featuring India’s Chief of Army Staff Upendra Dwivedi is widely circulating on social media. The clip is being shared with the claim that the Army chief admitted India had “betrayed” Iran by providing the location of an Iranian naval ship to Israel, allegedly leading to its destruction. The video is spreading amid heightened tensions in West Asia involving the United States, Israel, and Iran. According to posts sharing the claim, the Iranian naval vessel IRIS Dena, which had participated in a naval event in Visakhapatnam and was returning to Iran with around 130 personnel onboard, was torpedoed by a US submarine near the southern coast of Sri Lanka on March 4 while sailing in the Indian Ocean.

In the viral clip, the speaker—presented as the Indian Army chief—appears to say that India informed Israel about the exact location of the Iranian ship after it left Indian waters, describing Israel as a strategic ally and suggesting that the attack occurred in international waters. The clip also claims that India had no direct involvement in the alleged joint US-Israel torpedo strike.

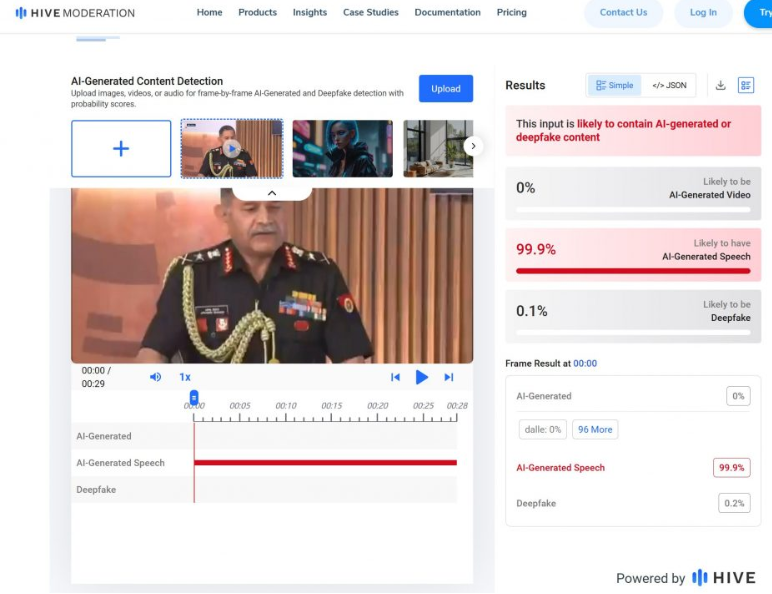

However, research conducted by CyberPeace found the claim to be false. Our research shows that the video does not contain a genuine statement from Army Chief Upendra Dwivedi and is in fact a manipulated clip.

Claim

On X (formerly Twitter), a page named GPX (@GPX_Press) shared the video on March 9 with the caption: “India confesses it BETRAYED Iran by leaking the location of an Iranian ship to Israel, leading to its total destruction!”

Fact Check

During the verification process, researchers noticed a ticker in the viral video reading “Raisina Dialogue 2026 × Firstpost.” Using this clue, we conducted a keyword search on YouTube and located a video uploaded by Firstpost on March 7 titled “India’s Army Chief Speaks on Op Sindoor, Pakistan and Future of Warfare | Raisina Dialogue 2026.”

In the 21-minute interview, Army Chief Upendra Dwivedi is seen speaking with strategic affairs expert Harsh V. Pant. According to the video description, the discussion focuses on lessons from Operation Sindoor and the evolving nature of modern warfare.

The viral clip appears to be taken from this interview. However, throughout the conversation, Dwivedi does not mention any conflict involving the United States, Israel, and Iran, nor does he refer to the sinking of an Iranian naval ship in the Indian Ocean. This indicates that the circulating clip has been edited and misrepresented to create a misleading narrative.

For additional verification, the viral video was analyzed using the AI detection tool Hive Moderation. The results suggested a 99.9% probability that the speech in the clip was generated using AI, indicating manipulation of the original footage.

Conclusion

The research makes it clear that the viral video does not reflect an authentic statement by India’s Army Chief Upendra Dwivedi. The clip has been altered and the audio appears to be AI-generated. In other words, the circulating video is a deepfake being shared with a misleading claim.