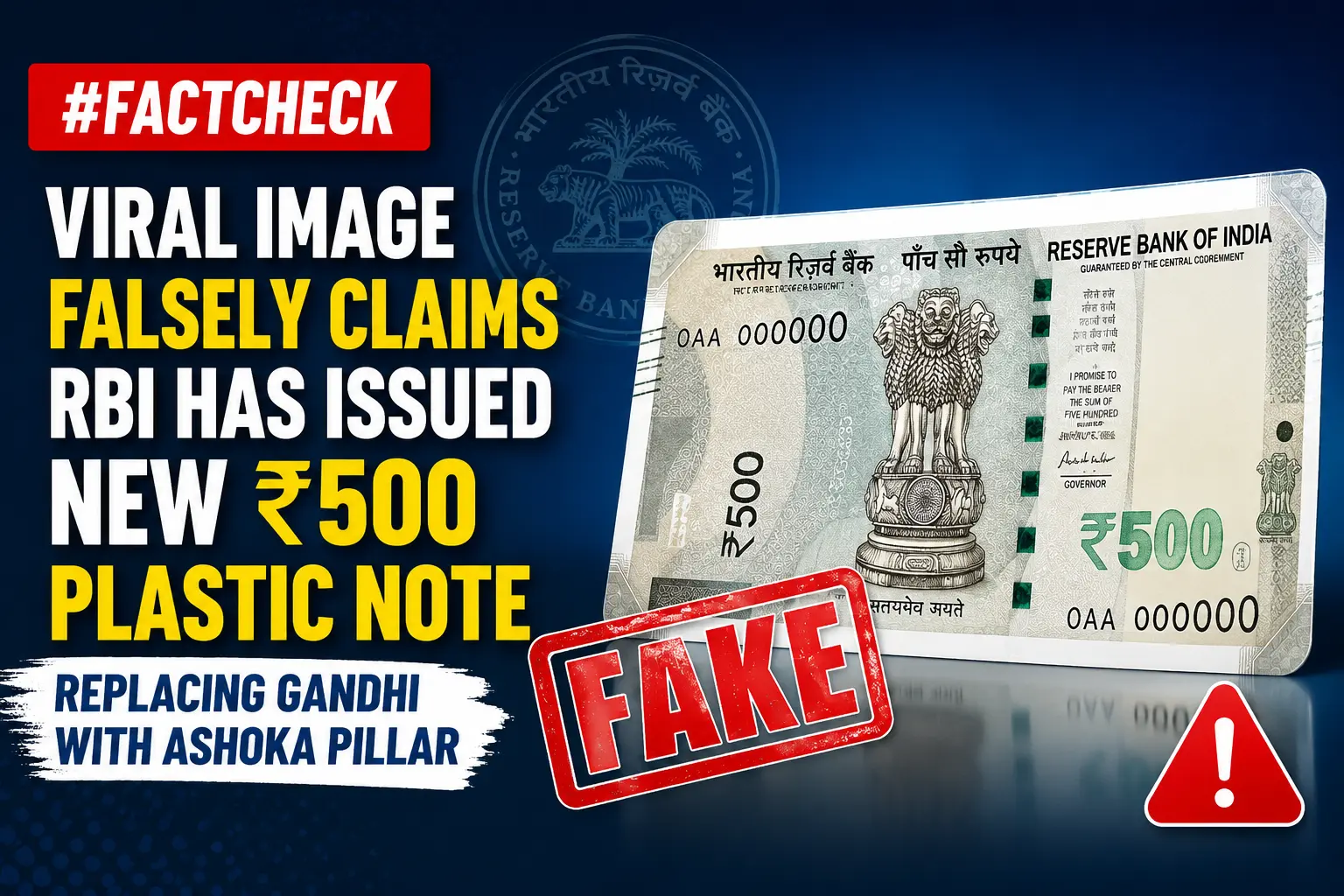

#FactCheck

Executive Summary

An image of a purported new ₹500 plastic banknote is being widely shared on social media. Users claim that the Reserve Bank of India (RBI) has issued the new note and replaced Mahatma Gandhi’s portrait with the Ashoka Pillar. CyberPeace Research Wing research found the claim to be misleading. The probe revealed that RBI has not issued any new ₹500 plastic banknote. Furthermore, no official announcement or decision has been made regarding the removal of Mahatma Gandhi’s portrait from Indian currency and its replacement with the Ashoka Pillar.

Claim

An Instagram user shared the viral image with the claim: “RBI has issued a ₹500 plastic note in which Mahatma Gandhi’s image has been removed and replaced with the Ashoka Pillar.” The claim has been widely circulated on social media, with many users sharing the image as genuine. The post link, archived version, and screenshot can be seen below.

Fact Check

To verify the claim, we conducted a keyword search on Google. However, we found no credible media reports suggesting that RBI had issued a new ₹500 plastic note featuring the Ashoka Pillar in place of Mahatma Gandhi. We then searched the official RBI website for any notification or announcement related to the claim. Our search yielded no official communication supporting the viral claim.

During the research, we also came across a report published by Business Standard. According to the report, the Reserve Bank of India is exploring the possibility of introducing polymer (plastic) currency notes in the future. The report states that RBI is studying and discussing the proposal in view of the growing global adoption of polymer notes and their greater durability. However, the report does not state that RBI has already issued a new ₹500 plastic note. Nor does it mention any decision to remove Mahatma Gandhi’s portrait from existing currency notes and replace it with the Ashoka Pillar.

- https://www.business-standard.com/finance/news/rbi-set-to-unveil-polymer-rupee-notes-amid-rising-currency-demand-126052801725_1.html

Conclusion

The research found that the viral claim is misleading. RBI has not issued any new ₹500 plastic banknote, and there has been no official announcement or decision to replace Mahatma Gandhi’s portrait on Indian currency with the Ashoka Pillar.

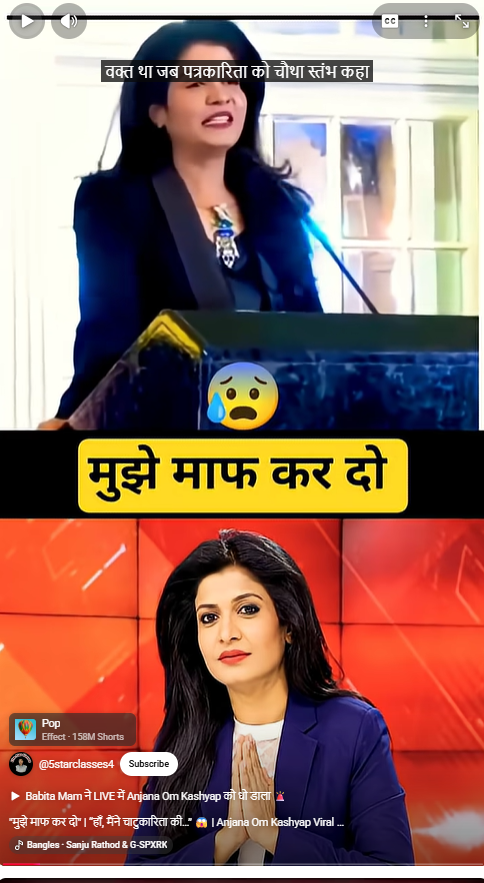

Executive Summary

A purported video of Hindi news channel Aaj Tak anchor Anjana Om Kashyap is being widely shared on social media. In the viral clip, Kashyap appears to be apologising and questioning her own journalistic credibility. The CyberPeace Research Wing research found the claim to be false. The probe revealed that the viral video was created by manipulating an original video of Anjana Om Kashyap. Her voice and statements were altered to falsely portray her as issuing an apology, whereas she made no such remarks in the original footage.

Claim

A YouTube user shared the viral video claiming that Anjana Om Kashyap was apologising. The post can be seen here:

Fact Check

The key frames of the viral video were analysed using reverse image search. During the research, the original reel was found on Anjana Om Kashyap’s Instagram account, where it was posted on December 26, 2023. In the original reel, Kashyap is seen praising Bihar. At no point does she apologise or make any statements similar to those heard in the viral clip.

Further research led to the original version of the video on Anjana Om Kashyap’s YouTube channel, uploaded on October 17, 2023. According to the video description, the speech was delivered during the ‘Bihar Meet’ event organised by the Indian People Forum in the UAE. Notably, none of the statements heard in the viral clip appear in the original speech.

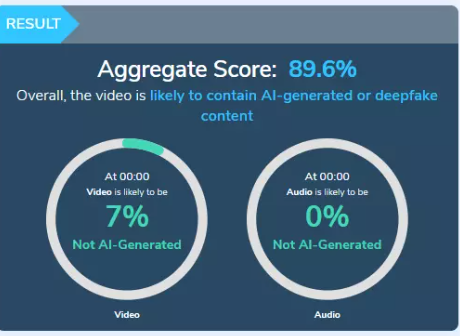

The viral video was also analysed using the AI-detection tool Hive Moderation, which indicated a high likelihood of AI-generated or deepfake manipulation.

Conclusion

The research found that the viral video has been digitally altered and falsely shared on social media. The original video of Anjana Om Kashyap was edited, and the audio was manipulated to create the misleading impression that she was apologising. No such statement was made by her in the authentic video.

Executive Summary

Amid heightened tensions in West Asia following the conflict involving the United States, Israel and Iran, a video showing a large explosion behind a building is being widely shared on social media.

Users claim that the footage shows an Iranian missile strike on a US military base in Kuwait. However, CyberPeace Research Wing research found the claim to be misleading. The viral video is actually from an Israeli airstrike in southern Lebanon. While Kuwait said its air defence systems intercepted missiles and drones during regional hostilities, the viral footage has no connection to any alleged attack on a US base in Kuwait.

Claim

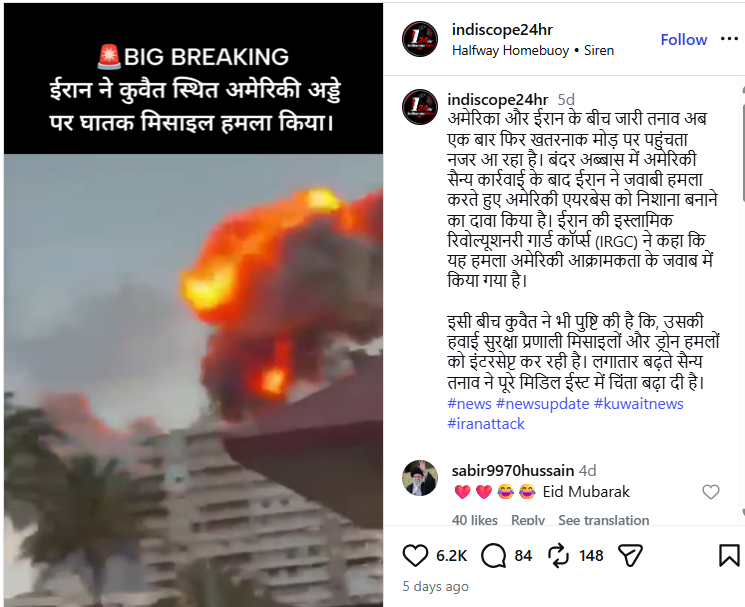

An Instagram user, “indiscope24hr,” shared the video on May 28, 2026, with text overlaid on the clip stating: “Iran launches a deadly missile attack on a US base in Kuwait.” The caption claimed that Iran targeted a US airbase in retaliation for American military action and that Kuwait’s air defence systems were intercepting incoming missiles and drones.

Fact Check

To verify the claim, we extracted key frames from the viral video and conducted a reverse image search using Google Lens. This led us to a post shared on May 28, 2026, by the Instagram account “iltv_israel,” which identified the footage as an Israeli Air Force strike on a Hezbollah target in the southern Lebanese city of Tyre.

Further research found the same footage in a video report uploaded by the New York Post’s YouTube channel on May 28, 2026. According to the report, Israel carried out strikes targeting Hezbollah positions in Tyre, southern Lebanon.

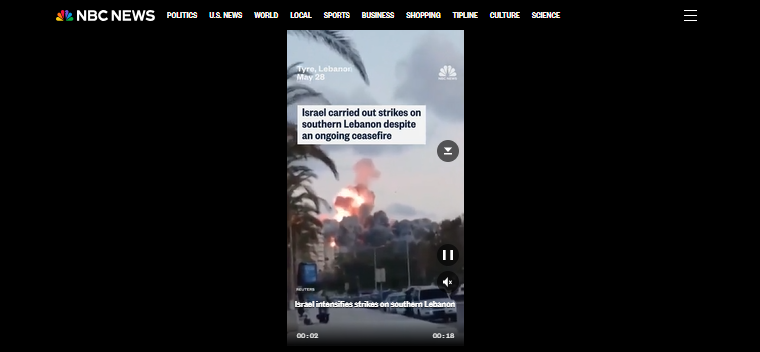

We also found the clip in a video report published by NBC News. The report stated that Israel intensified strikes in southern Lebanon despite an existing ceasefire agreement.

The matching visuals across these reports confirm that the viral footage originated from Lebanon and not from Kuwait.

Conclusion

The viral claim is misleading. The video does not show an Iranian missile strike on a US military base in Kuwait. It actually depicts an Israeli airstrike carried out in the Lebanese city of Tyre on May 28, 2026, and is being shared with a false context on social media.

Executive Summary

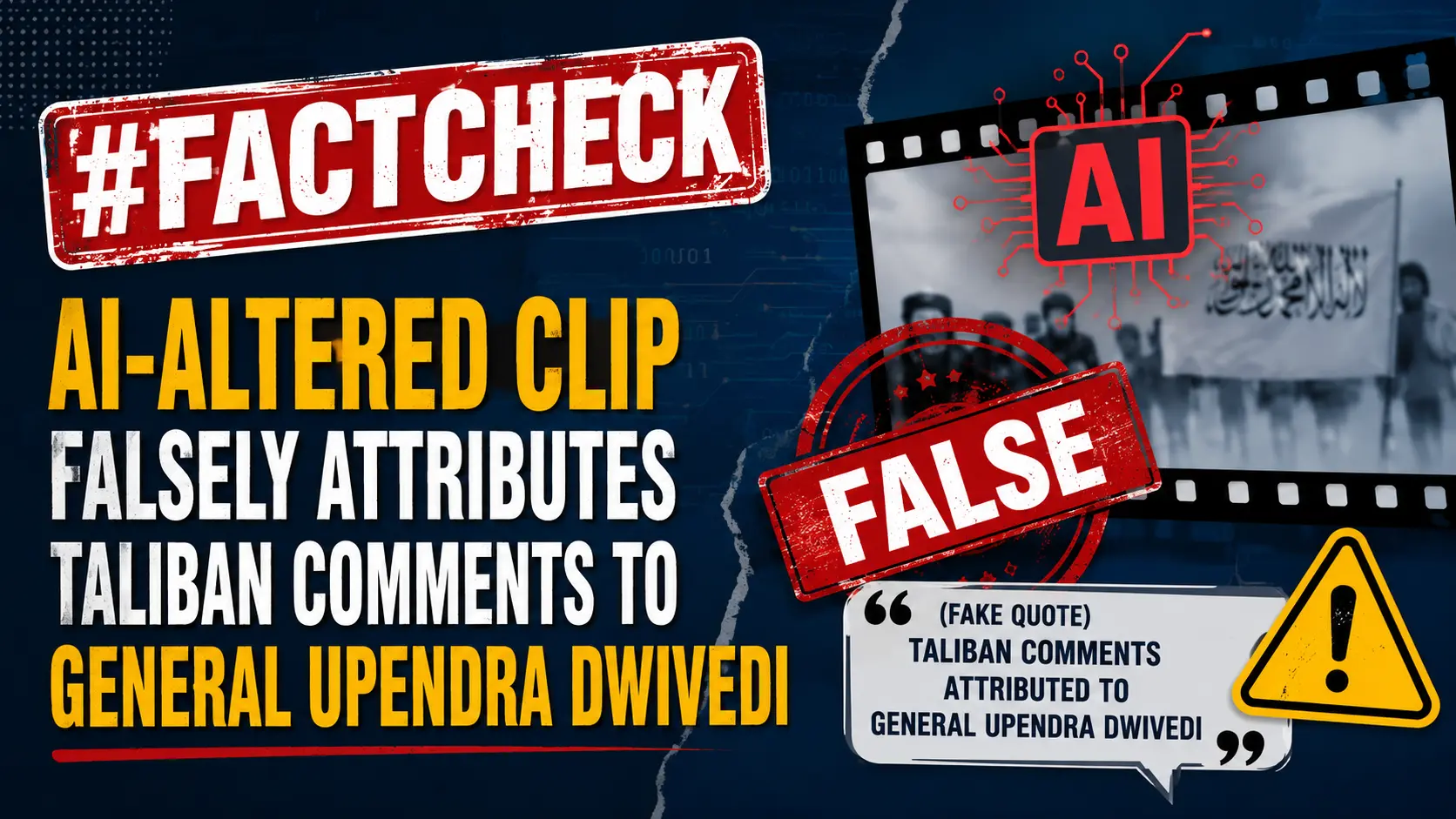

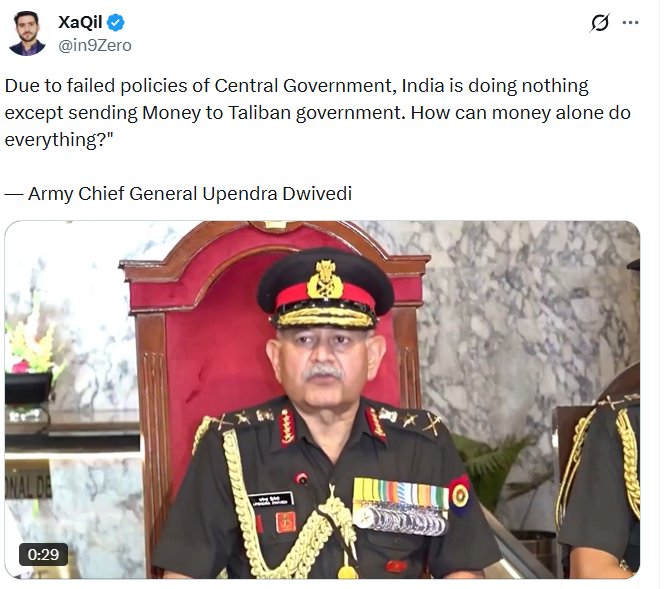

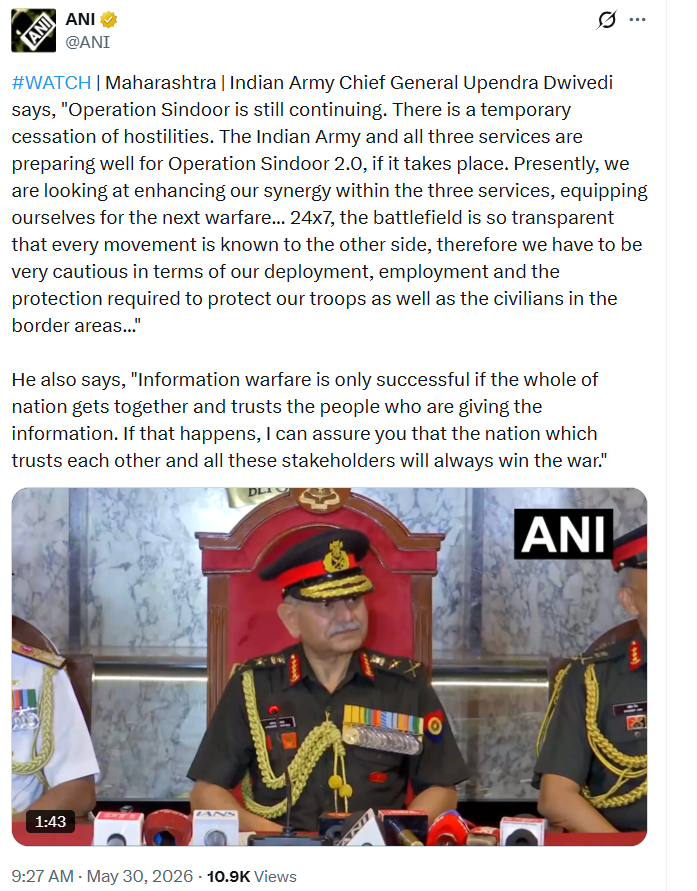

A video clip of Indian Army Chief Upendra Dwivedi is being widely shared across social media platforms with the claim that he criticised the Indian government's policy towards Taliban-ruled Afghanistan. In the viral clip, the Army Chief is allegedly heard saying that India is doing nothing except sending money to the Taliban government due to the Centre’s failed policies.

However, CyberPeace Research Wing research found the claim to be false. The viral video is a deepfake. In the original footage, General Upendra Dwivedi was speaking about Operation Sindoor and the preparedness of the Indian Armed Forces for a possible “Operation Sindoor 2.0.” He made no remarks regarding the Taliban or the government’s Afghanistan policy.

Claim

An X user named “XaQil” shared the viral video on May 31, 2026, with the caption: “Due to failed policies of the Central Government, India is doing nothing except sending Money to Taliban government. How can money alone do everything?” The post attributed the quote to Army Chief General Upendra Dwivedi.

Fact Check

In the viral video, General Dwivedi is purportedly heard making remarks about India’s Afghanistan policy, the Taliban, Pakistan, Iran, and India’s diplomatic position. To verify the claim, we searched for the original source of the video. A reverse image search of key frames led us to the authentic footage posted by news agency ANI on its official X account on May 30, 2026.

In the original video, General Dwivedi was responding to a question about Operation Sindoor. He stated that the operation was still ongoing, hostilities had only paused temporarily, and that the Indian Armed Forces were fully prepared if “Operation Sindoor 2.0” became necessary.

He also spoke about enhancing coordination among the three services and maintaining operational readiness.

No part of his statement mentioned the Taliban, Afghanistan, Pakistan, Iran, or criticism of the Central Government.

Further corroboration came from media reports covering the same event. According to a report published by Navbharat Times on May 30, 2026, General Dwivedi made the remarks during the passing-out parade of the 150th course of the National Defence Academy (NDA), where he attended as the chief guest. He reiterated that the armed forces were fully prepared for “Operation Sindoor 2.0” if required.

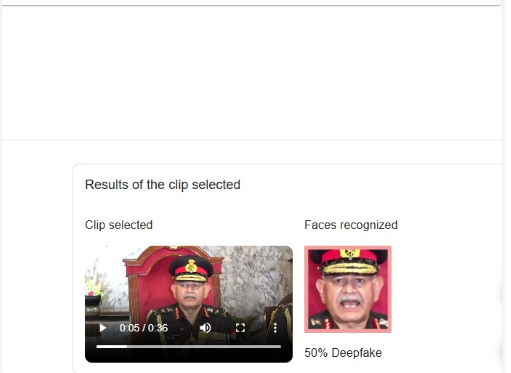

Since the content of the viral clip did not match the original statement, we examined it using InVID’s MeVer Deepfake Detector. The tool flagged signs of AI manipulation and indicated that the video had likely been altered.

Conclusion

CyberPeace Foundation found that the viral video purportedly showing Army Chief General Upendra Dwivedi criticising the Indian government’s policy towards the Taliban is a deepfake. The Army Chief made no such remarks. The original video was recorded during an NDA event, where he spoke about Operation Sindoor and the preparedness of the Indian Armed Forces for a possible future operation. The viral clip has been manipulated using AI to spread a false narrative.

Executive Summary

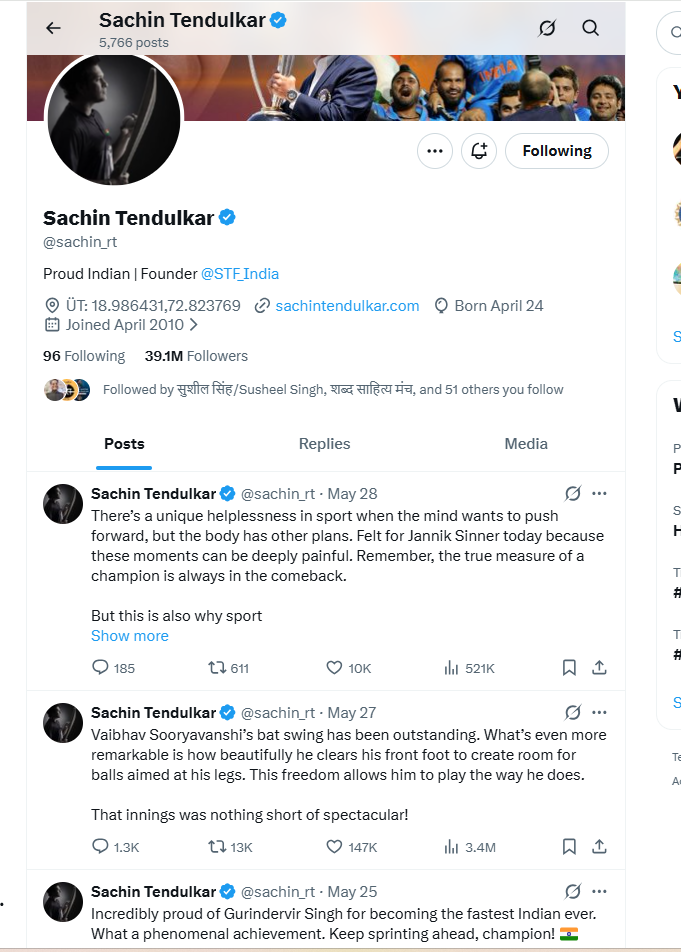

A post claiming that former Indian cricketer Sachin Tendulkar praised Congress leader Rahul Gandhi and urged people to elect him as Prime Minister is being widely circulated on social media. The viral poster falsely attributes a political statement to Sachin Tendulkar, suggesting that he has endorsed Rahul Gandhi for the post of Prime Minister. However, CyberPeace Research Wing research found the claim to be fake. Sachin Tendulkar has not made any such appeal or statement supporting Rahul Gandhi for Prime Minister.

Claim

On X (formerly Twitter), a verified user “Queen” shared a viral poster claiming: “Sachin Tendulkar has always supported education and never promoted superstition. Rahul Gandhi always predicts what Narendra Modi will do next. It is time to choose Rahul Gandhi again.”

Fact Check

To verify the claim, we first searched for any news reports, interviews, or credible references linking Sachin Tendulkar to such a political statement. However, we found no evidence in any reliable media source or public record suggesting that he made any such remark about Rahul Gandhi or the Prime Ministership. We also reviewed Sachin Tendulkar’s official social media accounts, but found no post, video, or statement endorsing any political leader in this manner.

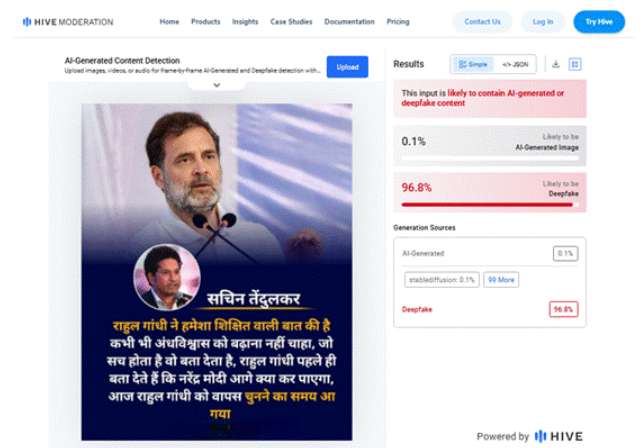

Finally, the viral poster was analysed using the AI detection tool Hive Moderation. The analysis indicated a 96.8% probability that the poster was digitally created or manipulated, suggesting possible AI-generated or edited content.

Conclusion

CyberPeace Research Wing research found the claim to be fake. Sachin Tendulkar has not made any appeal to elect Rahul Gandhi as Prime Minister. The viral poster appears to be digitally fabricated and is being shared to spread misinformation.

Executive Summary

A video of an Additional Secretary in the Ministry of External Affairs (MEA), handling the Americas & Canada Division, is being widely circulated on social media. The clip is being shared with the claim that he said: “Even if the Quad ends, India will partner only with Israel, and since Israel controls the US, India also controls the US.” The viral post attempts to link this alleged statement to India’s foreign policy. Many users are sharing it as authentic. However, CyberPeace Research Wing research found the claim to be false. The video has been digitally altered, and no such statement was made by the official in the original briefing.

Claim

On social media platform X (formerly Twitter), the viral video is being shared with the claim that the MEA Additional Secretary said Israel controls the United States, and therefore India also controls the US.

- https://www.facebook.com/61562281661615/videos/1518036689691723/

- https://archive.ph/xGJHa#selection-967.0-978.0

Fact Check

To verify the claim, we extracted key frames from the viral video and conducted a reverse image search. During the research, we found the original video, which was streamed live on May 26, 2026, on the verified YouTube channel of the Ministry of External Affairs (MEA), titled “Special Briefing by MEA on Quad Foreign Ministers’ Meeting.”

During the briefing, Naidu highlighted India’s commitment to a free and open Indo-Pacific region, mentioning new initiatives in maritime surveillance, critical minerals, and 6G development. He also noted the continued momentum of the Quad, stating that frequent ministerial meetings reflect strong and ongoing cooperation among member countries despite challenges in holding formal leaders’ summits.

The official transcript of the briefing is also available on the MEA website:

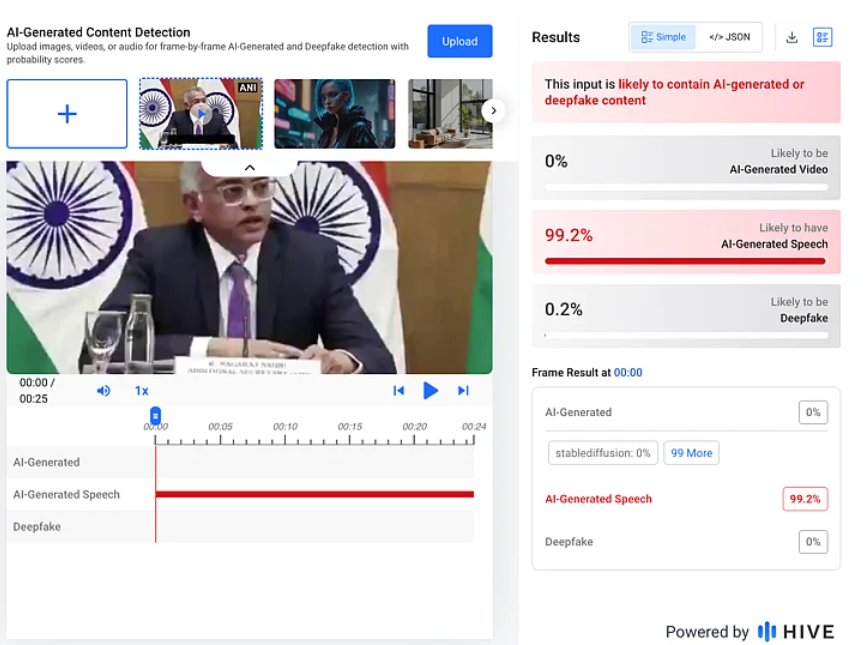

Since the viral statement was never made during the event, we further analysed the video using Deepfake Voice Detector and Hive Moderation’s AI-generated content detection tool. The Hive Moderation analysis indicated a 99.2% probability that the audio in the viral video is AI-generated.

Conclusion

CyberPeace Research Wing research found that the viral video is digitally altered. The Additional Secretary did not make any such statement during the official briefing. The audio in the clip has been manipulated and is being circulated with a misleading narrative.

Executive Summary

A picture allegedly showing Sunrisers Hyderabad (SRH) owner Kavya Maran emotionally hugging young cricketer Vaibhav Suryavanshi has gone viral on social media. The image is being shared as a genuine photograph from a cricket-related event, with users claiming that Kavya Maran was seen embracing Vaibhav Suryavanshi. However, CyberPeace Research Wing research found the claim to be false. No credible news reports, official statements, or authentic photographs support the incident depicted in the viral image.

Claim

A Facebook user shared the viral image with the caption: “Kavya Maran Hug Vaibhav Suryavanshi 🥰🔥 #cricketnews #RRvsSRH” The link to the post and its screenshot are provided below.

Fact Check

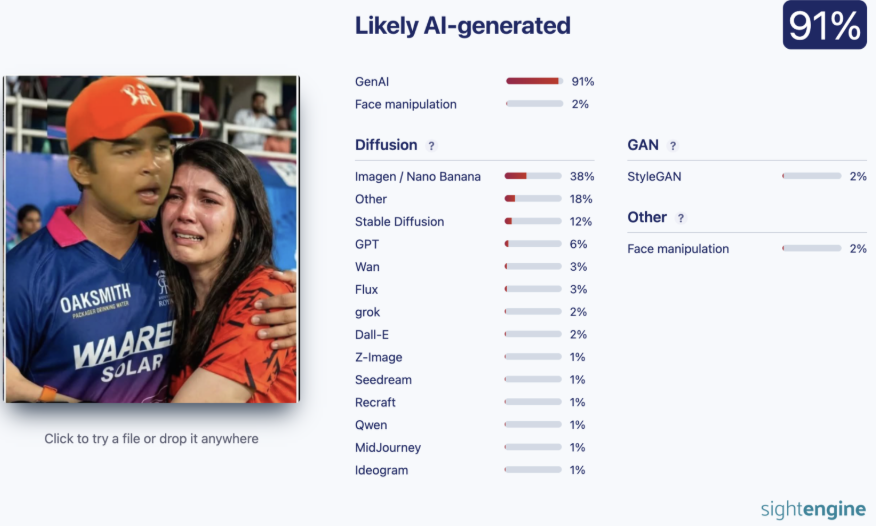

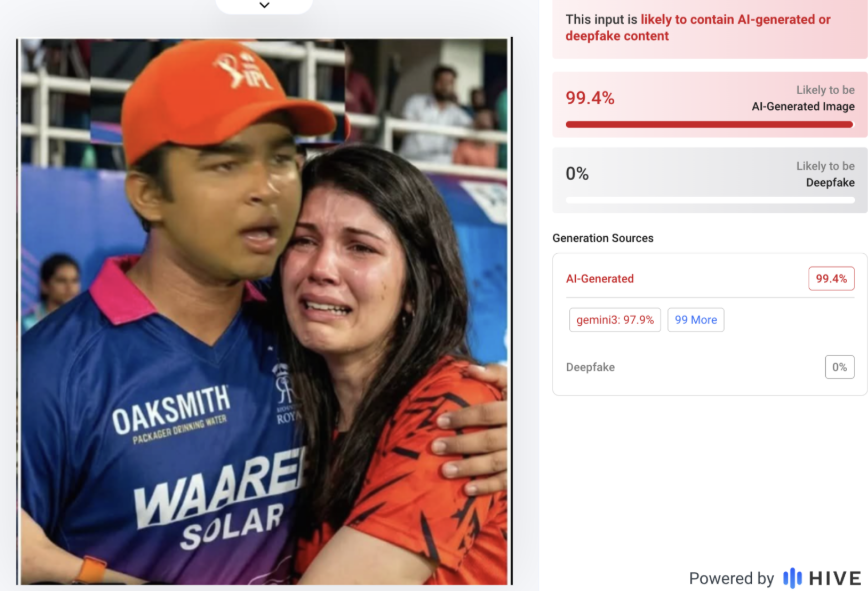

During the research, we found no credible news reports, official statements, or authentic images confirming that Kavya Maran hugged Vaibhav Suryavanshi as shown in the viral picture. To further verify the image, it was analysed using AI detection tools, including Sightengine and Hive Moderation. Both tools indicated a high probability that the image was generated using Artificial Intelligence. The findings suggest that the viral photograph is not a genuine image captured at a real event but a digitally created visual.

Conclusion

Our research found that the viral image showing Kavya Maran emotionally hugging Vaibhav Suryavanshi is not authentic. The picture was generated using AI and does not depict a real incident.

Executive Summary

A video purportedly showing Italian Prime Minister Giorgia Meloni angrily addressing a room full of delegates before throwing a bundle of papers and storming out has gone viral on social media. The clip is being shared alongside claims that Meloni terminated all agreements with Israel following growing tensions over the conflict in the Middle East. However, CyberPeace Research Wing research found that the viral video is not authentic. The clip was generated using Artificial Intelligence (AI).

Claim

On April 24, 2026, an X user shared the viral video with the caption: “Italy's woman Prime Minister has terminated all agreements with Israel!! Italy's woman Prime Minister is far more courageous and fearless than the leaders of 56 Islamic nations.”

- https://x.com/middle_East_up/status/2047597154257297878?s=20

- https://perma.cc/4EM9-5GS4

Fact Check

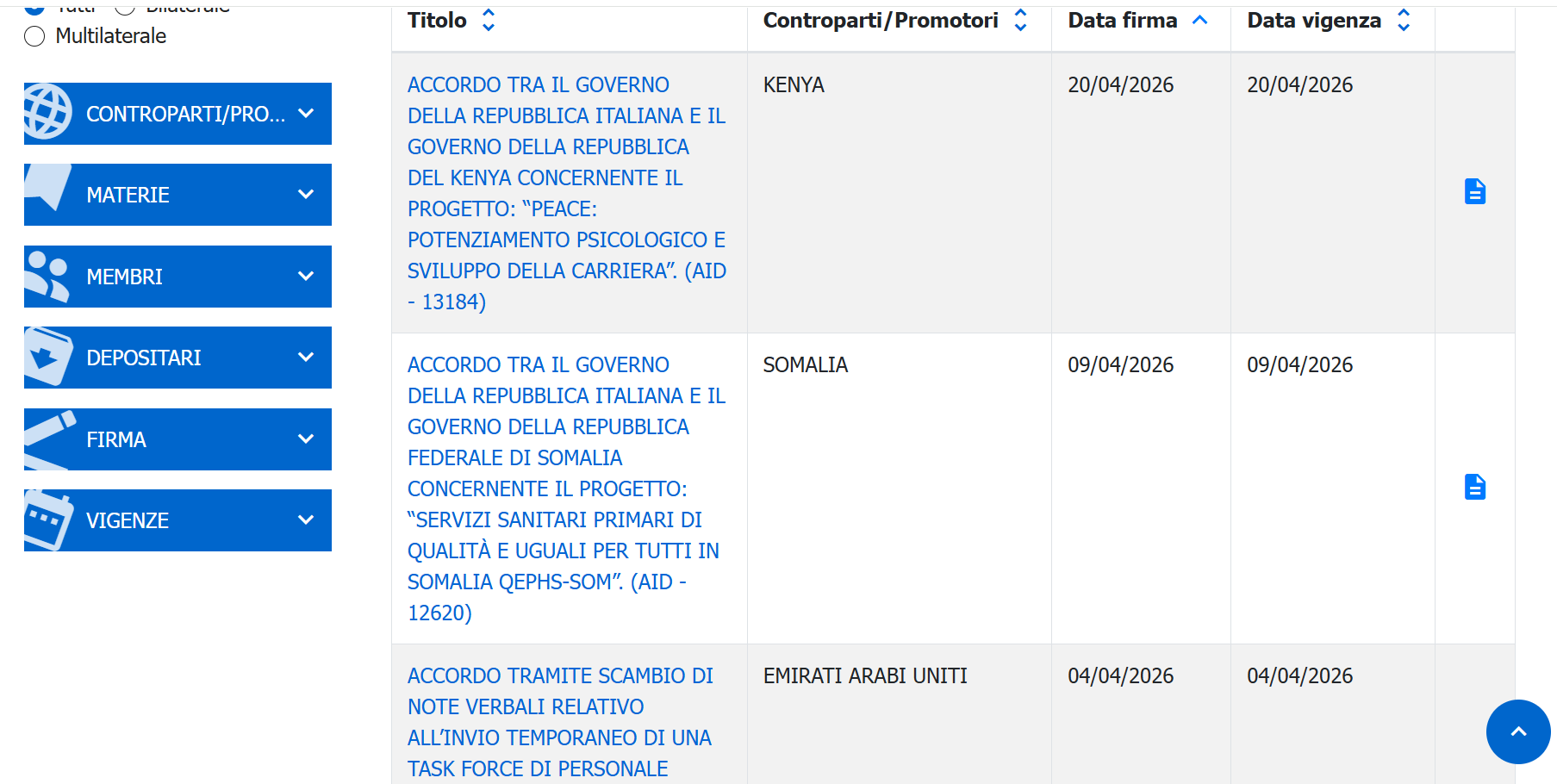

To verify the claim, we examined official records related to agreements between Italy and Israel. Data available from the Italian Ministry of Foreign Affairs and International Cooperation shows that multiple bilateral agreements between the two countries remain in force in 2026.

- https://atrio.esteri.it/Home/Search

Further research found reports related to discussions within the European Union regarding the suspension of certain cooperation arrangements with Israel. During a meeting of EU foreign ministers in Luxembourg, Spain and Ireland renewed calls to review the EU-Israel Association Agreement. However, Italian Foreign Minister Antonio Tajani reportedly stated that no decision would be taken that day.

A closer examination of the viral clip revealed several visual inconsistencies commonly associated with AI-generated content, including unnatural facial movements, irregular body gestures, and unrealistic scene transitions.

To further verify the footage, we analysed it using the DeepFake-o-Meter tool. Results from three separate detection models indicated that the video was likely generated using artificial intelligence.

Conclusion

CyberPeace Research Wing research found that the viral video allegedly showing Italian Prime Minister Giorgia Meloni angrily terminating agreements with Israel is AI-generated. There is no evidence that the incident shown in the clip actually occurred.

Executive Summary

A video of Prime Minister Narendra Modi is being widely shared on social media with the claim that he is being “made up” or styled by a team, with users attempting to mock him using the footage. The clip is being circulated with misleading captions suggesting it shows the Prime Minister undergoing makeup and grooming by a dedicated team. CyberPeace Foundation Research Wing, in its research, found that the viral claim is false. In fact, the viral video is not recent, but from 2016. At that time, a statue of PM Modi was to be installed at Madame Tussauds Museum. A team of artists and experts visited the Prime Minister's residence to take measurements for the statue. This misleading claim has been circulating on social media for several years. We have previously fact-checked this claim and exposed the truth.

Claim

An X user named “Adv Shubham” shared the viral video on May 27, 2026, with the caption: “This is how Modi Ji is styled…” The post also claims that the Prime Minister is regularly “made up” by a team.

- https://x.com/AdvShubhamllb/status/2059682034289946746

- https://perma.cc/FVM9-PQBP

Fact Check

The viral video is not recent. It dates back to 2016 and is related to a completely different context. During the investigation, we found the same footage on the official YouTube channel of Madame Tussauds London, uploaded on March 16, 2016.

According to the video details, it shows the process of taking measurements for a wax statue of Prime Minister Narendra Modi for installation at Madame Tussauds. A team of artists and experts had visited the Prime Minister’s residence in Delhi for this purpose.

Conclusion

The viral claim that Prime Minister Narendra Modi is being “made up” by a team is false. The footage is from 2016 and shows a measurement session conducted for his wax statue at Madame Tussauds Museum.

Executive Summary

A social media post featuring a graphic attributed to Navbharat Times and BJP MP Ravi Kishan is being widely circulated. The post falsely claims that Ravi Kishan made a controversial statement saying, “Narendra Modi should be ashamed, Meloni is of his granddaughter’s age.” CyberPeace Research Wing investigation found that the claim is false. In the original Navbharat Times postcard, Ravi Kishan is seen saying that people should stop criticising the Prime Minister, otherwise they may have to face consequences.

Claim

An X (formerly Twitter) user named “Prem Jai Moolnivasi Paswan” shared the viral post and alleged that Ravi Kishan made objectionable remarks targeting Prime Minister Narendra Modi.

- https://www.facebook.com/premjaymulnivasi.paswan/posts/pfbid02Drv387K2ugesnP9vvbJXgqU7yTZkrUhVAB5y9euzHuxpigWa8uDEnu3E2T6oox8Wl?rdid=4VbtbVi1G8bgo12d

- https://archive.ph/NHBXP

Fact Check

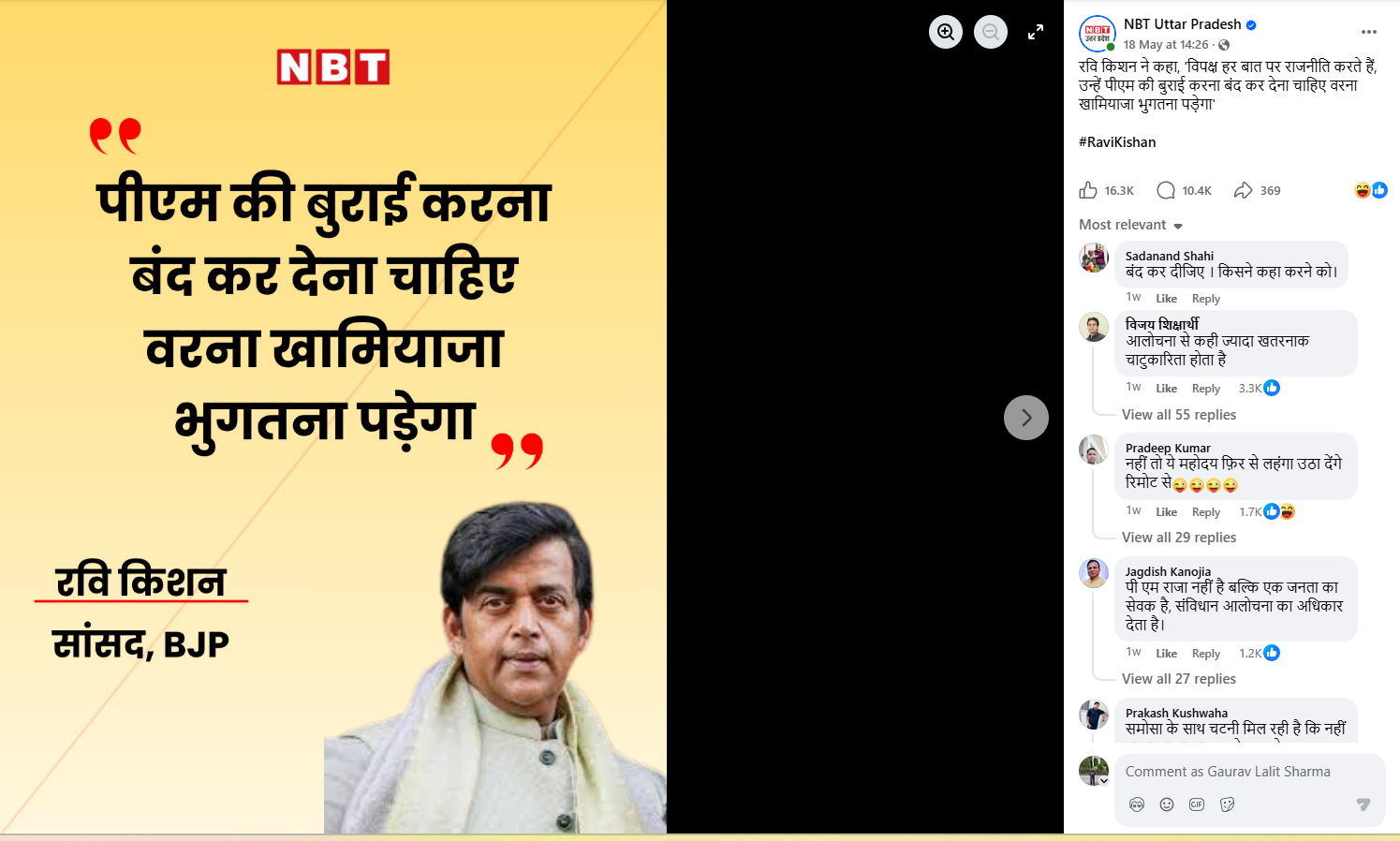

During the investigation, we searched for the alleged statement using relevant keywords but found no credible reports or evidence supporting the claim. We then examined Navbharat Times’ official social media handles. A similar-looking but authentic postcard was found on the official Facebook page of Navbharat Times (Uttar Pradesh) dated May 18, 2026.

The original post quoted Ravi Kishan differently, stating that criticism of the Prime Minister should be avoided, or else consequences may follow. Nowhere in the authentic post is the viral controversial remark mentioned.

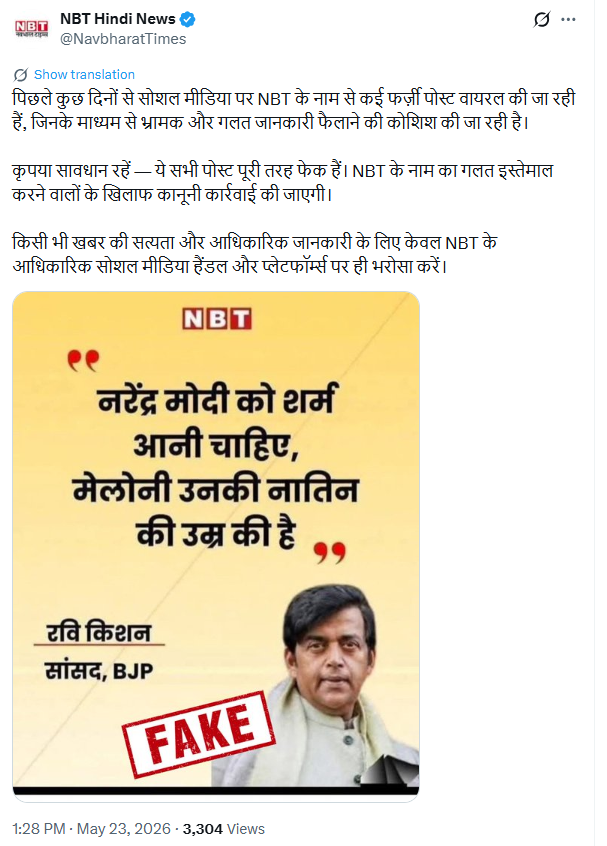

Further investigation revealed that Navbharat Times itself issued a clarification on its official X handle, stating that the viral post is fake and that legal action would be taken against misuse of its name.

Conclusion

The viral claim is false. The authentic Navbharat Times postcard shows Ravi Kishan saying that criticism of the Prime Minister should be stopped, or there would be consequences. The viral graphic has been altered to spread misinformation.

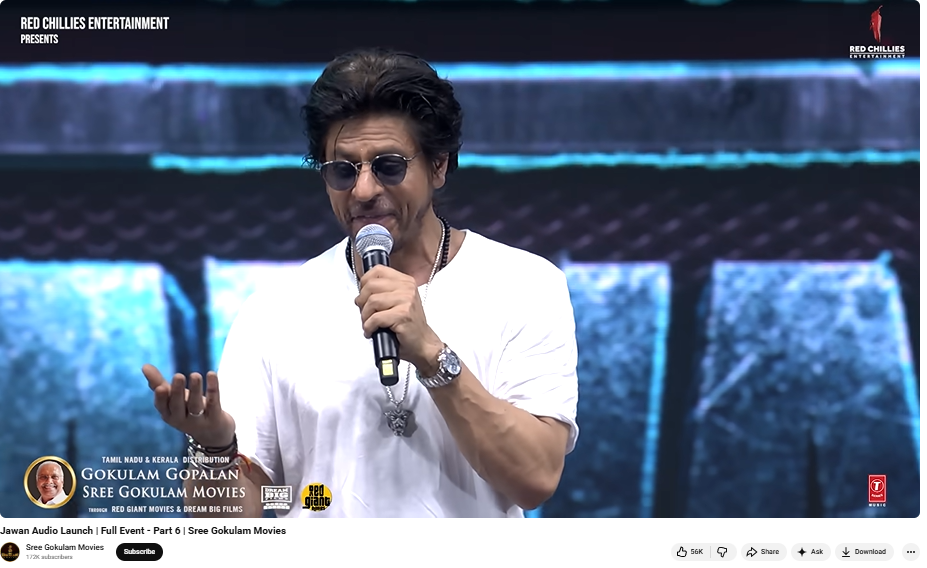

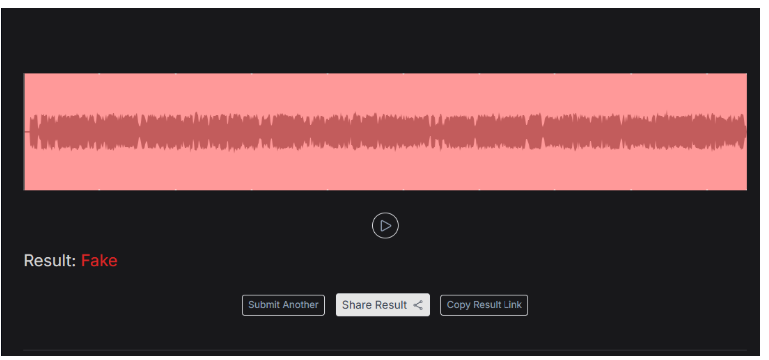

Executive Summary

A video allegedly showing Bollywood actor Shah Rukh Khan supporting and expressing his intention to join the so-called ‘Cockroach Janta Party’ (CJP) is being widely shared on social media. In the viral clip, Shah Rukh Khan can allegedly be heard saying: “Friends, the common people of this country are now fully awakened, and the storm of Cockroach Janta Party on social media has become so huge that its name is echoing everywhere… In just a few days, it has gained more than 15 million followers on Instagram… and honestly, I too will soon join the Cockroach Janta Party…”

However, CyberPeace Research Wing investigation found the claim to be false. The voice heard in the viral clip is AI-generated.

Claim

The viral video is being shared with the claim that actor Shah Rukh Khan publicly endorsed the ‘Cockroach Janta Party’ (CJP) and announced that he would soon join the movement.

- https://archive.is/wWueV

Fact Check

To verify the authenticity of the viral video, we first searched the internet using relevant keywords. However, we found no credible media reports, interviews, or posts from Shah Rukh Khan’s official social media accounts mentioning any support for the ‘Cockroach Janta Party’. Notably, if a major actor like Shah Rukh Khan had publicly supported any political or social media movement, it would have received widespread media coverage.

We then analysed key frames from the viral clip using Google Lens. During the investigation, we found an original video uploaded on September 10, 2023, on the YouTube channel of Sri Gokulam Movies. The footage was from the audio launch event of the film Jawan, where Shah Rukh Khan appeared in the same outfit seen in the viral clip.

In the original video, Shah Rukh Khan is seen speaking about the film, its cast, music, and his experience during the event. At no point does he mention the ‘Cockroach Janta Party’. The video description states that Shah Rukh Khan and the film’s team attended the audio launch event of Jawan in Chennai, where he praised music composer Anirudh Ravichander and thanked artists from the Tamil film industry. Additionally, the online trend related to the ‘Cockroach Janta Party’ emerged only in May 2026, whereas the original video is nearly three years old.

During the investigation, we also found several media reports covering the Jawan audio launch event, showing Shah Rukh Khan in the same attire as seen in the viral clip. For instance, a report published by Hindustan Times extensively covered the Chennai event, confirming that the viral footage was taken from the promotional event of the film.

To further examine the audio in the viral clip, we analysed it using the AI detection tool Resemble AI. The tool flagged the voice in the video as likely fake and AI-generated.

Conclusion

The investigation clearly shows that the claim about Shah Rukh Khan supporting or joining the ‘Cockroach Janta Party’ (CJP) is false. The viral video is actually from the 2023 audio launch event of the film Jawan, while the audio added to the clip has been generated using AI.

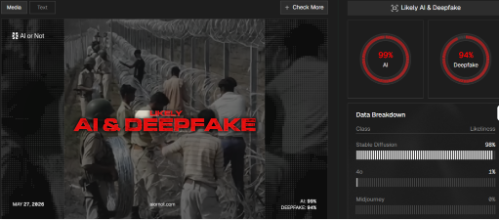

Executive Summary

A video showing people installing fencing along a border has gone viral on social media. In the clip, several individuals along with security personnel can be seen laying barbed wire fencing near a border area. The video is being shared with the claim that it shows fencing work underway on the India-Bangladesh border in West Bengal after the Bharatiya Janata Party (BJP) allegedly came to power in the state.

However, CyberPeace Research Wing investigation found the viral claim to be false. The video is not from a real incident and has been created using Artificial Intelligence (AI).

Claim

An X user named “Gopal Sanatani” shared the viral video on May 26, 2026, with the caption: “Voting in the right place keeps the country secure. Now no outsider will be able to snatch the rights of Indian citizens.” The archived link to the post is provided below.

Fact Check

To verify the authenticity of the viral claim, we extracted several key frames from the video and conducted reverse image searches using Google. However, we could not find any credible information or authentic reports related to the visuals shown in the clip. We also searched using relevant keywords on Google, but no trustworthy news reports connected to the claim were found.

Upon closely examining the video, several visual inconsistencies became noticeable. At one point, an object resembling a piece of paper suddenly appears in a soldier’s hand and then disappears moments later. In another scene, a man installing the fencing appears to pass directly through the barbed wire and emerges unharmed, despite the sharp wires visible in the video. These irregularities raised suspicion that the clip had been artificially generated. To further investigate, we analysed the video using AI detection tools. The analysis conducted through Hive Moderation indicated a high probability — around 80 percent — that the video was AI-generated.

Additionally, the video was examined using another AI detection platform, “AI or Not,” which indicated nearly a 99 percent likelihood that the clip had been created using artificial intelligence.

Conclusion

Our investigation found that the viral video claiming to show fencing work along the West Bengal-Bangladesh border is fake. The footage does not depict a real incident and was generated using Artificial Intelligence (AI).