#FactCheck: Debunking the Edited Image Claim of PM Modi with Hafiz Saeed

Executive Summary:

A photoshopped image circulating online suggests Prime Minister Narendra Modi met with militant leader Hafiz Saeed. The actual photograph features PM Modi greeting former Pakistani Prime Minister Nawaz Sharif during a surprise diplomatic stopover in Lahore on December 25, 2015.

The Claim:

A widely shared image on social media purportedly shows PM Modi meeting Hafiz Saeed, a declared terrorist. The claim implies that Modi is acting against India's interests or is aligned with terrorists.

Fact Check:

Through our research and a reverse image search, we found that the Press Information Bureau (PIB) had tweeted about the visit on 25 December 2015, noting that PM Narendra Modi was warmly welcomed by then-Pakistani PM Nawaz Sharif in Lahore. The tweet included several images of the original meeting between Modi and Sharif, taken from various angles. On the same day, PM Modi also tweeted that he had spoken with Nawaz Sharif and extended birthday wishes. Additionally, there are no credible reports of any meeting between Modi and Hafiz Saeed, which further indicates that the viral image is digitally altered.

Further research led us to an identical photo showing former Pakistani Prime Minister Nawaz Sharif in place of Hafiz Saeed. It was shared by Hindustan Times on X (then Twitter) on 26 December 2015, further indicating that the viral image has been manipulated.

Conclusion:

The viral image claiming to show PM Modi with Hafiz Saeed is digitally manipulated. A reverse image search and official posts from the PIB and PM Modi confirm the original photo was taken during Modi’s visit to Lahore in December 2015, where he met Nawaz Sharif. No credible source supports any meeting between Modi and Hafiz Saeed, clearly proving the image is fake.

- Claim: A viral image shows PM Modi meeting Hafiz Saeed

- Claimed On: Social Media

- Fact Check: False and Misleading


Introduction

Recent advances in space exploration and technology have increased the need for space laws to control the actions of governments and corporate organisations. India has been attempting to create a robust legal framework to oversee its space activities because it is a prominent player in the international space business. In this article, we’ll examine India’s current space regulations and compare them to the situation elsewhere in the world.

Space Laws in India

India began its space journey with Aryabhata, its first satellite, and Rakesh Sharma, the first Indian astronaut, and now has a prominent presence in space, launching many international satellites. NASA and ISRO work closely on various projects.

India currently lacks dedicated space legislation. Only a few laws and regulations, such as the Indian Space Research Organisation (ISRO) Act of 1969 and the National Remote Sensing Centre (NRSC) Guidelines of 2011, regulate space-related operations. However, these rules and regulations are not sufficient to govern India’s expanding space sector, especially as India gains traction as a prospective player in the global commercial space market. Authorisation, contracts, dispute resolution, licensing, data processing and distribution for earth observation services, certification of space technology, insurance, legal issues related to launch services, and stamp duty are just a few of the topics that need to be addressed. The relevant statutes need to be updated to incorporate space-law matters into domestic law.

India’s Space Presence

Space research activities began in India in the early 1960s, when satellite applications were at an experimental stage even in the United States. After the live transmission of the Tokyo Olympic Games across the Pacific by the American satellite ‘Syncom-3’ demonstrated the power of communication satellites, Dr Vikram Sarabhai, the founding father of the Indian space programme, quickly recognised the benefits of space technologies for India.

As a first step, the Department of Atomic Energy formed the INCOSPAR (Indian National Committee for Space Research) under the leadership of Dr Sarabhai and Dr Ramanathan in 1962. The Indian Space Research Organisation (ISRO) was formed on August 15, 1969. The prime objective of ISRO is to develop space technology and its application to various national needs. It is one of the six largest space agencies in the world. The Department of Space (DOS) and the Space Commission were set up in 1972, and ISRO was brought under DOS on June 1, 1972.

Since its inception, the Indian space programme has been well orchestrated. It has three distinct elements: satellites for communication and remote sensing, the space transportation system, and application programmes. Two major operational systems have been established: the Indian National Satellite (INSAT) system for telecommunication, television broadcasting, and meteorological services, and the Indian Remote Sensing Satellite (IRS) system for monitoring and managing natural resources and supporting disaster management.

Global Scenario

The global space race has continued ever since the 1969 Moon landing and has now turned into a new cold war among developed and developing nations. A nation’s interests and assets in space need to be safeguarded with the help of effective policies and internationally ratified laws. Not all nations with a presence in space follow a policy of good for all, so preventive measures need to be built into the legal system.

A thorough legal framework for space activities is being developed by the United Nations Office for Outer Space Affairs (UNOOSA). The “Outer Space Treaty,” one of five United Nations treaties on outer space, establishes the foundation of international space law. Together, these agreements address topics such as the peaceful use of space, preventing the militarisation of space, and liability for damage caused by space objects.

Both the United States and the United Kingdom are governed by well-established space laws. In the US, the National Aeronautics and Space Act of 1958 established the National Aeronautics and Space Administration (NASA) to oversee national space programmes. In the UK, the Outer Space Act of 1986 governs how citizens and businesses can engage in space activity.

Conclusion

India must create a thorough legal system to govern its space endeavours. The space sector needs a legal framework to avoid ambiguity and confusion, which could have detrimental effects. Domestic space legislation in India should cover the peaceful use of space for the benefit of humanity. The overall scenario demonstrates the need for a clearly defined legal framework for the international acknowledgement of a nation’s space activities. India is fifth in the world for space technology, which is an impressive accomplishment, and a strong legal system will help India maintain its place in the space business.

Introduction

In April 2025, security researchers at Oligo Security disclosed a substantial and wide-ranging threat affecting Apple's AirPlay protocol and the AirPlay Software Development Kit (SDK) used by third-party vendors. According to the research, the set of vulnerabilities, named "AirBorne", could enable remote code execution, permission bypasses, and leaks of private data across many Apple and third-party AirPlay-compatible devices. With more than 2.35 billion active Apple devices worldwide and tens of millions of third-party products that incorporate the AirPlay SDK, the scope of the problem is enormous. These wireless vulnerabilities are not only a technical threat but also a growing security concern for enterprises and consumers.

Understanding AirBorne: What’s at Stake?

AirBorne is the name given to a set of 23 vulnerabilities identified in the AirPlay communication protocol and the related SDK used by third-party vendors. Seventeen of them have been assigned official CVE identifiers. The most severe permit Remote Code Execution (RCE) with zero or limited user interaction, which could give attackers a foothold in home networks, business environments, and even cars equipped with CarPlay.

Types of Vulnerabilities Identified

AirBorne vulnerabilities enable a range of attack types, including:

- Zero-Click and One-Click RCE

- Access Control List (ACL) bypass

- User interaction bypass

- Local arbitrary file read

- Sensitive data disclosure

- Man-in-the-middle (MITM) attacks

- Denial of Service (DoS)

The vulnerabilities can be exploited individually or chained together to escalate access and broaden the attack surface.

Remote Code Execution (RCE): Key Attack Scenarios

- macOS – Zero-Click RCE (CVE-2025-24252 & CVE-2025-24206): These weaknesses let attackers run code on a macOS system without any user action, as long as the AirPlay receiver is enabled and configured to accept connections from anyone on the same network. The prospect of wormable malware spreading via corporate or public Wi-Fi networks is especially concerning.

- macOS – One-Click RCE (CVE-2025-24271 & CVE-2025-24137): If AirPlay is set to "Current User," attackers can exploit these CVEs to run malicious code after a single click from the user. This raises the threat level on shared office or home networks.

- AirPlay SDK Devices – Zero-Click RCE (CVE-2025-24132): Third-party speakers and receivers built on the AirPlay SDK are particularly susceptible, because exploitation requires no user interaction. Once a device is compromised, attackers could play unauthorised media, turn on microphones, or monitor private spaces.

- CarPlay Devices – RCE Over Wi-Fi, Bluetooth, or USB: CVE-2025-24132 also affects CarPlay-enabled systems. Under certain circumstances, nearby attackers can take advantage of predictable Wi-Fi credentials, intercept Bluetooth PINs, or use USB connections to take over dashboard features, which could distract drivers or allow eavesdropping on in-car conversations.
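The two macOS scenarios above hinge on a single receiver setting. As a minimal sketch, the article's conditions can be restated as a lookup that an audit script might use to report which scenario applies to an unpatched Mac. The `ReceiverSetting` labels are hypothetical and mirror the article's wording rather than Apple's exact interface strings; the CVE groupings are copied from the list above. The code does not detect vulnerabilities, it only encodes the stated conditions.

```python
from enum import Enum


class ReceiverSetting(Enum):
    """Hypothetical labels for the macOS AirPlay Receiver options described above."""
    DISABLED = "disabled"
    CURRENT_USER = "current_user"
    ANYONE_ON_NETWORK = "anyone_on_network"


# Scenario per setting, restated from the article for unpatched macOS systems:
# (scenario label, CVEs the article associates with that scenario)
MACOS_SCENARIOS = {
    ReceiverSetting.DISABLED: ("no receiver-based RCE scenario listed", ()),
    ReceiverSetting.CURRENT_USER: ("one-click RCE", ("CVE-2025-24271", "CVE-2025-24137")),
    ReceiverSetting.ANYONE_ON_NETWORK: ("zero-click RCE", ("CVE-2025-24252", "CVE-2025-24206")),
}


def macos_scenario(setting: ReceiverSetting) -> tuple[str, tuple[str, ...]]:
    """Return the article's RCE scenario and CVE list for an unpatched Mac."""
    return MACOS_SCENARIOS[setting]


if __name__ == "__main__":
    label, cves = macos_scenario(ReceiverSetting.ANYONE_ON_NETWORK)
    print(label, ", ".join(cves))
```

Keeping the mapping as data rather than nested conditionals makes it easy to extend if further advisories change which setting maps to which scenario.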

Other Exploits Beyond RCE

AirBorne also opens the door for:

- Sensitive Information Disclosure: Exposing private logs or user metadata over local networks (CVE-2025-24270).

- Local Arbitrary File Access: Letting attackers read restricted files on a device (CVE-2025-24270 group).

- DoS Attacks: Exploiting NULL pointer dereferences or malformed data to crash processes such as the AirPlay receiver or WindowServer, forcing user logouts or causing system instability (CVE-2025-24129, CVE-2025-24177, etc.).

How the Attack Works: A Technical Breakdown

AirPlay communicates over port 7000 using HTTP and RTSP, with messages typically encoded in Apple's property list (plist) format. The exploits stem from improper handling of these plists, especially where code skips type checking or assumes that untrusted data is well-formed. For instance, CVE-2025-24129 shows how a malformed plist can trigger type confusion that, depending on configuration, crashes the process or leads to code execution.
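The defensive counterpart to this bug class is to validate every field's type before using it. The sketch below uses Python's standard `plistlib` to decode an XML or binary plist and rejects anything that is malformed, not a dictionary, or carries a value of the wrong type. It is an illustration of the principle, not Apple's receiver code: the keys in `EXPECTED_TYPES` are hypothetical and are not taken from the AirPlay protocol.

```python
import plistlib
import xml.parsers.expat

# Hypothetical schema: these keys and types are illustrative only and are
# NOT taken from Apple's AirPlay protocol.
EXPECTED_TYPES = {
    "name": str,
    "volume": float,
    "sessionID": int,
}


class PlistValidationError(ValueError):
    """Raised when an incoming plist is malformed or does not match the schema."""


def _has_type(value: object, expected: type) -> bool:
    # bool is a subclass of int in Python; only accept it where bool is expected.
    if isinstance(value, bool):
        return expected is bool
    return isinstance(value, expected)


def parse_request(body: bytes) -> dict:
    """Decode an XML or binary plist and check each expected field's type before use."""
    try:
        obj = plistlib.loads(body)
    except (ValueError, xml.parsers.expat.ExpatError) as exc:
        # plistlib.InvalidFileException subclasses ValueError; malformed XML raises ExpatError.
        raise PlistValidationError(f"not a valid plist: {exc}") from exc
    if not isinstance(obj, dict):
        raise PlistValidationError(f"expected a dict at top level, got {type(obj).__name__}")
    for key, expected in EXPECTED_TYPES.items():
        if key not in obj:
            raise PlistValidationError(f"missing key {key!r}")
        if not _has_type(obj[key], expected):
            raise PlistValidationError(
                f"{key!r} should be {expected.__name__}, got {type(obj[key]).__name__}"
            )
    return obj


if __name__ == "__main__":
    ok = plistlib.dumps({"name": "Living Room", "volume": 0.5, "sessionID": 42})
    print(parse_request(ok)["sessionID"])
    # A string where a number is expected is rejected instead of being misused.
    bad = plistlib.dumps({"name": "Living Room", "volume": "0.5", "sessionID": 42})
    try:
        parse_request(bad)
    except PlistValidationError as err:
        print("rejected:", err)
```

Failing closed on the first mismatch keeps the rest of the handler free to assume correct types, which is exactly the assumption that type-confusion bugs exploit when it is made without checking.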

An attacker must be on the same Wi-Fi network as the target device. That access could come through a compromised laptop, a shared public wireless network, or an insecure corporate network. Once on the network, the attacker can use AirBorne bugs to hijack AirPlay-enabled devices. From there, malicious code could be deployed to spy, maintain long-term network access, or spread control to other devices on the network, potentially creating a botnet or stealing critical data.
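From a defender's side, the same-network precondition can be checked directly: if a machine on the network can open a TCP connection to port 7000 on a device, the AirPlay service is reachable from that machine. The following is a minimal sketch using only Python's standard library. The host addresses are hypothetical placeholders from a documentation range, and a successful connection only shows that something is listening on port 7000, not that the device is vulnerable.

```python
import socket

AIRPLAY_PORT = 7000  # port named in the article


def port_reachable(host: str, port: int = AIRPLAY_PORT, timeout: float = 0.5) -> bool:
    """Return True if a TCP connection to host:port succeeds within `timeout` seconds."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts, name-resolution failures and unreachable hosts.
        return False


if __name__ == "__main__":
    # Hypothetical device addresses; replace with hosts on a network you administer.
    for host in ["192.0.2.10", "192.0.2.11"]:
        state = "reachable" if port_reachable(host) else "not reachable"
        print(f"{host}:{AIRPLAY_PORT} {state}")
```

Running such a check from different network segments (for example, guest Wi-Fi versus the office LAN) shows whether segmentation actually keeps AirPlay receivers out of reach of untrusted machines.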

The Espionage Angle

Many third-party AirPlay-compatible devices, including smart speakers, have built-in microphones. In theory, this could turn such devices into eavesdropping tools. Although Oligo did not demonstrate a working exploit for espionage, the possibility underscores the seriousness of the situation.

The CarPlay Risk Factor

Beyond smart home devices, Oligo also found AirBorne vulnerabilities affecting Apple CarPlay. If exploited, they could let attackers take over a car's entertainment system. Fortunately, these attacks would typically require direct pairing over USB or Bluetooth, which makes them much less practical. Even so, they show that connected devices remain at risk in settings ranging from homes to cars.

How to Protect Yourself and Your Organisation

- Immediate Actions:

  - Update Devices: Ensure all Apple devices and third-party gadgets are updated to the latest software version.

  - Disable AirPlay Receiver: If AirPlay is not in use, disable it in system settings.

  - Restrict AirPlay Access: Use firewalls to block port 7000 from untrusted IPs.

  - Limit Receiver Access: Set AirPlay to “Current User” to limit network-based attacks.

- Organisational Recommendations:

  - Communicate the patch urgency to employees and stakeholders.

  - Inventory all AirPlay-enabled hardware, including in meeting rooms and vehicles.

  - Isolate vulnerable devices on segmented networks until they are updated.
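The firewall and segmentation advice above amounts to a default-deny rule for AirPlay traffic. As a minimal sketch, assuming the trusted networks listed in `TRUSTED_NETWORKS` (hypothetical placeholder ranges), the decision a firewall makes for each incoming connection to port 7000 can be expressed with Python's standard `ipaddress` module. A real deployment would express the same rule in the firewall's own configuration; this only illustrates the logic.

```python
import ipaddress

AIRPLAY_PORT = 7000  # port named in the article

# Hypothetical trusted segments; substitute the networks your organisation actually uses.
TRUSTED_NETWORKS = (
    ipaddress.ip_network("10.20.30.0/24"),
    ipaddress.ip_network("fd00:abcd::/64"),
)


def allow_connection(src_ip: str, dst_port: int, trusted=TRUSTED_NETWORKS) -> bool:
    """Permit AirPlay (port 7000) only from trusted networks.

    This sketch filters AirPlay traffic only; policy for other ports is out of
    scope, so they pass through unchanged.
    """
    if dst_port != AIRPLAY_PORT:
        return True
    addr = ipaddress.ip_address(src_ip)
    # Membership is False when IP versions differ, so IPv4 sources never match IPv6 ranges.
    return any(addr in net for net in trusted)


if __name__ == "__main__":
    for src, port in [("10.20.30.7", 7000), ("192.168.50.4", 7000), ("192.168.50.4", 443)]:
        verdict = "allow" if allow_connection(src, port) else "deny"
        print(f"{src} -> :{port} {verdict}")
```

Listing what is trusted, rather than what is blocked, means a newly connected or unknown subnet is denied AirPlay access by default until someone deliberately adds it.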

Conclusion

The AirBorne vulnerabilities show that even mature systems such as Apple's are not immune to foundational security weaknesses. The broad deployment of AirPlay across devices, industries, and ecosystems makes these vulnerabilities a systemic threat. Oligo's discovery prompted an immediate response from Apple, but because third-party devices may remain vulnerable, responsibility falls to users and organisations to install patches, apply robust configurations, and compartmentalise possible attack surfaces. Proactive cybersecurity hygiene, network segmentation, and timely patching are the strongest defences against these wormable, scalable attacks turning into large-scale breaches.

References

- https://www.oligo.security/blog/airborne

- https://www.wired.com/story/airborne-airplay-flaws/

- https://thehackernews.com/2025/05/wormable-airplay-flaws-enable-zero.html

- https://www.securityweek.com/airplay-vulnerabilities-expose-apple-devices-to-zero-click-takeover/

- https://www.pcmag.com/news/airborne-flaw-exposes-airplay-devices-to-hacking-how-to-protect-yourself

- https://cyberguy.com/security/hackers-breaking-into-apple-devices-through-airplay/

A video purportedly showing Rashtriya Swayamsevak Sangh (RSS) chief Mohan Bhagwat making remarks about the “saffronisation” of the Indian Army has been widely circulated on social media. The clip claims that Bhagwat called for the removal of non-Hindus from the armed forces and linked the issue to future political leadership changes in the country.

However, a verification by the Cyber Peace Foundation has established that the video is misleading and has been digitally manipulated.

Claim

In the video, Bhagwat is allegedly heard saying that unless more than 50 percent of non-Hindus are removed from the Indian Army by 2028, Prime Minister Narendra Modi would be replaced by Uttar Pradesh Chief Minister Yogi Adityanath. The clip further attributes another statement to him, suggesting that he would resign if the Prime Minister were to demand Nitish Kumar’s resignation.

By the time of publication, the video had been viewed over 7,000 times.

Fact Check:

A reverse image search led the Desk to a video uploaded on CNN-News18’s official YouTube channel on December 21, 2025. The footage turned out to be a longer version of the viral clip, recorded at the RSS centenary event held in Kolkata on the same date. A comparison of the two videos confirmed that the background visuals, stage setup and camera angles were identical.

However, a careful review of the original CNN-News18 video revealed that Mohan Bhagwat did not make any of the statements attributed to him in the viral clip.

In his original address, Bhagwat spoke about unity and referred to concerns over increasing atrocities against Hindus in Bangladesh. He made no reference to the Indian Army, nor did he comment on its composition or alleged saffronisation. Here is the link to the original video, along with a screenshot: https://www.youtube.com/watch?v=KnsAUGfBQBk&t=1s

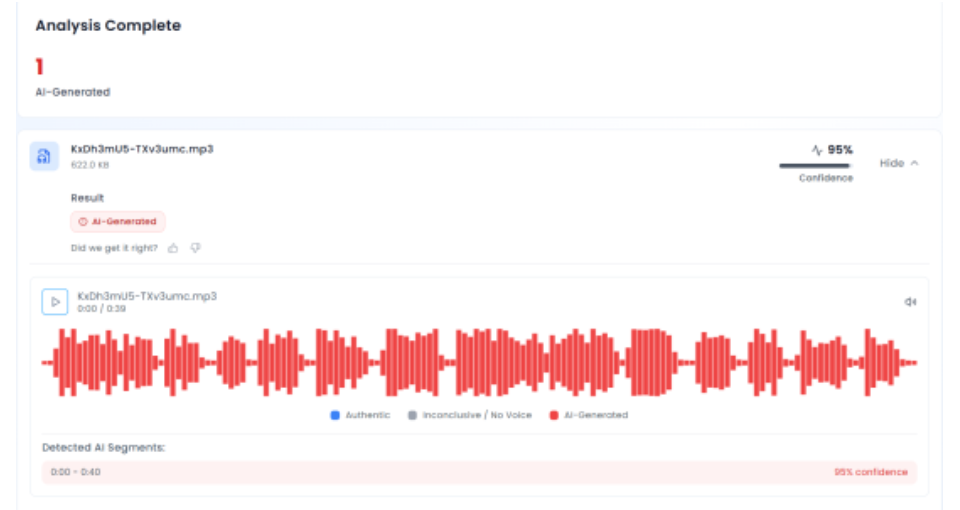

In the next phase of the investigation, the audio track from the viral video was extracted and analysed using the AI audio detection tool Aurigin. The tool’s assessment indicated that the voice heard in the clip was artificially generated, confirming that the audio did not originate from the original speech.

Conclusion

The claim that RSS chief Mohan Bhagwat called for the saffronisation of the Indian Army is false. PTI Fact Check found that the viral video was digitally manipulated, using genuine footage from an RSS centenary event but pairing it with an AI-generated audio track. The altered video was shared online to mislead viewers by falsely attributing statements Bhagwat never made.