#FactCheck: Fake Claim that US has used Indian Airspace to attack Iran

Executive Summary:

An online claim alleging that U.S. bombers used Indian airspace to strike Iran has been widely circulated, particularly on Pakistani social media. However, official briefings from the U.S. Department of Defense and visuals shared by the Pentagon confirm that the bombers flew over Lebanon, Syria, and Iraq. Indian authorities have also refuted the claim, and the Press Information Bureau (PIB) has issued a fact-check dismissing it as false. The available evidence clearly indicates that Indian airspace was not involved in the operation.

Claim:

Various Pakistani social media users [archived here and here] have alleged that U.S. bombers used Indian airspace to carry out airstrikes on Iran. One widely circulated post claimed, “CONFIRMED: Indian airspace was used by U.S. forces to strike Iran. New Delhi’s quiet complicity now places it on the wrong side of history. Iran will not forget.”

Fact Check:

Contrary to viral social media claims, official details from U.S. authorities confirm that American B-2 bombers used a Middle Eastern flight path, specifically flying over Lebanon, Syria, and Iraq, to reach Iran during Operation Midnight Hammer.

The Pentagon released visuals and unclassified briefings showing this route, and Joint Chiefs of Staff Chair Gen. Dan Caine explained that the bombers coordinated with support aircraft over the Middle East in a highly synchronized operation.

Additionally, Indian authorities have denied any involvement, and India’s Press Information Bureau (PIB) issued a fact-check debunking the false narrative that Indian airspace was used.

Conclusion:

In conclusion, official U.S. briefings and visuals confirm that B-2 bombers flew over the Middle East, not India, to strike Iran. Both the Pentagon and Indian authorities have denied any use of Indian airspace, and the Press Information Bureau has labeled the viral claims as false.

- Claim: Fake Claim that US has used Indian Airspace to attack Iran

- Claimed On: Social Media

- Fact Check: False and Misleading

Related Blogs


Introduction

The global food industry is vast and complex, influencing consumer behaviour, policy, and health outcomes worldwide. However, misinformation within this sector is pervasive, with significant consequences for public health and market dynamics. Misinformation can arise from various sources, including misleading marketing campaigns, unsubstantiated health claims, and misrepresentation of food production practices through public endorsement or otherwise. Nutrition misinformation is one such example. The promotion of false or unproven products for profit can mislead consumers and harm their interests. Misleading claims and inaccurate information about the nutritional value of food products and processes are common. Food misinformation on the global stage distorts public understanding of nutrition, food safety, and environmental impacts, leading to significant consequences for public health, consumer trust, and the economy.

Rise of Nutritional Misinformation and Consumer Distrust

Health and nutrition-related misinformation is one of the most prevalent types in the food sector. Businesses frequently advertise their products as "natural" or "healthy" without providing sufficient data to back up these claims, tricking customers into buying goods that might be heavy in fat, sugar, or salt. Words like "superfood" are frequently used without supporting evidence from science, giving the impression that they are healthier.

Misinformation also impacts the sustainability and ethics of food production. Claims of "sustainable" or "ethical" sourcing are frequently exaggerated or fabricated, leaving consumers unaware of the true environmental and social costs associated with certain products.

This lack of clarity is observed not only in general food trends but also within organisations meant to provide trustworthy information. The International Food Information Council (IFIC) has drawn significant criticism for its alleged promotion of nutrition-based misinformation to safeguard the interests of large food corporations, potentially compromising public health. The claims that IFIC made about nutrition were questioned by the National Institutes of Health, USA in November 2022. They reported in their study that IFIC promotes food and beverage company interests and undermines the accurate dissemination of scientific evidence related to diet and health. More broadly, there have been many claims that the nutritional value of certain foods or diets may be manipulated to favour business goals, leaving consumers misinformed about what constitutes a truly healthy diet.

Another source of misinformation is the growing ‘free-from’ fad. In the US, “free-from” is a category of food products that claim to be free from certain ingredients or chemicals, and it has been growing steadily by 7% annually. These labels often tout products as healthier due to a simpler ingredient list. Although the trend seems harmless, it often obscures transparency in ingredient disclosure. This can lead to consumer distrust in the long run, making them hesitant.

The Harmful Effects of Food Misinformation

The effects of misinformation about nutrition and food safety can directly affect public health.

Consumers may unknowingly accept false claims or avoid certain foods without any scientific basis, adopting harmful dietary habits that can lead to malnutrition or other health problems. By the time they realise they have been misled, their trust is eroded not only in such companies but also in the regulators. This distrust can lead to declining consumer confidence and disrupt market stability.

Some food-related misinformation downplays the environmental impact that certain food production practices have. An example of such a situation is the promotion of meat alternatives as being entirely eco-friendly without considering all environmental factors. This can mislead consumers and obscure the complex environmental effects of food production systems.

Misinformation can distort consumer purchasing habits, potentially leading to reduced demand for certain products and unfair competition. Small-scale producers suffer disproportionately in this case, while large corporations might use this misinformation to maintain their dominance in the market. Regulatory checks, open communication, and public education campaigns are needed to combat mis/disinformation in the global food sector and enable consumers to make decisions that are sustainable, healthful and informed.

CyberPeace Recommendations

- Unfair trade practices like providing misleading information or unchecked claims on food products should be better addressed by the regulators. Companies must provide clear, transparent and accurate information about their products as mandated under the Food Safety and Standards (Advertising and Claims) Regulations, 2018. This information should include the true origins, production methods, and nutritional content on their labels.

- Initiatives and investments by public health organisations and food authorities towards educating consumers and improving food literacy should be encouraged.

- Regulating social media endorsement is also crucial to prevent the spread of misinformation and unchecked claims. Without proper due diligence on product details, influencers may unknowingly mislead their audience, causing potential harm.

- Social media platforms can partner with nutritionists, dietitians, and other health professionals who are content creators, as they can help audiences understand accurate, science-based nutrition information and debunk misleading claims.

- Campaigns should be encouraged to spread public awareness about the harms of food-related misleading claims or trends. Emphasis should be on evidence-based nutritional guidance. The ongoing research towards food safety, nutrition, and true information should be actively communicated to keep the public informed. Combating food misinformation requires more robust regulations, improved transparency, and heightened consumer awareness and vigilance.

References

- https://timesofindia.indiatimes.com/india/label-claims-on-packaged-food-could-be-misleading-icmr/articleshow/110053363.cms

- https://www.outlookindia.com/hub4business/empowering-change-freedom-food-alliance-takes-on-global-food-industry-misinformation

- https://insightsnow.com/misinformation-hurting-food-business/

- https://www.ncbi.nlm.nih.gov/pmc/articles/PMC9618198/pdf/12992_2022_Article_884.pdf

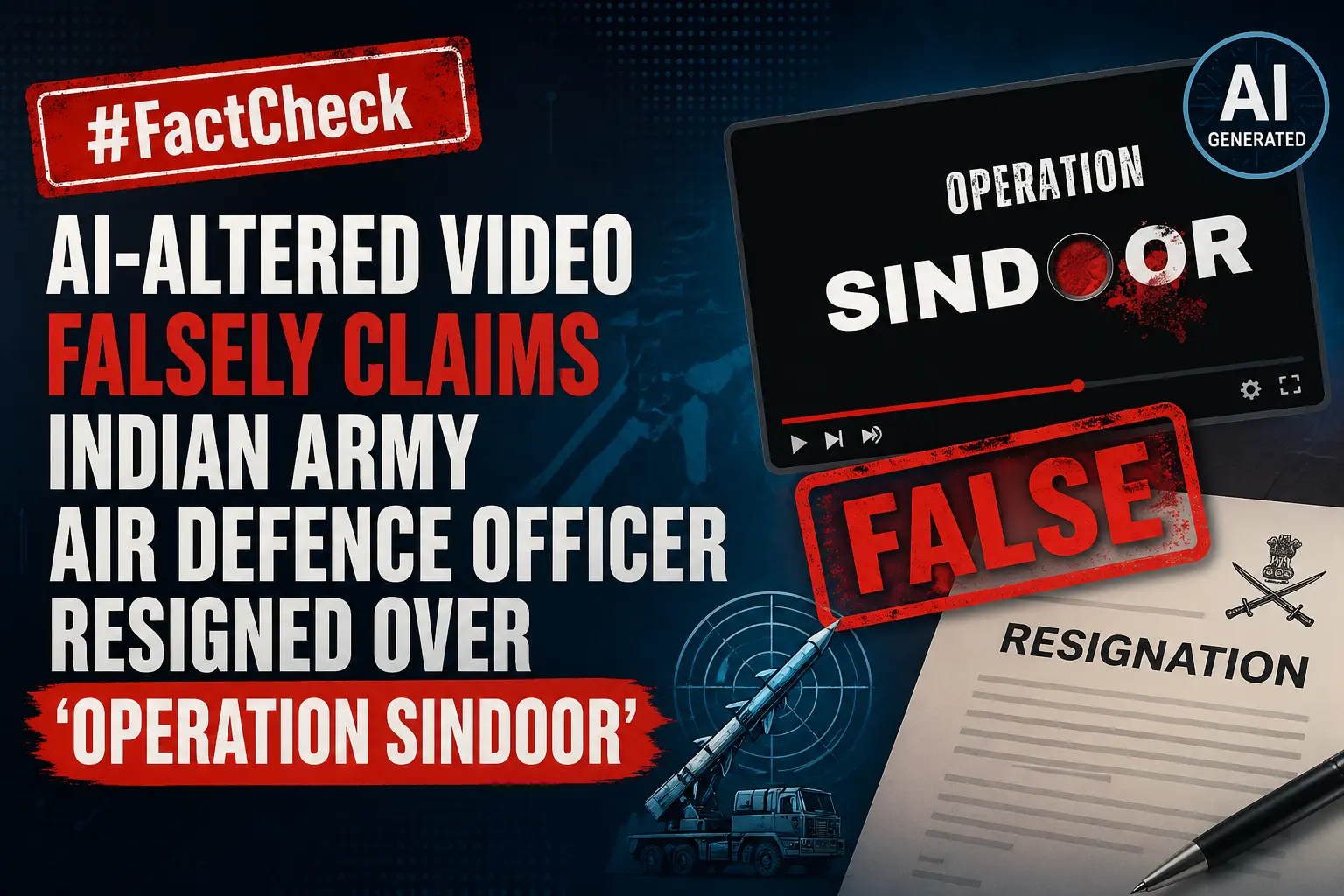

Executive Summary

A video of a soldier is being widely circulated on social media with the claim that an Indian Army Air Defence officer named Anurag Thakur resigned, alleging that soldiers martyred during “Operation Sindoor” were ignored by the government. However, research by the CyberPeace Research Wing found the claim to be false. The viral video has been manipulated with AI-generated audio and is being shared with a misleading narrative.

Claim:

Instagram users shared the clip claiming: “Indian Army Air Defence officer Anurag Thakur has resigned. He said the Government of India did not even acknowledge the deaths of soldiers.”

Fact Check:

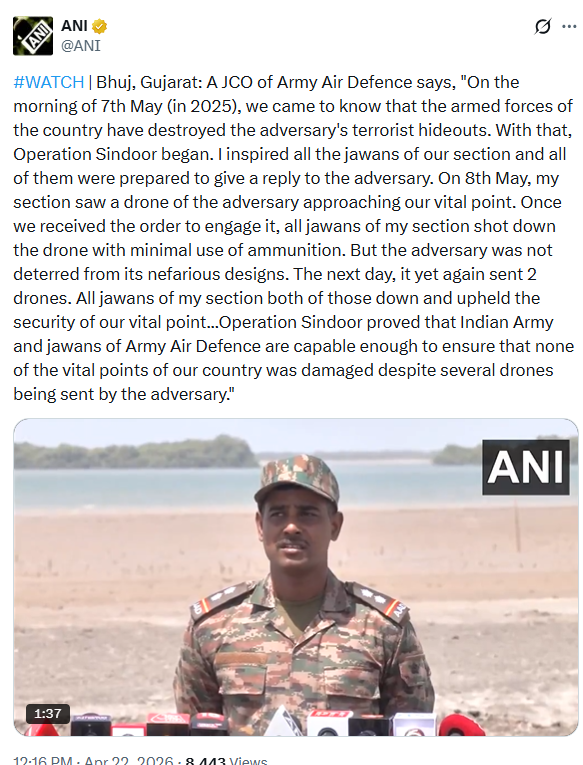

The research began with keyword searches related to the alleged resignation of an “Indian Army Air Defence JCO Anurag Thakur.” No credible or reputed media report was found supporting such a claim. A reverse image search of a frame from the viral video led to the original footage posted by news agency ANI on its official X account on March 22, 2026. The original video runs for 1 minute and 42 seconds. A comparison of both videos showed that in the viral clip, the soldier appears to be speaking in English, whereas in ANI’s authentic video, the same soldier is speaking in Hindi while addressing the media.

In the original video, shared by ANI from Bhuj, Gujarat, the JCO explained that on the morning of May 7, 2025, they learned that Indian armed forces had destroyed enemy terror launch pads, marking the beginning of “Operation Sindoor.” He said he motivated his unit and they were prepared to respond. He further stated that on May 8, an enemy drone heading toward a vital location was detected and shot down using minimal ammunition. Two more drones were sent the following day and were also neutralised. He added that “Operation Sindoor” demonstrated the capability of the Indian Army and Air Defence units.

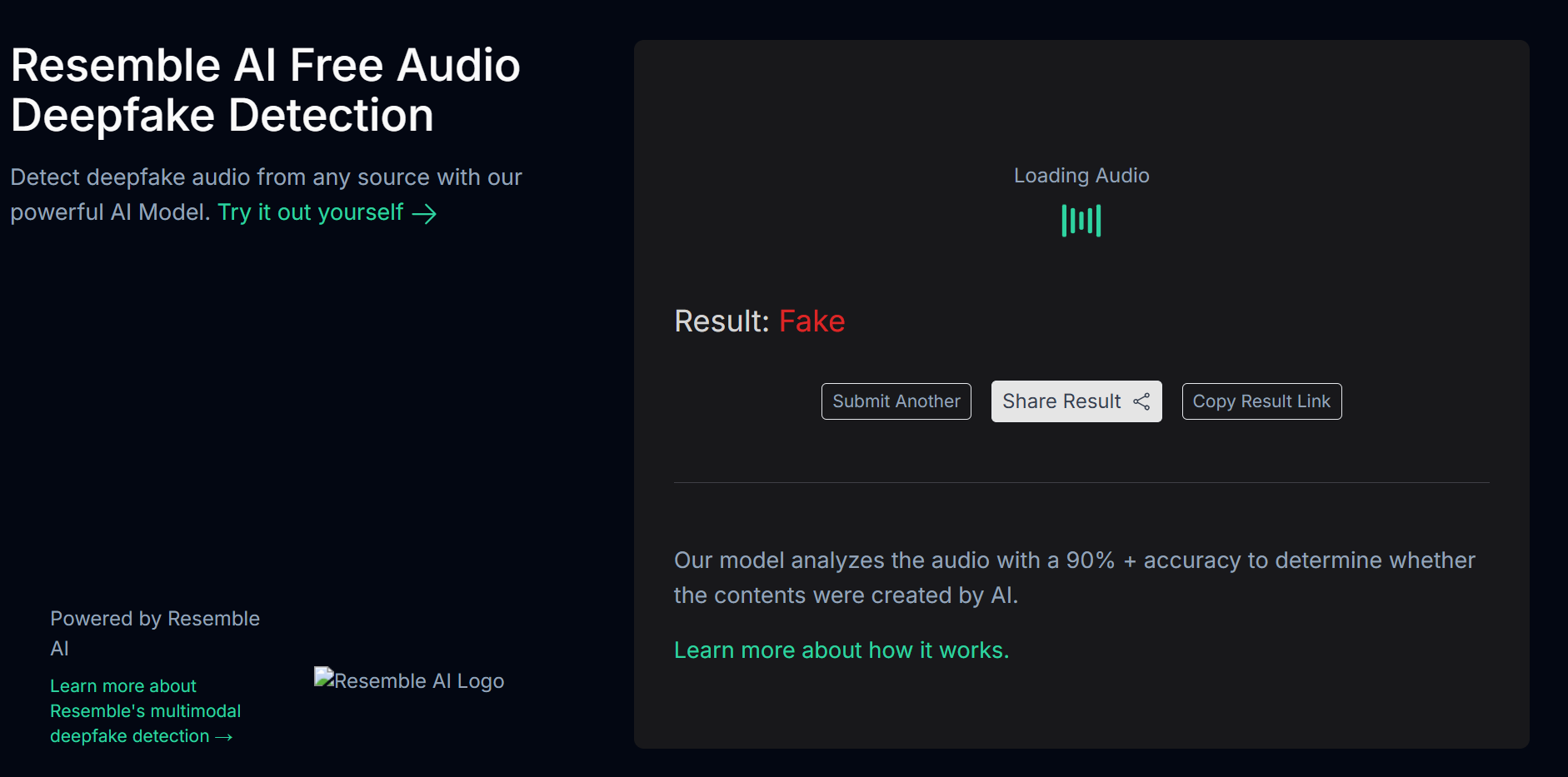

ANI had also summarised the same remarks in English in its post, which further confirmed that the viral version had been tampered with. For additional verification, the audio from the viral clip was examined using AI-based detection tools. Hiya Deepfake Voice Detector flagged it as likely fake, while Resemble AI also identified the audio as manipulated.

Conclusion:

The viral video claiming that an Indian Army Air Defence JCO resigned over ignored martyrs of “Operation Sindoor” is false. The original footage has been altered, and AI-generated audio was added to create a misleading narrative.

Introduction

In an alarming event, one of India’s premier healthcare institutes, AIIMS Delhi, has fallen victim to a malicious cyberattack for the second time in the year. The incident serves as a clear reminder of the escalating threat landscape faced by healthcare organisations in this digital age. The attack, which unfolded with grave implications, not only exploited vulnerabilities present in the healthcare sector but also raised concerns about the security of patient data and the uninterrupted delivery of critical healthcare services. In this blog post, we will explore the incident, what happened, and what safety measures can be taken.

Backdrop

The cyber-security systems deployed in AIIMS, New Delhi, recently detected a malware attack. The nature and scope of the attack were both sophisticated and targeted. This second hack acts as a wake-up call for healthcare organisations nationwide. As the healthcare business increasingly depends on digital technology to improve patient care and operational efficiency, cybersecurity must be prioritised to protect sensitive data. To minimise cyber-attack dangers, healthcare organisations must invest in robust defences such as multi-factor authentication, network security, frequent system upgrades, and employee training.

The attempt was successfully prevented, and the deployed cyber-security systems neutralised the threat. The e-Hospital services remain fully secure and are functioning normally.

Impact on AIIMS

Healthcare services have been on hackers’ radar worldwide, and the healthcare sector has been badly affected. The effects of the attack on AIIMS Delhi have been both immediate and far-reaching. The organisation, which is recognised for delivering excellent healthcare services and performing breakthrough medical research, faced significant interruptions in its everyday operations. Patient care and treatment processes were considerably impeded, resulting in delays, cancellations, and the inability to access essential medical documents. The stolen data raises serious concerns about patient privacy and confidentiality, raising doubts about the institution’s capacity to protect sensitive information. Furthermore, the financial ramifications of the assault, such as the cost of recovery, forensic analyses, deploying more robust cybersecurity measures, and potential legal penalties, contribute to the scale of the effect. The event has also generated public concerns about the institution’s ability to preserve personal information, undermining confidence and degrading AIIMS Delhi’s image.

Impact on Patients: The attacks not only affect the institutes but also have serious implications for patients. Here are some key highlights:

Healthcare Service Disruption: The hack has affected the seamless delivery of healthcare services at AIIMS Delhi. Appointments, surgeries, and other medical treatments may be delayed, cancelled, or rescheduled. This disturbance can result in longer wait times, longer treatment periods, and potential problems from delayed or interrupted therapy.

Patient Privacy and Confidentiality: The breach of sensitive patient data jeopardises patient privacy and confidentiality. Medical data, test findings, and treatment plans may have been compromised. This breach may diminish patient faith in the institution’s capacity to safeguard their personal information, discouraging them from seeking care or submitting sensitive information in the future.

As a result of the cyberattack, patients may endure mental anguish and worry. Fear of possible exploitation of personal health information, confusion about the scope of the breach, and concerns about the security of their healthcare data can all have a negative impact on their mental health. This stress might aggravate pre-existing medical issues and impede total recovery.

Trust at stake: A data breach may harm patients’ faith and confidence in AIIMS Delhi and the healthcare system. Patients rely on healthcare facilities to keep their information secure and confidential while providing safe, high-quality care. A hack can cast doubt on the institution’s ability to safeguard patient data, affecting patients’ overall faith in the organisation and potentially leading to patients seeking care elsewhere.

Cybersecurity Measures

To avoid future hacks and protect patient data, AIIMS Delhi must prioritise enhancing its cybersecurity procedures. The institution can strengthen its resistance to changing threats by establishing strong security practices. The following steps can be considered.

Using Multi-factor Authentication: By requiring users to present several forms of identification to access systems and data, multi-factor authentication offers an extra layer of protection. AIIMS Delhi may considerably lower the danger of unauthorised access by applying this precaution, even in the case of leaked passwords or credentials. Biometrics and one-time passwords, for example, should be integrated into the institution’s authentication systems.
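To make the one-time-password idea more concrete, here is a minimal sketch of a time-based one-time password check in Python, following the publicly documented HOTP (RFC 4226) and TOTP (RFC 6238) algorithms. It is an illustration only and not a description of any system used at AIIMS Delhi. The function names `hotp`, `totp`, and `verify_second_factor`, the 30-second step, and the one-step drift window are assumptions chosen for this example.

```python
import hashlib
import hmac
import struct
import time


def hotp(secret: bytes, counter: int, digits: int = 6) -> str:
    """Counter-based one-time password as described in RFC 4226."""
    # The counter is encoded as an 8-byte big-endian integer.
    message = struct.pack(">Q", counter)
    digest = hmac.new(secret, message, hashlib.sha1).digest()
    # Dynamic truncation: the low 4 bits of the last byte pick an offset.
    offset = digest[-1] & 0x0F
    code = struct.unpack(">I", digest[offset:offset + 4])[0] & 0x7FFFFFFF
    return str(code % 10 ** digits).zfill(digits)


def totp(secret: bytes, at=None, step: int = 30, digits: int = 6) -> str:
    """Time-based variant (RFC 6238): the counter is the current time step."""
    now = time.time() if at is None else at
    return hotp(secret, int(now // step), digits)


def verify_second_factor(secret: bytes, submitted: str, at=None,
                         step: int = 30, window: int = 1) -> bool:
    """Hypothetical helper: accept codes from adjacent time steps as well,
    to tolerate small clock differences between server and device."""
    now = time.time() if at is None else at
    for drift in range(-window, window + 1):
        expected = totp(secret, now + drift * step, step)
        # Constant-time comparison avoids leaking how many digits matched.
        if hmac.compare_digest(expected, submitted):
            return True
    return False
```

In such a setup the code would be checked only after the password has been verified, so a leaked password alone would not be enough to log in. A real deployment would also need secure secret storage, rate limiting, and protection against code reuse, none of which are shown here.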

Improving Network Security and Firewalls: AIIMS Delhi should improve network security by implementing strong firewalls, intrusion detection and prevention systems, and network segmentation. These techniques serve to construct barriers between internal systems and external threats, reducing attackers’ lateral movement within the network. Regular network traffic monitoring and analysis can assist in recognising and mitigating any security breaches.

Risk Assessment: Regular penetration testing and vulnerability assessments are required to uncover possible flaws and vulnerabilities in AIIMS Delhi’s systems and infrastructure. Security professionals can detect vulnerabilities and offer remedial solutions by carrying out controlled simulated assaults. This proactive strategy assists in identifying and addressing any security flaws before attackers exploit them.

Educating and training Healthcare Professionals: Education and training have a crucial role in enhancing cybersecurity practices in healthcare facilities. Healthcare workers, including physicians, nurses, administrators, and support staff, must be well-informed about the importance of cybersecurity and trained in risk-mitigation best practices. This will empower healthcare professionals to actively contribute to protecting the patient’s data and maintaining the trust and confidence of patients.

Learnings from Incidents

AIIMS Delhi should embrace cyber-attacks as learning opportunities to strengthen its security posture. Following each event, a detailed post-incident study should be performed to identify areas for improvement, update security policies and procedures, and improve employee training programs. This iterative strategy contributes to the institution’s overall resilience and preparation for future cyber-attacks. AIIMS Delhi can effectively respond to cyber incidents, minimise the impact on operations, and protect patient data by establishing an effective incident response and recovery plan, implementing data backup and recovery mechanisms, conducting forensic analysis, and promoting open communication. Proactive measures, constant review, and regular revisions to incident response plans are critical for staying ahead of developing cyber threats and ensuring the institution’s resilience in the face of potential future assaults.

Conclusion

To summarise, developing robust healthcare systems in the digital era is a key challenge that healthcare organisations must prioritise. Healthcare organisations can secure patient data, assure the continuation of key services, and maintain patients’ trust and confidence by adopting comprehensive cybersecurity measures, building incident response plans, training healthcare personnel, and cultivating a security culture. Adopting a proactive and holistic strategy for cybersecurity is critical to developing a healthcare system capable of withstanding and successfully responding to digital-age problems.