#FactCheck - "AI-Generated Image of UK Police Officers Bowing to Muslims Goes Viral"

Executive Summary:

A viral picture on social media showing UK police officers bowing to a group of Muslims has sparked debate and discussion. An investigation by the CyberPeace Research Team found that the image is AI-generated. The viral claim is false and misleading.

Claims:

A viral image on social media depicts UK police officers bowing to a group of Muslim people on the street.

Fact Check:

We ran a reverse image search on the viral image. It did not lead to any credible news source or original post confirming the image's authenticity. In our image analysis, we found a number of anomalies typical of AI-generated images, including in the uniforms and facial expressions of the police officers. In addition, the shadows and reflections on the officers' uniforms did not match the lighting of the scene, and the facial features of the individuals in the image appeared unnaturally smooth and lacked the detail expected in real photographs.

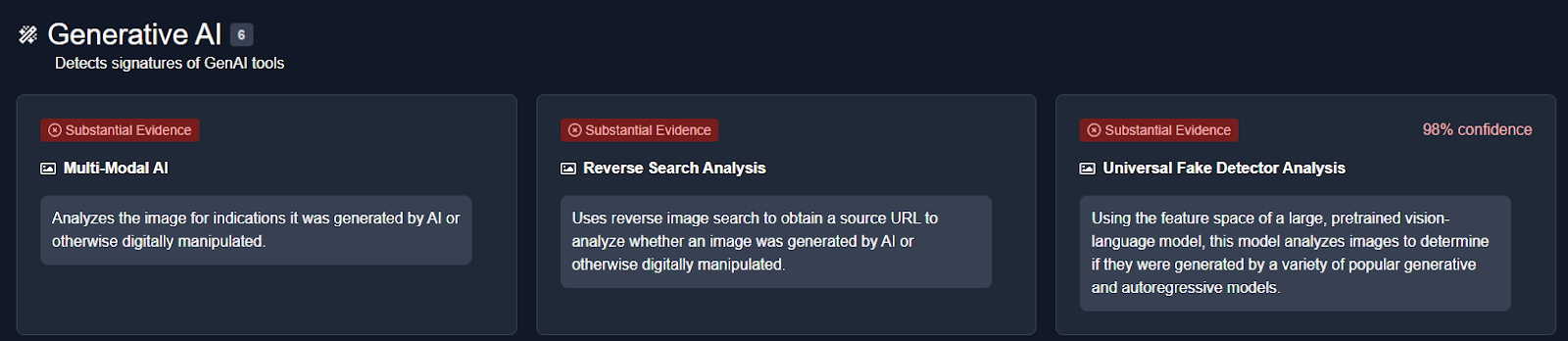

We then analysed the image using an AI detection tool named True Media. The tool indicated that the image was highly likely to have been generated by AI.

We also checked official UK police channels and news outlets for any records or reports of such an event. No credible sources reported or documented any instance of UK police officers bowing to a group of Muslims, further confirming that the image is not based on a real event.

Conclusion:

The viral image of UK police officers bowing to a group of Muslims is AI-generated. CyberPeace Research Team confirms that the picture was artificially created, and the viral claim is misleading and false.

- Claim: UK police officers were photographed bowing to a group of Muslims.

- Claimed on: X, Website

- Fact Check: Fake & Misleading

Related Blogs

Executive Summary:

A video clip being circulated on social media allegedly shows the Hon'ble President of India, Smt. Droupadi Murmu, the TV anchor Anjana Om Kashyap, and the Hon'ble Chief Minister of Uttar Pradesh, Shri Yogi Adityanath, promoting a medicine for diabetes. After a thorough investigation by the CyberPeace Research Team, the claim was found to be untrue. The video was digitally edited, with original footage of these prominent figures altered to falsely suggest that they endorse the medication. Specific discrepancies in the lip movements and in the context of the clips indicated AI manipulation. In their original footage, the persons featured in the video were discussing unrelated topics. The claim that the video shows such prominent figures endorsing a diabetes drug is therefore debunked. Our analysis concludes that the video is an AI creation and does not reflect any genuine promotion. AI voice detection tools also flagged the audio as AI-generated.

Claims:

A video making the rounds on social media purports to show the Hon'ble President of India, Smt. Droupadi Murmu, TV anchor Anjana Om Kashyap, and Hon'ble Chief Minister of Uttar Pradesh, Shri Yogi Adityanath, endorsing a diabetes medicine.

Fact Check:

Upon receiving the post, we watched the video carefully and found discrepancies between the lip movements and the words that can be heard. The voice of the Chief Minister of Uttar Pradesh, Shri Yogi Adityanath, also sounds suspicious, which points to fabrication. In the video, the Hon'ble President of India, Smt. Droupadi Murmu, can be heard endorsing a medicine that supposedly cured her diabetes. We then divided the video into keyframes and reverse-searched one of the frames. This led us to a video uploaded by Aaj Tak on its official YouTube channel.

In that video we found footage similar to the viral clip, with the courtesy credited to Sansad TV. Taking a cue from this, we ran keyword searches and found another video uploaded by the Sansad TV YouTube channel. That video contains no mention of any diabetes medicine. It is actually the swearing-in ceremony of the Hon'ble President of India, Smt. Droupadi Murmu.

In the second part of the viral video, a man addressed as Dr. Abhinash Mishra is said to have invented the medicine that cures diabetes. We reverse-searched the image of that person and landed on a CNBC news website where the same face was identified as Dr. Atul Gawande, a professor at Harvard School of Public Health. We watched that video and found no sign of him endorsing or discussing any diabetes medicine he had invented.

We also extracted the audio from the viral video and analyzed it using the AI audio detection tool named Eleven Labs, which found that the audio was very likely created using an AI voice generation tool, with a probability of 98%.

Hence, the claim made in the viral video is false and misleading. The video was digitally edited together from different clips, and the audio was generated using an AI voice creation tool to mislead netizens. It is worth noting that we have previously debunked similar voice-altered videos carrying bogus claims.

Conclusion:

In conclusion, the viral video claiming that the Hon'ble President of India, Smt. Droupadi Murmu, and the Chief Minister of Uttar Pradesh, Shri Yogi Adityanath, promoted a diabetes medicine that cured their diabetes is false. Our investigation found that the video was digitally edited together from different clips. The clip of the Hon'ble President of India, Smt. Droupadi Murmu, is taken from footage of the oath-taking ceremony of the 15th President of India, and the footage of the purported doctor, Abhinash Mishra, was found on a CNBC news outlet. The real name of that person is Dr. Atul Gawande, a professor at Harvard School of Public Health. Online users must be careful when they receive such posts and should verify them before sharing them with others.

Claim: A video is being circulated on social media claiming to show distinguished individuals promoting a particular medicine for diabetes treatment.

Claimed on: Facebook

Fact Check: Fake & Misleading

Executive Summary

A video circulating on social media claims that Iran’s new Supreme Leader Mojtaba Khamenei has passed away, with users attributing the claim to American sources. However, research by CyberPeace found the claim to be false. Our research confirms that Mojtaba Khamenei is alive and in good health.

Claim

A Facebook user shared the viral video, claiming that Iran’s new Supreme Leader Mojtaba Khamenei had died.

Fact Check

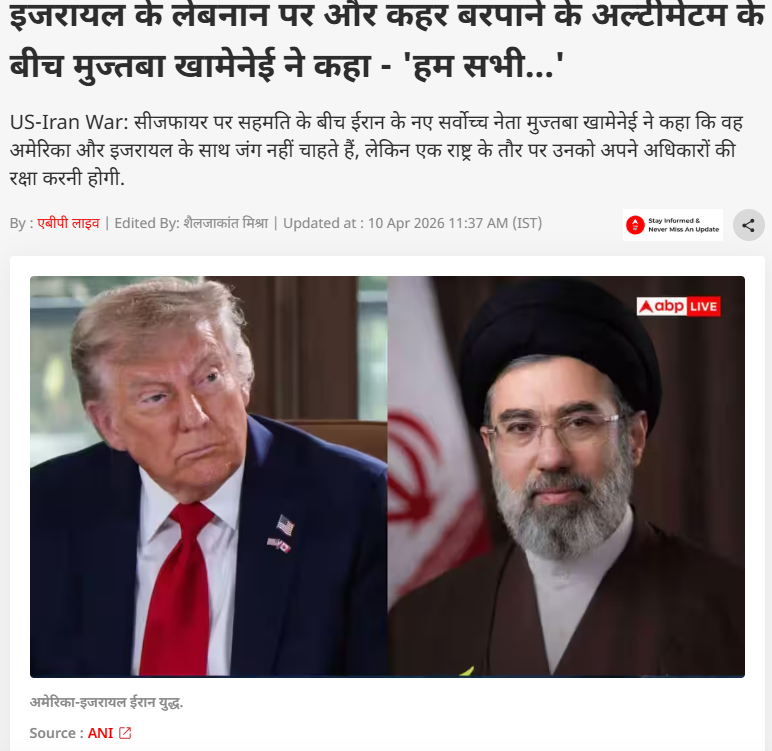

To verify the claim, we conducted keyword searches on Google but found no credible media reports confirming his death. Further research led us to a report published on April 10, 2026, by ABP News. According to the report, amid discussions around a ceasefire, Mojtaba Khamenei issued a statement saying that Iran does not seek war with the United States or Israel, but as a nation, it must defend its rights.

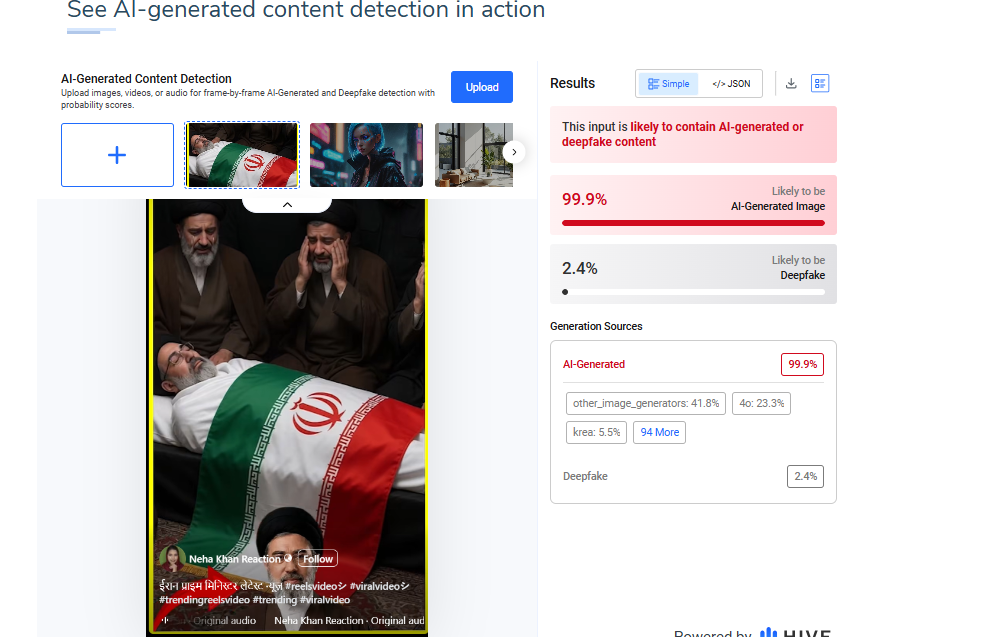

Additionally, the image used in the viral video was analyzed using the AI detection tool HIVE Moderation. The results indicated a 99% probability that the image is AI-generated.

Conclusion

The viral claim is false and misleading. There is no credible evidence to suggest that Mojtaba Khamenei has died. On the contrary, recent verified reports confirm that he is alive and has even issued public statements on ongoing geopolitical developments. The widespread circulation of this claim appears to be driven by misinformation, amplified through social media without verification. The use of AI-generated visuals further adds to the confusion, making the content appear authentic at first glance.


Introduction

The digital ecosystem has undergone a profound transformation due to the rapid growth of artificial intelligence, especially its generative applications. While this progress has introduced innovative technologies, it has also intensified the risks of deepfakes, misinformation, and identity theft. The Information Technology (Intermediary Guidelines and Digital Media Ethics Code) Amendment Rules, 2026, introduced by the Government of India, mark an important step toward stronger digital governance and greater oversight of online activities. These latest amendments establish new regulatory standards and represent India’s most comprehensive effort so far to address synthetically generated information, including AI-created audio, video, and images that closely imitate reality.

Understanding the Core Shift: From Reactive to Proactive Regulation

The defining characteristic of the 2026 amendment is its shift from a reactive compliance system to a proactive due diligence system. Intermediaries must now act as active participants who take responsibility for detecting, labelling, and controlling harmful material, rather than functioning as neutral channels. The rules establish an official definition of Synthetically Generated Information (SGI) and bring it under legal regulation, addressing issues such as impersonation scams, election manipulation, and non-consensual deepfake content. This transition reflects a worldwide pattern in which governments are starting to hold online platforms responsible for the material they host.

Key Provisions of the IT Amendment Rules, 2026

1. Mandatory Labelling of AI-Generated Content

Platforms must ensure that all AI-generated content is clearly labelled or watermarked to distinguish it from authentic media. Users must disclose when the content they upload is synthetic, and platforms must verify that disclosure.

2. The 3-Hour Takedown Rule

The most contentious aspect of the regulation is the much shorter timeframe it sets for content removal:

- Unlawful content must be removed within three hours of a government or court order.

- A two-hour deadline applies to media that includes non-consensual intimate imagery.

The three-hour window is a major reduction from the previous removal window of 24 to 36 hours, reflecting the urgency of addressing online misinformation.
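The deadline arithmetic described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the category names, the function names, and the choice to start the clock when the order is received are hypothetical and are not taken from the text of the rules.

```python
from datetime import datetime, timedelta, timezone

# Removal windows as described in this article. The category keys are
# hypothetical labels chosen for this sketch, not terms from the rules.
TAKEDOWN_WINDOWS = {
    "unlawful_content": timedelta(hours=3),
    "non_consensual_intimate_imagery": timedelta(hours=2),
}

# Upper end of the previous 24 to 36 hour window mentioned in the article.
PREVIOUS_WINDOW = timedelta(hours=36)


def takedown_deadline(received_at: datetime, category: str) -> datetime:
    """Return the latest removal time for a notice received at `received_at`.

    Assumption: the clock starts when the notice or order is received.
    """
    return received_at + TAKEDOWN_WINDOWS[category]


def is_overdue(received_at: datetime, category: str, now: datetime) -> bool:
    """True if `now` is past the removal deadline for this notice."""
    return now > takedown_deadline(received_at, category)


if __name__ == "__main__":
    received = datetime(2026, 2, 11, 9, 0, tzinfo=timezone.utc)
    print(takedown_deadline(received, "unlawful_content"))
    print(is_overdue(received, "non_consensual_intimate_imagery",
                     received + timedelta(hours=2, minutes=30)))
```

Using timezone-aware timestamps avoids ambiguity when an order and the platform's servers sit in different time zones.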

3. Traceability and Metadata Requirements

The rules require AI-generated content to carry embedded digital fingerprints and metadata, which enables traceability and accountability. The provision gives law enforcement an essential tool for investigating cases and helps identify who generated harmful content.

4. Safe Harbour Conditionality

Intermediaries that do not meet the following three conditions risk losing their safe harbour protection under Section 79 of the IT Act:

- Implementing proper labelling.

- Completing takedowns within the specified timeframes.

- Carrying out their due diligence obligations.

This development represents a major transition for digital platforms, which will face increased responsibility for their actions.

5. Strengthened Grievance Redressal

The amendment also requires platforms to maintain systems that operate at all times to monitor their compliance with the regulations.

Significance: Why These Rules Matter

The 2026 amendments are significant for multiple reasons:

- The rules require labelling and rapid content removal, which helps to stop the viral dissemination of misleading information.

- The framework provides better identity protection, defamation defence and protection against non-consensual imagery.

- The new rules make intermediaries responsible for their own compliance failures.

- The regulation of AI-generated misinformation protects democratic processes during electoral periods and public discussions.

The rules demonstrate India's goal to establish international standards for AI governance and digital responsibility.

Challenges and Concerns

Despite their positive aspects, the amendments raise key concerns:

- Rapid content removal creates risks for legitimate expression unless safeguards are carefully designed.

- The technical and infrastructural requirements of compliance create financial burdens for smaller intermediary platforms.

These challenges demonstrate the need for an approach that protects both human rights and security needs.

Conclusion

The IT Amendment Rules, 2026, mark a critical turning point in India's progress toward digital governance. The framework aims to create a more secure digital environment by addressing the transparency and accountability problems posed by AI-generated content and deepfakes. The rules will achieve their goals only through proper implementation, which requires swift enforcement mechanisms that protect both due process and free speech rights. As AI technology continues to develop, regulatory systems must keep evolving while remaining inclusive and upholding democratic principles.
