#FactCheck - Digitally Altered Video of Olympic Medalist Arshad Nadeem’s Independence Day Message

Executive Summary:

A video of Pakistani Olympic gold medalist and javelin thrower Arshad Nadeem wishing the people of Pakistan on Independence Day, with snoring audio in the background, has gone viral. The CyberPeace Research Team found that the viral video was digitally edited to add the snoring sound in the background. The original video, published on Arshad's Instagram account, has no snoring sound, so the viral claim is false and misleading.

Claims:

A video shows Pakistani Olympic gold medalist Arshad Nadeem wishing the people of Pakistan on Independence Day, with snoring audio in the background.

Fact Check:

Upon receiving the posts, we examined the video closely. We then analyzed it with TrueMedia, an AI video detection tool, which found little evidence of manipulation in either the voice or the face.

We then checked Arshad Nadeem's social media accounts and found the video uploaded on his Instagram account on 14 August 2024. No snoring sound can be heard in that video.

Hence, we conclude that the claims made about the viral video are fake and misleading.

Conclusion:

The viral video of Arshad Nadeem with a snoring sound in the background is false. The CyberPeace Research Team confirmed that the sound was digitally added: the original video on his Instagram account contains no snoring sound, which makes the viral claim misleading.

- Claim: A snoring sound can be heard in the background of Arshad Nadeem's video wishing Independence Day to the people of Pakistan.

- Claimed on: X

- Fact Check: Fake & Misleading

Related Blogs

Executive Summary

Prime Minister Narendra Modi recently appealed to citizens to reduce the consumption of gold and edible oil for a year. He also urged people to conserve petrol, diesel, and cooking gas amid the crisis arising from the ongoing tensions in West Asia involving the United States, Israel, and Iran. Against this backdrop, a purported image of Union Home Minister Amit Shah and his son Jay Shah, who currently serves as Chairman of the International Cricket Council, has gone viral on social media. The image shows them seated inside a chartered aircraft along with two other individuals. Several social media users shared the picture as genuine while targeting the central government and Amit Shah.

However, research by the CyberPeace Research Wing found that the viral image is fake and was generated using Artificial Intelligence (AI).

Claim

An Instagram user named “Om Prakash Shukla” shared the viral image on May 24, 2026, with the caption: “People are sacrificing their desires for the nation, while they are enjoying luxury.”

https://www.instagram.com/p/DYuFqnmCh7T

Fact Check

To verify the authenticity of the viral image, we conducted a Google Lens search. The image did not appear on any credible news platform, which raised doubts about its authenticity. We also performed keyword searches related to the image on Google Search but found no authentic reports or media coverage associated with the picture.

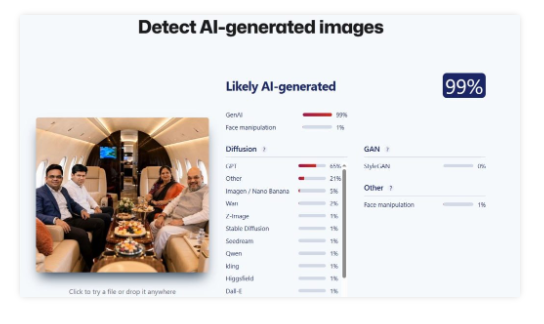

Further research involved analysing the image with AI detection tools. We first examined the picture using the “Sight Engine” AI detection tool, which indicated a 99 percent probability that the image was AI-generated.

We also tested the image on another AI detection platform called “TruthScan.” This tool likewise indicated a probability of around 97 percent that the image had been created using artificial intelligence.

Conclusion

The research found that the viral image featuring Union Home Minister Amit Shah and ICC Chairman Jay Shah inside a chartered aircraft was generated using AI tools, and the viral claim associated with it is false.

Introduction

In the boundless world of the internet—a digital frontier rife with both the promise of connectivity and the peril of deception—a new spectre stealthily traverses the electronic pathways, casting a shadow of fear and uncertainty. This insidious entity, cloaked in the mantle of supposed authority, preys upon the unsuspecting populace navigating the virtual expanse. And in the heart of India's vibrant tapestry of diverse cultures and ceaseless activity, Mumbai stands out—a sprawling metropolis of dreams and dynamism, yet also the stage for a chilling saga, a cyber charade of foul play and fraud.

The city's relentless buzz was punctuated by a harrowing tale from an unassuming residence in Kharghar, where a 46-year-old man had his own brush with this digital demon. His ordinary day took a sudden turn when an ominous pop-up lit up his laptop screen, filling his routine with shock and dread. The deceptive pop-up, masquerading as an official communication from the National Crime Records Bureau (NCRB), demanded an exorbitant fine of Rs 33,850 for supposedly browsing adult content, an offence he had not committed.

The Cyber Deception

This tale of deceit and psychological warfare is neither unique nor the first of its kind. It echoes a tragedy that unfolded in September 2023 far to the south, in the verdant land of Kerala, where a young life was cut short. A 17-year-old boy from Kozhikode, caught in the snare of similar fraudulent NCRB warnings, was driven to such despair that he took his own life after being pressured to pay Rs 30,000 for visiting an unauthorised website, as the pop-up falsely alleged.

Sewn with a seam of dread and finesse, the pop-up in the recent Navi Mumbai case reveals a virtual tapestry of psychological manipulation, woven with threatening threads designed to entrap and frighten. The message, which warned the Kharghar resident to pay a Rs 33,850 fine for surfing a porn website, came from a fake NCRB website created to dupe people. Claiming that the user has been browsing prohibited content, it delivers an ultimatum: pay the fine within six hours or face a criminal case. The remedy it offers is simple: settle the demanded amount and the shackles on the browser will be lifted.

It was amid this web of lies that the man from Kharghar found himself entangled. The story, as recounted by his brother, an IT professional, reveals how narrowly disaster was averted. His brother's panicked call, and the rush of relief upon realising it was a scam, underscore the ruthless efficiency of these cyber predators. They rely on sophisticated deception, even specifying convenient online payment methods to push their prey into swift compliance.

A glimmer of reason pierced the narrative as Yashasvi Yadav, special inspector general of the Maharashtra State cyber cell, exposed the fraudulent nature of such claims. With authoritative clarity, he explained that no legitimate government agency would demand fines in such an underhanded fashion. Official procedures instead involve FIRs or court trials, a structured route far removed from the scaremongering of these online hoaxes.

Expert Take

Concurring with this perspective, cyber experts dismissed such pop-ups as fraudulent facsimiles of official notices. By tapping into primal fears and conjuring up grave consequences, the fraudsters follow a time-worn strategy, cloaking their ill intentions in the guise of governmental or legal authority, a phantasm of legitimacy that prompts hasty financial decisions.

To pierce the veil of this deception, D. Sivanandhan, the former Mumbai police commissioner, categorically denounced the absurdity of the hoax. With a voice tinged by experience and authority, he made it abundantly clear that the NCRB's role did not encompass the imposition of fines without due process of law—a cornerstone of justice grossly misrepresented by the scam's premise.

New Lesson

This scam, a devilish masquerade given weight by deceit, may present itself as something novel, but its underpinnings are far from new. The manufactured pop-ups that spread across corners of the internet issue fabricated pronouncements, feign browser lockdowns, and raise the spectre of being implicated in taboo behaviour. The elaborate ruse doesn't stop at mere declarations; it painstakingly fabricates a semblance of procedural legitimacy by setting penalties in advance and detailing methods for immediate payment.

Yet another dimension of the scam further bolsters the illusion: the ominous ticking clock set for payment, which lends the fraud an urgency that can disorient victims and push them towards rash action. With a spurious 'Payment Details' section, complete with options to pay through widely accepted card networks such as Visa or MasterCard, the sham dangles the false promise of restored access, should the victim acquiesce to its demands.

Conclusion

In an era where the line between illusion and reality is nebulous, individual vigilance and scepticism are ever more critical. The collective consciousness, the shared responsibility we hold as inhabitants of the digital domain, is paramount if we are to resist fear-inducing claims and dispel the shadows cast by digital deception. It is only through informed caution, critical scrutiny, and a steadfast refusal to capitulate to intimidation that we may unmask these virtual masquerades and safeguard the integrity of our digital existence.

References:

- https://www.onmanorama.com/news/kerala/2023/09/29/kozhikode-boy-dies-by-suicide-after-online-fraud-threatens-him-for-visiting-unauthorised-website.html

- https://timesofindia.indiatimes.com/pay-rs-33-8k-fine-for-surfing-porn-warns-fake-ncrb-pop-up-on-screen/articleshow/106610006.cms

- https://www.indiatoday.in/technology/news/story/people-who-watch-porn-receiving-a-warning-pop-up-do-not-pay-it-is-a-scam-1903829-2022-01-24

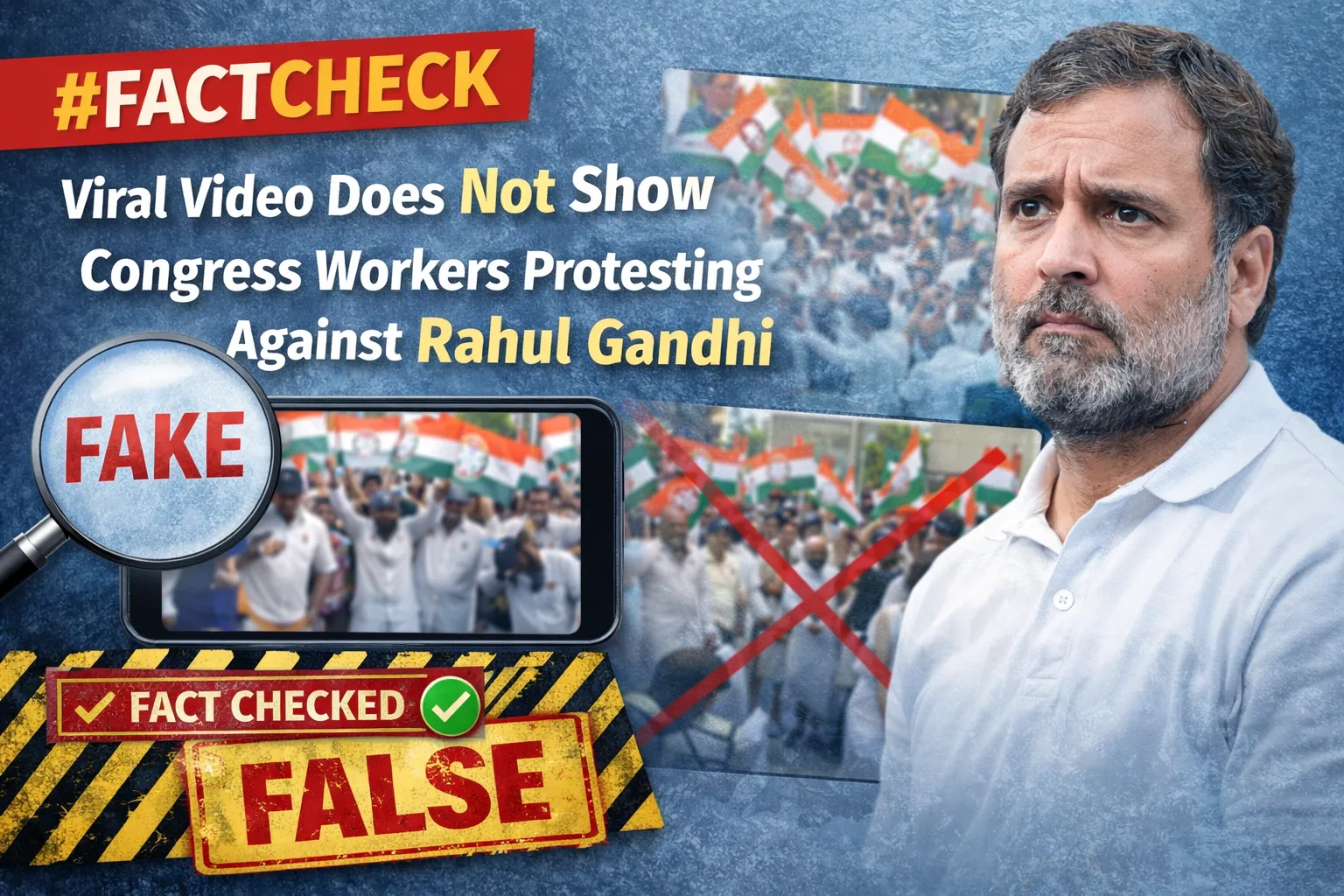

Executive Summary:

A video circulating on social media shows a group of people tearing Congress posters and raising controversial slogans. The clip is being shared with the claim that the individuals seen in the video are Congress party workers who were protesting against Rahul Gandhi and raising slogans against him. However, research by CyberPeace found the viral claim to be misleading. Our research revealed that the video dates back to February 21, 2026. On that day, members of the Bharatiya Janata Yuva Morcha (BJYM) staged a protest outside a Congress office, during which they raised slogans and tore Congress posters. The same video is now being circulated with a false narrative.

Claim

On February 24, 2026, a Facebook user shared the viral video with the caption: “Rebellion against Rahul Gandhi in Congress’ own stronghold! Party workers themselves tore posters and raised slogans — ‘Rahul Gandhi is a thief… a thief!’ This video exposes the internal truth of Congress. Congress itself is Muslim League.”

Fact Check

To verify the claim, we extracted key frames from the viral video and conducted a reverse image search using Google Lens. During the search, we found the same video uploaded on YouTube on February 21, 2026.

According to the description accompanying the video, BJP workers had staged a protest outside a Congress building. The description mentioned vandalism and stone-pelting during the protest, which left several people injured.

- https://www.youtube.com/watch?v=pW-13mSvJ2c

Using this lead, we conducted a keyword search on Google and found a report published on February 21, 2026, by the Hindi news website Raj Express. The visuals in the report closely matched those seen in the viral clip.

According to the report, the protest in Bhopal was organized by the Bharatiya Janata Yuva Morcha in response to a T-shirt protest staged by the Youth Congress during an AI Summit held at Bharat Mandapam in New Delhi. The situation escalated when protesters marched toward the state Congress office in Shivaji Nagar. Police attempted to disperse the crowd using water cannons, but some protesters reportedly entered the Congress office premises, leading to tension.

Further, we found the same viral video on the official Facebook page of Indian National Congress - Madhya Pradesh, where it was posted on February 26, 2026. In the post, the Congress unit alleged that BJYM workers and BJP-affiliated individuals had entered the Congress office, vandalized property, and created chaos in the presence of police officials.

Conclusion

Our research found that the viral claim is misleading. The video is from February 21, 2026, when BJYM workers protested outside a Congress office and engaged in vandalism. The footage is now being falsely shared as evidence of an internal rebellion by Congress workers against Rahul Gandhi.