#FactCheck: AI-Generated War Video Falsely Linked to Israel-Iran Tensions Goes Viral

Executive Summary

A video is being widely shared on social media with claims linking it to the ongoing tensions between Israel and Iran. The clip shows multiple fighter jets flying across the sky, while massive flames appear to be rising from tall buildings below. The visuals are dramatic and alarming, creating the impression of a large-scale military strike. Users sharing the video claim that after Israel carried out an attack, Iran launched a retaliatory strike on Israel, and that the viral footage captures the aftermath of this counterattack. However, research conducted by CyberPeace found the claim to be misleading. Our research revealed that the viral video is not authentic but AI-generated.

Claim

On the social media platform Facebook, a user shared the viral video with the caption: “Iran has also carried out a retaliatory attack on Israel.”

(Post link and archive link provided above.)

Factcheck

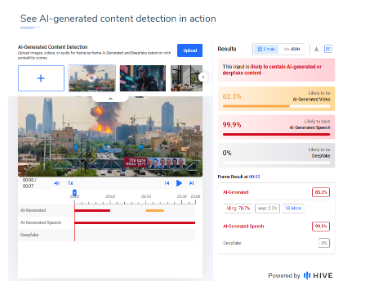

Upon closely examining the video, we noticed several irregularities in the visuals and motion patterns, which raised suspicion that the footage may have been generated using artificial intelligence. To verify this, we analyzed the video using the AI detection tool developed by Hive Moderation. According to the analysis report, there is a 62 percent likelihood that the viral video is AI-generated.

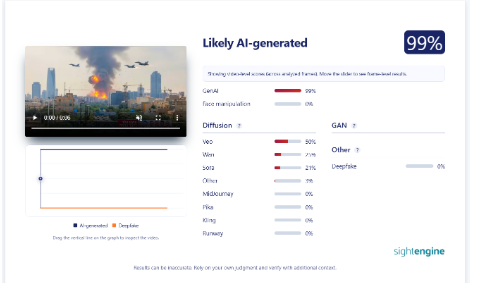

As part of further verification, we also scanned the video using Sightengine. The results indicated an even stronger probability, suggesting a 99 percent likelihood that the video is AI-generated.
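The two readings above can be viewed side by side as independent signals. The following is a minimal sketch of that comparison, not the workflow CyberPeace used: the function name `summarize_detections` and the 0.5 flagging threshold are assumptions made for illustration, and the code does not call the Hive Moderation or Sightengine services. It only takes the reported percentages as inputs.

```python
from statistics import mean


def summarize_detections(scores, threshold=0.5):
    """Summarize AI-likelihood scores reported by several detection tools.

    scores: dict mapping a tool name to a probability in [0, 1].
    threshold: hypothetical cut-off above which a tool counts as flagging the media.
    """
    if not scores:
        raise ValueError("at least one detector score is required")
    for name, probability in scores.items():
        if not 0.0 <= probability <= 1.0:
            raise ValueError(f"score for {name} must be between 0 and 1")

    # Tools whose score meets or exceeds the (assumed) threshold.
    flagged_by = sorted(name for name, p in scores.items() if p >= threshold)
    return {
        "mean_score": mean(scores.values()),
        "min_score": min(scores.values()),
        "flagged_by": flagged_by,
        "all_agree": len(flagged_by) == len(scores),
    }


# Scores as reported in this fact check (62 percent and 99 percent).
report = summarize_detections({"Hive Moderation": 0.62, "Sightengine": 0.99})
print(report)
```

Reporting the lowest score alongside the average keeps a weaker reading, such as the 62 percent result, visible instead of letting a stronger one mask it.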

Conclusion

Our research confirms that the viral video does not depict a real military attack. It is AI-generated content being falsely shared in the context of Israel-Iran tensions.

Related Blogs

Executive Summary:

A picture circulating on social media that claims to show a boy making sand art of Indian cricketer Virat Kohli is false. The picture does not show real sand art. Analysis using AI detection tools such as 'Hive' and ‘Content at Scale AI detection’ confirms that the images are entirely generated by artificial intelligence. Netizens are sharing these pictures on social media without knowing that they are computer-generated using deepfake techniques.

Claims:

The collage of beautiful pictures displays a young boy creating sand art of Indian Cricketer Virat Kohli.

Fact Check:

When we examined the posts, we found anomalies in each photo of the kind that are common in AI-generated images.

The anomalies include the abnormal shape of the child’s feet, a logo blended into the sand color in the second image, and the misspelling ‘spoot’ instead of ‘sport’. The cricket bat is perfectly straight, which is odd for a portrait made of sand. In one photo the child's left hand bears a tattoo, while in the other photos it has none. Additionally, the face of the boy in the second image does not match the face in the other images. These made us more suspicious that the images were synthetic media.

We then ran the images through an AI-generated image detection tool named ‘Hive’, which rated them as 99.99% likely to be AI-generated. We also checked them with another detection tool named ‘Content at Scale’.

Hence, we conclude that the viral collage of images is AI-generated and is not sand art made by any child. The claim is false and misleading.

Conclusion:

In conclusion, the claim that the pictures show sand art of Indian cricket star Virat Kohli made by a child is false. Based on an AI detection tool and our analysis of the photos, it appears that they were probably created with an AI image-generation tool rather than by a real sand artist. Therefore, the images do not accurately represent the alleged claim and creator.

Claim: A young boy has created sand art of Indian Cricketer Virat Kohli

Claimed on: X, Facebook, Instagram

Fact Check: Fake & Misleading

What is Juice Jacking?

We all use different devices during the day, but they converge on a common point when the battery runs out: the cables and adaptors we use to charge them are daily necessities for everyone. These cables and adaptors connect to the only port on the phone and hence can be used for juice-jacking attacks. Juice jacking is when someone installs malware or spyware on your device through an unknown charging port or cable.

How does juice jacking work?

We all use phones and gadgets, like iPhones, Android devices, and smartwatches, to simplify our lives. One thing they have in common is the charging cable or USB port, where data and power pass through the same port or cable.

This is potentially a problem with devastating consequences. When your phone connects to another device, it pairs with it (ports/cables) and establishes a trusted relationship. That means the devices can exchange data. During the charging process, the USB cord opens a path into your device that a cybercriminal can exploit.

On most phones, data transfer is disabled by default, and only the power connection is exposed to the other end. For example, in the latest models, when you plug your device into a new port or a computer, a prompt pops up asking whether the device is trusted. In a juice-jacking attack, however, the device owner cannot see what the USB port is connected to. So, if you plug in your phone and someone is monitoring the other end, they may be able to transfer data between your device and theirs, leading to a data breach.

A leading airline was recently hacked into, which caused delayed flights across the country. When investigated, it was found that malware was planted in the system by using a USB port, which allowed the hackers access to critical data to launch their malware attack.

FBI’s Advisory

The Federal Bureau of Investigation (FBI) and other law enforcement agencies have been very critical of cybercriminals. Inter-agency cooperation has improved the pace of investigation and the chances of apprehending criminals. In a tweet, the FBI addressed the issue of juice jacking and pinpointed public places such as airports, railway stations, and shopping malls as locations where such attacks have been seen and reported. These places offer easy access to charging points for various devices, which are the main targets for bad actors. The FBI advises people not to use the charging points and cables at airports, railway stations, and hotels, and emphasises the importance of carrying your own cable and charger.

Tips to protect yourself from juice jacking

There are a few simple and effective tips to keep your smart devices smart, such as:

- Avoid using public charging stations: The best way to protect yourself and your devices is to avoid public charging stations. It’s always a good habit to charge your phone in your car, at home, or at the office when it is not in use.

- Using a wall outlet is a safer option: If you urgently need to use a public station, try to use a wall outlet with your own charger rather than a charging pole, because data cannot easily be transferred through a power outlet.

- Use other methods/modes of charging: If you are travelling, carrying a power bank is always safe, as it is easy to carry.

- Software security: It’s always advised to update your phone’s software regularly. Once connected to the charging station, lock your device. This will prevent it from syncing or transferring data.

- Enable Airplane mode while charging: If you need to charge your phone from an unknown source in a public area, it is advisable to put the phone on airplane mode or switch it off to prevent anyone from gaining access to your device through any open network.

However, many mobile phones (including iPhones) turn on automatically when connected to power, so your mileage may vary. Switching the phone off is an effective safeguard only if your phone does not turn on automatically when connected to power.

Conclusion

At present, juice jacking is not the most common type of attack, but the number of occurrences is expected to rise as smartphone usage and penetration grow across the globe. Our cyber safety and security are in our hands, and hence protecting them is our paramount digital duty. Always remember that we may see no harm in charging ports, but that doesn’t mean the possibility of a threat can be ruled out completely. With the increased use of ports for charging, earphones, and data transfer, such crimes will continue and evolve with time. Thus, it is essential to counter these attacks by sharing knowledge and awareness of such crimes and reporting them to competent authorities to eradicate the menace of cybercriminals from our digital ecosystem.


Introduction

YouTube is testing a new feature called ‘Notes,’ which allows users to add community-sourced context to videos. The feature allows users to clarify if a video is a parody or if it is misrepresenting information. The feature builds on existing features to provide helpful content alongside videos. Currently under testing, the feature will be available to a limited number of eligible contributors who will be invited to write notes on videos. These notes will appear publicly under a video if they are found to be broadly helpful. Viewers will be able to rate notes into three categories: ‘Helpful,’ ‘Somewhat helpful,’ or ‘Unhelpful’. Based on the ratings, YouTube will determine which notes are published. The feature will first be rolled out on mobile devices in the U.S. in English. The Google-owned platform will look at ways to improve the feature over time, including whether it makes sense to expand it to other markets.

YouTube To Roll Out The New ‘Notes’ Feature

YouTube is testing an experimental feature that allows users to add notes to provide relevant, timely, and easy-to-understand context for videos. This initiative builds on previous products that display helpful information alongside videos, such as information panels and disclosure requirements when content is altered or synthetic. YouTube in its blog clarified that the pilot will be available on mobiles in the U.S. and in the English language, to start with. During this test phase, viewers, participants, and creators are invited to give feedback on the quality of the notes.

YouTube further stated in its blog that a limited number of eligible contributors will be invited via email or Creator Studio notifications to write notes so that they can test the feature and add value to the system before the organisation decides on next steps and whether or not to expand the feature. Eligibility criteria include having an active YouTube channel in good standing with YouTube’s Community Guidelines.

Viewers in the U.S. will start seeing notes on videos in the coming weeks and months. In this initial pilot, third-party evaluators will rate the helpfulness of notes, which will help train the platform’s systems. As the pilot moves forward, contributors themselves will rate notes as well.

Notes will appear publicly under a video if they are found to be broadly helpful. People will be asked whether they think a note is helpful, somewhat helpful, or unhelpful and the reasons for the same. For example, if a note is marked as ‘Helpful,’ the evaluator will have the opportunity to specify if it is so because it cites high-quality sources or is written clearly and neutrally. A bridging-based algorithm will be used to consider these ratings and determine what notes are published. YouTube is excited to explore new ways to make context-setting even more relevant, dynamic, and unique to the videos we are watching, at scale, across the huge variety of content on YouTube.
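The idea behind a bridging-based approach can be illustrated with a small sketch. YouTube has not published the details of its algorithm, so everything here is an assumption made for illustration: the explicit perspective groups, the numeric values given to the three rating labels, the thresholds, and the function name `should_publish`. A production system would more likely infer perspectives from rating history than rely on labelled groups. The sketch publishes a note only when raters from every perspective group find it helpful on average, rather than when the overall average is high.

```python
from collections import defaultdict

# Hypothetical numeric values for the three rating labels described above.
RATING_VALUE = {"Helpful": 1.0, "Somewhat helpful": 0.5, "Unhelpful": 0.0}


def should_publish(ratings, min_group_score=0.6, min_groups=2):
    """Decide whether a note is 'broadly helpful' across perspectives.

    ratings: list of (perspective_group, rating_label) tuples.
    min_group_score: assumed minimum average score required in every group.
    min_groups: assumed minimum number of distinct groups that must have rated.
    """
    scores_by_group = defaultdict(list)
    for group, label in ratings:
        scores_by_group[group].append(RATING_VALUE[label])

    # A note rated by a single perspective cannot demonstrate broad agreement.
    if len(scores_by_group) < min_groups:
        return False

    # Every group, not just the overall average, must find the note helpful.
    return all(
        sum(values) / len(values) >= min_group_score
        for values in scores_by_group.values()
    )


bridged = [("A", "Helpful"), ("A", "Somewhat helpful"),
           ("B", "Helpful"), ("B", "Helpful")]
one_sided = [("A", "Helpful"), ("A", "Helpful"), ("A", "Helpful"),
             ("B", "Unhelpful"), ("B", "Somewhat helpful")]

print(should_publish(bridged))
print(should_publish(one_sided))
```

In the second example the overall average rating is 0.7, which a plain average would accept, but the note is held back because group B does not find it helpful. That gap between popularity and cross-group agreement is what a bridging approach is meant to capture.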

CyberPeace Analysis: How Can Notes Help Counter Misinformation

The potential effectiveness of countering misinformation on YouTube using the proposed ‘Notes’ feature is significant. Enabling contributors to include notes on videos can offer relevant and accurate context to clarify any misleading or false information in the video. These notes can aid in enhancing viewers' comprehension of the content and detecting misinformation. The participation from users to rate the added notes as helpful, somewhat helpful, and unhelpful adds a heightened layer of transparency and public participation in identifying the accuracy of the content.

As YouTube intends to gather feedback from its various stakeholders to improve the feature, one can look forward to improved policy and practice over time: the feedback mechanism will allow for continuous refinement of the feature, ensuring it effectively addresses misinformation. The platform employs algorithms to identify helpful notes that cater to a broad audience across different perspectives. This helps showcase accurate information and combat misinformation.

Furthermore, along with the Notes feature, YouTube should explore and implement prebunking and debunking strategies on the platform by promoting educational content and empowering users to discern between fact and any misleading information.

Conclusion

The new feature, currently in the testing phase, aims to counter misinformation by providing context, enabling user feedback, leveraging algorithms, promoting transparency, and continuously improving information quality. Considering the diverse audience on the platform and high volumes of daily content consumption, it is important for both the platform operators and users to engage with factual, verifiable information. The fallout of misinformation on such a popular platform can be immense, and so, any mechanism or feature that can help counter the same must be developed to its full potential. Apart from this new Notes feature, YouTube has also implemented certain measures in the past to counter misinformation, such as providing authenticated sources to counter any election misinformation during the recent 2024 elections in India. These efforts are a welcome contribution to our shared responsibility as netizens to create a trustworthy, factual and truly-informational digital ecosystem.

References:

- https://blog.youtube/news-and-events/new-ways-to-offer-viewers-more-context/

- https://www.thehindu.com/sci-tech/technology/internet/youtube-tests-feature-that-will-let-users-add-context-to-videos/article68302933.ece