#FactCheck - AI-Manipulated Clip Misrepresents PM Modi’s Remarks on Iran-Israel Conflict

Executive Summary

Amid the ongoing conflict involving the US, Israel, and Iran, a video of Indian Prime Minister Narendra Modi is being widely circulated on social media. In the clip, he is allegedly heard supporting Israel and calling Iran a “terrorist state.” The video also appears to show him speaking about the idea of “Akhand Bharat.” Many users are sharing this video as genuine. However, research by CyberPeace found that the claim is false. The viral video is a deepfake created using AI technology.

Claim:

A Facebook page named “Pushpendra Kulshreshtha” shared the video on March 23, 2026, with a caption suggesting that PM Modi made strong remarks in support of Israel and against Iran.

Fact Check:

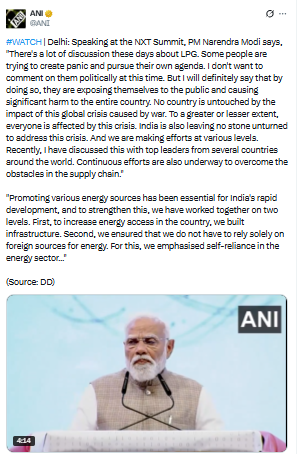

To verify the claim, we first conducted a keyword search to find any credible reports or official statements where PM Modi made such remarks. However, no reliable news reports or authentic videos supporting the claim were found. We then extracted keyframes from the viral video and performed a reverse image search using Google Lens. This led us to the original video posted on the X (formerly Twitter) handle of ANI on March 12, 2026.

The visuals, including PM Modi’s attire and the stage setup, matched the viral clip, indicating that the fake video was created using this original footage. However, in the authentic video, PM Modi did not make any of the statements about Iran, Israel, or “Akhand Bharat” heard in the viral version. In the original footage, PM Modi is seen addressing the NXT Summit in Delhi, where he spoke about the global energy crisis arising from ongoing conflicts and highlighted the expansion of LPG and PNG facilities in India. Additionally, a customised keyword search led us to a press release issued by the Prime Minister's Office regarding his address at the summit. The statements heard in the viral clip do not appear there either.

Conclusion:

The viral video of PM Modi is a deepfake. He did not make any statement calling Iran a “terrorist state” or expressing support for Israel in the manner shown. The original video is from a summit held in Delhi and has been manipulated using AI to spread misleading claims.