#FactCheck – Elephant Falls From Truck? No, This Elephant Fall Video Is AI-Manipulated

Executive Summary:

A video circulating on social media claims to show a live elephant falling from a moving truck due to improper transportation, followed by the animal quickly standing up and reacting on a public road. The content may raise concerns related to animal cruelty, public safety, and improper transport practices. A detailed examination using AI content detection tools, visual anomaly analysis indicates that the video is not authentic and is likely AI generated or digitally manipulated.

Claim:

The viral video (archive link) shows a disturbing scene where a large elephant is allegedly being transported in an open blue truck with barriers for support. As the truck moves along the road, the elephant shifts its weight and the weak side barrier breaks. This causes the elephant to fall onto the road, where it lands heavily on its side. Shortly after, the animal is seen getting back on its feet and reacting in distress, facing the vehicle that is recording the incident. The footage may raise serious concerns about safety, as elephants are normally transported in reinforced containers, and such an incident on a public road could endanger both the animal and people nearby.

Fact Check:

After receiving the video, we closely examined the visuals and noticed some inconsistencies that raised doubts about its authenticity. In particular, the elephant is seen recovering and standing up unnaturally quickly after a severe fall, which does not align with realistic animal behavior or physical response to such impact.

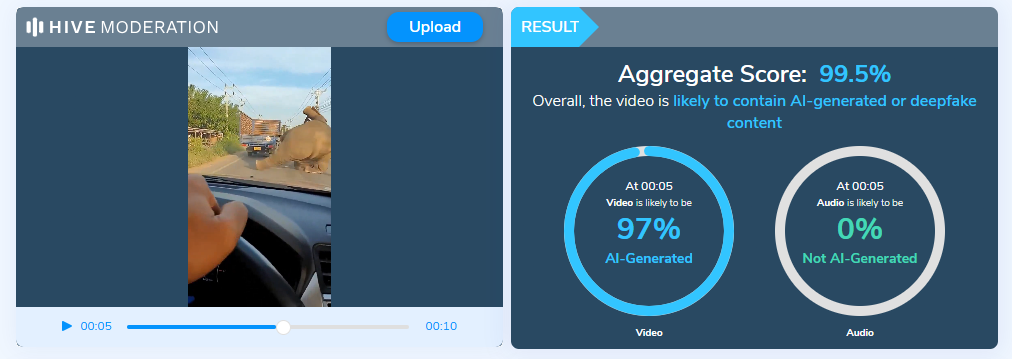

To further verify our observations, the video was analyzed using the Hive Moderation AI Detection tool, which indicated that the content is likely AI generated or digitally manipulated.

Additionally, no credible news reports or official sources were found to corroborate the incident, reinforcing the conclusion that the video is misleading.

Conclusion:

The claim that the video shows a real elephant transport accident is false and misleading. Based on AI detection results, observable visual anomalies, and the absence of credible reporting, the video is highly likely to be AI generated or digitally manipulated. Viewers are advised to exercise caution and verify such sensational content through trusted and authoritative sources before sharing.

- Claim: The viral video shows an elephant allegedly being transported, where a barrier breaks as it moves, causing the animal to fall onto the road before quickly getting back on its feet.

- Claimed On: X (Formally Twitter)

- Fact Check: False and Misleading

Related Blogs

.webp)

Executive Summary:

On July 4, 2024, a giant password dump, “RockYou2024” was posted on a cybercrime marketplace containing 9,948,575,739 plain-text credentials. This blog explains the technical aspects of this leakage and its consequences in the sphere of information security.

RockYou2024 is a list of passwords obtained from different data breaches ranging over the course of more than twenty years. It integrates older passwords with the lexical database with the additional passwords from the recent hacks, thereby, cumulating the database of genuine and existing passwords. The compilation is said to contain data from more than 4,000 databases putting the tool in the hands of potential attackers. RockYou owns the name to this type of attack since a data breach attacked a social media company named , “RockYou'' and released 3.2 million users’ passwords as a .txt file. Since then, the term gained a common meaning connected with mass password data breaches.

Technical Implications:

- Credential Stuffing Attacks: The RockYou2024 list comprises a great number of actual passwords that increases the likelihood of credential stuffing attacks. With this, the attackers help themselves with an opportunity to try to gain unlawful access into several online accounts that a user may have, particularly ones where an individual re-uses the same password.

- Brute-Force Attacks: The collection is extensive for brute force attack on systems that have no protection against such exercise. This is especially the case for devices and services that are exposed to the internet and which may use either weak or factory-set alphanumeric codes.

- Password Cracking: Web compilations that include such lists are often employed by security specialists and penetration testers who use John the Ripper or Hashcat to check the password’s strength or the system’s susceptibility to attacks.

- Machine Learning Models: The dataset could be used to create machine learning models for password prediction or analysis, which would only lead to further better methods to be used in the attacks.

Countermeasures / Mitigation:

Below are the technical risk/process operating proposed to reduce the risks associated with RockYou2024:

- Password Hashing: It is necessary to ensure that all the passwords required to be saved should be encrypted in one of the most secure algorithms like bcrypt, Argon2, or PBKDF2 along with a reasonable number of iterations.

- Salt and Pepper: The features for both salting and peppering should also be enabled to complicate the cracking of passwords even after the hashed password databases have been procured.

- Multi-Factor Authentication (MFA): Ensure the usage of complex passwords in addition to deploying MFA across all the technological systems and services within the company.

- Password Strength Policies: Adhere to password policies for features like the length, strength of the passwords and the change in password frequency.

- Rate Limiting and Account Lockouts: Inactivity methods must be used on consecutive attempts to log in and to the temporary lock out after so many attempts in a bid to discourage brute force attacks.

- Monitoring and Alerting: There should be measures in place to monitor for any violations such as login tappings or a form of credential stuffings and there should be alerts, where securities risks are likely to arise, in real time.

- API Security: The following proper API security measures that will result in the prevention of the following attacks; rate limiting, input validation, and token.

- Web Application Firewalls (WAF): To defend against threats from the internet for potential credential stuffing or brute-forcing the authentication process, utilize WAFs to operate at the application layer.

Analyzing the Impact:

To understand the potential impact of RockYou2024, organizations should assess the possible effects of RockYou2024, such as:

- Conduct Password Audits: LeakYou2024 scan current passwords database with RockYou2024 (in ethical and safe methods) and see which accounts have been compromised.

- Implement Continuous Monitoring: If this is a monthly or weekly event then there must be new information on data breaches and act on it concerning new security changes.

- Educate Users: Continued security consciousness training, regarding the effective protection of an individual’s password in combination with a password generator.

- Perform Penetration Testing: It is suggested to conduct penetration testing at least twice a year to find out if there are vulnerabilities in the systems and applications in the current use.

Conclusion:

The RockYou2024 leaked password database is a serious security risk; it contains almost 10 billion account credentials. This unprecedented leak further increases the exposure to credential stuffing, brute force and password cracking attacks. To deal with these threats, organizations need to have measures that include password hashing, multi-factor authentication, password strengthening and password audit. Patching, user awareness, bandit activities are imperative to prevent future invasions and strengthen the cyber security posture.

References :

- https://statanalytica.com/blog/rockyou-2024-txt-password/

- https://dig.watch/updates/rockyou2024-password-leak-exposes-nearly-10-billion-unique-passwords

- https://complexdiscovery.com/rockyou2024-leak-nearly-10-billion-passwords-exposed-heightening-cybersecurity-risks-for-businesses/

Introduction

Phone farms refer to setups or systems using multiple phones collectively. Phone farms are often for deceptive purposes, to create repeated actions in high numbers quickly, or to achieve goals. These can include faking popularity through increasing views, likes, and comments and growing the number of followers. It can also include creating the illusion of legitimate activity through actions like automatic app downloads, ad views, clicks, registrations, installations and in-app engagement.

A phone farm is a network where cybercriminals exploit mobile incentive programs by using multiple phones to perform the same actions repeatedly. This can lead to misattributions and increased marketing spends. Phone farming involves exploiting paid-to-watch apps or other incentive-based programs over dozens of phones to increase the total amount earned. It can also be applied to operations that orchestrate dozens or hundreds of phones to create a certain outcome, such as improving restaurant ratings or App Store Optimization(ASO). Companies constantly update their platforms to combat phone farming, but it is nearly impossible to prevent people from exploiting such services for their own benefit.

How Do Phone Farms Work?

Phone farms are a collection of connected smartphones or mobile devices used for automated tasks, often remotely controlled by software programs. These devices are often used for advertising, monetization, and artificially inflating app ratings or social media engagement. The software used in phone farms is typically a bot or script that interacts with the operating system and installed apps. The phone farm operator connects the devices to the Internet via wired or wireless networks, VPNs, or other remote access software. Once the software is installed, the operator can use a web-based interface or command-line tool to schedule and monitor tasks, setting specific schedules or monitoring device status for proper operation.

Modus Operandi Behind Phone Farms

Phone farms have gained popularity due to the growing popularity and scope of the Internet and the presence of bots. Phone farmers use multiple phones simultaneously to perform illegitimate activity and mimic high numbers. The applications can range from ‘watching’ movie trailers and clicking on ads to giving fake ratings and creating false engagements. When phone farms drive up ‘engagement actions’ on social media through numerous likes and post shares, they help perpetuate a false narrative. Through phone click farms, bad actors also earn on each ad or video watched. Phone farmers claim to use this as a side hustle, as a means of making more money. Click farms can be modeled as companies providing digital engagement services or as individual corporations to multiply clicks for various objectives. They are operated on a much larger scale, with thousands of employees and billions of daily clicks, impressions, and engagements.

The Legality of Phone Farms

The question about the legality of phone farms presents a conundrum. It is notable that phone farms are also used for legitimate application in software development and market research, enabling developers to test applications across various devices and operating systems simultaneously. However, they are typically employed for more dubious purposes, such as social media manipulation, generatiing fake clicks on online ads, spamming, spreading misinformation, and facilitating cyberattacks, and such use cases classify as illegal and unethical behaviour.

The use of the technology to misrepresent information for nefarious intents is illegitimate and unethical. Phone farms are famed for violating the terms of the apps they use to make money by simulating clicks, creating multiple fake accounts and other activities through multiple phones, which can be illegal.

Furthermore, should any entity misrepresent its image/product/services through fake reviews/ratings obtained through bots and phone farms and create deliberately-false impressions for consumers, it is to be considered an unfair trade practice and may attract liabilities.

CyberPeace Policy Recommendations

CyberPeace advocates for truthful and responsible consumption of technology and the Internet. Businesses are encouraged to refrain from using such unethical methods to gain a business advantage and mimic fake popularity online. Businesses must be mindful to avoid any actions that may misrepresent information and/ or cause injury to consumers, including online users. The ethical implications of phone farms cannot be ignored, as they can erode public trust in digital platforms and contribute to a climate of online deception. Law enforcement agencies and regulators are encouraged to keep a check on any illegal use of mobile devices by cybercriminals to commit cyber crimes. Tech and social media platforms must implement monitoring and detection systems to analyse any unusual behaviour/activity on their platforms, looking for suspicious bot activity or phone farming groups. To stay protected from sophisticated threats and to ensure a secure online experience, netizens are encouraged to follow cybersecurity best practices and verify all information from authentic sources.

Final Words

Phone farms have the ability to generate massive amounts of social media interactions, capable of performing repetitive tasks such as clicking, scrolling, downloading, and more in very high volumes in very short periods of time. The potential for misuse of phone farms is higher than the legitimate uses they can be put to. As technology continues to evolve, the challenge lies in finding a balance between innovation and ethical use, ensuring that technology is harnessed responsibly.

References

- https://www.branch.io/glossary/phone-farm/

- https://clickpatrol.com/phone-farms/

- https://www.airbridge.io/glossary/phone-farms#:~:text=A%20phone%20farm%20is%20a,monitor%20the%20tasks%20being%20performed

- https://innovation-village.com/phone-farms-exposed-the-sneaky-tech-behind-fake-likes-clicks-and-more/

Introduction

An age of unprecedented problems has been brought about by the constantly changing technological world, and misuse of deepfake technology has become a reason for concern which has also been discussed by the Indian Judiciary. Supreme Court has expressed concerns about the consequences of this quickly developing technology, citing a variety of issues from security hazards to privacy violations to the spread of disinformation. In general, misuse of deepfake technology is particularly dangerous since it may fool even the sharpest eye because they are almost identical to the actual thing.

SC judge expressed Concerns: A Complex Issue

During a recent speech, Supreme Court Justice Hima Kohli emphasized the various issues that deepfakes present. She conveyed grave concerns about the possibility of invasions of privacy, the dissemination of false information, and the emergence of security threats. The ability of deepfakes to be created so convincingly that they seem to come from reliable sources is especially concerning as it increases the potential harm that may be done by misleading information.

Gender-Based Harassment Enhanced

In this internet era, there is a concerning chance that harassment based on gender will become more severe, as Justice Kohli noted. She pointed out that internet platforms may develop into epicentres for the quick spread of false information by anonymous offenders who act worrisomely and freely. The fact that virtual harassment is invisible may make it difficult to lessen the negative effects of toxic online postings. In response, It is advocated that we can develop a comprehensive policy framework that modifies current legal frameworks—such as laws prohibiting sexual harassment online —to adequately handle the issues brought on by technology breakthroughs.

Judicial Stance on Regulating Deepfake Content

In a different move, the Delhi High Court voiced concerns about the misuse of deepfake and exercised judicial intervention to limit the use of artificial intelligence (AI)-generated deepfake content. The intricacy of the matter was highlighted by a division bench. The bench proposed that the government, with its wider outlook, could be more qualified to handle the situation and come up with a fair resolution. This position highlights the necessity for an all-encompassing strategy by reflecting the court's acknowledgement of the technology's global and borderless character.

PIL on Deepfake

In light of these worries, an Advocate from Delhi has taken it upon himself to address the unchecked use of AI, with a particular emphasis on deepfake material. In the event that regulatory measures are not taken, his Public Interest Litigation (PIL), which is filed at the Delhi High Court, emphasises the necessity of either strict limits on AI or an outright prohibition. The necessity to discern between real and fake information is at the center of this case. Advocate suggests using distinguishable indicators, such as watermarks, to identify AI-generated work, reiterating the demand for openness and responsibility in the digital sphere.

The Way Ahead:

Finding a Balance

- The authorities must strike a careful balance between protecting privacy, promoting innovation, and safeguarding individual rights as they negotiate the complex world of deepfakes. The Delhi High Court's cautious stance and Justice Kohli's concerns highlight the necessity for a nuanced response that takes into account the complexity of deepfake technology.

- Because of the increased complexity with which the information may be manipulated in this digital era, the court plays a critical role in preserving the integrity of the truth and shielding people from the possible dangers of misleading technology. The legal actions will surely influence how the Indian judiciary and legislature respond to deepfakes and establish guidelines for the regulation of AI in the nation. The legal environment needs to change as technology does in order to allow innovation and accountability to live together.

Collaborative Frameworks:

- Misuse of deepfake technology poses an international problem that cuts beyond national boundaries. International collaborative frameworks might make it easier to share technical innovations, legal insights, and best practices. A coordinated response to this digital threat may be ensured by starting a worldwide conversation on deepfake regulation.

Legislative Flexibility:

- Given the speed at which technology is advancing, the legislative system must continue to adapt. It will be required to introduce new legislation expressly addressing developing technology and to regularly evaluate and update current laws. This guarantees that the judicial system can adapt to the changing difficulties brought forth by the misuse of deepfakes.

AI Development Ethics:

- Promoting moral behaviour in AI development is crucial. Tech businesses should abide by moral or ethical standards that place a premium on user privacy, responsibility, and openness. As a preventive strategy, ethical AI practices can lessen the possibility that AI technology will be misused for malevolent purposes.

Government-Industry Cooperation:

- It is essential that the public and commercial sectors work closely together. Governments and IT corporations should collaborate to develop and implement legislation. A thorough and equitable approach to the regulation of deepfakes may be ensured by establishing regulatory organizations with representation from both sectors.

Conclusion

A comprehensive strategy integrating technical, legal, and social interventions is necessary to navigate the path ahead. Governments, IT corporations, the courts, and the general public must all actively participate in the collective effort to combat the misuse of deepfakes, which goes beyond only legal measures. We can create a future where the digital ecosystem is safe and inventive by encouraging a shared commitment to tackling the issues raised by deepfakes. The Government is on its way to come up with dedicated legislation to tackle the issue of deepfakes. Followed by the recently issued government advisory on misinformation and deepfake.