#FactCheck - Deepfake Video Falsely Claims Indian Defence Secretary Admitted Pakistan ‘Jammed Indian Systems’

Executive Summary

A video allegedly showing India’s Defence Secretary Rajesh Kumar Singh making remarks about Pakistan’s cyber capabilities is being widely shared on social media. The clip claims that Singh admitted Pakistan had “jammed Indian systems” on May 10 and described Pakistan’s cyber and electronic warfare capabilities as a major challenge for India. Research by CyberPeace Research Wing found that the viral clip is an AI-generated deepfake being circulated to spread misinformation. Rajesh Kumar Singh never made any such statement.

Claim

An X user shared the viral video claiming that India’s Defence Secretary had acknowledged Pakistan’s technological superiority. The post alleged that Singh admitted Pakistan successfully jammed Indian systems and claimed that India was lagging behind in cyber and electronic warfare technology.

Fact Check

To verify the claim, we searched relevant keywords on Google but found no credible media reports carrying such a statement from the Defence Secretary. We then extracted keyframes from the viral clip and conducted a reverse image search. During the research, we found the original video uploaded on the YouTube channel of ANI on April 30, 2026.
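The keyframe step can be sketched as follows. This is a minimal illustration, not the exact workflow used in this research: it assumes the `ffmpeg` command-line tool is available, that evenly spaced stills are good enough for a reverse image search, and the function name `keyframe_commands` and the file names are hypothetical. The code only builds the commands; it does not run them.

```python
import shlex


def keyframe_commands(video_path, duration_s, count, out_prefix="frame"):
    """Build ffmpeg commands that each save one still frame from the clip.

    Timestamps are spread evenly across the clip, skipping the very
    start and end. Evenly spaced sampling is an assumption made for
    this sketch.
    """
    if count < 1 or duration_s <= 0:
        raise ValueError("count must be >= 1 and duration_s must be positive")
    step = duration_s / (count + 1)
    commands = []
    for i in range(1, count + 1):
        timestamp = round(step * i, 2)
        commands.append([
            "ffmpeg",
            "-ss", str(timestamp),   # seek to this position (seconds)
            "-i", video_path,        # input clip
            "-frames:v", "1",        # write a single video frame
            f"{out_prefix}_{i:02d}.png",
        ])
    return commands


if __name__ == "__main__":
    # Hypothetical 40-second clip; print the commands for inspection.
    for cmd in keyframe_commands("viral_clip.mp4", duration_s=40, count=3):
        print(shlex.join(cmd))
```

Each saved still can then be uploaded to a reverse image search engine to look for the original footage.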

A review of the full video confirmed that Rajesh Kumar Singh never made the remarks heard in the viral clip. The original footage had been altered with AI-generated audio.

Conclusion

Our research confirms that the viral video is fake and AI-manipulated. The statement attributed to India’s Defence Secretary Rajesh Kumar Singh is fabricated, and the deepfake clip is being shared with misleading claims to spread disinformation.

Related Blogs

A video purportedly showing Rashtriya Swayamsevak Sangh (RSS) chief Mohan Bhagwat making remarks about the “saffronisation” of the Indian Army has been widely circulated on social media. The clip claims that Bhagwat called for the removal of non-Hindus from the armed forces and linked the issue to future political leadership changes in the country.

However, a verification by the Cyber Peace Foundation has established that the video is misleading and has been digitally manipulated.

Claim

In the video, Bhagwat is allegedly heard saying that unless more than 50 percent of non-Hindus are removed from the Indian Army by 2028, Prime Minister Narendra Modi would be replaced by Uttar Pradesh Chief Minister Yogi Adityanath. The clip further attributes another statement to him, suggesting that he would resign if the Prime Minister were to demand Nitish Kumar’s resignation.

By the time of publication, the video had been viewed over 7,000 times.

Fact Check:

A reverse image search directed the Desk to a video uploaded on CNN-News18’s official YouTube channel on December 21, 2025. The footage was found to be a longer version of the viral clip and was recorded at the RSS centenary event held in Kolkata on the same date. A comparison of both videos confirmed that the background visuals, stage setup and camera angles were identical.

However, a careful review of the original CNN-News18 video revealed that Mohan Bhagwat did not make any of the statements attributed to him in the viral clip.

In his original address, Bhagwat spoke about unity and referred to concerns over increasing atrocities against Hindus in Bangladesh. He made no reference to the Indian Army, nor did he comment on its composition or alleged saffronisation. Here is the link to the original video, along with a screenshot: https://www.youtube.com/watch?v=KnsAUGfBQBk&t=1s

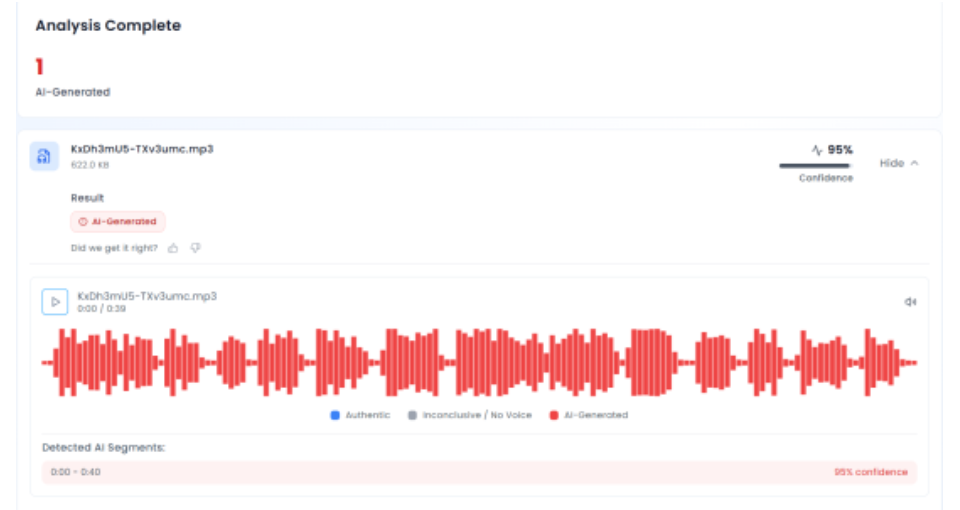

In the next phase of the investigation, the audio track from the viral video was extracted and analysed using the AI audio detection tool Aurigin. The tool’s assessment indicated that the voice heard in the clip was artificially generated, confirming that the audio did not originate from the original speech.
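Extracting the audio track can be sketched as below. The source does not say how the track was extracted or which formats Aurigin accepts, so the use of `ffmpeg`, the mono 16 kHz WAV output, and the names `audio_extract_command`, `viral_clip.mp4` and `viral_audio.wav` are all assumptions made for illustration.

```python
def audio_extract_command(video_path, wav_path, sample_rate=16000):
    """Build an ffmpeg command that saves a clip's audio as a mono WAV file.

    The output format is an assumption for this sketch; check what the
    chosen detection tool actually accepts before using it.
    """
    return [
        "ffmpeg",
        "-i", video_path,          # input clip
        "-vn",                     # drop the video stream
        "-acodec", "pcm_s16le",    # uncompressed 16-bit PCM audio
        "-ar", str(sample_rate),   # output sample rate in Hz
        "-ac", "1",                # single (mono) channel
        wav_path,
    ]


if __name__ == "__main__":
    print(" ".join(audio_extract_command("viral_clip.mp4", "viral_audio.wav")))
```

The resulting file is what would be submitted to an audio detection tool for analysis.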

Conclusion

The claim that RSS chief Mohan Bhagwat called for the saffronisation of the Indian Army is false. PTI Fact Check found that the viral video was digitally manipulated, using genuine footage from an RSS centenary event but pairing it with an AI-generated audio track. The altered video was shared online to mislead viewers by falsely attributing statements Bhagwat never made.

Executive Summary

The IT giant Apple has alerted customers to the threat of "mercenary spyware" attacks in 92 countries, including India. These highly sophisticated attacks, which are frequently linked to both private and state actors (the NSO Group’s Pegasus spyware is a well-known example), target specific individuals, including politicians, journalists, activists and diplomats. Unlike consumer-grade malware, these attacks are highly customized to the individual target and require significant resources to create and use.

As the incidence of such attacks rises, it is important that individuals, businesses, and officials understand how mercenary spyware works, which methods are most commonly used, how these attacks can be prevented and what to do if targeted. Individuals and organizations can begin protecting themselves by enabling "Lockdown Mode" for an extra layer of device security, changing passwords frequently, and not opening suspicious URLs or attachments.

Introduction: Understanding Mercenary Spyware

Mercenary spyware is a class of spyware developed exclusively for law enforcement and government organizations. Such tools are not available in app stores; they are built to attack a particular individual and require a significant investment of resources and advanced technology. Operators infiltrate systems through techniques such as phishing (sending malicious links or attachments), pretexting (manipulating individuals into sharing personal information) or baiting (using tempting offers). They often operate as Advanced Persistent Threats (APTs), remaining undetected for a prolonged period while stealthily extracting data from the target’s network. Another route is the exploitation of zero-day vulnerabilities, which are software flaws that the vendor has not yet patched, to gain access to mobile devices. A well-known example of mercenary spyware is the infamous Pegasus by the NSO Group.

Actions: By Apple against Mercenary Spyware

Apple has introduced Lockdown Mode, an advanced, optional protection feature, in its newer product versions (including iOS 16, iPadOS 16, and macOS Ventura) to combat mercenary spyware attacks. The feature is intended for users who are at risk of targeted cyber attacks.

Apple released a statement on the matter, sharing, “mercenary spyware attackers apply exceptional resources to target a very small number of specific individuals and their devices. Mercenary spyware attacks cost millions of dollars and often have a short shelf life, making them much harder to detect and prevent.”

When Apple's internal threat intelligence and investigations detect these highly targeted attacks, the company takes immediate action to notify the affected users. The notification process involves:

- Displaying a "Threat Notification" at the top of the user's Apple ID page after they sign in.

- Sending an email and iMessage alert to the addresses and phone numbers associated with the user's Apple ID.

- Providing clear instructions on steps the user should take to protect their devices, including enabling "Lockdown Mode" for the strongest available security.

Apple stresses that these threat notifications are "high-confidence alerts", meaning it has strong evidence that the user has been deliberately targeted by mercenary spyware. As such, these alerts should be taken extremely seriously by recipients.

Modus Operandi of Mercenary Spyware

- Installing advanced surveillance tools remotely and covertly.

- Using zero-click or one-click attacks to take advantage of device vulnerabilities.

- Gaining access to a variety of data on the device, including location tracking, call logs, text messages, passwords, microphone, camera, and app information.

- Exploiting multiple system vulnerabilities on devices running particular iOS and Android versions to install the spyware.

- Defense by patching vulnerabilities with security updates (e.g., CVE-2023-41991, CVE-2023-41992, CVE-2023-41993).

- Utilizing defensive DNS services, non-signature-based endpoint technologies, and frequent device reboots as mitigation techniques.
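Whether a device has received a given security fix usually comes down to comparing its installed OS version with the first version that shipped the fix. The sketch below illustrates that comparison only. The function names are hypothetical, and the version `17.0.1` used in the example is a placeholder that should be checked against the vendor's security advisory for the CVE in question.

```python
def parse_version(text):
    """Turn a dotted version string such as '17.0.1' into a tuple of ints."""
    return tuple(int(part) for part in text.strip().split("."))


def is_patched(installed, minimum_patched):
    """Return True if the installed version is at or above the patched one.

    Shorter versions are padded with zeros, so '16.7' equals '16.7.0'.
    """
    a = parse_version(installed)
    b = parse_version(minimum_patched)
    width = max(len(a), len(b))
    a += (0,) * (width - len(a))
    b += (0,) * (width - len(b))
    return a >= b


if __name__ == "__main__":
    # Placeholder minimum version; confirm it in the vendor's advisory.
    for installed in ("17.0", "17.0.1", "17.1"):
        print(installed, is_patched(installed, "17.0.1"))
```

Comparing tuples of integers avoids the common mistake of comparing version strings alphabetically, where "17.10" would sort before "17.9".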

Prevention Measures: Safeguarding Your Devices

- Turn on security measures: Make use of the security features that the device maker has supplied, such as Apple's Lockdown Mode, which reduces the attack surface that highly targeted spyware can exploit on Apple products such as iPhones.

- Frequent software upgrades: Make sure the newest security and software updates are installed on your devices. This aids in patching holes that mercenary malware could exploit.

- Steer clear of suspicious links: Exercise caution while opening attachments or accessing links from unidentified sources. Mercenary spyware can be installed through phishing links or attachments.

- Limit app permissions: Reassess and restrict app permissions to avoid unwanted access to private information.

- Use secure networks: To reduce the chance of data interception, connect to secure Wi-Fi networks and stay away from public or unprotected connections.

- Install security applications: To identify and stop spyware attacks, consider installing reputable security programs from trusted sources.

- Be alert: If Apple or other device makers send you a threat notice, consider it carefully and take the advised security precautions.

- Two-factor authentication: To provide an extra degree of protection against unwanted access, enable two-factor authentication (2FA) on your Apple ID and other significant accounts.

- Consider additional security measures: For high-risk individuals, consider using additional security measures, such as encrypted communication apps and secure file storage services.

Way Forward: Strengthening Digital Defenses, Strengthening Democracy

People, businesses and administrations must prioritize cyber security measures and keep up with emerging dangers as mercenary spyware attacks continue to develop and spread. To effectively address the growing threat of digital espionage, cooperation between government agencies, cybersecurity specialists, and technology businesses is essential.

In the Indian context, the update carries significant policy implications and must inspire a discussion on legal frameworks for government surveillance practices and cyber security protocols in the nation. As the public becomes more informed about such sophisticated cyber threats, we can expect a greater push for oversight mechanisms and regulatory protocols. The misuse of surveillance technology poses a significant threat to individuals and institutions alike. Policy reforms concerning surveillance tech must be tailored to address the specific concerns of the use of such methods by state actors vs. private players.

There is a pressing need for electoral reforms that help safeguard democratic processes in the current digital age. There has been a paradigm shift in how political activities are conducted: with the advent of the digital domain, parties and leaders now campaign for the online audience as enthusiastically as they campaign offline. Given that this is an election year, quite possibly the most significant one in modern Indian history, digital outreach and online public engagement are expected to be at an all-time high. It is therefore imperative to protect the electoral process against cyber threats so that public trust in the legitimacy of India’s democratic processes is rewarded and the digital domain is an asset, and not a threat, to good governance.

A news graphic bearing the Navbharat Times logo is being widely circulated on social media. The graphic claims that religious preacher Devkinandan Thakur made an extremely offensive and casteist remark targeting the ‘Shudra’ community. Social media users are sharing the graphic and claiming that the statement was actually made by Devkinandan Thakur. Cyber Peace Foundation’s research and verification found that the claim is misleading: the viral news graphic is completely fake, and Devkinandan Thakur did not make any such casteist statement.

Claim

A viral news graphic claims that Devkinandan Thakur made a derogatory and caste-based statement about Shudras. On 17 January 2026, an Instagram user shared the viral graphic with the caption, “This is probably the formula of Ram Rajya.” The text on the graphic reads: “People of Shudra castes reproduce through sexual intercourse, whereas Brahmins give birth to children after marriage through the power of their mantras, without intercourse.” The graphic also carries Devkinandan Thakur’s photograph and identifies him as a ‘Kathavachak’ (religious storyteller).

Fact Check:

To verify the claim, we first searched for relevant keywords on Google. However, no credible or verified media reports were found supporting the claim. In the next stage of verification, we found a post published by NBT Hindi News (Navbharat Times) on X (formerly Twitter) on 17 January 2026, in which the organisation explicitly debunked the viral graphic. Navbharat Times clarified that the graphic circulating online was fake and also shared the original and authentic post related to the news.

Further research led us to Devkinandan Thakur’s official Facebook account, where he posted a clarification on 17 January 2026. In his post, he stated that anti-social elements are creating fake ‘Sanatani’ profiles and spreading false news, misusing the names of reputed media houses and platforms to mislead and divide people. He described the viral content as part of a deliberate conspiracy and fake agenda aimed at weakening unity. He also warned that AI-generated fake videos and fabricated statements are increasingly being used to create confusion, mistrust and division.

Devkinandan Thakur urged people not to believe or share any post, news or video without verification, and advised checking information through official websites, verified social media accounts or trusted sources.

Conclusion

The viral news graphic attributing a casteist statement to Devkinandan Thakur is completely fake. Devkinandan Thakur did not make the alleged remark, and the graphic circulating with the Navbharat Times logo is fabricated.