Introduction

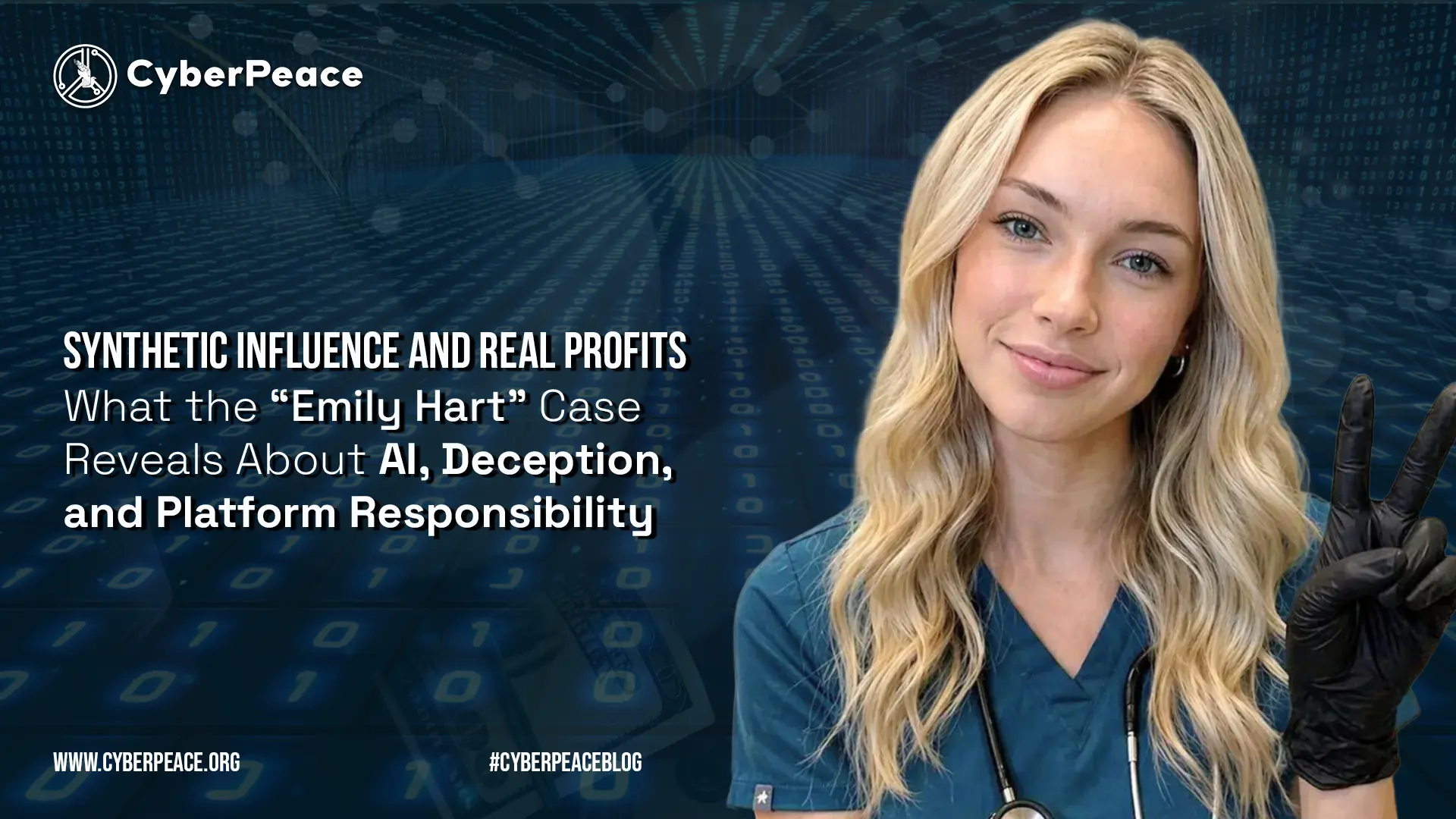

April 2026 brought a fascinating example of the risks of generative artificial intelligence (AI). A 22-year-old Indian medical student created a fake AI-generated influencer, "Emily Hart", and used the persona to build a substantial social media following, along with engagement and revenue.

This isn't just a case of online fraud. It marks a turning point in the nature of influence, veracity, and profitability in the digital world. Ultimately, it poses a troubling question. If users can't tell the difference between real and fake people, then what is online trust?

The Making of a Synthetic Influencer

"Emily Hart" was presented as a young, conservative American nurse. The identity was completely made up, created with the help of AI programs that produced eerily realistic images, captions and engagement techniques.

The creator did not post content at random. The persona was crafted to appeal to a specific audience: politically active conservatives in the United States. Some of its posts reportedly reached millions of views, and within a few months the account had thousands of followers.

Monetisation followed naturally. The account owner earned income through subscriptions and merchandise sales, reportedly making thousands of dollars a month with less than an hour a day of "work" on the account.

What makes this case interesting is the imbalance between effort and reward. It is a striking example of how people can now use very little capital to create digital personas that generate value.

Why It Worked: Engagement, Identity, and Algorithmic Incentives

The "Emily Hart" case was no accident. It was enabled by three complementary factors.

First, identity targeting was crucial. The persona was constructed to fit a particular worldview and culture, which made it relevant to, and resonant with, the target audience. AI tools were reportedly even used to refine the persona's targeting and positioning, and micro-targeting was suggested as a way to increase engagement.

Second, it was amplified by algorithms. Social media algorithms reward engagement, which often means favouring emotional and divisive content. The account exploited this by producing visually appealing content with a strong political message, what the creator called "engageable" content.

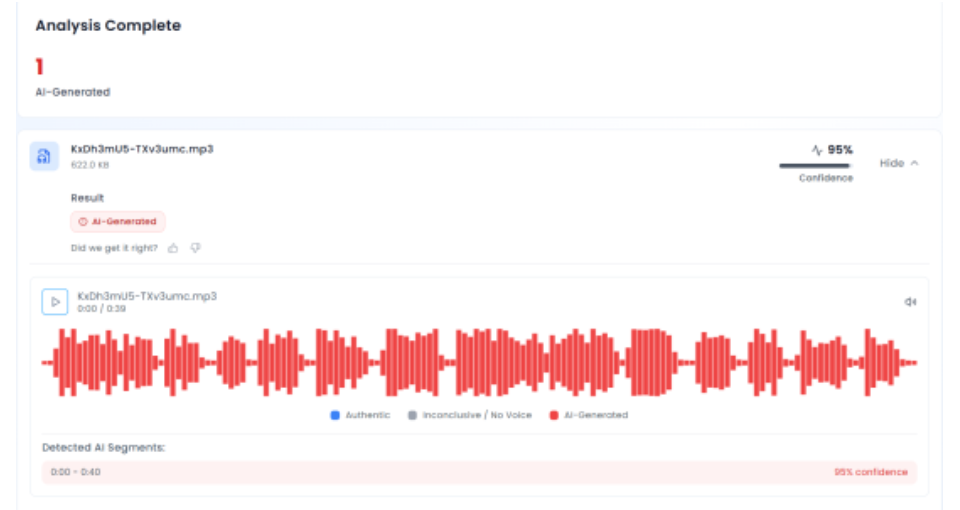

Third, the realism of the AI content minimised distrust. Generative models have become so realistic that it is hard to tell whether images are real. Specialists point out that AI makes fake profiles more credible and more scalable, extending their influence and reach.

All of this combined to make deception profitable.

The Blurring of Authenticity in Digital Spaces

The "Emily Hart" phenomenon is emblematic of a broader shift in authenticity. Historically, influence was tied to human personalities who established trust over time. But AI upends this paradigm by allowing the creation of entirely fabricated personalities capable of mimicking, and even surpassing, human influencers.

This has two immediate consequences.

First, the truth is harder to discern. While platforms might require that AI-generated content be disclosed, this requirement is policed inconsistently. Here, the account apparently carried no disclosure up to the point it was banned for fraud.

Second, authenticity may not be as important to consumers. Consumers may view content for ideological or emotional reasons, rather than for its accuracy. This indicates that the rise of synthetic influencers is not just a technical problem but also a behavioural one.

The implication is stark. The internet is evolving into a place where the appearance of authenticity is more important than truth.

Economic Incentives and the Rise of Synthetic Monetisation

The key difference between this fraud and previous ones is the business model. This creator didn't break into a computer or steal personal information, but cultivated an audience and sold attention.

This is an example of how the internet economy works. Attention is a commodity and platforms aim to generate it. AI reduces the cost of creating attention-generating artefacts, enabling people to amplify their reach.

This gives rise to synthetic monetisation. Online characters can be developed, fine-tuned and leveraged as money-spinning assets. In this case, identity is a product.

This raises regulatory challenges. Current laws on fraud, advertising and consumer protection may not be sufficient to cover deception that stems from a fabricated identity.

Platform Responsibility and Enforcement Gaps

The role of platforms in enabling such scenarios cannot be overlooked. Although platforms have policy guidelines on disclosure of AI-generated content, these are inconsistently applied.

In the case of "Emily Hart", the account apparently existed for some time before being shut down for scamming. This implies that either the ability to detect such accounts is weak or the tools used are reactive.

The challenge is structural. Platforms are rewarded for engagement, and fake accounts can help to deliver it. Yet the same platforms must also promote authenticity and protect against fraud.

This creates a tension between commercial interests and user safety. Without enforcement, synthetic influencers will become more prevalent.

Policy Implications: Rethinking Trust and Verification

The "Emily Hart" incident highlights a number of policy issues.

First, disclosure policies must be improved and harmonised. It needs to be clear to consumers when content is generated by AI, and platforms need to police this.

Second, identity verification needs to be updated. Classic forms of verification may not hold up in an era of imaginary characters amassing legions of fans. Alternative digital verification may be needed.

Third, new regulations should apply to synthetic identities. This means clarifying distinctions between art, commerce and fraud.

Finally, digital literacy becomes critical. Consumers need to be equipped to operate in a space where virtual personas aren't always human.

Conclusion

The rise of "Emily Hart" is not just an example of one person using AI to make money. It is a glimpse of a digital revolution.

AI is redefining how influence can be generated, trust can be established and value can be monetised. As digital personas become more realistic, the distinction between human and machine will become harder to draw.

The challenge will not be to stop AI being used to generate content. It will be to ensure that the systems that mediate our online interactions are able to tell the difference, and that we are not left on our own to sort it all out.

When anyone can create a convincing identity, trust will no longer be a given. It will need to be engineered, policed and protected.