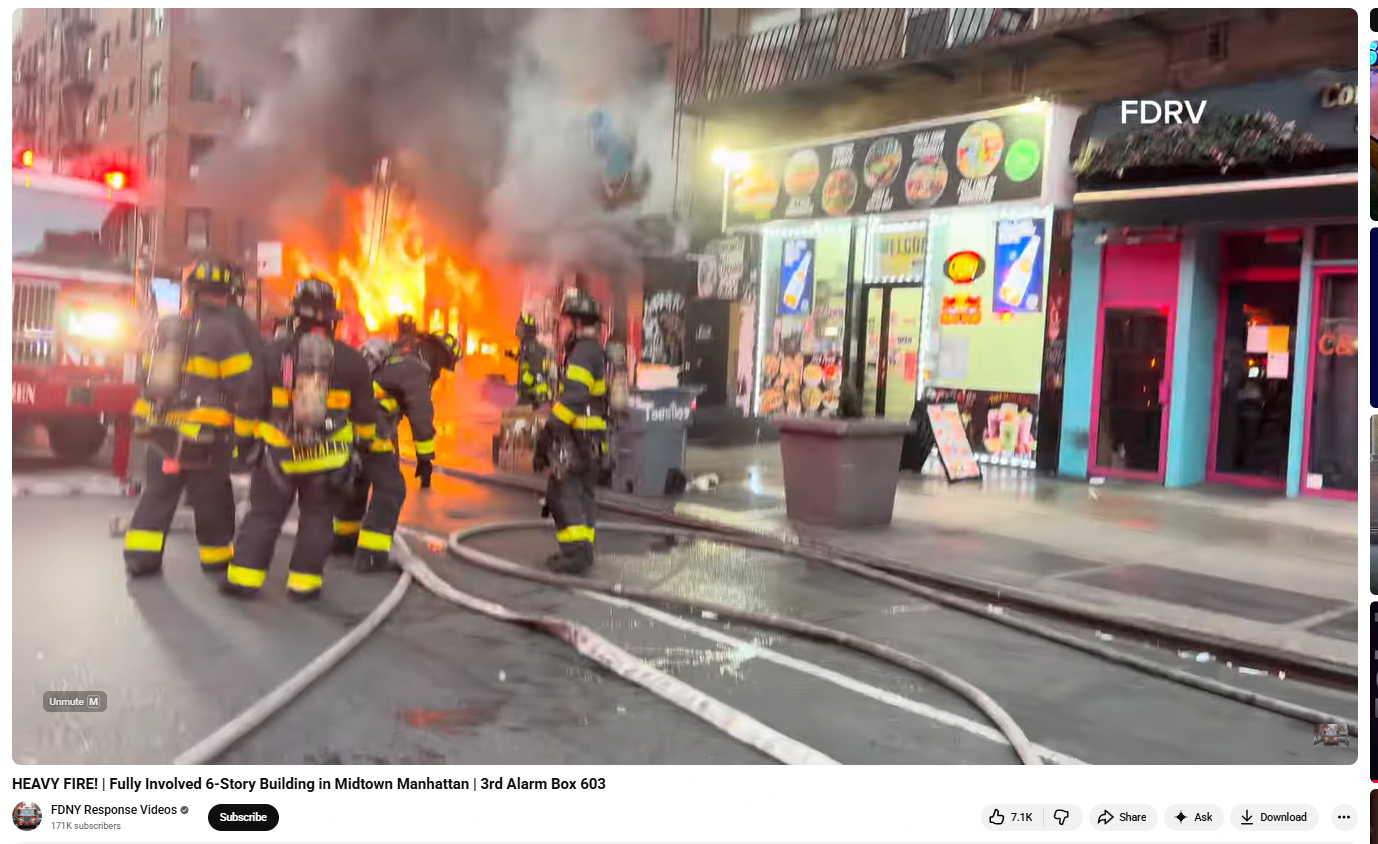

#FactCheck- Manhattan Fire Video Falsely Shared as Hezbollah Attack on Israel

Executive Summary

A video showing a building engulfed in flames is going viral on social media, with users claiming it depicts an attack by Hezbollah on Israel’s military headquarters. The clip is being shared with assertions that several Israeli soldiers were killed and many remain trapped inside the burning structure. However, research by the CyberPeace Research Wing found that the claim is false. The viral video is not from Israel but from Manhattan, New York City, where a residential building caught fire.

Claim

A Facebook user, ‘Nazim Khan Tirwadiya’, shared the video on April 15, 2026, claiming that Hezbollah had targeted an Israeli military headquarters, resulting in heavy casualties and an ongoing fire.

Fact Check

To verify the claim, we extracted keyframes from the viral video and conducted a reverse image search. This led us to a longer version of the same clip uploaded on the YouTube channel “FDNY Response Videos” on April 12, 2026. The video description identified the location as Manhattan, New York City.
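The keyframe step can be illustrated with a minimal sketch. It is not the tooling used in this fact check, which the article does not name. It assumes the frames have already been decoded into small grayscale grids (nested lists of 0-255 values), and the function names `average_hash`, `hamming_distance`, and `select_keyframes`, as well as the `min_distance` threshold, are hypothetical. The idea is to keep only frames that look visibly different from the last kept frame, so that a handful of representative stills can be submitted to a reverse image search.

```python
from typing import List, Optional

# Assumption: each frame is a small grayscale grid, rows of 0-255 values.
Frame = List[List[int]]


def average_hash(frame: Frame) -> int:
    """One bit per pixel, set when the pixel is brighter than the frame mean."""
    pixels = [p for row in frame for p in row]
    mean = sum(pixels) / len(pixels)
    bits = 0
    for p in pixels:
        bits = (bits << 1) | (1 if p > mean else 0)
    return bits


def hamming_distance(a: int, b: int) -> int:
    """Number of bit positions in which two hashes differ."""
    return bin(a ^ b).count("1")


def select_keyframes(frames: List[Frame], min_distance: int = 2) -> List[int]:
    """Return indices of frames that differ enough from the last kept frame."""
    kept: List[int] = []
    last_hash: Optional[int] = None
    for i, frame in enumerate(frames):
        h = average_hash(frame)
        if last_hash is None or hamming_distance(h, last_hash) >= min_distance:
            kept.append(i)
            last_hash = h
    return kept


if __name__ == "__main__":
    frame_a = [[10, 200], [10, 200]]
    frame_b = [[12, 198], [11, 201]]  # near-duplicate of frame_a
    frame_c = [[200, 10], [200, 10]]  # visibly different scene
    print(select_keyframes([frame_a, frame_b, frame_c]))
```

In practice the frames would come from a video decoding library and be downscaled before hashing; the selected stills are then uploaded manually or through a search tool of the researcher's choice.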

Further keyword searches led us to a report published by ABC7NY on April 12, 2026. According to the report, a massive fire broke out in a six-storey apartment building in Manhattan’s Midtown area around 6 a.m. Firefighters worked extensively to control the blaze, and two firefighters sustained minor injuries. No fatalities were reported.

Conclusion

The viral claim is false. The video does not show an attack on Israel by Hezbollah. Instead, it captures a fire incident in a residential building in Manhattan, New York City. The clip has been shared with a misleading narrative unrelated to the actual event.

Related Blogs

Introduction

Human trafficking has been a significant concern and threat to society for a very long time. Traffickers, and the modus operandi they have adopted over the years, have shaped how we think about physical safety: we are always watchful of young children whenever we go out to crowded or unfamiliar places. This threat has now migrated to cyberspace, where it takes new and different forms. These crimes are committed using technology and are often supported by other cybercrimes.

What is Cyber-Enabled Human Trafficking?

Cyber-enabled human trafficking is the evolution of human trafficking in the digital age. Bad actors lure victims via the internet and use social engineering to exploit their vulnerabilities and draw them into their traps. Today, the crime is often carried out under the guise of fake job offers and promises of a better lifestyle in major metropolitan cities. It now extends beyond the geographical boundaries of our nation, and victims often end up in remote locations in the Middle East or South East Asia.

Cybercrime Hubs in Myanmar

Reports indicate that many trafficked victims are taken to cybercrime hubs in Myanmar. The victims are often lured on the pretext of well-paid job offers overseas. They make their way into the foreign country, where they are cornered by the bad actors, segregated, and taken to different hubs. The victims are often school graduates seeking basic jobs to earn a living. The cybercrime hubs are allegedly run by Chinese criminal syndicates. The victims are kept in tough conditions, beaten, and held captive in remote jungles. Once a victim has lost hope, the criminals train them to commit cyber frauds such as phishing. The victims are given scripts and mobile numbers to commit cybercrimes, along with targets they must meet to ensure their survival. Under such dark and threatening conditions, the victims give in to the demands just to remain alive. Some victims do make their way back home, but only after 6-7 years of constant torture and abuse while being forced to commit cybercrimes. The majority of such survivors face trouble seeking legal assistance because the criminals are almost impossible to track, which makes redressal for the crimes and rehabilitation for survivors difficult.

How to stay safe?

The criminals behind such acts often target the vulnerable sections of the population, who generally hail from tier 3 towns and rural areas. These victims aspire to a better life and better earning opportunities, and due to limited education and minimal awareness, they fail to see the traps set by the criminals. The population at large can adopt the following measures and safe practices to avoid such horrific threats:

- Avoid Stranger interaction: Avoid interacting with strangers on any online platform or portal. Social media sites are the most used platforms by bad actors to make contact with potential victims.

- Do not Share: Avoid sharing any personal information with anyone online, and avoid filling out third-party surveys/forms seeking personal information.

- Check, Check and Recheck: Always be on alert for threats and always check and cross-check any link or platform you use or access.

- Too good to be true: If something feels too good to be true, it probably is. Avoid falling for attractive job offers and work-from-home opportunities on social media platforms.

- Know your helplines: Know the helpline numbers so that you can report a threat or issue promptly, and encourage your family members to do the same.

- Raise Awareness: It is the duty of all netizens to raise awareness in society to arm more people against cybercrimes and fraud.

Conclusion

The reach of cybercriminals is spreading across every ecosystem, and technology is now being deployed by such bad actors even to facilitate physical crimes. We need to stay alert and remain aware of such crimes and the modus operandi of cybercriminals. Awareness and education are our best weapons against cyber-enabled human trafficking, because the criminals feed on our vulnerabilities. Let's eradicate those vulnerabilities once and for all and work towards creating a wholesome, safe cyber ecosystem for all.

References

- https://www.scmp.com/week-asia/politics/article/3228543/inside-chinese-run-crime-hubs-myanmar-are-conning-world-we-can-kill-you-here

Introduction

A policy, no matter how artfully conceived, is like a timeless idiom: its truth self-evident and its purpose undeniable, it stands in silent witness before those it vows to protect, yet remains trapped in the stillness of inaction, where every moment of delay erodes the very justice it was meant to serve. This is the case with the Digital Personal Data Protection (DPDP) Act, 2023, which promises a resolution to India's data protection issues and a framework on par with the GDPR and global best practices. While debates on its substantive efficacy are inevitable, its execution has emerged as a site of acute contention. The roll-out and the decision-making have been making headlines since late July on various fronts. The government is being questioned by industry stakeholders, media and independent analysts on several grounds, be it “slow policy execution”, “centralisation of power” or “arbitrary amendments”. The Act is now caught in a seemingly endless dilemma of competing interests.

The change to the Right to Information (RTI) Act, 2005, made possible by Section 44(3) of the DPDP Act, has become a focal point of debate. Some view this amendment as an attack on the hard-won transparency architecture of Indian democracy, because it replaces the “public interest override” in Section 8(1)(j) of the RTI Act with an absolute exemption for personal information.

The Lag Ledger: Tracking the Delays in DPDP Enforcement

As per a news report of July 28, 2025, the Parliamentary Standing Committee on Communications and Information Technology has expressed its concern over the delayed implementation and has urged the Ministry of Electronics and Information Technology (MeitY) to ensure that data privacy is adequately protected in the nation. In the report submitted to the Lok Sabha on July 24, the committee reviewed the government’s response to its previous recommendations and concluded that MeitY had only been able to hold nine consultations and twenty awareness workshops about the Draft DPDP Rules, 2025. In addition, four brainstorming sessions with academic specialists were conducted to examine the needs for research and development. The ministry acknowledges that this is a specialised field that urgently needs industry involvement.

Another news report, dated July 30, 2025, covered a day-long consultation in which representatives from civil society groups, campaigns, and social movements, along with senior lawyers, retired judges, journalists, and lawmakers, discussed the contentious provisions and chilling effects of the Draft Rules that were notified in January 2025. The organisers said in a press statement that the DPDP Act may have a negative impact on the freedom of the press and people’s right to information, and on the activists, journalists, attorneys, political parties, groups and organisations “who collect, analyse, and disseminate critical information as they become ‘data fiduciaries’ under the law.”

The DPDP Act has thus been caught in an uncomfortable paradox: it is praised as a significant legislative achievement for India’s digital future, yet it remains stuck in a transitional phase between enactment and enforcement, where every day of delay not only postpones protection but also feeds worries about the shrinking room for accountability and transparency.

The Muzzling Effect: Diluting Whistleblower Protections

The DPDP framework raises a number of subtle but significant issues, one of which is the possibility that it could weaken safeguards for whistleblowers. Critics argue that the Act risks trapping journalists, activists, and public interest actors who handle sensitive material while exposing wrongdoing, because it expands the definition of “personal data” and places strict compliance requirements on “data fiduciaries.” One of the most important checks on state overreach may be silenced if those who speak truth to power are subject to legal retaliation in the absence of clear exemptions or robust public-interest protections.

Noted lawyer Prashant Bhushan has criticised the law for failing to protect whistleblowers, warning that “If someone exposes corruption and names officials, they could now be prosecuted for violating the DPDP Act.”

Consent Management under the DPDP Act

In June 2025, the National e-Governance Division (NeGD) under MeitY released a Business Requirement Document (BRD) for developing consent management systems under the DPDP Act, 2023. The document supports the idea of a “Consent Manager”, which acts as a single point of contact between Data Principals and Data Fiduciaries. This idea is fundamental to the Act and is now being operationalised through MeitY’s “Code for Consent: The DPDP Innovation Challenge.” By choosing six distinct entities, such as Jio Platforms, IDfy, and Zoop, the government has established a collaborative ecosystem to build consent management systems (CMS) that can serve as a single, standardised interface between Data Principals and Data Fiduciaries. If it is implemented precisely and transparently, such a framework could give people meaningful control over their personal data, lessen consent fatigue, and move India’s consent architecture closer to international standards.

The importance of this development is not in doubt; however, it raises several concerns that must be considered. Although efficient, a centralised consent management system may become a single point of failure, exposed to both political overreach and technical cybersecurity flaws. Concerns have been raised over the concentration of power over the framing, seeking, and recording of consent when big corporate entities like Jio are chosen as key innovators. Critics contend that organisations that generate revenue from user data should not be responsible for designing the gatekeeping systems. Furthermore, in the absence of strong safeguards, transparency mechanisms and independent oversight, the CMS could create opaque channels for data access, compromising user autonomy and whistleblower protections.

Conclusion

Despite being hailed as a turning point in India’s digital governance, the DPDP Act is still stuck in a delayed and uneven transition from promise to reality. Its goals are indisputable, but so are the conundrums it poses for accountability, openness, and civil liberties. Every delay, and every safeguard that remains unresolved, deepens public mistrust. The true test of a policy intended to safeguard the digital rights of millions lies not in how it was drafted, but in the integrity, pace, and transparency with which it is implemented. In the digital age, the true cost of delay is measured not in time, but in trust. CyberPeace calls for transparent, inclusive, and timely execution that balances innovation with the protection of digital rights.

References

- https://www.storyboard18.com/how-it-works/parliamentary-committee-raises-concern-with-meity-over-dpdp-act-implementation-lag-77105.htm

- https://thewire.in/law/excessive-centralisation-of-power-lawyers-activists-journalists-mps-express-fear-on-dpdp-act

- https://www.medianama.com/2025/08/223-jio-idfy-meity-consent-management-systems-dpdpa/

- https://www.downtoearth.org.in/governance/centre-refuses-to-amend-dpdp-act-to-protect-journalists-whistleblowers-and-rti-activists

Executive Summary:

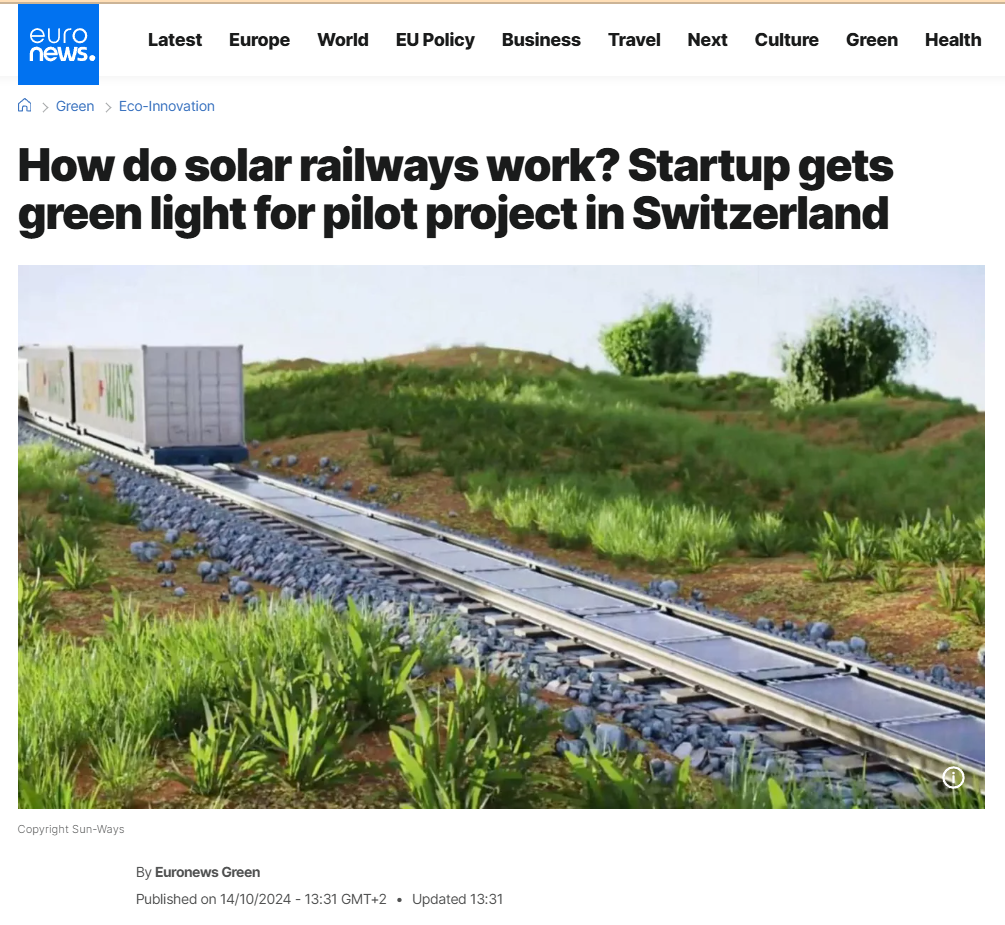

Social media has been overwhelmed by a viral post that claims Indian Railways is beginning to install solar panels directly on railway tracks all over the country for renewable energy purposes. The claim also purports that India will become the world's first country to undertake such a green effort in railway systems. Our research involved extensive reverse image searching, keyword analysis, government website searches, and global media verification. We found the claim to be completely false. The viral photos and information are all incorrectly credited to India. The images are actually from a pilot project by a Swiss start-up called Sun-Ways.

Claim:

According to a viral post on social media, Indian Railways has started an all-India initiative to install solar panels directly on railway tracks to generate renewable energy, limit power expenses, and make global history in environmentally sustainable rail operations.

Fact check:

We did a reverse image search of the viral image and were soon directed to international media and technology blogs referencing a project named Sun-Ways, based in Switzerland. The images circulated on Indian social media were the exact ones from the Sun-Ways pilot project, whereby a removable system of solar panels is being installed between railway tracks in Switzerland to evaluate the possibility of generating energy from rail infrastructure.

We also thoroughly searched the official Indian Railways websites, Ministry of Railways news articles, and credible Indian media. At no point did we find any mention of Indian Railways undertaking or planning to install solar panels on the railway tracks themselves.

Indian Railways has been engaged in green energy initiatives, including solar panel installations on rooftops, on railway land alongside tracks, and on train coach roofs. However, Indian Railways has never installed solar panels on railway tracks in India. We did find a report on the first solar-powered DEMU (diesel electric multiple unit) train, launched on 14th July 2025 from the Safdarjung railway station in Delhi, which carries its solar panels on the train itself. The train will run from Sarai Rohilla in Delhi to Farukh Nagar in Haryana. A total of 16 solar panels, each producing 300 Wp, are fitted in six coaches.

We also found multiple media reports that support our findings, from Euronews, the World Economic Forum, the Institution of Mechanical Engineers, and NDTV.

Conclusion:

After extensive research conducted in several phases, including reverse image searches and checks of official and media sources, we can conclude that the claim that Indian Railways has installed solar panels on railway tracks is false. The concept and images originate from Sun-Ways, a Swiss company that was testing this concept in Switzerland, not India.

Indian Railways continues to use renewable energy in a number of forms but has not put any solar panels on railway tracks. We want to highlight how important it is to fact-check viral content and other unverified content.

- Claim: India’s solar track project will help Indian Railways run entirely on renewable energy.

- Claimed On: Social Media

- Fact Check: False and Misleading