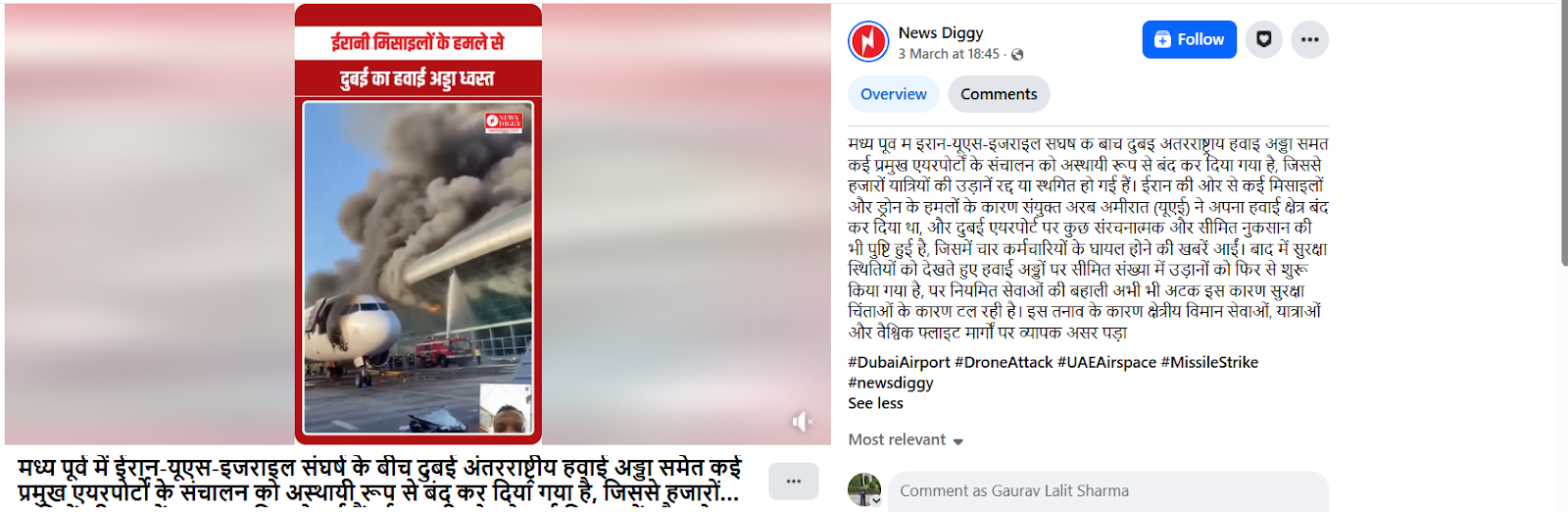

#FactCheck - Viral Video of Burning Aircraft Falsely Linked to UAE, Found to Be AI-Generated

Executive Summary:

A video is being shared on social media showing an aircraft engulfed in massive flames on an airport runway. The video is being linked to the UAE, with claims that a UAE airport was completely destroyed in recent drone and missile attacks by Iran. Research by CyberPeace found the viral claim to be false. The viral video is not real; it is AI-generated.

Claim:

On Facebook, a user shared the viral video on March 3, 2026, with the following caption: “Amid the Iran-US-Israel conflict in the Middle East, operations at several major airports, including Dubai International Airport, have been temporarily suspended, causing thousands of flight cancellations and delays. Due to multiple missile and drone attacks from Iran, the United Arab Emirates (UAE) had shut its airspace, and limited structural damage at Dubai Airport was also confirmed, with reports of four staff members being injured. Later, considering the security situation, a limited number of flights were resumed, but full operations are still delayed due to ongoing safety concerns. This tension has significantly impacted regional aviation, travel, and global flight routes.”

Fact Check:

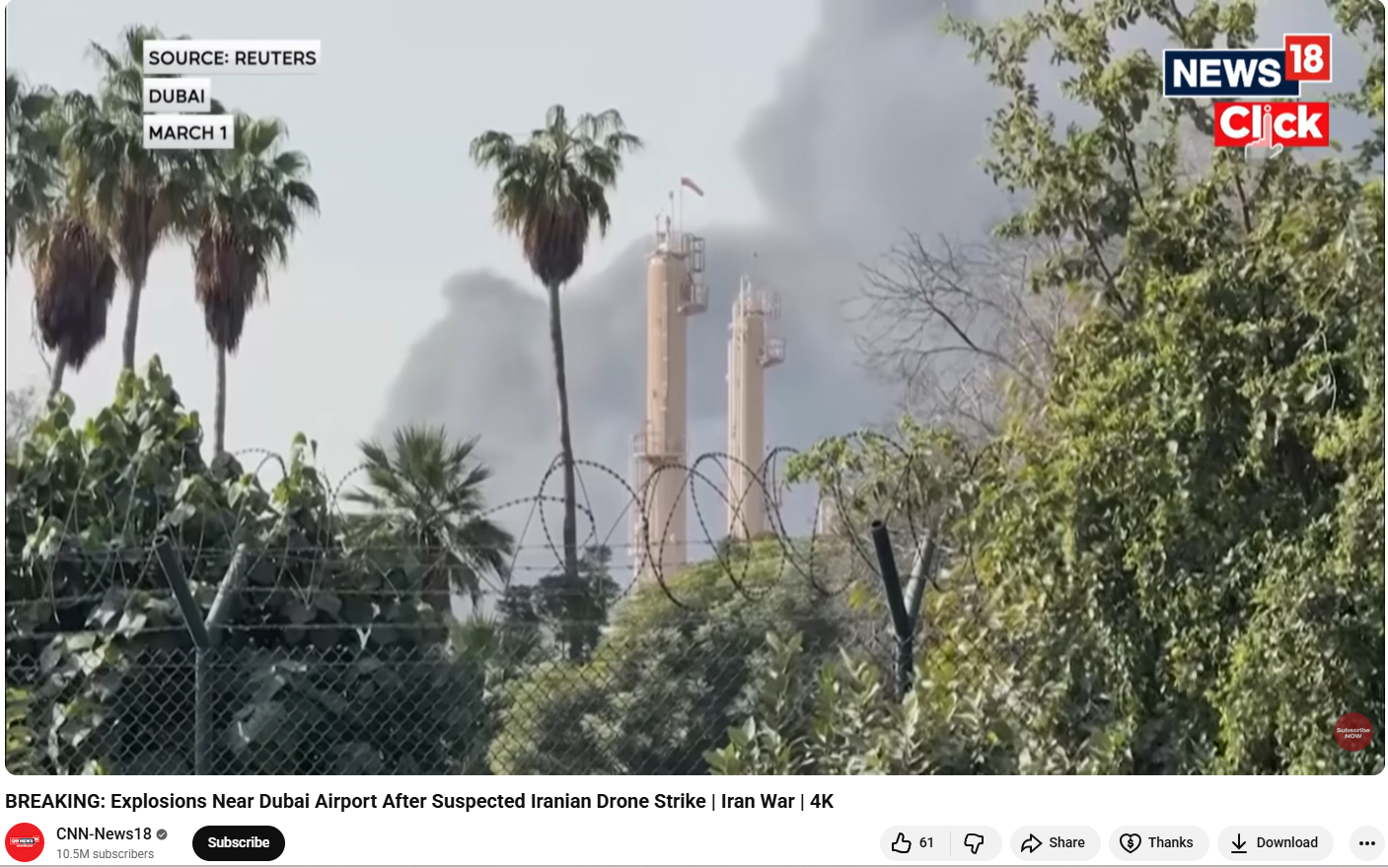

To verify the viral video, we searched relevant keywords on Google but did not find any credible media report confirming the claim. We did, however, find a video report on the YouTube channel of CNN-News18 mentioning explosions near Dubai Airport after a suspected Iranian drone strike. The visuals shown in that report are completely different from those in the viral video.

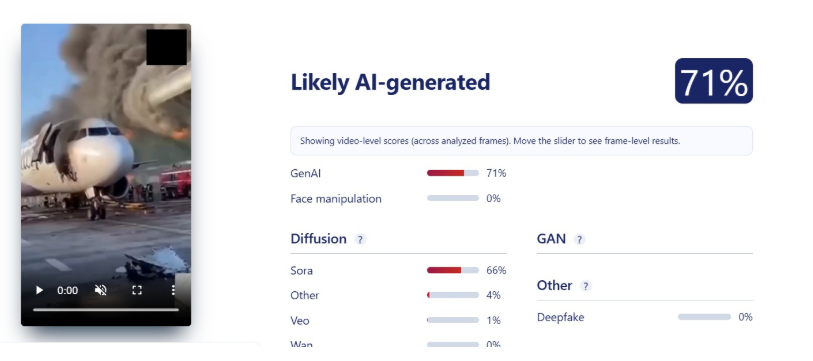

Upon closely examining the viral video, we noticed several inconsistencies that raised suspicion that it might be AI-generated. We then analysed the video using the AI detection tool Sightengine, which estimated a 71 percent likelihood that the video is AI-generated.

Conclusion:

Our research found that the viral video is not real, but AI-generated.

Related Blogs

Introduction

India's digital landscape has reached a critical point in its evolution. The rapid adoption of technologies such as cloud computing, mobile payment systems, artificial intelligence, and smart infrastructure has tightly integrated digital systems with governance, commercial activity, and everyday life. As dependence on these systems grows, a wide range of complex, multi-layered, and closely interconnected cyber threats has emerged. By 2026, cyber threats directed at India are expected to include a growing number of targeted, well-organised, and strategic attacks that exploit the trust placed in technology, institutions, and automation, as well as the fast pace of technological change.

1. Social Engineering 2.0: Hyper-Personalised AI Phishing & Mobile Banking Malware

Cybercriminals have moved from generalised methods to hyper-targeted attacks built on AI-based psychological manipulation. The cybercrimes expected in 2026 will use AI to analyse information harvested from social media profiles, data breaches, and digital tracking footprints, and then use that analysis to craft hyper-targeted phishing emails at scale.

These phishing emails can impersonate banks, employers, and even family members, reproducing the regional or cultural tone, language, and context that the real sender would use.
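
Impersonation often relies on sender domains that differ from a trusted domain by only a character or two. The sketch below is one simple defensive heuristic, not a complete phishing filter: it assumes a hypothetical allowlist of trusted domains (`TRUSTED_DOMAINS`) and flags sender domains within a small Levenshtein edit distance of a trusted one without matching it exactly.

```python
def edit_distance(a: str, b: str) -> int:
    """Classic Levenshtein distance, computed one row at a time."""
    prev = list(range(len(b) + 1))
    for i, ca in enumerate(a, start=1):
        curr = [i]
        for j, cb in enumerate(b, start=1):
            curr.append(min(
                prev[j] + 1,               # deletion
                curr[j - 1] + 1,           # insertion
                prev[j - 1] + (ca != cb),  # substitution (0 if characters match)
            ))
        prev = curr
    return prev[-1]

# Hypothetical allowlist; an organisation would substitute its own trusted domains.
TRUSTED_DOMAINS = {"examplebank.in", "example-employer.in"}

def is_lookalike(sender: str, max_distance: int = 2) -> bool:
    """True if the sender's domain is close to, but not equal to, a trusted domain."""
    domain = sender.rsplit("@", 1)[-1].lower()
    if domain in TRUSTED_DOMAINS:
        return False
    return any(edit_distance(domain, t) <= max_distance for t in TRUSTED_DOMAINS)
```

For example, `is_lookalike("alerts@examp1ebank.in")` is flagged because swapping `l` for `1` is a single edit. Edit distance misses other tricks, such as look-alike Unicode characters or entirely new domains, so it complements rather than replaces sender authentication.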

Using malicious applications disguised as legitimate service apps, cybercriminals can intercept One-Time Passwords (OTPs), hijack user sessions, and steal money from user accounts within minutes.

These attacks succeed not only because of their technical sophistication but because they exploit human trust at scale, giving them an almost limitless reach into people's finances around the world through their computers and mobile devices.

2. Cloud and Supply Chain Vulnerabilities

As Indian organisations increasingly migrate to cloud infrastructure, cloud misconfigurations are emerging as a major cybersecurity risk. Weak identity controls, exposed storage, and improper access management can allow attackers to bypass traditional network defences. Alongside this, supply chain attacks are expected to intensify in 2026.
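
Exposed storage frequently comes down to a policy that grants access to everyone. The sketch below is a minimal check that assumes policies are written in the AWS S3 bucket-policy JSON format (other cloud providers use different schemas). It flags `Allow` statements whose principal is the wildcard `*`, meaning any caller.

```python
import json

def public_statements(policy_json: str) -> list:
    """Return Allow statements in an S3-style bucket policy that grant access to anyone."""
    policy = json.loads(policy_json)
    statements = policy.get("Statement", [])
    if isinstance(statements, dict):  # a single statement may be written as an object
        statements = [statements]
    flagged = []
    for stmt in statements:
        if stmt.get("Effect") != "Allow":
            continue
        principal = stmt.get("Principal")
        # Both "*" and {"AWS": "*"} mean every principal, authenticated or not.
        if principal == "*" or (
            isinstance(principal, dict) and principal.get("AWS") in ("*", ["*"])
        ):
            flagged.append(stmt)
    return flagged
```

The check ignores `Condition` blocks, which can narrow a wildcard grant. A flagged statement therefore calls for review rather than proving that data is exposed.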

In supply chain attacks, cybercriminals compromise a trusted software vendor or service provider to infiltrate multiple downstream organisations. Even entities with strong internal security can be affected through third-party dependencies. For India’s startup ecosystem, government digital platforms, and IT service providers, this presents a systemic risk. Strengthening vendor risk management and visibility across digital supply chains will be essential.
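
One basic control against tampered vendor deliverables is to pin the digest of each approved artifact and reject anything that does not match. The sketch below uses Python's standard `hashlib` and assumes the pinned SHA-256 value was obtained through a separate trusted channel. Hash pinning does not help if the vendor's own build pipeline was compromised before the digest was published, so it is one layer of vendor risk management, not a complete defence.

```python
import hashlib
import hmac

def sha256_of(path: str, chunk_size: int = 65536) -> str:
    """Stream a file through SHA-256 and return its hex digest."""
    digest = hashlib.sha256()
    with open(path, "rb") as fh:
        for chunk in iter(lambda: fh.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()

def verify_artifact(path: str, pinned_hex: str) -> bool:
    """Accept the file only if its digest matches the pinned value."""
    # compare_digest avoids timing differences in the comparison.
    return hmac.compare_digest(sha256_of(path), pinned_hex.lower())
```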

3. Threats to IoT and Critical Infrastructure

India's push for smart cities, digital utilities, and connected public services has greatly expanded its exposure through IoT and operational technology (OT) systems. However, many IoT devices still lack adequate security, such as strong authentication, encryption, and reliable update mechanisms. By 2026, attackers are expected to exploit these weaknesses far more than they do today.
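
The missing safeguards named above (authentication, encryption, and updates) can be turned into a simple audit over a device inventory. The sketch below uses a hypothetical `Device` record and an illustrative 180-day patch window. Neither comes from any standard, and a real audit would draw its data from asset-management or network-scanning tools.

```python
from dataclasses import dataclass
from datetime import date

@dataclass
class Device:
    name: str
    default_credentials: bool   # still using the factory username/password
    encrypted_transport: bool   # e.g. TLS on traffic to its cloud service
    last_firmware_update: date

def audit(device: Device, today: date, max_patch_age_days: int = 180) -> list:
    """Return findings for one device; an empty list means no issues were found."""
    findings = []
    if device.default_credentials:
        findings.append("default credentials")
    if not device.encrypted_transport:
        findings.append("unencrypted transport")
    if (today - device.last_firmware_update).days > max_patch_age_days:
        findings.append("stale firmware")
    return findings
```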

Cyberattacks on critical infrastructure such as energy, transportation, healthcare, and telecom systems have consequences that extend well beyond data loss. They directly disrupt essential services, can endanger public safety, and raise national security concerns. Securing critical infrastructure effectively therefore requires dedicated security solutions tailored to its operational needs, rather than conventional IT security alone.

4. Hidden File Vectors and Stealth Payload Delivery

SVG File Abuse in Stealth Attacks

Cybercriminals are continually searching for ways to bypass security filters, and hidden file vectors are emerging as a preferred tactic. One such method involves the abuse of SVG (Scalable Vector Graphics) files. Although commonly perceived as harmless image files, SVGs can contain embedded scripts capable of executing malicious actions.

By 2026, SVG-based attacks are expected to be used in phishing emails, cloud file sharing, and messaging platforms. Because these files often bypass traditional antivirus and email security systems, they provide an effective stealth delivery mechanism. Indian organisations will need to rethink assumptions about “safe” file formats and strengthen deep content inspection capabilities.
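
Deep content inspection can start by parsing an SVG as XML and looking for active content, rather than trusting the file extension. The sketch below uses the standard library's `xml.etree.ElementTree` and checks only for three things: `<script>` elements, `on*` event-handler attributes, and `javascript:` links. Real attacks use further tricks, such as `<foreignObject>` or encoded payloads, so this is illustrative rather than a complete filter. For untrusted input, a hardened parser such as `defusedxml` is preferable, because the Python documentation notes that `ElementTree` is not secure against maliciously constructed XML.

```python
import xml.etree.ElementTree as ET

def local_name(name: str) -> str:
    """Strip a namespace such as '{http://www.w3.org/2000/svg}' from a tag or attribute."""
    return name.rsplit("}", 1)[-1].lower()

def svg_active_content(svg_text: str) -> list:
    """Return descriptions of script-like content found in an SVG document."""
    findings = []
    root = ET.fromstring(svg_text)
    for elem in root.iter():
        if local_name(elem.tag) == "script":
            findings.append("<script> element")
        for attr, value in elem.attrib.items():
            name = local_name(attr)
            if name.startswith("on"):  # onload, onclick, ...
                findings.append(f"event handler {name}")
            elif name == "href" and value.strip().lower().startswith("javascript:"):
                findings.append("javascript: link")
    return findings
```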

5. Quantum-Era Cyber Risks and “Harvest Now, Decrypt Later” Attacks

Although practical quantum computers are still emerging, quantum-era cyber risks are already a present-day concern. Adversaries are believed to be intercepting and storing encrypted data now with the intention of decrypting it in the future once quantum capabilities mature—a strategy known as “harvest now, decrypt later.” This poses serious long-term confidentiality risks.
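
A common way to reason about this risk is Mosca's inequality. Let x be the number of years data must stay confidential, y the years needed to migrate to quantum-safe cryptography, and z the years until a cryptographically relevant quantum computer exists. If x + y > z, data encrypted and intercepted today is already at risk. The figures below are purely illustrative assumptions, since nobody knows z with confidence.

```python
def harvest_now_decrypt_later_risk(shelf_life_years: float,
                                   migration_years: float,
                                   years_to_quantum: float) -> bool:
    """Mosca's inequality: data is exposed if shelf life + migration time > time to quantum."""
    return shelf_life_years + migration_years > years_to_quantum

# Illustrative only: records that must stay confidential for 25 years,
# a 5-year migration, and an assumed 15 years until a capable quantum computer.
print(harvest_now_decrypt_later_risk(25, 5, 15))  # True
```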

Recognising this threat, the United States took early action during the Biden administration through National Security Memorandum 10, which directed federal agencies to prepare for the transition to quantum-resistant cryptography. For India, similar foresight is essential, as sensitive government communications, financial data, health records, and intellectual property could otherwise be exposed retrospectively. Preparing for quantum-safe cryptography will therefore become a strategic priority in the coming years.

6. AI Trust Manipulation and Model Exploitation

Poisoning the Well – Direct Attacks on AI Models

As artificial intelligence systems are increasingly used for decision-making—ranging from fraud detection and credit scoring to surveillance and cybersecurity—attackers are shifting focus from systems to models themselves. “Poisoning the well” refers to attacks that manipulate training data, feedback mechanisms, or input environments to distort AI outputs.
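
To show how manipulated training data distorts outputs, the toy sketch below trains a one-dimensional nearest-centroid classifier on hypothetical transaction amounts. It trains once on clean data and once after an attacker injects high-value samples mislabelled as legitimate. The example is deliberately simplified and does not model any real fraud-detection system.

```python
from statistics import mean

def train_centroids(samples):
    """samples: list of (amount, label) pairs -> {label: mean amount}."""
    by_label = {}
    for amount, label in samples:
        by_label.setdefault(label, []).append(amount)
    return {label: mean(values) for label, values in by_label.items()}

def classify(centroids, amount):
    """Assign the label whose centroid is nearest to the amount."""
    return min(centroids, key=lambda label: abs(amount - centroids[label]))

# Hypothetical transaction amounts.
clean = ([(a, "legit") for a in (100, 120, 90, 110)]
         + [(a, "fraud") for a in (900, 1000, 950, 1100)])
# The attacker slips high-value samples into the training set labelled "legit".
poisoned = clean + [(a, "legit") for a in (800, 850, 900)]

print(classify(train_centroids(clean), 700))     # fraud
print(classify(train_centroids(poisoned), 700))  # legit
```

Only three injected records shift the "legit" centroid from 105 to about 424, enough to let a suspicious 700-unit transaction through. No conventional security alert would fire, because nothing in the system itself was breached.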

In the context of India's rapidly growing digital ecosystem, compromised AI models can produce biased decisions, false security alerts, or the wrongful denial of legitimate services. The main danger of such attacks is that they may occur without triggering conventional security measures. Transparency, integrity checks, and continuous monitoring of AI systems will be key to building and maintaining stakeholder confidence in automated decision-making.

Recommendations

Despite the increasing sophistication of malicious cyber actors, India is entering this phase with a growing level of preparedness and institutional capacity. The country has strengthened its cyber security posture through dedicated mechanisms and relevant agencies such as the Indian Cyber Crime Coordination Centre, which play a central role in coordination, threat response, and capacity building. At the same time, sustained collaboration among government bodies, non-governmental organisations, technology companies, and academic institutions has expanded cyber security awareness, skill development, and research. These collective efforts have improved detection capabilities, response readiness, and public resilience, placing India in a stronger position to manage emerging cyber threats and adapt to the evolving digital environment.

Conclusion

By 2026, cyber threats to the digital ecosystem will be increasingly defined by their complexity, intelligence, and strategic intent. Cybercriminals are expected to use advanced attack methods, including AI-assisted social engineering and the exploitation of cloud and supply chain vulnerabilities. As these threats evolve, adversaries may also experiment with quantum computing techniques and the manipulation of AI models to create new ways of influencing and disrupting digital systems. In response, the focus of cybersecurity is shifting from merely preventing breaches to actively protecting and restoring digital trust. While technical controls remain essential, they must be complemented by strong cybersecurity governance, adherence to regulatory standards, and sustained user education. As India continues its digital transformation, this period presents a valuable opportunity to invest proactively in cybersecurity resilience, enabling the country to safeguard citizens, institutions, and national interests with confidence in an increasingly complex and dynamic digital future.

References

- https://www.seqrite.com/india-cyber-threat-report-2026/

- https://www.uscsinstitute.org/cybersecurity-insights/blog/ai-powered-phishing-detection-and-prevention-strategies-for-2026

- https://www.expresscomputer.in/guest-blogs/cloud-security-risks-that-should-guide-leadership-in-2026/130849/

- https://www.hakunamatatatech.com/our-resources/blog/top-iot-challenges

- https://csrc.nist.gov/csrc/media/Presentations/2024/u-s-government-s-transition-to-pqc/images-media/presman-govt-transition-pqc2024.pdf

- https://www.cyber.nj.gov/Home/Components/News/News/1721/214

Executive Summary

Amid the ongoing conflict between the US and Israel on one side and Iran on the other, a video of Indian Prime Minister Narendra Modi is being widely circulated on social media. In the clip, he is allegedly heard supporting Israel and calling Iran a “terrorist state.” The video also appears to show him speaking about the idea of “Akhand Bharat.” Many users are sharing this video as genuine. However, detailed research by CyberPeace found that the claim is false: the viral video is a deepfake created using AI technology.

Claim:

A Facebook page named “Pushpendra Kulshreshtha” shared the video on March 23, 2026, with a caption suggesting that PM Modi made strong remarks in support of Israel and against Iran.

Fact Check:

To verify the claim, we first conducted a keyword search to find any credible reports or official statements where PM Modi made such remarks. However, no reliable news reports or authentic videos supporting the claim were found. We then extracted keyframes from the viral video and performed a reverse image search using Google Lens. This led us to the original video posted on the X (formerly Twitter) handle of ANI on March 12, 2026.

The visuals, including PM Modi’s attire and the stage setup, matched the viral clip, indicating that the fake video was created from this original footage. However, in the authentic video, PM Modi did not make any statements about Iran, Israel, or “Akhand Bharat” as heard in the viral version. Instead, he is seen addressing the NXT Summit in Delhi, where he spoke about the global energy crisis arising from ongoing conflicts and highlighted the expansion of LPG and PNG facilities in India. Additionally, a customised keyword search led us to a press release issued by the Prime Minister's Office on his address at the summit, and the statement heard in the viral clip was not found there either.

Conclusion:

The viral video of PM Modi is a deepfake. He did not make any statement calling Iran a “terrorist state” or expressing support for Israel in the manner shown. The original video is from a summit held in Delhi and has been manipulated using AI to spread misleading claims.

Introduction

This tale, the Toothbrush Hack, straddles the ordinary and the sophisticated: an unassuming household item was said to have become a tool for committing cybercrime. Herein lies the account of how three million electric toothbrushes were reportedly turned into the unwitting infantry of a cyber skirmish, a Distributed Denial of Service (DDoS) assault that flirted with the thin line between the real and the outlandish.

In January, a story began circulating in Switzerland, first reported by the Aargauer Zeitung, a Swiss German-language daily newspaper. According to the report, a legion of cybercriminals with honed digital acumen had planted malware on some three million electric toothbrushes. These devices, mere slivers of plastic and circuitry, supposedly became agents of chaos, converging their requests upon the servers of an undisclosed Swiss firm, plunging its website into a blackout for several hours and wreaking economic damage calculated in seven-figure sums.

The Entire Incident

The Aargauer Zeitung article claimed that three million electric toothbrushes had been used in a distributed denial-of-service (DDoS) attack. According to the report, cybercriminals installed malware on the toothbrushes and used them to bombard a Swiss company's website with requests, taking the site offline and causing significant financial loss. However, cybersecurity experts questioned the veracity of the story, with some describing it as "total bollocks" and others pointing out that smart electric toothbrushes connect to smartphones and tablets via Bluetooth, making it impossible for them to launch DDoS attacks over the web. Fortinet clarified that the topic of toothbrushes being used for DDoS attacks was presented as an illustration of a given type of attack and that no IoT botnets have been observed targeting toothbrushes or similar embedded devices.

The Tech Dilemma - IoT Hack

Imagine the juxtaposition of this narrative against our common expectations of technology: 'This example, which could have been from a cyber thriller, did indeed occur,' asserted the narratives that wafted through the press and social media. The story radiated outward with urgency, painting the image of IoT devices turned into evil tools of digital unrest. It was disseminated with such velocity that face value became an accepted currency amid news cycles. And yet, skepticism took root in the fertile minds of those who dwell in the domains of cyber guardianship.

Several cybersecurity and IoT experts postulated that the information from Fortinet had been contorted by the wrench of misinterpretation. They and their ilk highlighted a critical flaw: smart electric toothbrushes are bound to their smartphone or tablet counterparts by the tethers of Bluetooth, not the internet, stripping them of any innate ability to conduct a DDoS or any other type of cyber attack directly.

With this unraveling of an incident fit for our cyber age, we are presented with a sobering reminder of the threat spectrum that burgeons as the tendrils of the Internet of Things (IoT) insinuate themselves into the fabric of everyday life. Innocuous devices, previously deemed immune to the internet's shadow, now stand revealed as potential conduits for cyber evil. The layers of impact are profound, touching the private spheres of individuals, the underpinning frameworks of national security, and the sinews of our economic realities. The viral incident itself, however, was misinformation.

IoT Weakness

IoT devices bear inherent weaknesses for twin reasons: security is an oft-overlooked element of their design, and there is a stark absence of any means to enact security measures on them. Ponder this problem: is there a pathway to adjust the security settings of an electric toothbrush? Or to install antivirus software within the cooling confines of a refrigerator? The answer points to an unsettling simplicity: you cannot.

How to Protect

Vigilance - What then might be the protocol to safeguard our increasingly digital space? It begins with vigilance, the cornerstone of digital self-defense. Enable automatic updates on all IoT devices so that new security patches are applied as soon as they become available.

Self-Awareness - Avoid the temptation of public USB charging stations, which, while offering electronic succor to your devices, could also stand as Trojan horses for digital pathogens. Be attuned to signs of unusual power depletion in your gadgets, for it may well serve as the harbinger of clandestine malware. Navigate the currents of public Wi-Fi with utmost care, as they are as fertile for data interception as they are convenient for your connectivity needs.

Use of Firewall - A firewall can prove a stalwart defense against internet interlopers. Your smart appliances, from the banality of a kitchen toaster to the novelty of an internet-enabled toilet, are far less likely to be compromised when shielded by this barrier. And let us not dismiss this notion with frivolity, for the prospect of a malware-compromised toilet, or any such smart device, leaves a most distasteful specter.
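
In practice, shielding smart appliances usually means a default-deny policy: each device may reach only the few destinations it genuinely needs, and everything else is blocked. The sketch below evaluates such an allowlist with Python's `ipaddress` module. The device names, networks (taken from documentation-reserved address ranges), and ports are hypothetical, and a real deployment would express the same rules in its router or firewall configuration.

```python
import ipaddress

# Hypothetical allowlist: device -> (destination network, port) pairs it may contact.
ALLOWLIST = {
    "smart-tv": [(ipaddress.ip_network("203.0.113.0/24"), 443)],
    "thermostat": [(ipaddress.ip_network("198.51.100.10/32"), 8883)],
}

def is_allowed(device: str, dest_ip: str, port: int) -> bool:
    """Default deny: permit traffic only if it matches an explicit allowlist entry."""
    addr = ipaddress.ip_address(dest_ip)
    return any(addr in net and port == allowed_port
               for net, allowed_port in ALLOWLIST.get(device, []))
```

Unknown devices get an empty rule list, so a newly connected gadget (a toothbrush, say) can reach nothing until someone deliberately allows it.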

Limit the use of IoT - Additionally, and this is conveyed with the gravity warranted by our current digital era, resist the seduction of IoT devices whose utility does not outweigh their inherent risks. A smart television may indeed be vital for the streaming aficionado amongst us, yet can we genuinely assert the need for a connected laundry machine, an iron, or indeed, a toothbrush? Here, prudence is a virtue; exercise it with judicious restraint.

Conclusion

As we step forward into an era where connectivity has shifted from a mere luxury to an omnipresent standard, we must adopt vigilance and digital hygiene practices with the same fervour as those for our physical well-being. Let the toothbrush hack not simply be a tale of caution, consigned to the annals of internet folklore, but a fable that imbues us with the recognition of our role in maintaining discipline in a realm where even the most benign objects might be mustered into service by a cyberspace adversary.

References

- https://www.bleepingcomputer.com/news/security/no-3-million-electric-toothbrushes-were-not-used-in-a-ddos-attack/

- https://www.zdnet.com/home-and-office/smart-home/3-million-smart-toothbrushes-were-not-used-in-a-ddos-attack-but-they-could-have-been/

- https://www.securityweek.com/3-million-toothbrushes-abused-for-ddos-attacks-real-or-not/