#FactCheck - Viral Claim About Nitish Kumar’s Resignation Over UGC Protests Is Misleading

Executive Summary

A news video is being widely circulated on social media with the claim that Bihar Chief Minister Nitish Kumar has resigned from his post in protest against the ongoing UGC-related controversy. Several users are sharing the clip while alleging that Kumar stepped down after opposing the issue. However, CyberPeace research has found the claim to be false. The research revealed that the video being shared is from 2022 and has no connection whatsoever with the UGC or any recent protests related to it. An old video has been misleadingly linked to a current issue to spread misinformation on social media.

Claim:

An Instagram user shared a video on January 26 claiming that Bihar Chief Minister Nitish Kumar had resigned. The post further alleged that the news was first aired on Republic channel and that Kumar had submitted his resignation to then-Governor Phagu Chauhan. The link to the post, its archived version, and screenshots can be seen below.

Fact Check:

To verify the claim, CyberPeace first conducted a keyword-based search on Google. No credible or established media organisation reported any such resignation, clearly indicating that the viral claim lacked authenticity.

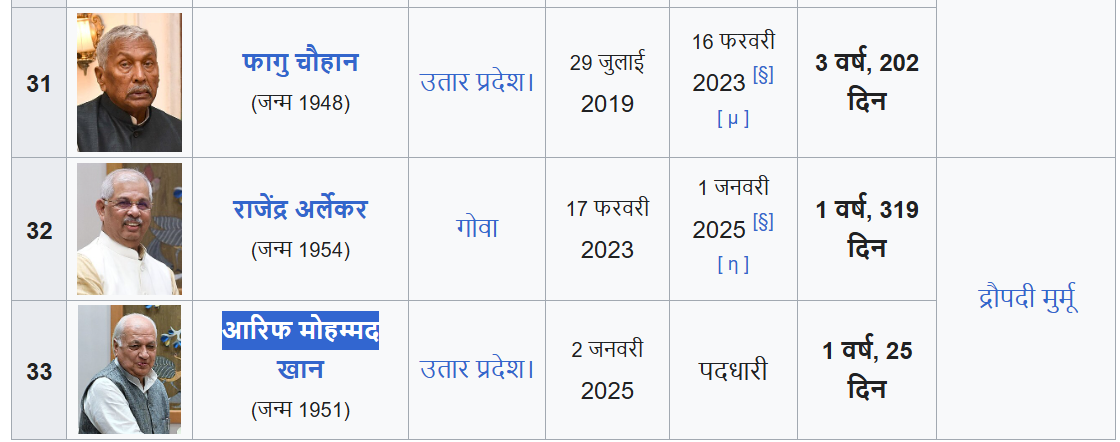

Further, the voiceover in the viral video states that Nitish Kumar handed over his resignation to Governor Phagu Chauhan. However, Phagu Chauhan ceased to be the Governor of Bihar in February 2023, and the current Governor of Bihar is Arif Mohammad Khan. The video therefore cannot be reporting a recent resignation, which makes the claim misleading.

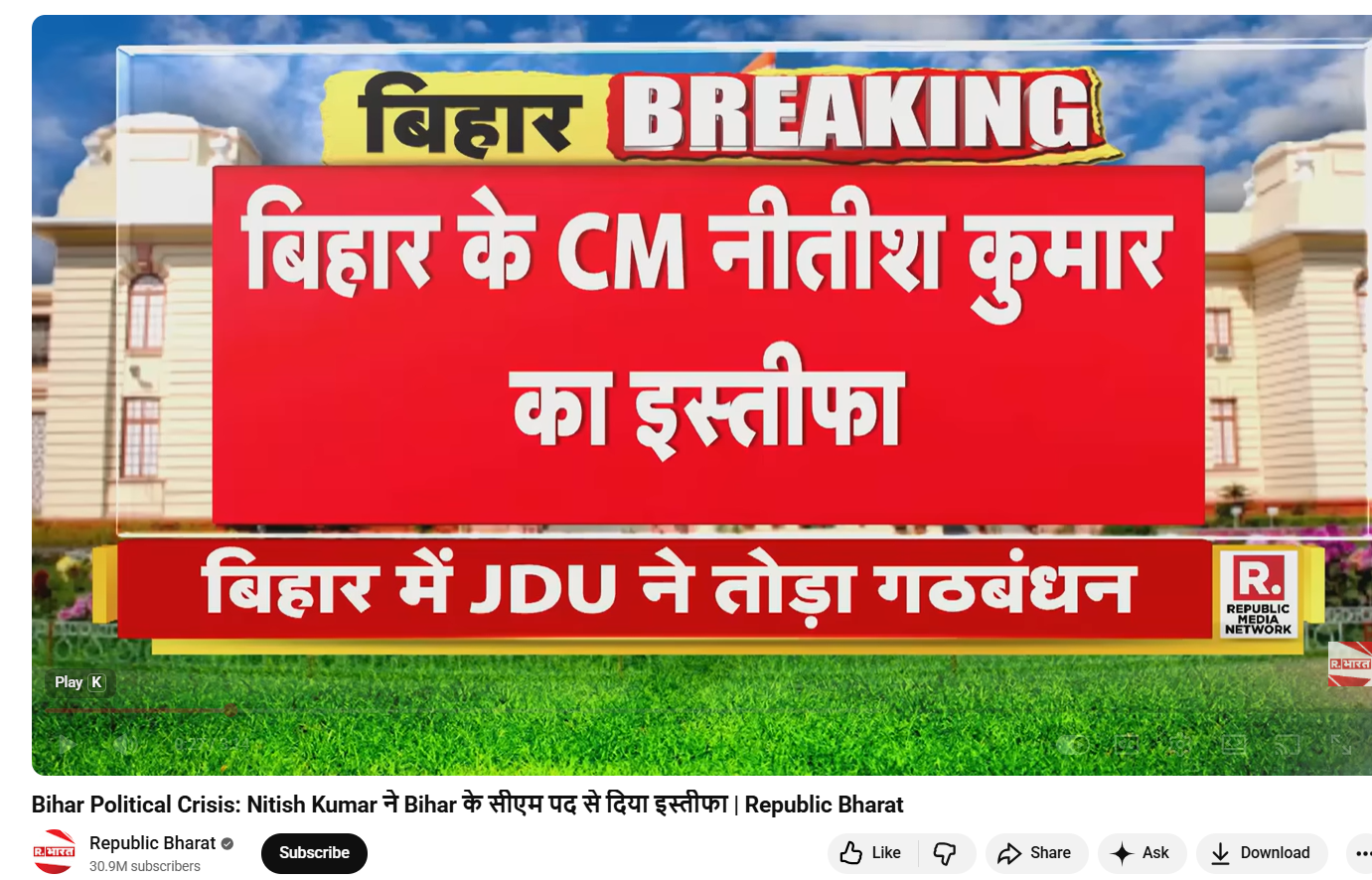

In the next step, keyframes from the viral video were extracted and reverse-searched using Google Lens. This led to the official YouTube channel of Republic Bharat, where the full version of the same video was found. The video was uploaded on August 9, 2022. This clearly establishes that the clip circulating on social media is not recent and is being shared out of context.
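Matching a keyframe against previously published footage can rely on perceptual fingerprints that survive re-encoding and brightness changes. The sketch below illustrates the idea with a simple "average hash". It is not Google Lens's method, which is not described here. It assumes frames have already been decoded into 2D lists of grayscale values (0-255). The function names and the 5-bit match threshold are hypothetical choices.

```python
from typing import List

Frame = List[List[int]]  # rows of grayscale pixel values, 0-255 (assumed pre-decoded)


def downsample(frame: Frame, size: int = 8) -> List[List[float]]:
    """Shrink a frame to size x size cells by averaging the pixels in each cell."""
    h, w = len(frame), len(frame[0])
    cells = []
    for i in range(size):
        r0 = i * h // size
        r1 = max((i + 1) * h // size, r0 + 1)  # at least one row per cell
        row = []
        for j in range(size):
            c0 = j * w // size
            c1 = max((j + 1) * w // size, c0 + 1)  # at least one column per cell
            block = [frame[r][c] for r in range(r0, r1) for c in range(c0, c1)]
            row.append(sum(block) / len(block))
        cells.append(row)
    return cells


def average_hash(frame: Frame, size: int = 8) -> int:
    """size*size-bit fingerprint: one bit per cell, set when the cell is brighter than the mean."""
    cells = [v for row in downsample(frame, size) for v in row]
    mean = sum(cells) / len(cells)
    bits = 0
    for v in cells:
        bits = (bits << 1) | (1 if v > mean else 0)
    return bits


def hamming(a: int, b: int) -> int:
    """Number of bits that differ between two hashes."""
    return bin(a ^ b).count("1")


def is_near_duplicate(a: Frame, b: Frame, max_bits: int = 5) -> bool:
    """Hypothetical rule: treat two frames as the same footage if their hashes differ in few bits."""
    return hamming(average_hash(a), average_hash(b)) <= max_bits
```

A uniformly brightened copy of a frame keeps the same hash, because every cell and the mean shift together. An inverted frame flips every bit. This robustness to simple edits is why such fingerprints suit old clips that have been re-uploaded.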

Conclusion

CyberPeace’s research confirms that the viral video claiming Nitish Kumar resigned over the UGC issue is false. The video dates back to 2022 and has no link to the current UGC controversy. An old political video has been deliberately circulated with a misleading narrative to create confusion on social media.

Related Blogs

Executive Summary:

Packet rate attacks have emerged as one of the most complex threats in network security, challenging traditional approaches to DDoS mitigation. In 2024, OVHcloud, Europe's largest cloud provider, headquartered in France, was hit by a record-breaking DDoS attack that reached 840 million packets per second (Mpps). Attacks exceeding 1 Tbps have been observed more regularly since 2023 and became almost a daily occurrence in 2024. The largest attack, on May 25, 2024, peaked at 2.5 Tbps, pointing toward even larger and more complex attacks of up to 5 Tbps. Many of these attacks originate from compromised equipment in the core network, notably MikroTik routers. Detecting and containing these threats is a real test for cloud security measures.

Modus Operandi of a Packet Rate Attack:

A packet rate attack, also known as a packet flood or network flood attack, is a type of volumetric DDoS attack in which an attacker sends a large volume of packets to a network device in a short period of time. Bandwidth-oriented attacks aim to saturate link capacity. Packet rate attacks instead target the processing work that devices must perform on every single packet.
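A back-of-the-envelope calculation shows why per-packet cost can matter more than raw bandwidth. This sketch assumes standard Ethernet framing: a 64-byte minimum frame plus 20 bytes of preamble, start-of-frame delimiter and inter-frame gap on the wire. The function names are illustrative.

```python
# Per-frame wire overhead on Ethernet: 7-byte preamble + 1-byte SFD + 12-byte inter-frame gap.
ETH_WIRE_OVERHEAD_BYTES = 20
MIN_FRAME_BYTES = 64  # minimum Ethernet frame size, including the 4-byte FCS


def wire_bps(pps: float, frame_bytes: int = MIN_FRAME_BYTES) -> float:
    """Bits per second on the wire needed to carry `pps` frames of `frame_bytes` each."""
    return pps * (frame_bytes + ETH_WIRE_OVERHEAD_BYTES) * 8


def line_rate_pps(link_bps: float, frame_bytes: int = MIN_FRAME_BYTES) -> float:
    """Maximum frames per second a link of `link_bps` can carry at a given frame size."""
    return link_bps / ((frame_bytes + ETH_WIRE_OVERHEAD_BYTES) * 8)


def per_packet_budget_ns(pps: float) -> float:
    """Average time available to handle each packet if one device processed the whole rate."""
    return 1e9 / pps


if __name__ == "__main__":
    print(f"840 Mpps of 64-byte frames ~ {wire_bps(840e6) / 1e9:.0f} Gbps on the wire")
    print(f"10 GbE line rate at 64 bytes ~ {line_rate_pps(10e9) / 1e6:.2f} Mpps")
    print(f"Per-packet budget at 840 Mpps ~ {per_packet_budget_ns(840e6):.2f} ns")
```

Under these assumptions, 840 Mpps of minimum-size frames needs only about 564 Gbps on the wire, far below the 2.5 Tbps bandwidth peak. Yet it would leave a single device roughly 1.2 ns per packet. That is why packet rate attacks stress routing and filtering hardware rather than link capacity.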

Key technical characteristics include:

- Packet Size: Usually small, often less than 100 bytes

- Protocol: Commonly UDP, although TCP SYN or other protocol floods are also used

- Rate: Exceeding 100 million packets per second (Mpps), with the record attack reaching 840 Mpps

- Source IP Diversity: Usually originating from a relatively small number of sources, each sending a large number of packets, which suggests the use of high-capacity devices such as compromised routers

Attack on the Network Stack

To understand the impact, let's examine how these attacks affect different layers of the network stack:

1. Layer 3 (Network Layer):

- Each packet requires a routing table lookup, so routers and L3 switches can suffer from high CPU usage.

- These forwarding resources can be saturated, degrading network communication for legitimate traffic.

2. Layer 4 (Transport Layer):

- Stateful devices (e.g. firewalls, load balancers) can exhaust their connection-tracking tables.

- TCP SYN floods can consume all available connection slots, so legitimate incoming connections cannot be established.

3. Layer 7 (Application Layer):

- Web servers and application firewalls may be overwhelmed by the large number of requests, slowing their responses.

- Session management systems can become saturated, degrading performance and the quality of service perceived by end users.
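The connection-slot exhaustion described under Layer 4 can be modelled with a toy SYN backlog: a bounded table of half-open connections that expire after a timeout. This is a simplified, hypothetical model, and the class and parameter names are illustrative. It is not how any particular operating system implements its backlog, and real stacks add defenses such as SYN cookies.

```python
from collections import OrderedDict
from typing import Hashable


class HalfOpenTable:
    """Toy SYN backlog: a bounded set of half-open connections with a timeout."""

    def __init__(self, capacity: int, timeout_s: float) -> None:
        self.capacity = capacity
        self.timeout_s = timeout_s
        # Maps a peer (e.g. a (source IP, source port) pair) to the time its SYN arrived.
        # Assumes `now` never decreases, so insertion order equals arrival order.
        self._pending: "OrderedDict[Hashable, float]" = OrderedDict()

    def _expire(self, now: float) -> None:
        # Drop half-open entries whose handshake was not completed in time, oldest first.
        while self._pending:
            _, arrived = next(iter(self._pending.items()))
            if now - arrived < self.timeout_s:
                break
            self._pending.popitem(last=False)

    def on_syn(self, peer: Hashable, now: float) -> bool:
        """Record a SYN; return False if the backlog is full and the SYN is dropped."""
        self._expire(now)
        if peer in self._pending:
            return True  # retransmitted SYN for a slot that is already reserved
        if len(self._pending) >= self.capacity:
            return False
        self._pending[peer] = now
        return True

    def on_ack(self, peer: Hashable) -> bool:
        """Complete the handshake and free the slot; False if no slot was pending."""
        return self._pending.pop(peer, None) is not None
```

In this model, an attacker who never completes the handshake fills every slot. A genuine client arriving during the flood is refused until the spoofed entries time out.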

Technical Analysis of Attack Vectors

Recent studies have identified several key vectors exploited in high-volume packet rate attacks:

1. MikroTik RouterOS Exploitation:

- Vulnerability: CVE-2023-4967

- Impact: Allows remote attackers to generate massive packet floods

- Technical detail: Exploits a flaw in the FastTrack implementation

2. DNS Amplification:

- Amplification factor: Up to 54x

- Technique: Exploits open DNS resolvers to generate large responses to small queries

- Challenge: Difficult to distinguish from legitimate DNS traffic

3. NTP Reflection:

- Command: monlist

- Amplification factor: Up to 556.9x

- Mitigation: Requires NTP server updates and network-level filtering
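The amplification factors listed above translate directly into how much reflected traffic a spoofed query stream can produce at the victim. This is a minimal sketch using the upper-bound factors quoted in the list, although real factors vary by server and query. The dictionary keys and function names are illustrative.

```python
# Upper-bound bandwidth amplification factors as quoted in the list above.
AMPLIFICATION = {"dns": 54.0, "ntp_monlist": 556.9}


def reflected_bps(attacker_bps: float, factor: float) -> float:
    """Traffic arriving at the victim when spoofed queries are reflected and amplified."""
    return attacker_bps * factor


def attacker_bps_needed(target_bps: float, factor: float) -> float:
    """Spoofed query volume an attacker would need to reach `target_bps` at the victim."""
    return target_bps / factor


if __name__ == "__main__":
    for vector, factor in AMPLIFICATION.items():
        print(f"{vector}: 1 Gbps of queries -> up to {reflected_bps(1e9, factor) / 1e9:.1f} Gbps")
```

At the quoted upper bound, 1 Gbps of spoofed monlist queries could in principle yield roughly 557 Gbps at the victim. These factors are ratios of bytes, so reflection tends to inflate bandwidth. The small-packet floods described earlier stress per-packet processing instead.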

Mitigation Strategies: A Technical Perspective

Combating packet rate attacks requires a multi-layered approach:

1. Hardware-based Mitigation:

- Implementation: FPGA-based packet processing

- Advantage: Can handle millions of packets per second with minimal latency

- Challenge: High cost and specialized programming requirements

2. Anycast Network Distribution:

- Technique: Distributing traffic across multiple global nodes

- Benefit: Dilutes attack traffic, preventing single-point failures

- Consideration: Requires careful BGP routing configuration

3. Stateless Packet Filtering:

- Method: Applying filtering rules without maintaining connection state

- Advantage: Lower computational overhead compared to stateful inspection

- Trade-off: Less granular control over traffic

4. Machine Learning-based Detection:

- Approach: Using ML models to identify attack patterns in real-time

- Key metrics: Packet size distribution, inter-arrival times, protocol anomalies

- Challenge: Requires continuous model training to adapt to new attack patterns
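Stateless packet filtering can be sketched as an ordered rule list that is evaluated against each packet on its own, without any connection table. The Packet and Rule types, and the example policy for a hypothetical web host that does not rely on external NTP or DNS responses, are illustrative assumptions rather than any product's configuration.

```python
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass(frozen=True)
class Packet:
    proto: str  # "tcp" or "udp"
    src_port: int
    dst_port: int


@dataclass(frozen=True)
class Rule:
    action: str                    # "accept" or "drop"
    proto: Optional[str] = None    # None matches any protocol
    src_port: Optional[int] = None # None matches any source port
    dst_port: Optional[int] = None # None matches any destination port

    def matches(self, pkt: Packet) -> bool:
        return ((self.proto is None or pkt.proto == self.proto)
                and (self.src_port is None or pkt.src_port == self.src_port)
                and (self.dst_port is None or pkt.dst_port == self.dst_port))


def classify(pkt: Packet, rules: Sequence[Rule], default: str = "accept") -> str:
    """Return the action of the first matching rule; no per-flow state is consulted."""
    for rule in rules:
        if rule.matches(pkt):
            return rule.action
    return default


# Hypothetical policy: drop traffic that looks like reflected NTP and DNS responses.
RULES = [
    Rule("drop", proto="udp", src_port=123),  # NTP reflection (e.g. monlist replies)
    Rule("drop", proto="udp", src_port=53),   # DNS reflection
]
```

This illustrates the trade-off named above. The check is cheap because every packet is judged in isolation. It is also coarse: the same rules would drop legitimate NTP or DNS replies if the host ever needed them.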

Performance Metrics and Benchmarking

When evaluating DDoS mitigation solutions for packet rate attacks, consider these key performance indicators:

- Packets per second (pps) or flows per second (fps) capacity

- Latency and jitter introduced by the mitigation system

- False positive rate of attack detection

- Time to mitigation: the delay between the start of an attack and the start of mitigation
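Two of these indicators, false positive rate and time to mitigation, can be computed from a labelled trace of measurement windows. The sketch below uses a fixed packets-per-second threshold as the detector. This is a hypothetical stand-in for whatever detection logic a real mitigation system uses, and it treats detection time as the start of mitigation. The window length and thresholds are illustrative.

```python
from typing import List, Optional, Sequence


def detect(pps_windows: Sequence[float], threshold_pps: float) -> List[bool]:
    """Flag each measurement window whose packet rate exceeds the threshold."""
    return [pps > threshold_pps for pps in pps_windows]


def false_positive_rate(flags: Sequence[bool], is_attack: Sequence[bool]) -> float:
    """Share of benign windows that were wrongly flagged as attacks."""
    benign_flags = [f for f, attack in zip(flags, is_attack) if not attack]
    return sum(benign_flags) / len(benign_flags) if benign_flags else 0.0


def time_to_mitigate(flags: Sequence[bool], is_attack: Sequence[bool],
                     window_s: float) -> Optional[float]:
    """Seconds from the first attack window to the first flagged window at or after it."""
    start = next((i for i, attack in enumerate(is_attack) if attack), None)
    if start is None:
        return None  # no attack in the trace
    first_flag = next((i for i in range(start, len(flags)) if flags[i]), None)
    return None if first_flag is None else (first_flag - start) * window_s
```

Lowering the threshold typically shortens time to mitigation but flags more benign spikes. Measuring both indicators on the same trace makes that trade-off visible.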

Way Forward

Packet rate attacks are constantly evolving, and defenses cannot stay the same. A likely next step is extending mitigation to edge computing and 5G networks, bringing it closer to the origins of attacks. Further, proactive AI-based analysis tools that predict such threats could help strengthen the protection of critical infrastructure in advance.

Staying one step ahead requires continuous research, new technologies, and collaboration among cybersecurity professionals, along with an ongoing effort to build secure defenses that safeguard these networks.

References:

https://blog.ovhcloud.com/the-rise-of-packet-rate-attacks-when-core-routers-turn-evil/

https://cybersecuritynews.com/record-breaking-ddos-attack-840-mpps/

https://www.cloudflare.com/learning/ddos/famous-ddos-attacks/

Introduction

Deepfake technology, whose name combines the words "deep learning" and "fake," uses highly developed artificial intelligence, specifically generative adversarial networks (GANs), to produce remarkably lifelike computer-generated content, including audio and video recordings. Because it can make false information credible, there are concerns about its misuse, including identity theft and the spread of fake information. Cybercriminals leverage AI tools and technologies for malicious activities and various cyber frauds. The misuse of advanced technologies such as AI, deepfakes, and voice cloning has given rise to new cyber threats.

India Among Top Targets of Deepfake Attacks

According to the 2023 Identity Fraud Report by Sumsub, a well-known digital identity verification company headquartered in the UK, India, Bangladesh, and Pakistan have become significant players in the Asia-Pacific identity fraud landscape, with India's fraud rate growing by 2.99% from 2022 to 2023. All three are among the ten countries most affected by deepfake technology. The report finds that deepfakes are being used in a significant number of cybercrimes and expects this trend to continue in the coming year. This highlights the need for greater cybersecurity awareness and safeguards as identity fraud becomes a growing concern in the region.

How Deepfakes Work

Deepfakes are a fascinating and worrisome phenomenon of the modern digital landscape. These realistic-looking but wholly artificial videos have become widespread in recent months and are now woven into the fabric of our digital lives. Their consequences are enormous, and their appeal is hard to resist.

Deep Learning Algorithms

Deepfake systems analyze large datasets, frequently pictures or videos of a target person, using deep learning techniques, especially Generative Adversarial Networks (GANs). By learning from the target's gestures, speech patterns, and facial expressions, these algorithms extract the information needed to mimic them. Generative models then create material that blends seamlessly into the target context. Misuse of this technology, including the dissemination of false information, is a concern. As deepfake capabilities improve, sophisticated detection techniques become ever more necessary to separate real content from manipulated content.

Generative Adversarial Networks

Deepfake technology is commonly based on GANs, which use a dual-network design. A GAN consists of a generator and a discriminator that take part in an ongoing cycle of competition. The generator aims to create fake material, such as realistic voice patterns or facial expressions, while the discriminator assesses how authentic that material is. The discriminator gradually becomes more perceptive, and the generator adapts to produce increasingly convincing content. This continuous cycle of creation and evaluation steadily improves the quality of the deepfakes produced.
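For reference, the generator-discriminator competition described above is usually formalised as the minimax objective from the original GAN paper (Goodfellow et al., 2014). Here, D(x) is the discriminator's estimated probability that x is real, G(z) is a sample the generator produces from random noise z, p_data is the distribution of real data, and p_z is the noise prior. This is the general GAN formulation, not the specific training setup of any particular deepfake tool.

```latex
\min_G \max_D V(D, G) =
  \mathbb{E}_{x \sim p_{\text{data}}}\left[\log D(x)\right]
  + \mathbb{E}_{z \sim p_z}\left[\log\left(1 - D(G(z))\right)\right]
```

The discriminator maximises V by scoring real samples close to 1 and generated ones close to 0. The generator minimises V by making D(G(z)) approach 1, which is the "ongoing cycle of competition" in mathematical form.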

Effect on Community

The extensive use of deepfake technology has serious ramifications for several industries. As the technology develops, immediate action is required to manage its effects appropriately and to promote its ethical use, including through strict laws and technological safeguards. Deepfakes can mimic prominent politicians' statements or videos, which makes them a serious issue: they can spread instability and make it difficult for the public to understand the true nature of politics. In the entertainment industry, deepfake technology can generate entirely new characters or bring stars back to life for posthumous roles. As fake content becomes harder to tell apart from authentic content, it also becomes easier for hackers to trick people and businesses.

Ongoing Deepfake Assaults In India

Deepfake videos continue to target popular celebrities, and Priyanka Chopra is the most recent victim of this unsettling trend. Priyanka's deepfake takes a different approach from other cases involving actresses such as Rashmika Mandanna, Katrina Kaif, Kajol, and Alia Bhatt. Rather than placing her face in controversial situations, the misleading video keeps her appearance unchanged but alters her voice, replacing real interview quotes with fabricated promotional lines. The deceptive video shows Priyanka promoting a product and talking about her annual income, highlighting the worrying advance of deepfake technology and its possible effects on prominent personalities.

Actions Considered by Authorities

A public interest litigation (PIL) was filed in the Delhi High Court seeking to block access to websites that produce deepfakes. The petitioner's lawyer argued that the government should at the very least establish guidelines to hold individuals accountable for misusing deepfake and AI technology. He also proposed that websites be required to label AI-generated content as such and be prevented from producing illegal content. A division bench noted how complicated the problem is and asked the Centre to arrive at a balanced solution without infringing the right to freedom of speech and expression on the internet.

Information Technology Minister Ashwini Vaishnaw stated that the government would introduce new laws and guidelines to curb the dissemination of deepfake content. He chaired a meeting with social media companies to discuss the problem of deepfakes. "We will begin drafting regulation immediately, and soon, we are going to have a fresh set of regulations for deepfakes. This might come in the way of amending the current framework or ushering in new rules, or a new law," he stated.

Prevention and Detection Techniques

To combat the growing threat posed by the misuse of deepfake technology, individuals and institutions should prioritize several habits:

- developing critical thinking skills;
- carefully examining visual and auditory cues for discrepancies;
- using tools such as reverse image searches;
- keeping up with the latest deepfake trends;
- rigorously fact-checking content against reputable media sources.

Important actions to improve resilience against deepfake threats include putting strong security policies in place, integrating cutting-edge deepfake detection technologies, supporting the development of ethical AI, and encouraging open communication and cooperation. By combining these tactics and adapting to the constantly changing terrain, we can all work together to manage the problems presented by deepfake technology effectively and mindfully.

Conclusion

Advanced AI-powered deepfake technology produces extraordinarily lifelike computer-generated content, raising both creative and ethical questions. Its misuse presents major challenges such as identity theft and the spread of misleading information, as shown by cases in India like the recent deepfake video involving Priyanka Chopra. Countering this danger requires developing critical thinking skills, using detection strategies such as analyzing audio quality and facial expressions, and keeping up with current trends. Protecting against the negative effects of deepfake technology calls for a thorough strategy. That strategy should combine fact-checking, preventive tactics, strong security policies, deepfake detection technologies, ethical AI development, and awareness-raising. By combining these tactics and adapting to the constantly changing terrain, we can work together to create a truly cyber-safe environment for netizens.

References:

- https://yourstory.com/2023/11/unveiling-deepfake-technology-impact

- https://www.indiatoday.in/movies/celebrities/story/deepfake-alert-priyanka-chopra-falls-prey-after-rashmika-mandanna-katrina-kaif-and-alia-bhatt-2472293-2023-12-05

- https://www.csoonline.com/article/1251094/deepfakes-emerge-as-a-top-security-threat-ahead-of-the-2024-us-election.html

- https://timesofindia.indiatimes.com/city/delhi/hc-unwilling-to-step-in-to-curb-deepfakes-delhi-high-court/articleshow/105739942.cms

- https://www.indiatoday.in/india/story/india-among-top-targets-of-deepfake-identity-fraud-2472241-2023-12-05

- https://sumsub.com/fraud-report-2023/

Introduction:

This Op-ed sheds light on the perspectives of the US and China regarding cyber espionage. Additionally, it seeks to analyze China's response to the US accusation regarding cyber espionage.

What is Cyber espionage?

Cyber espionage or cyber spying is the act of obtaining personal, sensitive, or proprietary information from individuals without their knowledge or consent. In an increasingly transparent and technological society, the ability to control the private information an individual reveals on the Internet and the ability of others to access that information are a growing concern. This includes storage and retrieval of e-mail by third parties, social media, search engines, data mining, GPS tracking, the explosion of smartphone usage, and many other technology considerations. In the age of big data, there is a growing concern for privacy issues surrounding the storage and misuse of personal data and non-consensual mining of private information by companies, criminals, and governments.

Cyber espionage aims at economic, political, and technological gain. For example, the Stuxnet cyber-attack (2010) by the US and its ally Israel targeted Iran's nuclear facilities. Three espionage tools connected to Stuxnet were later discovered: Gauss, Flame, and Duqu. They were used to steal data such as passwords and screenshots and to abuse functions such as Bluetooth and Skype.

Cyber espionage is one of the most significant and intriguing international challenges. Many nations, including the US and China, have developed their own definitions and have long disagreed over cyber espionage norms.

The US Perspective

First, in 2009, US officials (along with those of allied countries) stated that cyber espionage was acceptable if it safeguarded national security, although they condemned economically motivated cyber espionage. In 2013, the Director of National Intelligence likewise stated that US foreign intelligence capabilities are not used to steal foreign companies' trade secrets for the benefit of US firms. This stance is consistent with the Economic Espionage Act (EEA) of 1996, particularly Section 1831, which prohibits economic espionage. This includes the theft of a trade secret that "will benefit any foreign government, foreign instrumentality, or foreign agent."

Second, the US advocates for cybersecurity market standards and strongly opposes transferring personal data extracted from the US Office of Personnel Management (OPM) to cybercrime markets. Furthermore, China has been reported to sell OPM data on illicit markets. It became a grave concern for the US government when the Chinese government managed to acquire sensitive details of 22.1 million US government workers through cyber intrusions in 2014.

Third, cyber espionage is considered acceptable unless it is used for doxing, that is, disclosing personal information about someone online without their consent and using it as a tool for political influence operations. Western academics and scholars have sought to distinguish doxing from whistleblowing. They argue that whistleblowing, exemplified by the Snowden leaks and the Vault 7 disclosures, serves the interests of US citizens. Because the US regards itself as an open society, certain disclosures are not merely encouraged but required by mandate.

Fourth, the US argues that there should be no cyber espionage against critical infrastructure during peacetime. The US identifies 16 critical infrastructure sectors, including chemical, nuclear, energy, defence, food, and water. These sectors are considered essential to the US, and any disruption or harm to them would affect national security, public health, and economic security.

The US concern regarding China’s cyber espionage

According to James Lewis, a senior vice president at the Center for Strategic and International Studies, the US loses between $20 billion and $30 billion annually to China's cyber espionage. The 2018 U.S. Trade Representative (USTR) Section 301 report highlighted instances in which the Chinese government and executives of Chinese companies carried out clandestine cyber intrusions to obtain commercially valuable information from U.S. businesses. One example is a 2018 case in which officials from China's Ministry of State Security stole trade secrets from GE Aviation and other aerospace companies.

China's response to the US accusations of cyber espionage

China's perspective on cyber espionage is outlined in its 2014 anti-espionage law, which was revised in 2023. According to Article 1, the law is formulated to prevent, halt, and punish espionage in order to maintain national security. Article 4 defines acts of espionage and does not differentiate between state-sponsored cyber espionage for economic purposes and state-sponsored cyber espionage for national security purposes. In other words, unlike the US, China does not draw a clear line between government-to-government hacking (spying) and government-to-corporate hacking. This distinction is less apparent in China because of its large state-owned enterprise (SOE) sector. In the US, by contrast, military spying is considered part of the national interest, while corporate spying is considered a crime.

China asserts that the US has established cyber norms concerning cyber espionage to normalize public attribution as acceptable conduct. According to China, the US does this by targeting China for cyber operations, imposing sanctions on accused Chinese individuals, and making political accusations, such as blaming China and Russia for meddling in US elections. Yet Washington has never taken responsibility for the infamous Flame and Stuxnet cyber operations. These were widely recognized as part of a broader joint US-Israeli initiative known as Operation Olympic Games. China also points out that the US has led surveillance activities against China, Russia, German Chancellor Angela Merkel, the United Nations (UN) Secretary-General, and several French presidents. It cites surveillance programs such as Irritant Horn, Stellar Wind, Bvp47, the Hive, and PRISM as tools the US uses to monitor both allies and adversaries to maintain global hegemony.

China has urged the US to end what it calls a smear campaign over the Volt Typhoon cyber-espionage allegations. It cites a report titled “Volt Typhoon: A Conspiratorial Swindling Campaign Targets with U.S. Congress and Taxpayers Conducted by U.S. Intelligence Community”, published on 15 April by China's National Computer Virus Emergency Response Centre and the 360 Digital Security Group. According to the report, 'Volt Typhoon' is a ransomware cybercriminal group that self-identifies as 'Dark Power' and is not affiliated with any state or region. The report claims that multiple US cybersecurity authorities collaborated to fabricate the story to secure larger budgets from Congress, while Microsoft and other US cybersecurity firms sought bigger contracts from those authorities. In the report's framing, “Volt Typhoon” is a conspiratorial swindling campaign with two objectives: amplifying the "China threat theory" and cheating money from the U.S. Congress and taxpayers.

Beijing has condemned US claims of cyber espionage as lacking solid evidence. China also accuses the US of economic espionage, citing a European Parliament report that the National Security Agency (NSA) helped Boeing beat Airbus to a multi-billion-dollar contract. Furthermore, Brazilian President Dilma Rousseff accused US authorities of spying on the state-owned oil company “Petrobras” for economic reasons.

Conclusion

In 2015, the US and China marked a milestone when Presidents Xi Jinping and Barack Obama reached an agreement. Both committed that neither country's government would conduct or knowingly support cyber-enabled theft of trade secrets, intellectual property, or other confidential business information to give competitive advantages to firms or commercial sectors. However, in 2024 the China Cybersecurity Industry Alliance (CCIA) published a report titled 'US Threats and Sabotage to the Security and Development of Global Cyberspace'. It highlighted escalating US cyber-attacks and espionage against China and other nations. Meanwhile, the volume and sophistication of Chinese hacking have increased considerably since 2016. According to a survey by the Center for Strategic and International Studies, 69% of the 224 reported incidents of Chinese espionage directed at the US since 2000 occurred after Xi took office. China and the US must therefore address cybersecurity issues through dialogue and cooperation, using bilateral and multilateral agreements.