#FactCheck - Video Showing Sadhus in Ice Is Artificially Generated

Executive Summary

A video showing a group of Hindu ascetics (sadhus) allegedly performing intense penance while their bodies appear to be covered in ice is being widely shared on social media. Users are circulating the video as real and claiming that it represents an ancient tradition of Sanatan Dharma. CyberPeace research found the viral claim to be false. The research revealed that the video circulating on social media is not real but has been generated using artificial intelligence (AI).

Claim

On social media platform Facebook, a user shared the viral video on January 16, 2026. The video shows several ascetics engaged in penance, with their bodies seemingly covered in ice. Users shared the video while claiming that it depicts an authentic spiritual practice rooted in Sanatan Dharma.

Links to the post, archive link, and screenshots can be seen below.

Fact Check:

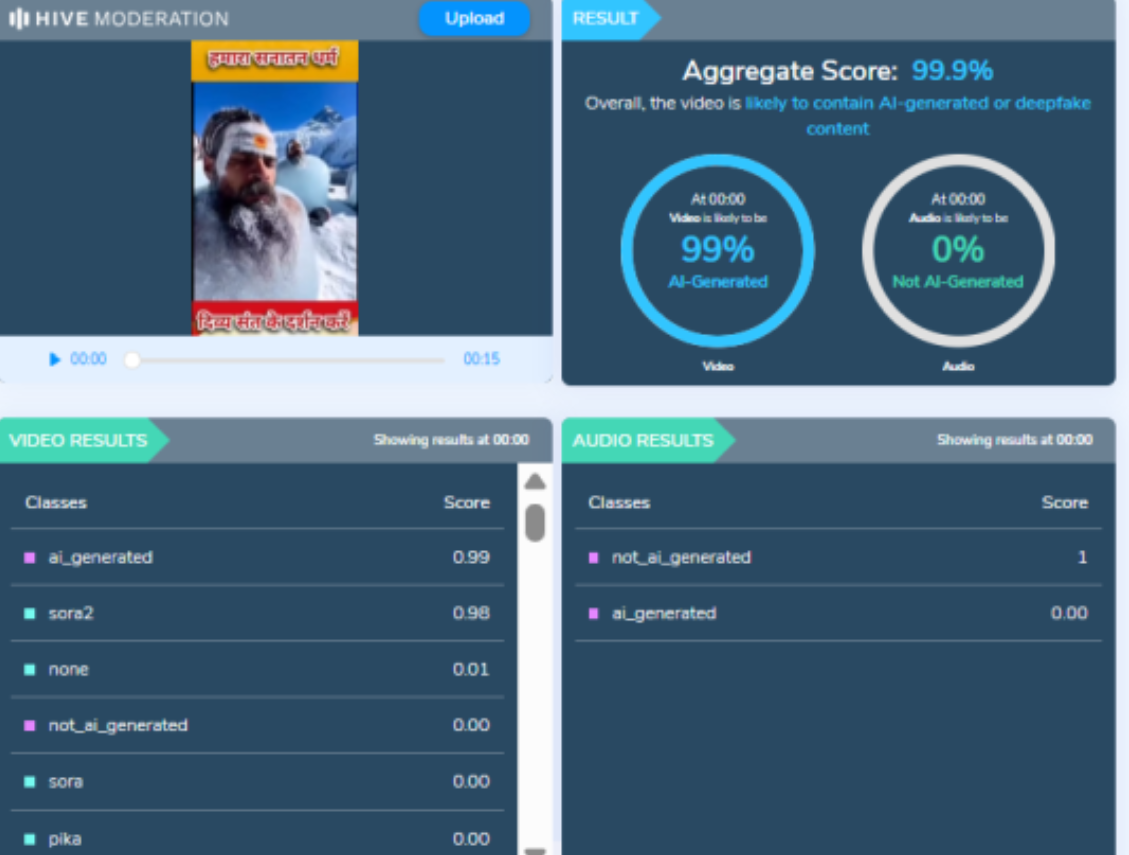

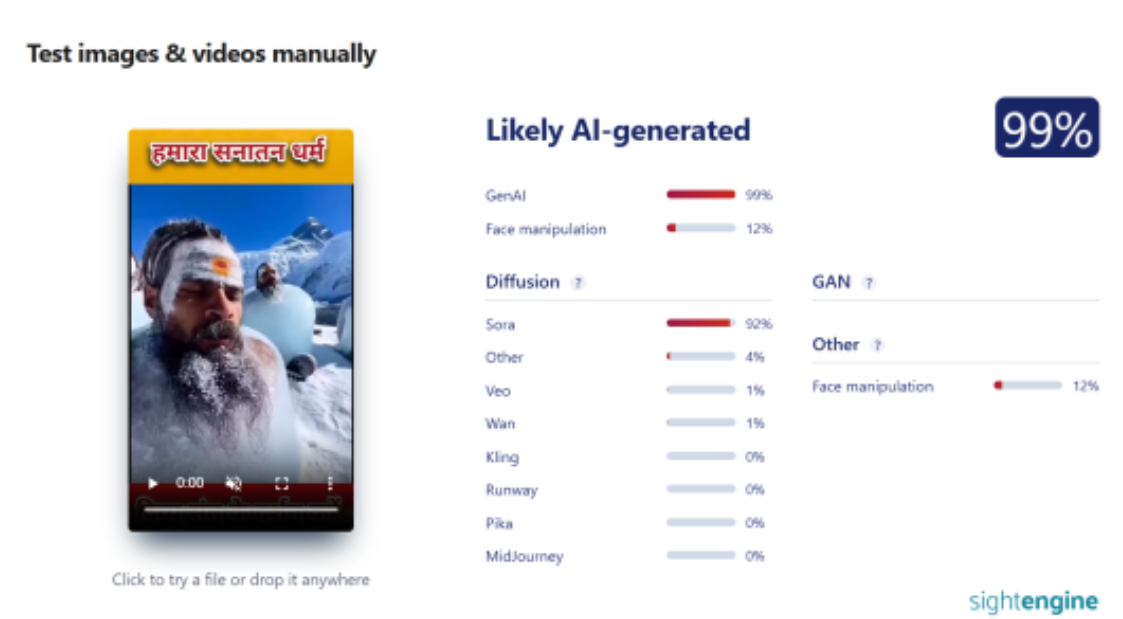

To verify the authenticity of the viral claim, CyberPeace searched relevant keywords on Google. However, no credible or reliable media reports supporting the claim were found. A close examination of the viral video raised suspicion that it may have been AI-generated. To verify this, the video was analysed using the AI detection tool Hive Moderation. According to the results, the video was found to be 99 percent AI-generated.

In the next step of the research, the same video was analysed using another AI detection tool, Sightengine. The results again indicated that the video was 99 percent AI-generated.

Conclusion

CyberPeace concludes that the video circulating on social media is not real. The viral video showing ascetics covered in ice was generated using artificial intelligence and does not depict an actual religious or spiritual practice.

Related Blogs

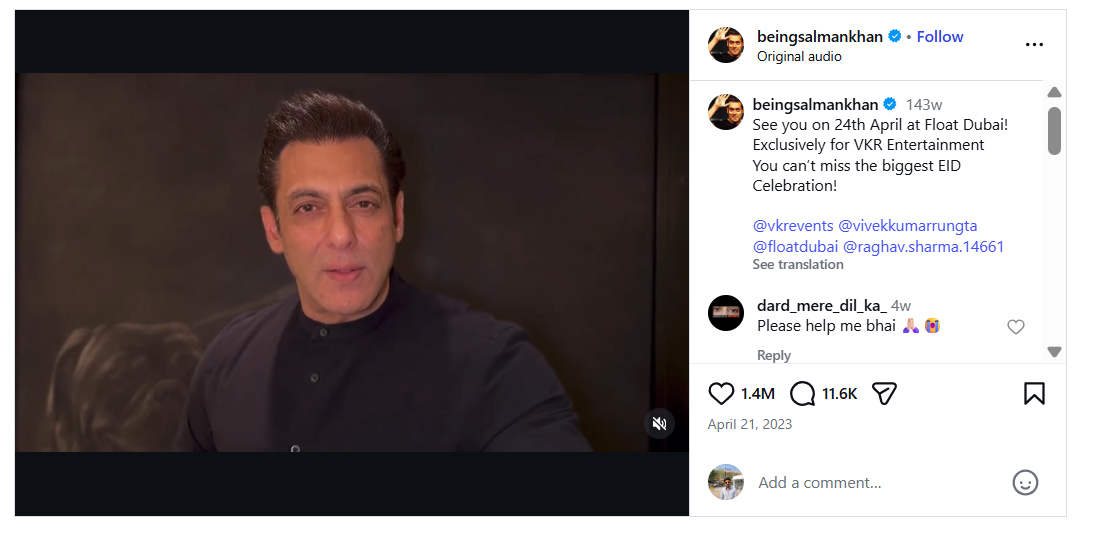

A video of Bollywood actor Salman Khan is being widely circulated on social media, in which he can allegedly be heard saying that he will soon join Asaduddin Owaisi’s party, the All India Majlis-e-Ittehadul Muslimeen (AIMIM). Along with the video, a purported image of Salman Khan with Asaduddin Owaisi is also being shared. Social media users are claiming that Salman Khan is set to join the AIMIM party.

CyberPeace research found the viral claim to be false. Our research revealed that Salman Khan has not made any such statement, and that both the viral video and the accompanying image are AI-generated.

Claim

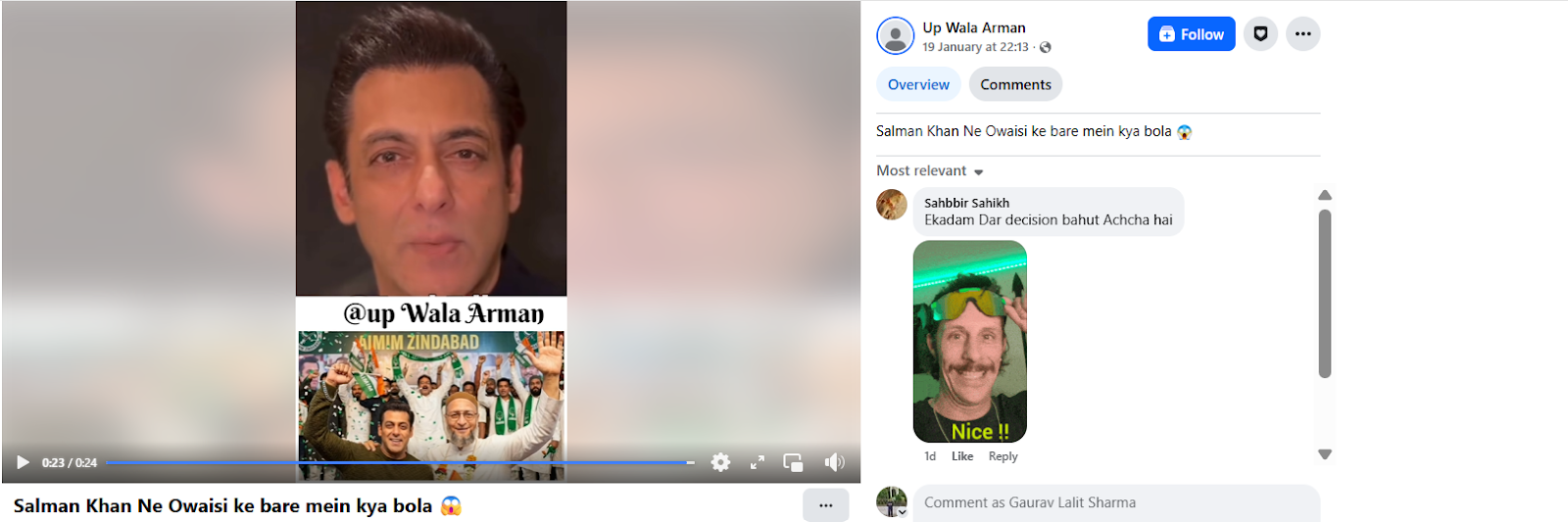

Social media users claim that Salman Khan has announced his decision to join AIMIM. On 19 January 2026, a Facebook user shared the viral video with the caption, “What did Salman say about Owaisi?” In the video, Salman Khan can allegedly be heard saying that he is going to join Owaisi’s party. (The link to the post, its archived version, and screenshots are available.)

Fact Check:

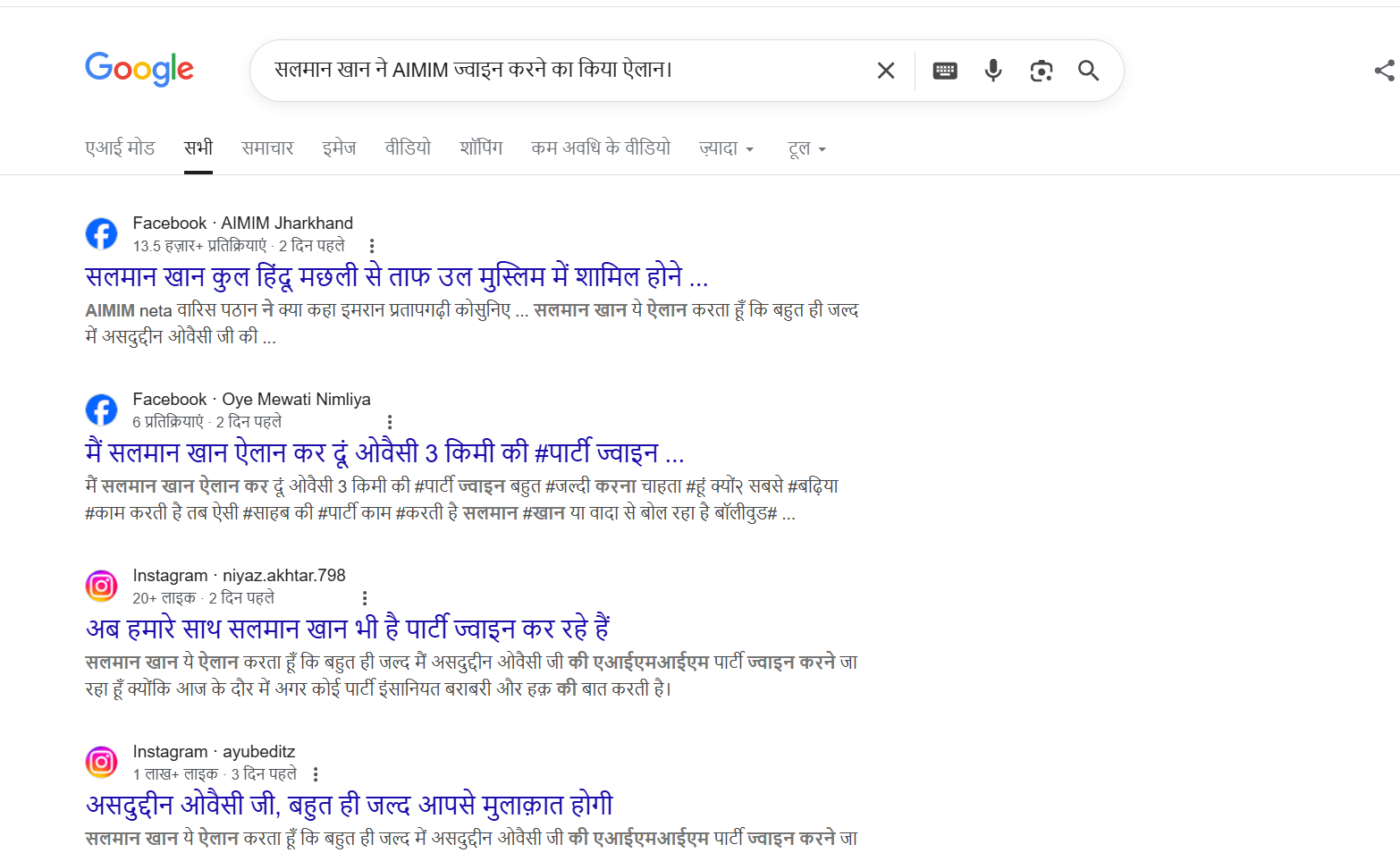

To verify the claim, we first searched Google using relevant keywords. However, no credible or reliable media reports were found supporting the claim that Salman Khan is joining AIMIM.

In the next step of verification, we extracted key frames from the viral video and conducted a reverse image search using Google Lens. This led us to a video posted on Salman Khan’s official Instagram account on 21 April 2023. In the original video, Salman Khan is seen talking about an event scheduled to take place in Dubai. A careful review of the full video confirmed that no statement related to AIMIM or Asaduddin Owaisi is made.

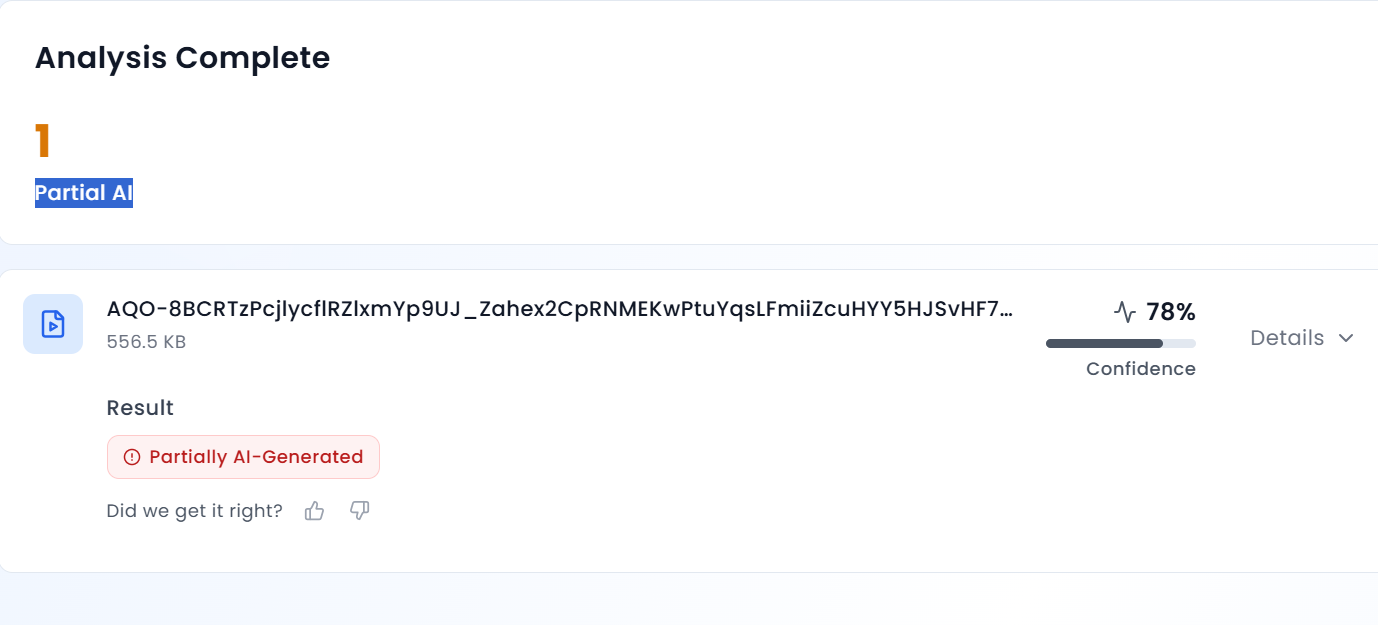

Further analysis of the viral clip revealed that Salman Khan’s voice sounds unnatural and robotic. To verify this, we scanned the video using AURGIN AI, an AI-generated content detection tool. According to the tool’s analysis, the viral video was generated using artificial intelligence.

Conclusion

Salman Khan has not announced that he is joining the AIMIM party. The viral video and the image circulating on social media are AI-generated and manipulated.


Introduction

India's National Commission for Protection of Child Rights (NCPCR) is set to approach the Ministry of Electronics and Information Technology (MeitY) to recommend mandating a KYC-based system for verifying children's age under the Digital Personal Data Protection (DPDP) Act. NCPCR took the decision to send its recommendations to MeitY in a closed-door meeting held with social media entities on August 13, where it emphasised proposing a KYC-based age verification mechanism. By way of background, the Digital Personal Data Protection Act, 2023 defines a child as someone below the age of 18, and Section 9 mandates that verifiable parental consent be obtained before such a child's personal data is processed.

Requirement of Verifiable Consent Under Section 9 of DPDP Act

Regarding the processing of children's personal data, Section 9 of the DPDP Act, 2023, provides that for children below 18 years of age, consent from parents/legal guardians is required. The Data Fiduciary shall, before processing any personal data of a child or a person with a disability who has a lawful guardian, obtain verifiable consent from the parent or lawful guardian. Additionally, behavioural monitoring or targeted advertising directed at children is prohibited.

Ongoing Debate on Method to Obtain Verifiable Consent

Section 9 of the DPDP Act gives parents or lawful guardians more control over their children's data and privacy, and it empowers them to make decisions about how to manage their children's online activities/permissions. However, obtaining such verifiable consent from the parent or legal guardian presents a quandary. It was expected that the upcoming 'DPDP rules,' which have yet to be notified by the Central Government, would shed light on the procedure of obtaining such verifiable consent from a parent or lawful guardian.

However, in the meeting held on 18 July 2024 between MeitY and social media companies to discuss the upcoming Digital Personal Data Protection Rules (DPDP Rules), MeitY stated that it may not intend to prescribe a ‘specific mechanism’ for Data Fiduciaries to verify parental consent for minors using digital services. MeitY instead emphasised the obligation placed on the Data Fiduciary under Section 8(4) of the DPDP Act to implement “appropriate technical and organisational measures” to ensure effective observance of the provisions of the Act.

In a recent update, MeitY held a review meeting on DPDP rules, where they focused on a method for determining children's ages. It was reported that the ministry is making a few more revisions before releasing the guidelines for public input.

CyberPeace Policy Outlook

CyberPeace, in its policy recommendations paper published last month (available here), also advised obtaining verifiable parental consent through methods such as government-issued ID, integration of parental consent at ‘entry points’ like app stores, and consent forms. It further suggested drawing on foreign laws such as California's privacy law and COPPA, and developing child-friendly SIMs for enhanced child privacy.

CyberPeace in its policy paper also emphasised that, whichever method is chosen to obtain verifiable consent, platforms must carry out age verification without compromising user privacy. Striking that balance is a question of both technological capability and ethical consideration.

The DPDP Act is a brand new framework for protecting digital personal data. It places certain obligations on Data Fiduciaries and grants certain rights to Data Principals. The upcoming ‘DPDP Rules’, which are expected to be notified soon, will define the detailed procedure for implementing the provisions of the Act. MeitY is refining the DPDP Rules before they come out for public consultation. NCPCR's approach is aimed at ensuring child safety in this digital era. We hope that MeitY comes up with a sound mechanism for obtaining verifiable consent from parents/lawful guardians after giving due consideration to the recommendations put forth by various stakeholders, expert organisations and concerned authorities such as NCPCR.

References

- https://www.moneycontrol.com/technology/dpdp-rules-ncpcr-to-recommend-meity-to-bring-in-kyc-based-age-verification-for-children-article-12801563.html

- https://pune.news/government/ncpcr-pushes-for-kyc-based-age-verification-in-digital-data-protection-a-new-era-for-child-safety-215989/#:~:text=During%20this%20meeting%2C%20NCPCR%20issued,consent%20before%20processing%20their%20data

- https://www.hindustantimes.com/india-news/ncpcr-likely-to-seek-clause-for-parents-consent-under-data-protection-rules-101724180521788.html

- https://www.drishtiias.com/daily-updates/daily-news-analysis/dpdp-act-2023-and-the-isssue-of-parental-consent

Introduction

The Indian Cyber Crime Coordination Centre (I4C) was established by the Ministry of Home Affairs (MHA) to provide a framework for law enforcement agencies (LEAs) to deal with cybercrime in a coordinated and comprehensive manner. The Ministry approved the scheme for its establishment in October 2018. I4C is actively working on initiatives to combat emerging threats in cyberspace, and it has become a strong pillar of India’s cybersecurity and cybercrime prevention. The ‘National Cyber Crime Reporting Portal’, equipped with the 24x7 helpline number 1930, is one of the key components of I4C.

On 10 September 2024, I4C celebrated its foundation day for the first time at Vigyan Bhawan, New Delhi. This celebration marked a major milestone in India’s efforts against cybercrimes and in enhancing its cybersecurity infrastructure. Union Home Minister and Minister of Cooperation, Shri Amit Shah, launched key initiatives aimed at strengthening the country’s cybersecurity landscape.

Launch of Key Initiatives to Strengthen Cybersecurity

- Cyber Fraud Mitigation Centre (CFMC): As a product of Prime Minister Shri Narendra Modi’s vision, the Cyber Fraud Mitigation Centre (CFMC) was set up to bring together banks, financial institutions, telecom companies, Internet Service Providers, and law enforcement agencies on a single platform to tackle online financial crimes efficiently. This integrated approach is expected to streamline operations and reduce the time required to track and neutralise cyber fraud.

- Cyber Commando: The Cyber Commandos Program is an initiative in which a specialised wing of trained Cyber Commandos will be established in states, Union Territories, and Central Police Organizations. These commandos will work to secure the nation’s digital space and counter rising cyber threats. They will form the first line of defence in safeguarding India from the growing cyber threats.

- Samanvay Platform: The Samanvay platform is a web-based Joint Cybercrime Investigation Facility System that was introduced as a one-stop data repository for cybercrime. It facilitates cybercrime mapping, data analytics, and cooperation among law enforcement agencies across the country. This will play a pivotal role in fostering collaborations in combating cybercrimes. Mr. Shah recognised the Samanvay platform as a crucial step in fostering data sharing and collaboration. He called for a shift from the “need to know” principle to a “duty to share” mindset in dealing with cyber threats. The Samanvay platform will serve as India’s first shared data repository, significantly enhancing the country’s cybercrime response.

- Suspect Registry: The Suspect Registry Portal is a national-level platform that has been designed to track cybercriminals. The registry will be connected to the National Cybercrime Reporting Portal (NCRP) and aims to help banks, financial intermediaries, and law enforcement agencies strengthen fraud risk management. The initiative is expected to improve the real-time tracking of cyber suspects, preventing repeat offences and improving fraud detection mechanisms.

Rising Digitalization: Prioritizing Cybersecurity

The number of internet users in India has grown from 25 crores in 2014 to 95 crores in 2024, accompanied by a 78-fold increase in data consumption. This growth has brought a corresponding rise in cybersecurity challenges. With the rise of digital transactions through Jan Dhan accounts, Rupay debit cards, and UPI systems, Shri Shah underscored the growing threat of digital fraud. He emphasised the need to protect personal data, prevent online harassment, and counter misinformation, fake news, and child abuse in the digital space.

The three new criminal laws, the Bharatiya Nyaya Sanhita (BNS), Bharatiya Nagrik Suraksha Sanhita (BNSS), and Bharatiya Sakshya Adhiniyam (BSA), which aim to strengthen India’s legal framework for cybercrime prevention, were also referred to in the address by the Home Minister. These laws incorporate tech-driven solutions that will ensure investigations are conducted scientifically and effectively.

Mr. Shah emphasised popularising the 1930 Cyber Crime Helpline. Additionally, he noted that I4C has issued over 600 advisories, blocked numerous websites and social media pages operated by cybercriminals, and established a National Cyber Forensic Laboratory in Delhi. Over 1,100 officers have already received cyber forensics training under the I4C umbrella.

The formation of Joint Cyber Coordination Teams in cybercrime hotspots such as Mewat, Jamtara, Ahmedabad, Hyderabad, Chandigarh, Visakhapatnam and Guwahati was highlighted as a coordinated response to regional cybercrime challenges.

Conclusion

With the launch of initiatives like the Cyber Fraud Mitigation Centre, the Samanvay platform, and the Cyber Commandos Program, I4C is positioned to play a crucial role in combating cybercrime. The I4C is moving forward with a clear vision for a secure digital future and safeguarding India's digital ecosystem.

References:

● https://pib.gov.in/PressReleaseIframePage.aspx?PRID=2053438