#FactCheck - Out-of-Context Clip of PM Modi Misused to Claim He Insulted India

Executive Summary:

A short video clip of Prime Minister Narendra Modi is going viral on social media. In the clip, he can be heard saying, “What sins did we commit in our previous life that we were born in India?” Users are sharing this video claiming that the Prime Minister insulted India and its people during a foreign visit. However, research by CyberPeace found that the claim is misleading. The viral clip is taken out of context from a longer speech delivered by Modi during his visit to Shanghai, China, in 2015.

Claim:

A Facebook user named “Bittu Yadav” shared the reel, portraying the statement as anti-India. The caption reads: “Look at this, and you supporters, see how your ‘leader’ is praising the country.”

Post link and archive link:

Fact Check:

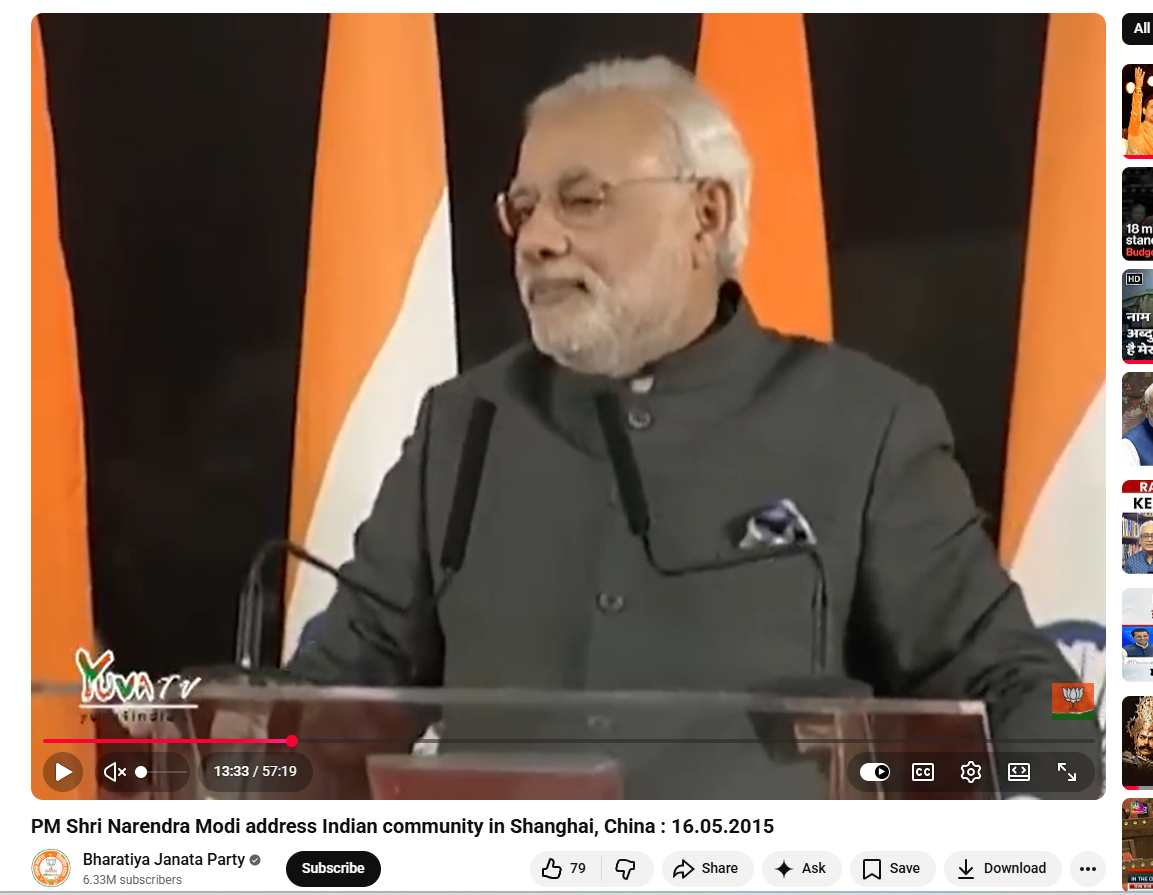

To verify the claim, we searched relevant keywords on Google and found the full video uploaded on May 16, 2015, on the official YouTube channel of the Bharatiya Janata Party. The video shows Prime Minister Narendra Modi addressing the Indian community in Shanghai, China.

In the 57-minute speech, at around 51 minutes 25 seconds, Modi was referring to the pessimistic atmosphere in India before 2014. He said: “Within a year… people used to say, ‘Leave it, nothing will happen now. Who knows what sins we committed in our previous life that we were born in India’… From that mindset, today the world says that if there is a country growing at the fastest pace, it is India.”

This clearly shows that Modi was citing a past sentiment to highlight how perceptions about India have changed over time, not expressing his personal view. Media reports from his May 2015 China visit also noted that he addressed around 5,000 members of the Indian community in Shanghai, where he spoke about India’s economic growth and initiatives like “Make in India.”

Conclusion:

The viral claim is false. The video has been edited and shared out of context. In reality, Prime Minister Narendra Modi was referring to a past mindset before 2014 while highlighting the change in India’s global perception.

Related Blogs

As Generative AI continues to make strides by creating content through user prompts, the increasing sophistication of language models widens the scope of the services they can deliver. However, these models have their own limitations. Recently, alerts generated by Apple Intelligence on the latest iPhone software have come under fire for misrepresenting reports from news agencies.

The new feature was introduced to group and summarise app notifications in a single alert on a user’s lock screen, making it easier to scan for important details among a large number of notifications without overwhelming the user. In practice, however, it misrepresented news outlets and generated false reports, including the arrest of Israeli Prime Minister Benjamin Netanyahu, Luke Littler winning the PDC World Darts Championship before the competition had concluded, and tennis player Rafael Nadal coming out as gay, among other alerts. Following the false alerts, the BBC complained that its journalism was being misrepresented. In response, Apple proposed clarifying to users that a text summary displayed in a notification is a product of Apple Intelligence and not of the news agency. It also cited the difficulty of compressing content into short summaries as the cause of the erroneous alerts. Further comments revealed that the AI alert feature was in beta and was being continuously refined based on user feedback. Owing to the backlash, Apple has suspended the service and announced that an improved version of the feature will be released in the near future, although no dates have been set.

CyberPeace Insights

The rush to release new features often exacerbates the problem, especially when AI-generated alerts are responsible for summarising news reports. This can significantly damage the credibility and trust that brands have worked hard to build. The premature release of features that affect the dissemination, content, and public comprehension of information carries substantial risks, particularly in the current environment where misinformation is widespread. Timely action and software updates, which typically require weeks to implement, are crucial in mitigating these risks. The desire to stay ahead and bring out competitive features must not absolve companies of the responsibility to provide services that are secure and reliable. This incident highlights the inherent nature of generative AI, which operates by analysing the data it was trained on to deliver the best possible responses based on user prompts. However, these responses are not always accurate or reliable. When faced with prompts beyond their scope, AI systems often produce untrustworthy information, underlining the need for careful oversight and verification. A question to deliberate on is whether we require such services at all: in practice they do save us time, but they do so at the risk of spreading false information.

References

- https://www.theguardian.com/technology/2025/jan/07/apple-update-ai-inaccurate-news-alerts-bbc-apple-intelligence-iphone

- https://www.firstpost.com/tech/apple-intelligence-hallucinates-falsely-credits-bbc-for-fake-news-broadcaster-lodges-complaint-13845214.html

- https://www.cnbc.com/2025/01/08/apple-ai-fake-news-alerts-highlight-the-techs-misinformation-problem.html

- https://news.sky.com/story/apple-ai-feature-must-be-revoked-over-notifications-misleading-users-say-journalists-13288716

- https://www.hindustantimes.com/world-news/apple-to-pay-95-million-in-user-privacy-violation-lawsuit-on-siri-101735835058198.html

- https://www.hindustantimes.com/business/apple-denies-claims-of-siri-violating-user-privacy-after-95-million-class-action-suit-settlement-101736445941497.html#:~:text=Apple%20denies%20claims%20of%20Siri,action%20suit%20settlement%20%2D%20Hindustan%20Times

- https://www.google.com/search?q=apple+AI+alerts+misinformation&oq=apple+AI+alerts+misinformation+&gs_lcrp=EgZjaHJvbWUyBggAEEUYOTIHCAEQIRigATIHCAIQIRigATIHCAMQIRigATIHCAQQIRigAdIBCTEyMzUxajBqN6gCALACAA&sourceid=chrome&ie=UTF-8

- https://www.fastcompany.com/91261727/apple-intelligence-news-summaries-mistakes

- https://timesofindia.indiatimes.com/technology/tech-news/siris-secret-listening-costs-apple-95m/articleshow/116906209.cms

- https://www.theguardian.com/technology/2025/jan/17/apple-suspends-ai-generated-news-alert-service-after-bbc-complaint

Introduction

The Indian Computer Emergency Response Team (CERT-In) is the nodal government agency, designated as the national agency for responding to cyber security incidents under section 70B of the Information Technology (IT) Act, 2000. CERT-In has issued a cautionary note to Microsoft Edge, Adobe and Google Chrome users. The government's cybersecurity agency has alerted users to multiple vulnerabilities that hackers might use to obtain private data and run arbitrary code on the targeted machine. CERT-In advises users to apply the security updates right away to guard against the problem.

Vulnerability note

Vulnerability notes CIVN-2023-0361, CIVN-2023-0362 and CIVN-2023-0364, for Google Chrome for Desktop, Microsoft Edge and Adobe respectively, include more information on the alert. CERT-In has categorized the problems as high-severity issues and recommends applying the security updates immediately. According to the warning, there is a security risk if you use Google Chrome versions earlier than v120.0.6099.62 on Linux and Mac, or earlier than 120.0.6099.62/.63 on Windows. Similarly, the vulnerability may also impact users of Microsoft Edge browser versions earlier than 120.0.2210.61.
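As a rough illustration of how the version thresholds quoted above could be checked, the following minimal sketch compares an installed version string against the fixed version. The function and constant names are hypothetical and are not part of any CERT-In, Google, or Microsoft tooling, and the sketch assumes versions are plain dot-separated integers. For Chrome on Windows, the note lists 120.0.6099.62/.63, so the single threshold used here is a simplifying assumption.

```python
# Fixed versions as quoted in the advisory text; names are hypothetical.
CHROME_FIXED = "120.0.6099.62"   # assumption: Windows builds list .62/.63
EDGE_FIXED = "120.0.2210.61"


def parse_version(text):
    """Turn a dotted version such as '120.0.6099.62' into a tuple of ints."""
    return tuple(int(part) for part in text.strip().split("."))


def is_vulnerable(installed, fixed):
    """Return True when the installed version is earlier than the fixed one.

    Tuples compare element by element, so 119.x sorts before 120.x and
    120.0.6099.61 sorts before 120.0.6099.62.
    """
    return parse_version(installed) < parse_version(fixed)
```

For example, calling `is_vulnerable("119.0.6045.199", CHROME_FIXED)` reports an installation that still needs the update, while a version equal to or later than the threshold is treated as patched. Comparing integer tuples rather than raw strings avoids mistakes such as treating "9" as later than "120".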

Cause of the Problem

According to the issue note on the CERT-In website, these vulnerabilities are caused by “Use after free in Media Stream, Side Panel Search, and Media Capture; Inappropriate implementation in Autofill and Web Browser UI.” The alert further warns that individuals who use the susceptible Microsoft Edge and Google Chrome browsers could be targeted by a remote attacker who exploits these vulnerabilities by sending a specially crafted request. Once these vulnerabilities are successfully exploited, attackers may gain elevated privileges, obtain sensitive data, and run arbitrary code on the targeted system.

High-severity issues: consequences

CERT-In has brought attention to vulnerabilities in Google Chrome, Microsoft Edge, and Adobe that might have serious repercussions and put users and their systems at risk. The vulnerabilities found in widely used software such as Adobe products, Microsoft Edge, and Google Chrome present serious dangers that might result in data breaches, unauthorized code execution, privilege escalation, and remote attacks. If these vulnerabilities are exploited, private information may be exposed, money may be lost, and reputational harm may result.

Additionally, the confidentiality and integrity of sensitive information may be compromised. The danger also includes the potential to interfere with services, cause outages, reduce productivity, and raise the possibility of phishing and social engineering attacks. The urgent need for security updates may also make users less trusting of the impacted software and hesitant to use these platforms until assurances of thorough security procedures are provided.

Advisory

- Users should update their Google Chrome, Microsoft Edge, and Adobe software as soon as possible to protect themselves against the vulnerabilities that have been found. These updates are supplied by the respective software vendors. Furthermore, use caution when browsing and refrain from downloading files from unknown sites or clicking on suspicious links.

- Make use of reliable ad-blockers and strong, regularly updated antivirus and anti-malware software. Maintain regular backups of critical data to reduce possible losses in the event of an attack, and keep up with best practices for cybersecurity. Keeping security measures current, and staying vigilant and proactive, can greatly lower the likelihood of falling victim to such vulnerabilities.

Introduction

Intricate and winding are the passageways of the modern digital age, a place where the reverberations of truth blend with, yet hauntingly contrast against, the echoes of falsehood. Within this complex realm, the World Economic Forum (WEF) has illuminated the darkened corners with its powerful spotlight, revealing the insidious network of misinformation and disinformation that snakes through the virtual and physical worlds alike. The WEF's Global Risks Report 2024 gravely identifies this malignant duo, misinformation and disinformation, as the most formidable and immediate threat to our collective well-being.

Published with the solemn tone suitable for the prelude to such a grand international gathering as the Annual Meeting in Davos, the report presents a vivid tableau of our shared global landscape, one that is dominated by the treacherous pitfalls of deceit and unverified claims. These perils, if unrecognised and unchecked by societal checks and balances, possess the force to rip apart the intricate tapestry of our liberal institutions, shaking the pillars of democracies and endangering the vulnerable fabric of social cohesion.

Election Mania

We find ourselves perched on the edge of a future in which the voices of nearly three billion human beings will make their mark on the annals of history through the varied electoral processes of nations such as Bangladesh, India, Indonesia, Mexico, Pakistan, the United Kingdom, and the United States. However, the spectre of misinformation can potentially corrode the integrity of the governing entities that will emerge from these democratic processes. The warning issued by the WEF is unambiguous: we are flirting with the possibility of disorder and turmoil, where the unchecked dispersion of fabrications and lies could kindle flames of unrest, manifesting in violent protests, hate-driven crimes, civil unrest, and the scourge of terrorism.

Derived from the collective wisdom of over 1,400 experts in global risk, esteemed policymakers, and industry leaders, the report crafts a sobering depiction of our world's journey. It paints an ominous future that increasingly endows governments with formidable power—to brandish the weapon of censorship, to unilaterally declare what is deemed 'true' and what ought to be obscured or eliminated in the virtual world of sharing information. This trend signals a looming potential for wider and more comprehensive repression, hindering the freedoms traditionally associated with the Internet, journalism, and unhindered access to a panoply of information sources—vital fora for the exchange of ideas and knowledge in a myriad of countries across the globe.

Prominence of AI

When the gaze of the report extends further over a decade-long horizon, environmental challenges such as the erosion of biodiversity and alarming shifts in the Earth's life-support systems ascend to the pinnacle of concern. Yet, trailing closely, the digital risks continue to pulsate, perpetuated by the distortions of misinformation, the echoing falsities of disinformation, and the unpredictable repercussions stemming from the utilization and, at times, the malevolent deployment of artificial intelligence (AI). These ethereal digital entities, far from being illusory shades, are the precursors of a disintegrating world order, a stage on which regional powers move to assert and maintain their influence, instituting their own unique standards and norms.

The prophecies set forth by the WEF should not be dismissed as mere academic conjecture; they are instead a trumpet's urgent call to mobilize. With a startling 30 percent of surveyed global experts bracing for the prospect of international calamities within the mere span of the coming two years, and an even more significant portion—nearly two-thirds—envisaging such crises within the forthcoming decade, it is unmistakable that the time to confront and tackle these looming risks is now. The clarion is sounding, and the message is clear: inaction is no longer an available luxury.

Maldives and India Row

To pluck precise examples from the boundless field of misinformation, we might observe the Lakshadweep-Malé incident wherein an ordinary boat accident off the coast of Kerala was grotesquely transformed into a vessel for the far-reaching tendrils of fabricated narratives, erroneously implicating Lakshadweep in the spectacle. Similarly, the tension-laden India-Maldives diplomatic exchange becomes a harrowing testament to how strained international relations may become fertile ground for the rampant spread of misleading content. The suspension of Maldivian deputy ministers following offensive remarks, the immediate tumult that followed on social media, and the explosive proliferation of counterfeit news targeting both nations paint a stark and intricate picture of how intertwined are the threads of politics, the digital platforms of social media, and the virulent propagation of falsehoods.

Yet, these are mere fragments within the extensive and elaborate weave of misinformation that threatens to enmesh our globe. As we venture forth into this dangerous and murky topography, it becomes our collective responsibility to maintain a sense of heightened vigilance, to consistently question and verify the sources and content of the information that assails us from all directions, and to cultivate an enduring culture anchored in critical thinking and discernment. The stakes are colossal—for it is not merely truth itself that we defend, but rather the underlying tenets of our societies and the sanctity of our cherished democratic institutions.

Conclusion

In this fraught era, marked indelibly by uncertainty and perched precariously on the cusp of numerous pivotal electoral ventures, let us refuse the role of passive bystanders to the unraveling of our collective reality. We must embrace our role as active participants in the relentless pursuit of truth, fortified with the stark awareness that our entwined futures rest on our willingness and ability to distinguish the veritable from the spurious within the perilous lattice of misinformation. We must continually remind ourselves that, in the quest for a stable and just global order, the unerring discernment of fact from fiction becomes not only an act of intellectual integrity but a civic and moral imperative.

References

- https://www.businessinsider.in/politics/world/election-fuelled-misinformation-is-serious-global-risk-in-2024-says-wef/articleshow/106727033.cms

- https://www.deccanchronicle.com/nation/current-affairs/100124/misinformation-tops-global-risks-2024.html

- https://www.msn.com/en-in/news/India/fact-check-in-lakshadweep-male-row-kerala-boat-accident-becomes-vessel-for-fake-news/ar-AA1mOJqY

- https://www.boomlive.in/news/india-maldives-muizzu-pm-modi-lakshadweep-fact-check-24085

- https://www.weforum.org/press/2024/01/global-risks-report-2024-press-release/