#FactCheck - AI-Generated Video of Peacock ‘Rescue’ Falsely Shared as Real

Executive Summary:

A video showing a peacock allegedly trapped in ice has been going viral on social media. In the clip, the peacock appears to be frozen in a snow-covered area. Moments later, a man is seen approaching with a hammer and breaking the ice to rescue the bird. Social media users are sharing the video as a real-life incident, praising the peacock’s resilience and describing the scene as inspiring. However, CyberPeace research found the viral claim to be misleading. Our research revealed that the video was created using Artificial Intelligence (AI) and is being falsely circulated as a real incident.

Claim:

Facebook user ‘Ras Bihari Pathak’ shared the viral video on January 25, 2026, with the caption: “This peacock is not standing on ice, but on courage. It reminds us that no matter how harsh the circumstances are, hope always returns in colours.” An archived version of the post is also available.

Fact Check:

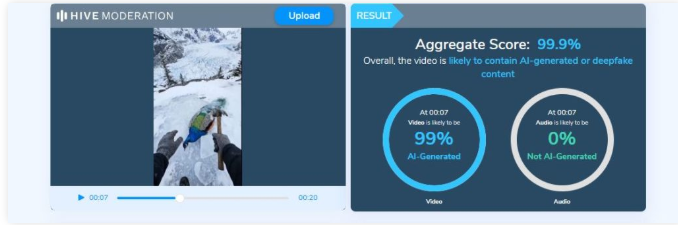

To verify the claim, we first ran a keyword search on Google to check whether any real incident involving a peacock trapped in ice had been reported. No credible or verified media reports were found. Next, we closely examined the viral video. The peacock’s movements and reactions appeared unnatural, and the motion lacked realistic physical behaviour, raising suspicion that the clip had been digitally generated. To confirm this, we analysed the clip using the AI video detection tool Hive Moderation, which indicated a 99 per cent or higher likelihood that the video was AI-generated.

Conclusion:

CyberPeace research confirms that the viral video showing a peacock allegedly trapped in ice is not real. The clip has been created using Artificial Intelligence and is being shared on social media with a false and misleading claim.

Related Blogs

Introduction

In the contemporary information environment, misinformation has emerged as a subtle yet powerful force capable of shaping public perception, influencing behavior, and undermining institutional credibility. Unlike overt falsehoods, misinformation often gains traction because it appears authentic, familiar, and authoritative. The rapid circulation of content through digital platforms has intensified this challenge, allowing altered or misleading material to reach wide audiences before verification mechanisms can respond. When misinformation mimics official communication, its impact becomes especially concerning, as citizens tend to place implicit trust in documents that carry the appearance of state authority. This growing vulnerability of public information systems was illustrated by the calendar incident in Himachal Pradesh in January 2026.

The incident shows how a small lie can lead to large social and governance problems. An unidentified person posted a modified version of the Government Calendar 2026 with altered official dates, causing public confusion and reputational damage to the Printing and Stationery Department. The incident may seem minor at first glance, but it points to a deeper systemic issue: misinformation poses a growing danger to public information ecosystems, especially when official documents are misrepresented and spread through digital platforms.

Misinformation as a Governance Challenge

Government calendars and official documents are essential for public awareness and administrative coordination, and their manipulation undermines the credibility of institutions and the trustworthiness of governance. In Himachal Pradesh, the altered dates could have caused confusion about public holidays, disrupted school and administrative planning, and spread false information among the public. Such misinformation directly undermines the social contract between citizens and the State, in which accurate information is the foundation of trust, compliance, and participation.

Impact on Citizens: Confusion, Distrust, and Digital Fatigue

For the general public, fake government information causes confusion and, at the same time, erodes trust in official communication channels. A person who is repeatedly exposed to altered or misleading content presented as credible will eventually find it hard to distinguish truth from falsehood.

This results in:

- Decision paralysis: people cannot make up their minds and postpone or avoid action because of their doubts

- Erosion of trust: not only in one department but in government communication as a whole

- Digital fatigue: people stop following public information altogether because they assume any content may be unreliable

In a digital society, misinformation is not confined to a single platform. It spreads quickly through direct messaging apps, community groups, and social networks, compounding confusion before official clarifications can reach the same audience.

Institutional Harm and Reputational Damage

Intentionally tampering with official documents is not only unethical but also, from a governance perspective, a criminal act. The Printing and Stationery Department noted that such practices tarnish the public image of government bodies, whose credibility rests on accuracy, neutrality, and trust.

When false material is passed off as official content:

- Departments are forced into reactive communication.

- Money and staff time that would otherwise go to routine administrative work are diverted to damage control.

The registration of a First Information Report (FIR) in this matter reflects a gradual shift among law enforcement agencies toward treating misinformation not as a harmless prank but as a technology-assisted crime with serious consequences.

The Role of Verifiable Information and Trusted Sources

Such incidents underline the need to place trustworthy information and verified sources at the centre of public communication in the digital era. Authorities should take responsibility for encouraging and enabling citizens to rely on official websites, verified social media accounts, government portals, and press releases to authenticate information.

Platform Responsibility and Digital Literacy

The spread of misinformation poses a significant challenge for social media platforms, which frequently amplify highly engaging content. Platforms can limit the damage by labelling unverified material, restricting its sharing, and working with authorities to support fact-checking. Equally crucial is public awareness of how digital platforms work, since even unintentional sharing of fake “official” material can have legal and social repercussions. The Himachal Pradesh government's public advice is a welcome step, but sustained outreach to the public is still needed.

Legal Accountability as a Deterrent

The active involvement of Cyber Crime Cells signals clearly that digital misinformation, especially involving government documents, will face serious consequences. Establishing legal accountability acts as a deterrent and reinforces the principle that freedom of expression does not include a right to spread falsehoods or undermine public institutions. Nonetheless, enforcement is effective only when accompanied by preventive measures such as clear communication, strong governance, and public trust-building. Consistent enforcement against digital misinformation can contribute to greater accountability within society, and digital literacy programs should be conducted periodically for netizens and institutions.

Conclusion

The fake calendar incident in Himachal Pradesh serves as a signal for authorities to adopt accurate communication strategies. Misinformation can be curbed only through the shared participation of governments, digital platforms, citizens, and civil society. The ultimate goal is to maintain public trust and the reliable dissemination of information in democratic processes.

Introduction

Emerging technologies in the digital era have made inroads into many domains, including the aviation industry. A 2022 report by Cranfield University and Inmarsat argued that digitalization is powering a new age of revival for aviation. Several airport authorities are now harnessing emerging technologies such as Artificial Intelligence (AI) across airport infrastructure to give travelers a smooth and speedy air travel experience.

The Perils of Juice-Jacking

Today, Universal Serial Bus (USB) charging ports are ubiquitous and offer travelers a convenient way to keep their devices powered up, so many people rely on public charging points while on the move. However, cybersecurity experts have warned that public charging ports could be used to steal data from a device or install malware on it, and they urge people to avoid USB charging ports at airports and other public areas. This creates an opening for fraudsters to target unsuspecting users through juice jacking.

Investigative journalist Brian Krebs coined the term "juice jacking" in 2011. It is a form of cyber attack in which a public USB charging port is tampered with, through hardware or software modifications, to steal data from or install malware on devices connected to it. In the term, “juice” is slang for electric power, and “jacking” (from “hijacking”) indicates unauthorized access to a device.

The primary purpose of juice jacking is usually to steal sensitive information from connected devices, such as passwords and payment card details, which attackers can then exploit to gain unauthorized access to victims' financial accounts. If the attacker installs malware on the device during the attack, they may continue to monitor the victim's activity even after the device has been disconnected from the USB port. Overall, the hazards of juice jacking include malware infection, data theft, financial loss, and damage to an individual's reputation.

Red Flags from Agencies

In 2023, the Federal Bureau of Investigation (FBI) warned travelers against using charging stations in public places such as hotels, airports, and shopping malls, because malicious actors attempt to use public USB ports to introduce malware and monitoring software onto devices. The U.S. Federal Communications Commission (FCC) has also issued an advisory on “juice jacking” and its potential to launch a stealthy cyber attack against a mobile device while it is charging over a USB cable. Similarly, new research from International Business Machines (IBM) Security indicates that many nation-state hackers are currently targeting travelers.

RBI Advisory

In 2024, the Reserve Bank of India (RBI) likewise issued a warning urging mobile phone users not to charge their devices at public ports. The RBI also emphasized the importance of safeguarding personal and financial data when using mobile devices. Juice jacking is also listed as one of the scams in the RBI booklet on the modus operandi of financial fraudsters.

Preventing juice jacking attacks

Ways to avoid juice jacking include:

- Keep track of the USB devices and cables you use, and avoid public charging ports.

- Use a wall outlet, a backup battery, or a USB pass-through device instead.

- Never use unknown charging cables, including cables left behind by other travelers in public spaces.

- Turn off the device before connecting it to a suspicious charging port.

- Update the phone's software regularly and make sure the latest security updates are installed.

- Enable the device's security features, such as passcodes, fingerprint, or facial recognition, as an additional layer of protection, and use only trusted security apps.

- Use a virtual private network (VPN) to help reduce the risk of cyber attacks.

The absence of documented cases does not mean users cannot be targeted by such an attack, so caution is still recommended when charging devices that hold sensitive data using standard cables.

Conclusion

In today's digital age, individuals need to practise good “cybersecurity hygiene” and avoid accessing sensitive data or conducting financial transactions on unsecured networks. Mobile phones and other devices should run the latest operating system, and antivirus software should be kept up to date to mitigate potential security vulnerabilities.

References

- https://www.forbes.com/sites/suzannerowankelleher/2023/04/20/juice-jacking-malware-phone-airports-hotels/?sh=47adab7e82ed

- https://www.businessairportinternational.com/features/how-ai-is-improving-business-aviation-operations.html

- https://www.news18.com/business/juice-jacking-attack-scam-bank-frauds-india-8412037.html

- https://www.comparitech.com/blog/information-security/juice-jacking/

- https://blogs.blackberry.com/en/2023/04/juice-jacking-advisory

- https://www.thehindubusinessline.com/info-tech/juice-jacking-rbi-issues-warning-against-charging-mobile-phones-using-public-ports/article67895091.ece

- https://www.thehindu.com/sci-tech/technology/juice-jacking-how-hackers-target-smartphones-tethered-to-public-charging-points/article67026433.ece

- https://www.forbes.com/sites/suzannerowankelleher/2019/05/21/why-you-should-never-use-airport-usb-charging-stations/?sh=630f026a5955

- https://edition.cnn.com/2023/04/12/tech/fbi-public-charging-port-warning/index.html

- https://social-innovation.hitachi/en-in/knowledge-hub/hitachi-voice/digital-transformation/

- https://www.inmarsat.com/en/insights/aviation/2022/future-aviation-connectivity.html

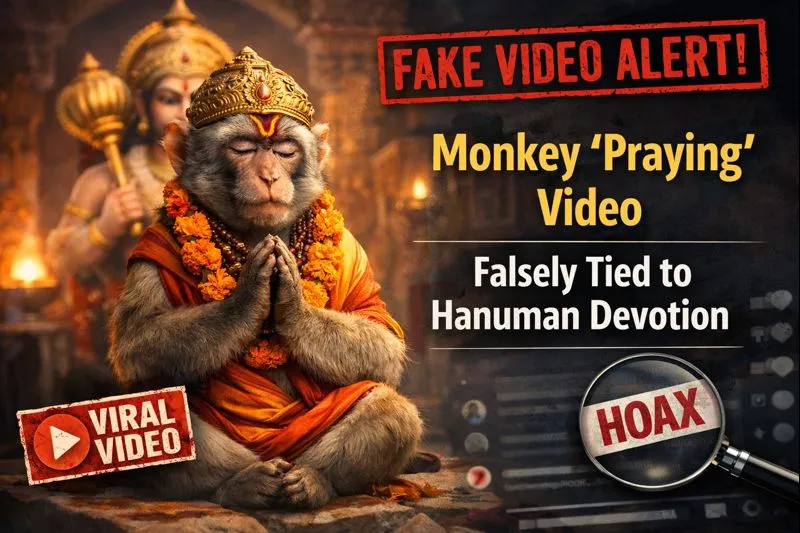

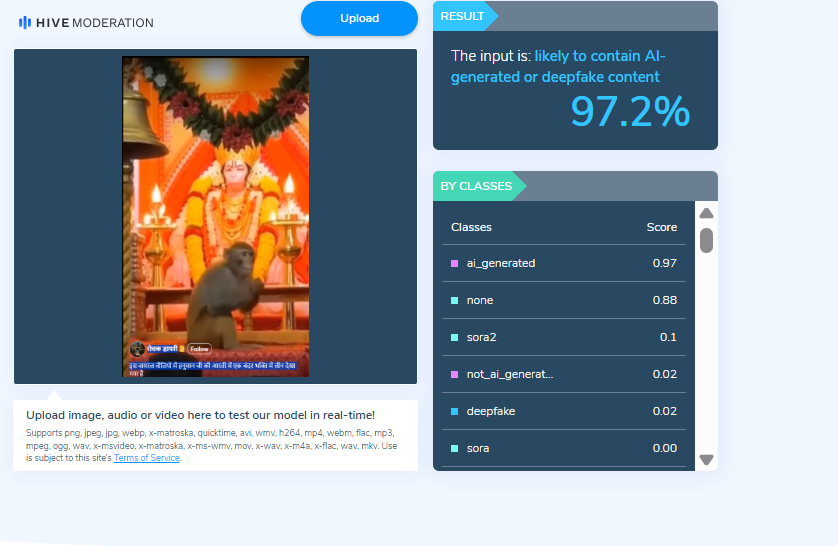

A video is being widely shared on social media showing a monkey, with users claiming that the animal is immersed in devotion to Lord Hanuman. The clip is being circulated with assertions that the monkey was seen participating in Hanuman Aarti. Cyber Peace Foundation’s research found that the viral claim is fake. Our investigation revealed that the video is not real and has been generated using artificial intelligence tools.

Claim

On January 6, 2026, Facebook users shared the viral video claiming, “A monkey was seen immersed in devotion during Hanuman Aarti.”

- Post link: https://www.facebook.com/reel/1261813845766976

- Archived link: https://archive.ph/anid5

Screenshots of the post can be seen below.

Fact Check

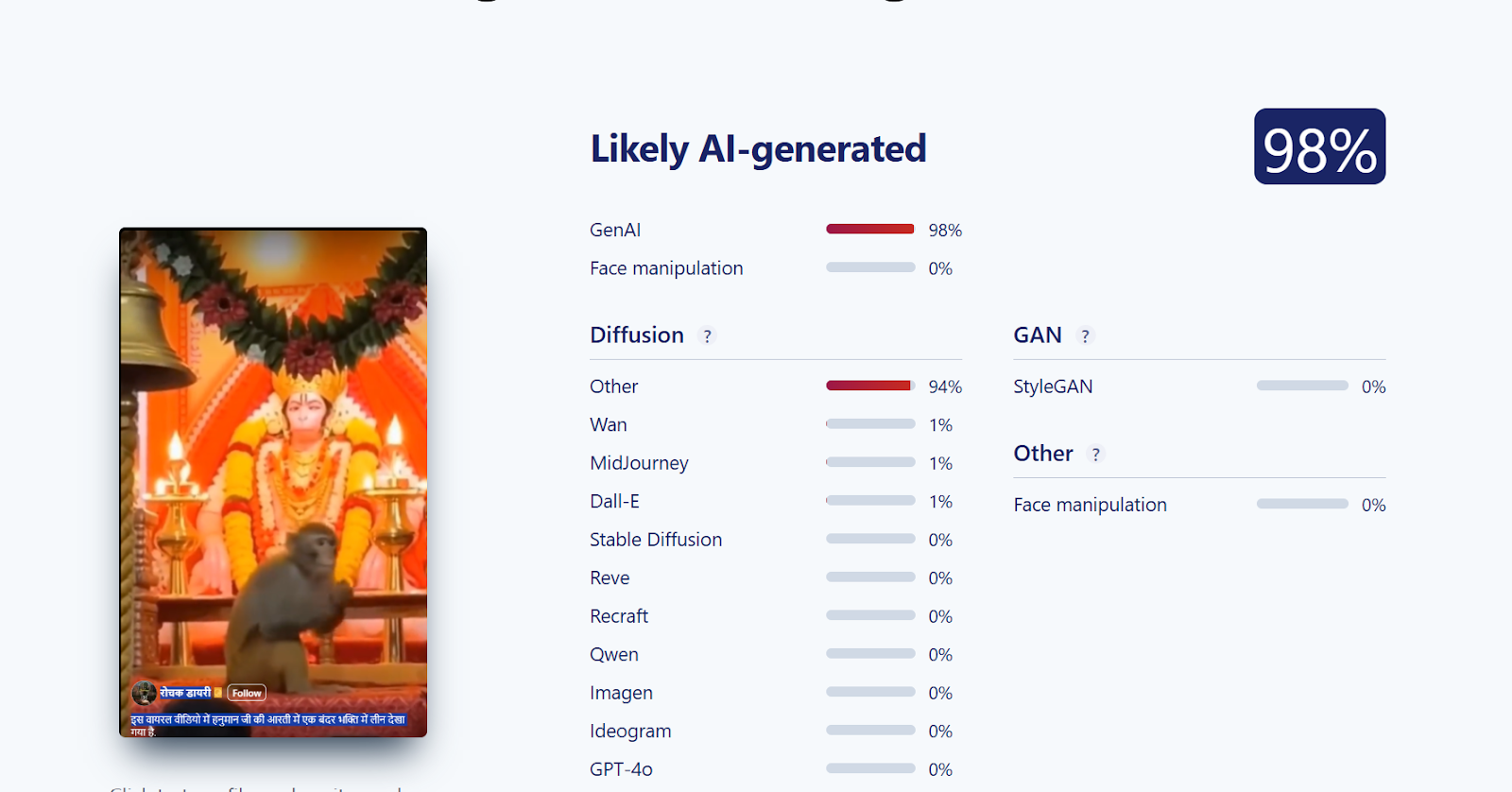

When we closely examined the viral video, we noticed several visual inconsistencies that raised suspicion that it might be AI-generated. To verify this, we scanned the video using the AI detection tool Hive Moderation, which estimated a 97 percent likelihood that the video was AI-generated.

We then analysed the video using a second AI detection tool, Sightengine, which estimated a 98 percent likelihood that the viral video was AI-generated.

Conclusion

Our investigation confirms that the viral video claiming to show a monkey immersed in devotion to Lord Hanuman is AI-generated and not real. The claim circulating on social media is false and misleading.