#FactCheck - AI-Generated Video of Monkey Falsely Linked to Hanuman Devotion

A video is being widely shared on social media showing a monkey, with users claiming that the animal is immersed in devotion to Lord Hanuman. The clip is being circulated with assertions that the monkey was seen participating in Hanuman Aarti. CyberPeace Foundation’s research found that the viral claim is fake. Our investigation revealed that the video is not real and has been generated using artificial intelligence tools.

Claim

On January 6, 2026, Facebook users shared the viral video claiming, “A monkey was seen immersed in devotion during Hanuman Aarti.”

- Post link: https://www.facebook.com/reel/1261813845766976

- Archived link: https://archive.ph/anid5

Screenshots of the post can be seen below.

FactCheck:

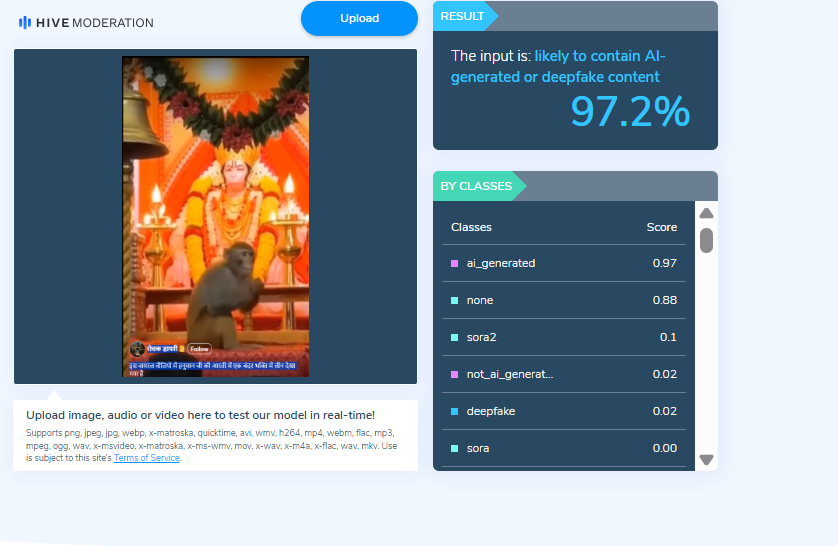

When we closely examined the viral video, we noticed several visual inconsistencies. These anomalies raised suspicion that the video might be AI-generated. To verify this, we scanned the video using the AI detection tool Hive Moderation. According to the results, the video was found to be 97 percent AI-generated.

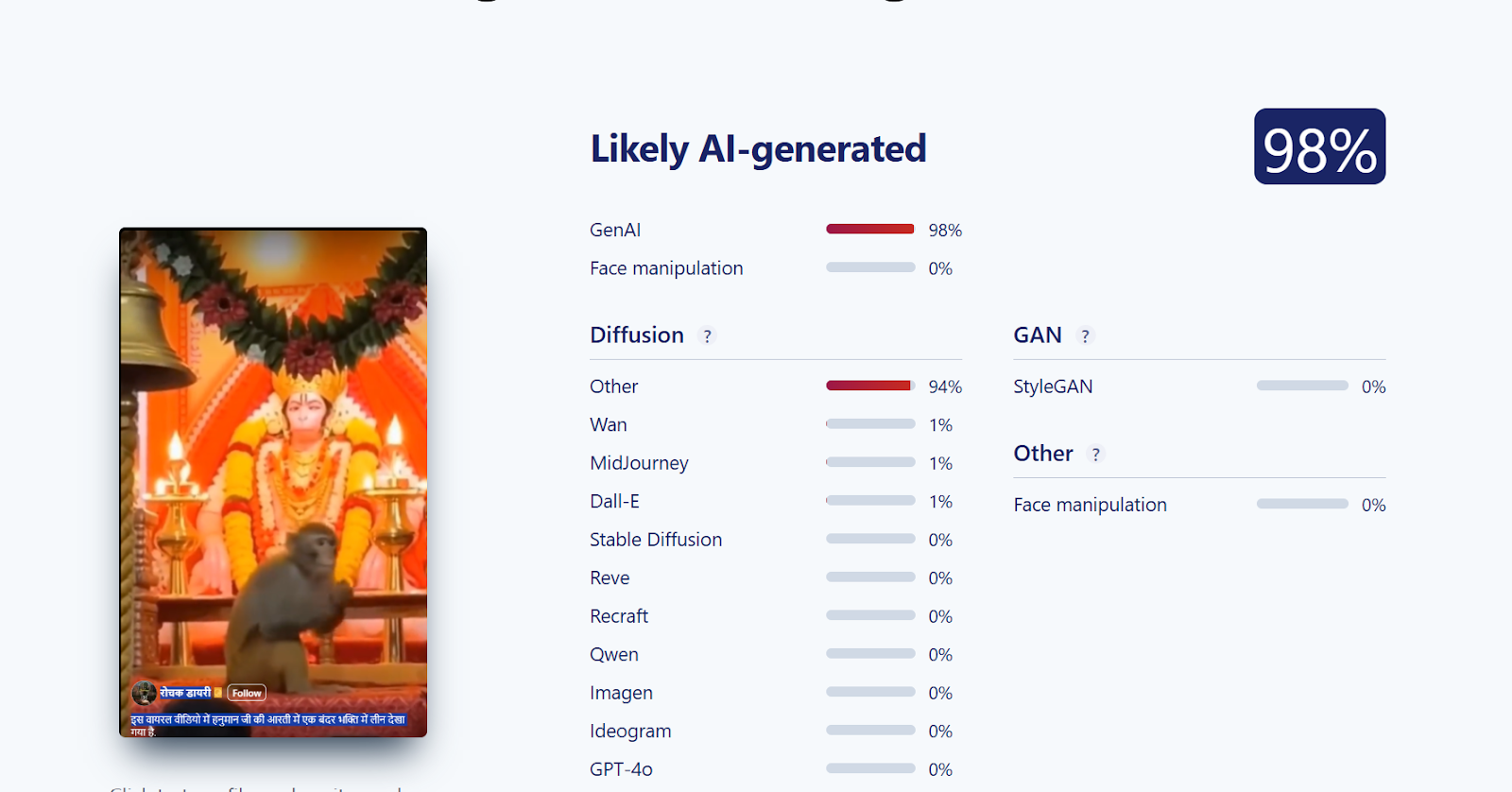

Further, we analysed the video using another AI detection tool, Sightengine. The tool’s assessment indicated that the viral video is 98 percent AI-generated.

Conclusion

Our investigation confirms that the viral video claiming to show a monkey immersed in devotion to Lord Hanuman is AI-generated and not real. The claim circulating on social media is false and misleading.

Related Blogs

Executive Summary:

False information is spreading across social media after users shared a mistranslated video that purportedly shows Italian Prime Minister Giorgia Meloni congratulating Indian Hindus on the inauguration of the Ram Temple in Ayodhya, Uttar Pradesh. The CyberPeace Research Team’s investigation reveals that this claim is false. In the video, Meloni is actually thanking those who wished her a happy birthday.

Claims:

An X (formerly Twitter) user shared a 13-second video of Italian Prime Minister Giorgia Meloni speaking in Italian and claimed that she was congratulating India on the construction of the Ram Mandir. The caption reads:

“Italian PM Giorgia Meloni Message to Hindus for Ram Mandir #RamMandirPranPratishta. #Translation : Best wishes to the Hindus in India and around the world on the Pran Pratistha ceremony. By restoring your prestige after hundreds of years of struggle, you have set an example for the world. Lots of love.”

Fact Check:

The CyberPeace Research Team translated the video using Google Translate. We first extracted a transcript of the video with an AI transcription tool and then ran it through Google Translate; the result did not match the viral caption.

The translation reads, “Thank you all for the birthday wishes you sent me privately with posts on social media, a lot of encouragement which I will treasure, you are my strength, I love you.”

This confirms that the video is not a congratulatory message but a thank-you message to all those who sent the Prime Minister birthday wishes.

We then ran a reverse image search on frames from the video and found the original on the Prime Minister’s official X handle, uploaded on 15 January 2024 with the caption “Grazie. Siete la mia”. The translation reads, “Thank you. You are my strength!”

Conclusion:

The 13-second video shared by one user gained wide reach on X, and many users then reshared it with similar captions. A misunderstanding that starts with a single post can spread widely. The claim made in the caption of the post is misleading and has no connection with the actual post by Italian Prime Minister Giorgia Meloni, in which she speaks in Italian. Hence, the post is fake and misleading.

- Claim: Italian Prime Minister Giorgia Meloni congratulated Hindus in the context of Ram Mandir

- Claimed on: X

- Fact Check: Fake

Introduction

The digital expanse of the metaverse has recently come under scrutiny following a gruesome incident. In a digital realm crafted for connection and exploration, a 16-year-old girl’s avatar fell victim to an agonising assault that has sparked ethical, legal and societal debate. The incident is a stark reminder that the cyberverse, while offering endless possibilities and experiences, also poses glaring challenges that require serious consideration. It involved a 16-year-old girl whose digital avatar was raped by several other users in the metaverse.

This incident has raised a critical question about the genuine psychological trauma that virtual experiences can inflict. It highlights the strong emotional repercussions of illicit virtual actions: although the victim is physically unharmed, a digital assault can leave lasting scars on her psyche. The issue also raises questions about the ethical implications of virtual interactions and the responsibility of service providers to protect users' well-being on their platforms.

The Judicial Quagmire

The digital nature of these assaults raises the complex jurisdictional questions that are common in cyber offences. We are still novices at navigating a digital labyrinth in which avatars can cross borders with just a click of a mouse. The current legal framework is not equipped to tackle virtual crimes, which calls for urgent reform. Policymakers and legal professionals must first define virtual offences and set clear jurisdictional boundaries, so that justice isn’t hampered by geographical restrictions.

Meta’s Accountability

Meta, on whose platform this gruesome incident occurred, finds itself at the crossroads of an ethical dilemma. The company had implemented several safeguards, but they proved futile in preventing such a harrowing act. The incident has raised several questions about the broader role and responsibilities of tech juggernauts. One question demanding an immediate answer is how a company can strike a balance between innovation and the protection of its users.

The Tightrope of Ethics

The metaverse is the epitome of innovation, yet this harrowing incident highlights a fundamental ethical tension. The real challenge is to harness the power of virtual reality while addressing the risks of digital hostilities. While society is still grappling with this conundrum, stakeholders must work in tandem to formulate robust and effective legal structures that protect the rights and well-being of users. This includes balancing technological development against ethical challenges, which requires collective effort.

Reflections of Society

Beyond legal and ethical considerations, this act calls for wider societal reflection. It emphasises the pressing need for a cultural shift that fosters empathy, digital civility and respect. As we tread deeper into the virtual realm, we must strive to cultivate an ethos that upholds dignity in both the digital and the real world. This shift is only possible through awareness campaigns, educational initiatives and strong community engagement that foster a culture of respect and responsibility.

Safer and Ethical Way Forward

A multidimensional approach is essential to address the complicated challenges that cyber violence poses. Several measures can pave the way for a safer cyberspace for netizens.

- Legislative Reforms - There’s an urgent need to revamp legislative frameworks so that they can address the complexities of new and emerging virtual offences. Tech companies must collaborate with the government to formulate best practices and develop standard security measures that prioritise user protection.

- Public Awareness and Engagement - Public awareness campaigns that educate users on crucial issues such as cyber resilience, ethics, digital detox and responsible online behaviour play a critical role in making netizens vigilant against cyber hostilities and ready to help fellow netizens in distress. Civil society organisations and think tanks such as CyberPeace Foundation are the pioneers of cyber safety campaigns in the country, working in tandem with governments across the globe to curb the evil of cyber hostilities.

- Interdisciplinary Research - Policymakers should delve deeper into the ethical, psychological and societal ramifications of digital interactions. A multidisciplinary approach to research is crucial for formulating evidence-based policy.

Conclusion

The digital gang rape is a wake-up call that demands bold measures to confront the intricate legal, societal and ethical pitfalls of the metaverse. As we navigate this digital labyrinth, our collective decisions will help shape the metaverse's future. By nurturing a culture of empathy, responsibility and innovation, we can forge a path that honours the dignity of netizens, upholds ethical principles and fosters a vibrant and safe cyberverse. In this significant movement, ethical vigilance, diligence and active collaboration are indispensable.

References:

- https://www.thehindu.com/sci-tech/technology/virtual-gang-rape-reported-in-the-metaverse-probe-underway/article67705164.ece

- https://thesouthfirst.com/news/teen-uk-girl-virtually-gang-raped-in-metaverse-are-indian-laws-equipped-to-handle-similar-cases/

AI and other technologies are advancing rapidly, which has accelerated the spread of information and of misinformation alike. LLMs have their advantages, but they also come with drawbacks, such as confident but inaccurate responses caused by limitations in their training data. Evidence-driven retrieval systems aim to address this issue by incorporating factual information during response generation, which reduces hallucination and yields more accurate responses.

What is Retrieval-Augmented Response Generation?

Evidence-driven Retrieval-Augmented Generation (RAG) is an AI framework that improves the accuracy and reliability of large language models (LLMs) by grounding them in external knowledge bases. RAG systems combine the generative power of LLMs with a dynamic information retrieval mechanism. Whereas standard AI models rely solely on pre-trained knowledge and pattern recognition to generate text, RAG pulls in credible, up-to-date information from various sources during the response generation process. By pairing real-time evidence retrieval with AI-based responses, it aims to combat misinformation. It follows this pattern:

- Query Identification: The process starts when misinformation is detected or a query is raised.

- Evidence Retrieval: The AI searches databases for relevant, credible evidence to support or refute the claim.

- Response Generation: Using the evidence, the system generates a fact-based response that addresses the claim.
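
The three steps above can be sketched in a few lines of Python. This is a toy illustration under stated assumptions, not how any production RAG system works: the evidence store, the source labels, the keyword-overlap scoring and the template-based "generation" step are all hypothetical stand-ins for a curated database, a real retriever and an LLM.

```python
import re
from dataclasses import dataclass


@dataclass
class Evidence:
    source: str  # hypothetical label identifying where the evidence came from
    text: str


# Hypothetical, hand-written evidence store; a real system would query a curated database.
EVIDENCE_STORE = [
    Evidence("factcheck-a", "The viral monkey video was generated with AI tools and is not real footage."),
    Evidence("factcheck-b", "Giorgia Meloni thanked followers for birthday wishes in the video."),
    Evidence("explainer-c", "Retrieval augmented generation grounds model output in external documents."),
]


def tokenize(text: str) -> set[str]:
    """Lowercase the text and split it into a set of alphanumeric tokens."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query: str, store: list[Evidence], top_k: int = 1) -> list[Evidence]:
    """Evidence retrieval: rank entries by how many tokens they share with the query."""
    query_tokens = tokenize(query)
    scored = [(len(query_tokens & tokenize(e.text)), e) for e in store]
    scored = [(score, e) for score, e in scored if score > 0]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [e for _, e in scored[:top_k]]


def generate_response(claim: str, evidence: list[Evidence]) -> str:
    """Response generation: a fixed template stands in for the LLM and cites each source."""
    if not evidence:
        return f"No evidence found for: {claim}"
    cited = "; ".join(f"{e.text} [{e.source}]" for e in evidence)
    return f"Claim: {claim}\nEvidence: {cited}"


def answer(claim: str) -> str:
    """Query identification is assumed to have happened upstream; run the remaining steps."""
    return generate_response(claim, retrieve(claim, EVIDENCE_STORE))


print(answer("Is the monkey video real?"))
```

Because the response carries the source label of each piece of evidence, a reader can trace the answer back to what was retrieved, which is the accountability property discussed in the next section.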

How is Evidence-Driven RAG the key to Fighting Misinformation?

- RAG systems can integrate the latest data, for example information on recent scientific discoveries.

- The retrieval mechanism allows RAG systems to pull specific, relevant information for each query, tailoring the response to a particular user’s needs.

- RAG systems can provide sources for their information, enhancing accountability and allowing users to verify claims.

- For queries that require specific or specialised knowledge, RAG systems can excel where traditional models might struggle.

- By accessing a diverse range of up-to-date sources, RAG systems may offer more balanced viewpoints than traditional LLMs.

Policy Implications and the Role of Regulation

With its potential to enhance content accuracy, RAG also intersects with important regulatory considerations. India has one of the largest internet user bases globally, and the challenges of managing misinformation are particularly pronounced.

- Indian regulators, such as the Ministry of Electronics and Information Technology (MeitY), play a key role in guiding technology regulation. Similar to the EU's Digital Services Act, the Information Technology (Intermediary Guidelines and Digital Media Ethics Code) Rules, 2021, require significant social media intermediaries to publish periodic compliance reports detailing complaints received and the action taken on them, which can cover action against misinformation. Integrating RAG systems could help support accurate, legally accountable content moderation.

- Collaboration among companies, policymakers, and academia is crucial for RAG adaptation, addressing local languages and cultural nuances while safeguarding free expression.

- Ethical considerations are vital to prevent social unrest, requiring transparency in RAG operations, including evidence retrieval and content classification. This balance can create a safer online environment while curbing misinformation.

Challenges and Limitations of RAG

While RAG holds significant promise, it has its challenges and limitations.

- Ensuring that RAG systems retrieve evidence only from trusted and credible sources is a key challenge.

- For RAG to be effective, users must trust the system. Sceptics of content moderation may show resistance to accepting the system’s responses.

- Generating a response too quickly may compromise the quality of the evidence, while taking too long can allow misinformation to spread unchecked.
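
One common way to approach the first challenge, source credibility, is to filter retrieved evidence against an allowlist of domains before it reaches the generation step. The sketch below is a minimal illustration of that idea. The domain names and the helper names `is_trusted` and `filter_evidence` are hypothetical, and choosing which sources belong on such a list is an editorial decision that code cannot make.

```python
from urllib.parse import urlparse

# Hypothetical allowlist; the entries are placeholders, not real recommendations.
TRUSTED_DOMAINS = {"factcheck.example", "gov.example"}


def is_trusted(url: str, trusted: set[str] = TRUSTED_DOMAINS) -> bool:
    """Return True if the URL's host is an allowlisted domain or one of its subdomains."""
    host = (urlparse(url).hostname or "").lower()
    if host.startswith("www."):
        host = host[4:]
    # Match on the full domain boundary so "factcheck.example.evil.test" is rejected.
    return any(host == domain or host.endswith("." + domain) for domain in trusted)


def filter_evidence(urls: list[str]) -> list[str]:
    """Keep only the evidence URLs that come from allowlisted domains."""
    return [url for url in urls if is_trusted(url)]


candidates = [
    "https://www.factcheck.example/report/1",
    "https://data.gov.example/stats",
    "https://factcheck.example.evil.test/report",
]
print(filter_evidence(candidates))
```

Matching on the parsed hostname rather than on a substring of the URL avoids accepting look-alike addresses that merely contain a trusted name.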

Conclusion

Evidence-driven retrieval systems, such as Retrieval-Augmented Generation, represent a pivotal advancement in the ongoing battle against misinformation. By integrating real-time data and credible sources into AI-generated responses, RAG enhances the reliability and transparency of online content moderation. It addresses the limitations of traditional AI models and aligns with regulatory frameworks aimed at maintaining digital accountability, as seen in India and globally. However, the successful deployment of RAG requires overcoming challenges related to source credibility, user trust, and response efficiency. Collaboration between technology providers, policymakers, and academic experts can help navigate these challenges and create a safer and more accurate online environment. As digital landscapes evolve, RAG systems offer a promising path forward, ensuring that technological progress is matched by a commitment to truth and informed discourse.

References

- https://experts.illinois.edu/en/publications/evidence-driven-retrieval-augmented-response-generation-for-onlin

- https://research.ibm.com/blog/retrieval-augmented-generation-RAG

- https://medium.com/@mpuig/rag-systems-vs-traditional-language-models-a-new-era-of-ai-powered-information-retrieval-887ec31c15a0

- https://www.researchgate.net/publication/383701402_Web_Retrieval_Agents_for_Evidence-Based_Misinformation_Detection