#FactCheck - AI-Generated Video Falsely Shared as Iran’s Attack on Israeli Nuclear Site

Executive Summary:

A video is going viral on social media, where it is being linked to the ongoing US-Israel conflict with Iran. The clip shows explosions on buildings and is being shared with the claim that it depicts an attack on Israel. It is further claimed that Iran targeted a nuclear site located near the sea in Israel, and that this video shows that attack. However, research by CyberPeace found the claim to be false. The video is not from a real incident but has been created using AI.

Claim:

On social media platform X, a user shared the viral video on March 8, 2026, with the caption: “Iran attacked an Israeli nuclear site located near the sea.”

Fact Check:

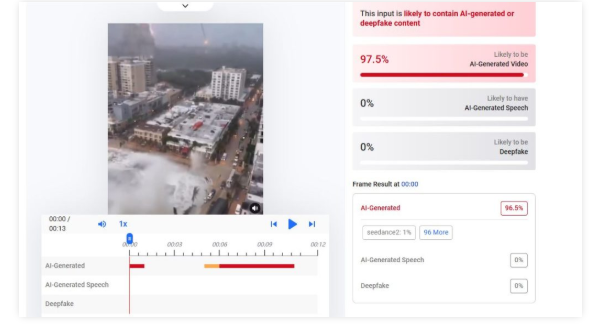

To verify the viral claim, we searched relevant keywords on Google but found no credible news reports supporting it. On closely examining the video, we observed several technical inconsistencies. The person seen in the video appears robotic, raising suspicion that the content may be AI-generated. To confirm this, we analyzed the video using AI detection tools. The tool Hive Moderation indicated that the video is approximately 97.5 percent likely to have been generated using artificial intelligence.

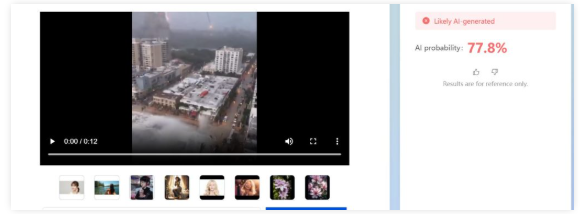

We also used the AI detection tool Matrix.Tencent. The results suggested that the video is likely AI-generated, with around a 77 percent probability.

Conclusion:

Our research found that the viral video claiming to show an Iranian attack on Israel is AI-generated and not related to any real incident.

Related Blogs

Executive Summary:

Social media is buzzing with a link that claims to offer an iPhone 15 as a gift from LuLu Hypermarket, presented as part of Holi celebrations. This article examines the deceptive tactics behind this fraudulent offer and provides guidance on recognizing and avoiding such scams.

False Claim:

The link being shared is misleading and falsely claims that LuLu Hypermarket is giving away free iPhone 15 phones. This is taking advantage of the Holi festival to trick unsuspecting people. When users click on the link, they are redirected multiple times and end up on a page with LuLu Hypermarket's photo and some simple questions. Fake comments are also used to make the offer seem genuine, but it is all a deception.

The Deceptive Scheme:

The plan uses psychological tricks by linking the offer to a famous brand and a popular celebration. The landing page's simplicity and phoney comments try to make users trust it and feel like they need to act fast, so they'll join the scam.

The Fraudulent Campaign Analysed:

The scammers are using psychological tactics to manipulate people. They're exploiting the trust people have in LuLu Hypermarket and the excitement around the new iPhone 15 during the Holi festival. The fake questionnaire serves no real purpose, but it's a way to engage users and make the scam seem legitimate. Testimonials claiming people have successfully received the iPhone 15 are also fake, designed to create a false sense of credibility. Users are prompted to select a "gift box," which adds an interactive element to draw them in further. When a user selects a box, they're falsely congratulated on winning the iPhone 15, giving them a sense of accomplishment. Finally, users are urged to share the link via WhatsApp to "claim" the gift, spreading the scam to more potential victims.

What do we Analyse?

- We analyse the deceptive tactics employed by the scam, including psychological manipulation, false engagement techniques, and fake testimonials, all aimed at convincing users of the offer's legitimacy.

Link : (https://sophisticateddistort[.]top/nTiwpTTTT526?llue1696559991144)

- It is important to note that, at this point, LuLu Hypermarket has made no official declaration or confirmation of any such offer. People must therefore be very careful when encountering such messages, because they are often used as lures in phishing attacks or misinformation campaigns. Before engaging with or forwarding such claims, it is always advisable to verify the information with trustworthy sources, both to protect oneself online and to prevent the spread of false information.

- The campaign is hosted on a third-party domain rather than on any official LuLu Hypermarket website, which raised suspicion. The domain was also registered only last year.

- The intercepted request revealed a backend connection to Baidu, a China-linked analytics service.

- Domain Name: sophisticateddistort.top

- Registry Domain ID: D20230629G10001G_04181852-top

- Registrar WHOIS Server: whois.west263.com

- Registrar URL: www.west263.com

- Updated Date: 2023-07-01T02:55:34Z

- Creation Date: 2023-06-29T06:05:00Z

- Registry Expiry Date: 2024-06-29T06:05:00Z

- Registrar: Chengdu west dimension digital

- Registrant State/Province: Shan Xi

- Registrant Country: CN (China)

- Name Server: curt.ns.cloudflare.com

- Name Server: harlee.ns.cloudflare.com

Note: The cybercriminals used Cloudflare to mask the actual IP address of the fraudulent website.
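The registration dates in the WHOIS record above can be checked with a short script. The following is a minimal sketch, not the method used in the original analysis: the function names, the `checked_on` date, and the 400-day threshold are illustrative assumptions, and only the three timestamps come from the record itself.

```python
from datetime import datetime, timezone

# Timestamps copied from the WHOIS record listed above.
WHOIS_RECORD = {
    "creation": "2023-06-29T06:05:00Z",
    "updated": "2023-07-01T02:55:34Z",
    "expiry": "2024-06-29T06:05:00Z",
}


def parse_whois_time(value: str) -> datetime:
    """Parse a WHOIS timestamp such as 2023-06-29T06:05:00Z as UTC."""
    parsed = datetime.strptime(value, "%Y-%m-%dT%H:%M:%SZ")
    return parsed.replace(tzinfo=timezone.utc)


def registration_days(record: dict) -> int:
    """Whole days between the creation date and the registry expiry date."""
    delta = parse_whois_time(record["expiry"]) - parse_whois_time(record["creation"])
    return delta.days


def domain_age_days(record: dict, checked_on: datetime) -> int:
    """Whole days the domain had existed on the (hypothetical) check date."""
    return (checked_on - parse_whois_time(record["creation"])).days


def looks_short_lived(record: dict, max_days: int = 400) -> bool:
    """Flag domains registered for about a year or less.

    The 400-day threshold is an illustrative assumption, not a standard.
    """
    return registration_days(record) <= max_days


if __name__ == "__main__":
    print("Registration period (days):", registration_days(WHOIS_RECORD))
    print("Short-lived registration:", looks_short_lived(WHOIS_RECORD))
```

A short, one-year registration does not prove fraud on its own, but combined with the third-party hosting and the masked IP address it supports treating the link with caution.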

CyberPeace Advisory:

- Do not open messages received on social platforms that seem suspicious or unsolicited. Your own discretion is your first and best line of defence.

- Falling prey to such scams could compromise your entire system, potentially granting unauthorised access to your microphone, camera, text messages, contacts, pictures, videos, banking applications, and more. Keep your cyber world safe against any attacks.

- Never reveal sensitive data such as login credentials or banking details to entities you have not verified as reliable.

- Before sharing any content or clicking on links within messages, always verify the legitimacy of the source. Protect not only yourself but also those in your digital circle.

- To confirm whether an offer or message is genuine, check official sources or contact the company directly. Verify the authenticity of alluring offers before taking any action.

Conclusion:

During the festive season, as we engage in merrymaking and online activities, we should be mindful of fraudsters' exploitation strategies. The illegitimate LuLu Hypermarket iPhone 15 offer is one such instance. With knowledge and care, we can spot and report suspicious activity and avoid becoming victims of this kind of fraud. Keep in mind that legitimate offers come from trustworthy sources, and if an offer looks too good to be true, it is most likely a scam.

A Foray into the Digital Labyrinth

In our digital age, the silhouette of truth is often obfuscated by a fog of technological prowess and cunning deception. With each passing moment, the digital expanse sprawls wider, and within it, synthetic media, known most infamously as 'deepfakes', emerge like phantoms from the machine. These adept forgeries, melding authenticity with fabrication, represent a new frontier in the malleable narrative of understood reality. Grappling with the specter of such virtual deceit, social media behemoths Facebook and YouTube have embarked on a prodigious quest. Their mission? To formulate robust bulwarks around the sanctity of fact and fiction, all the while fostering seamless communication across channels that billions consider an inextricable part of their daily lives.

In an exploration of this digital fortress besieged by illusion, we unpeel the layers of strategy that Facebook and YouTube have unfurled in their bid to stymie the proliferation of these insidious technical marvels. Though each platform approaches the issue through markedly different prisms, a shared undercurrent of necessity and urgency harmonizes their efforts.

The Detailing of Facebook's Strategy

Facebook's encampment against these modern-day chimaeras teems with algorithmic sentinels and human overseers alike—a union of steel and soul. The company’s layer upon layer of sophisticated artificial intelligence is designed to scrupulously survey, identify, and flag potential deepfake content with a precision that borders on the prophetic. Employing advanced AI systems, Facebook endeavours to preempt the chaos sown by manipulated media by detecting even the slightest signs of digital tampering.

However, in an expression of profound acumen, Facebook also serves a reminder of AI's fallibility by entwining human discernment into its fabric. Each flagged video wages its battle for existence within the realm of these custodians of reality: individuals entrusted with the hefty responsibility of parsing truth from technologically enabled fiction.

Facebook does not rest on the laurels of established defense mechanisms. The platform is in a perpetual state of flux, with policies and AI models adapting to the serpentine nature of the digital threat landscape. By fostering its cyclical metamorphosis, Facebook not only sharpens its detection tools but also weaves a more resilient protective web, one capable of absorbing the shockwaves of an evolving battlefield.

YouTube’s Overture of Transparency and the Exposition of AI

Turning to the amphitheatre of YouTube, the stage is set for an overt commitment to candour. Against the stark backdrop of deepfake dilemmas, YouTube demands the unveiling of the strings that guide the puppets, insisting on full disclosure whenever AI's invisible hands sculpt the content that engages its diverse viewership.

YouTube's doctrine is straightforward: creators must lift the curtains and reveal any artificial manipulation's role behind the scenes. With clarity as its vanguard, this requirement is not just procedural but an ethical invocation to showcase veracity—a beacon to guide viewers through the murky waters of potential deceit.

The iron fist within the velvet glove of YouTube's policy manifests through a graded punitive protocol. Should a creator falter in disclosing the machine's influence, repercussions follow, ensuring that the ecosystem remains vigilant against hidden manipulation.

But YouTube's policy is one that distinguishes between malevolence and benign use. Artistic endeavours, satirical commentary, and other legitimate expositions are spared the policy's wrath, provided they adhere to the overarching principle of transparency.

The Symbiosis of Technology and Policy in a Morphing Domain

YouTube's commitment to refining its coordination between human insight and computerized examination is unwavering. As AI's role in both the generation and moderation of content deepens, YouTube, which like a skilled cartographer must redraw its policies with increasing frequency, traverses this ever-mutating landscape with a proactive stance.

In a Comparative Light: Tracing the Convergence of Giants

Although Facebook and YouTube choreograph their steps to different rhythms, together they compose an intricate dance aimed at nurturing trust and authenticity. Facebook leans into the proactive might of its AI algorithms, reinforced by updates and human intervention, while YouTube wields the virtue of transparency as its sword, cutting through masquerades and empowering its users to partake in storylines that are continually rewritten.

Together on the Stage of Our Digital Epoch

The sum of Facebook and YouTube's policies is integral to the pastiche of our digital experience, a multifarious quilt shielding the sanctum of factuality from the interloping specters of deception. As humanity treads the line between the veracious and the fantastic, these platforms stand as vigilant sentinels, guiding us in our pursuit of an age-old treasure within our novel digital bazaar: the treasure of truth. In this labyrinthine quest, it is not merely about unmasking deceivers but nurturing a wisdom that appreciates the shimmering possibilities, and inherent risks, of our evolving connection with the machine.

Conclusion

The struggle against deepfakes is a complex, many-headed challenge that will necessitate a united front spanning technologists, lawmakers, and the public. In this digital epoch, where the veneer of authenticity is perilously thin, the valiant endeavours of these tech goliaths serve as a lighthouse in a storm-tossed sea. These efforts echo the importance of evergreen vigilance in discerning truth from artfully crafted deception.

References

- https://about.fb.com/news/2020/01/enforcing-against-manipulated-media/

- https://indianexpress.com/article/technology/artificial-intelligence/google-sheds-light-on-how-its-fighting-deep-fakes-and-ai-generated-misinformation-in-india-9047211/

- https://techcrunch.com/2023/11/14/youtube-adapts-its-policies-for-the-coming-surge-of-ai-videos/

- https://www.trendmicro.com/vinfo/us/security/news/cybercrime-and-digital-threats/youtube-twitter-hunt-down-deepfakes

Introduction

Intricate and winding are the passageways of the modern digital age, a place where the reverberations of truth effortlessly blend, yet hauntingly contrast, with the echoes of falsehood. The latest thread in this fabric of misinformation is a claim that has scurried through the virtual windows of social media platforms, gaining the kind of traction that is both revealing and alarming about our times. It is a narrative that speaks to the heart of India's cultural and religious fabric: the construction of the Ram Temple in Ayodhya, a project enshrined in the collective consciousness of a nation and steeped in historical significance.

The claim in question, a spectre of misinformation, suggests that the Ram Temple's construction has been covertly shifted 3 kilometres from its original, hallowed ground, the birthplace, as it were, of Lord Ram. This assertion, which spread through the echo chambers of social media, has been bolstered by a screenshot of Google Maps, a digital cartographer that has inadvertently become a pawn in this game of truth and deception. The image purports to show the location of Ram Mandir as distinct and distant from the site where the Babri Masjid once stood, a claim that went viral on social media and drew a strong public reaction.

The Viral Tempest

In the face of such a viral tempest, IndiaTV's fact-checking arm, IndiaTVFactCheck, has stepped into the fray, wielding the sword of veracity against the Goliath of falsehood. Their investigation into this viral claim was meticulous, a deep dive into the digital representations that have fueled this controversy. Upon examining the viral Google Maps screenshot, they noticed markings at two locations: one labelled as Shri Ram Janmabhoomi Temple and the other as Babri Masjid. The latter, upon closer inspection and with the aid of Google's satellite prowess, was revealed to be the Shri Sita-Ram Birla Temple, a place of worship that stands in quiet dignity, far removed from the contentious whispers of social media.

The truth, as it often does, lay buried beneath layers of user-generated content on Google Maps, where the ability to tag any location with a name has sometimes led to the dissemination of incorrect information. This can be corrected, of course, but not before it has woven itself into the fabric of public discourse. The fact-check by IndiaTV revealed that the location mentioned in the viral screenshot is, indeed, the Shri Sita-Ram Birla Temple and the Ram Temple is being constructed at its original, intended site.

This revelation is not merely a victory for truth over falsehood but also a testament to the resilience of facts in the face of a relentless onslaught of misinformation. It is a reminder that the digital realm, for all its wonders, is also a shadowy theatre where narratives are constructed and deconstructed with alarming ease. The very basis of all the fake narratives that spread around significant events, such as the consecration ceremony of the Ram Temple, is the manipulation of truth, the distortion of reality to serve nefarious ends of spreading misinformation.

Fake Narratives; Misinformation

Consider the elaborate fake narratives spun around the ceremony, where hours have been spent on the internet building a web of deceit. Claims such as 'Mandir wahan nahin banaya gaya' (The temple is not being built at the site of the demolition) and the issuance of new Rs 500 notes for the Ram Mandir were some pieces of misinformation that went viral on social media amid the preparations for the consecration ceremony. These repetitive claims, albeit differently worded, were spread to further a single narrative on the internet, a phenomenon that a study published in Nature said could be attributed to people taking some peripheral cues as signals for truth, which can increase with repetition.

The misinformation incidents surrounding the Ram Temple in Ayodhya are a microcosm of the larger battle between truth and misinformation. The false claims circulating online assert that the ongoing construction is not taking place at the original Babri Masjid site but rather 3 kilometres away. This misinformation, shared widely on social media, has been debunked upon closer examination. The claim is based on a screenshot of Google Maps showing two locations: the construction site of the Shri Ram Janmabhoomi Temple and another spot labelled 'Babar Masjid permanently closed', situated 3 kilometres away. The assertion questions the legitimacy of demolishing the Babri Masjid if the temple is being built elsewhere. However, a thorough fact-check reveals the claim to be entirely unfounded.

Deep Scrutiny

Upon scrutiny, the screenshot indicates that the second location marked as 'Babar Masjid' is, in fact, the Sita-Ram Birla Temple in Ayodhya. This is verified by comparing the Google Maps satellite image with the actual structure of the Birla Temple. Notably, the viral screenshot misspells 'Babri Masjid' as 'Babar Masjid,' casting doubt on its credibility. Satellite images from Google Earth Pro clearly depict the construction of a temple-like structure at the precise coordinates of the original Babri Masjid demolition site (26°47'43.74"N 82°11'38.77"E). Comparing old and new satellite images further confirms that major construction activities began in 2011, aligning with the initiation of the Ram Temple construction.
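Readers who want to look up the quoted coordinates in a mapping tool usually need them in decimal degrees. The following is a minimal sketch of that conversion. The function name `dms_to_decimal` is illustrative, and the parser assumes the simple degrees, minutes, seconds and hemisphere layout used in the paragraph above, accepting either a single or a double mark after the seconds.

```python
import re

# Matches strings such as 26°47'43.74"N; the seconds mark may be ' or ".
DMS_PATTERN = re.compile(r"""(\d+)°(\d+)'(\d+(?:\.\d+)?)["']([NSEW])""")


def dms_to_decimal(dms: str) -> float:
    """Convert a degrees-minutes-seconds coordinate to decimal degrees."""
    match = DMS_PATTERN.fullmatch(dms.strip())
    if match is None:
        raise ValueError(f"Unrecognised coordinate format: {dms!r}")
    degrees, minutes, seconds, hemisphere = match.groups()
    value = int(degrees) + int(minutes) / 60 + float(seconds) / 3600
    # Southern and western hemispheres are negative in decimal notation.
    return -value if hemisphere in "SW" else value


if __name__ == "__main__":
    latitude = dms_to_decimal("26°47'43.74\"N")
    longitude = dms_to_decimal("82°11'38.77\"E")
    print(f"{latitude:.4f}, {longitude:.4f}")
```

The resulting pair, roughly 26.7955, 82.1941, can be pasted into a satellite imagery tool to compare older and newer images of the same spot.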

Moreover, existing photographs of the Babri Masjid, though challenging to match precisely, share essential structural elements with the current construction site, reinforcing the location as the original site of the mosque. Hence the viral claim that the Ram Temple is being constructed 3 kilometres away from the Babri Masjid site is indubitably false. Evidence from historical photographs, satellite images and Google imagery conclusively refutes this misinformation, attesting that the temple construction is indeed taking place at the same location as the original Babri Masjid.

Viral Misinformation: A false claim based on a misleading Google Maps screenshot suggests the Ram Temple construction in Ayodhya has been covertly shifted 3 kilometres away from its original Babri Masjid site.

Fact Check Revealed: IndiaTVFactCheck debunked the misinformation, confirming that the viral screenshot actually showed the Shri Sita-Ram Birla Temple, not the Babri Masjid site. The Ram Temple is indeed being constructed at its original, intended location, exposing the falsehood of the claim.

Conclusion

The case of the Ram Temple is a poignant reminder of the power of misinformation and the significance of fact-checking in preserving the integrity of truth. It is a clarion call to question, and to uphold the integrity of facts in a world increasingly mired in the murky waters of falsehood. The spread of this claim highlights the critical role of fact-checking in dispelling false narratives and in sustaining a more informed society.

References

- https://www.indiatvnews.com/fact-check/fact-check-is-ram-temple-being-built-3-km-away-from-the-birthplace-here-truth-behind-viral-claim-2024-01-19-912633

- https://www.thequint.com/news/webqoof/misinformation-spread-around-events-ayodhya-ram-mandir-g20-elections-bharat-jodo-yatra