#FactCheck - AI-Generated Image Falsely Linked to Doda Army Vehicle Accident

Executive Summary

On January 22, 2026, an Indian Army vehicle met with an accident in Jammu and Kashmir’s Doda district, killing 10 soldiers and injuring several others. In connection with this tragic incident, a photograph is now going viral on social media. The image shows an Army vehicle that appears to have fallen into a deep gorge, with several soldiers visible around the site. Users sharing the image claim that it depicts the actual scene of the Doda accident.

However, research by CyberPeace has found that the viral image is not genuine. The photograph was generated using Artificial Intelligence (AI) and does not show the real accident. Hence, the viral post is misleading.

Claim

An Instagram user shared the viral image on January 22, 2026, writing: “Deeply saddened by the tragic accident in Doda, Jammu & Kashmir today, in which 10 brave soldiers lost their lives. My heartfelt tribute to the martyrs who laid down their lives in the line of duty. Sincere condolences to the bereaved families, and prayers for the speedy recovery of the injured soldiers. The nation will forever remember your sacrifice.”

The link and screenshot of the post can be seen below.

- https://www.instagram.com/p/DT0UBIRk_3k/

- https://archive.ph/submit/?url=https%3A%2F%2Fwww.instagram.com%2Fp%2FDT0UBIRk_3k%2F+

Fact Check

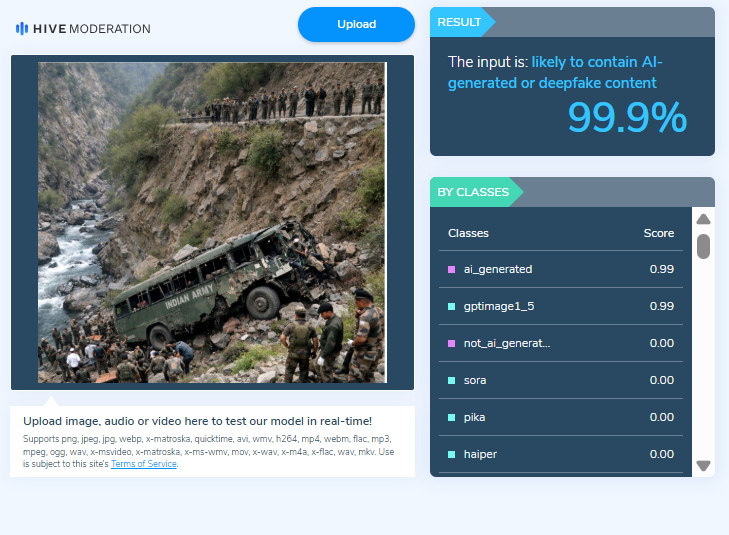

To verify the claim, we first examined the viral image closely and observed several visual inconsistencies. The figure of the soldier visible inside the damaged vehicle appears distorted, and the hands and limbs of the people involved in the rescue operation look unnatural. These anomalies raised the suspicion that the image might be AI-generated. We therefore ran the image through the AI detection tool Hive Moderation, which rated it as more than 99.9% likely to be AI-generated.

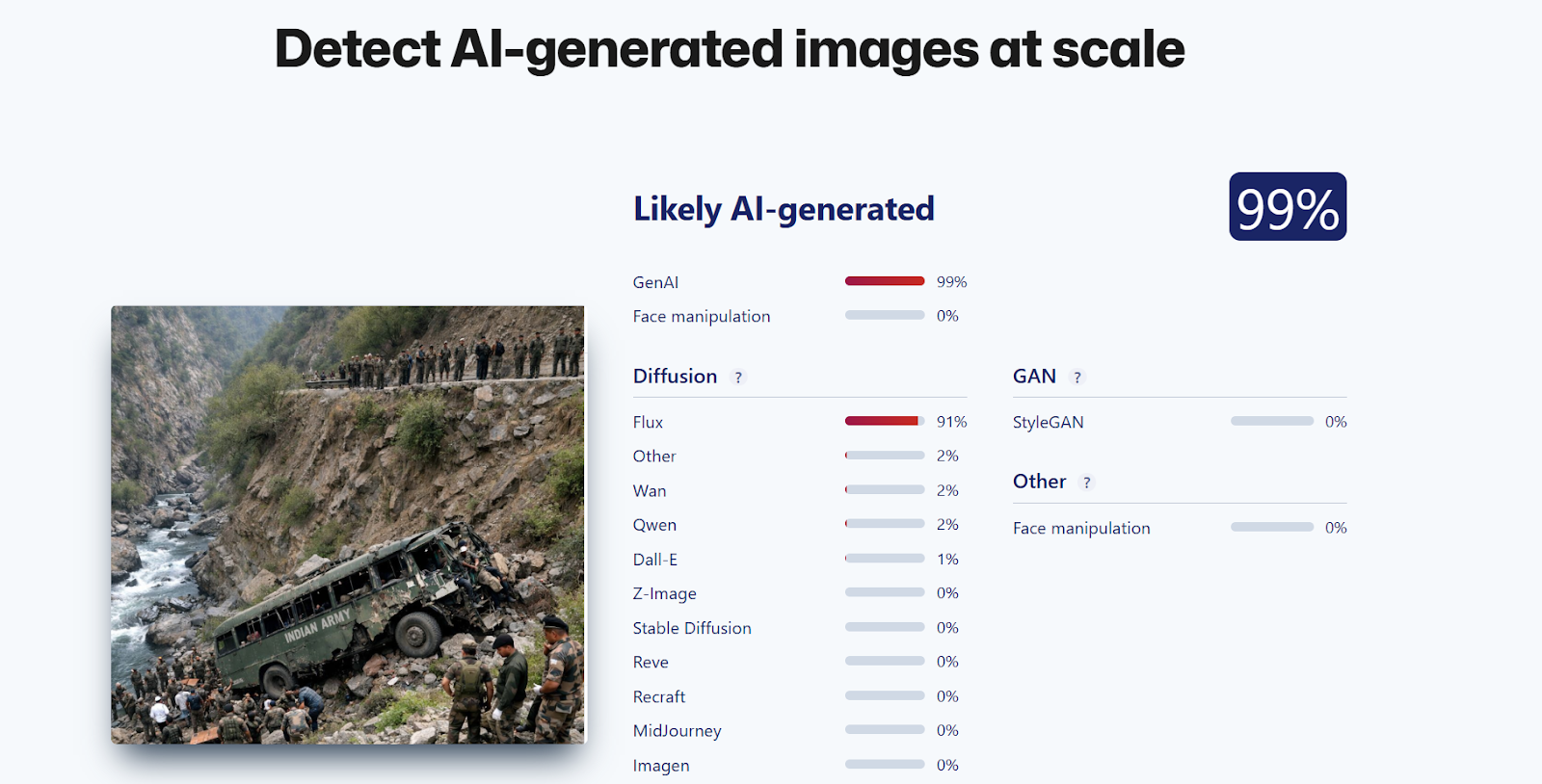

Another AI image detection tool, Sightengine, also flagged the image as 99% likely to be AI-generated.
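For readers who want to repeat this kind of cross-check, the short Python sketch below shows one way to combine scores from more than one detector into a cautious verdict. It is only an illustration: the function name `summarize_detector_scores` and the 0.9 threshold are our own assumptions, not part of either tool, and the scores are typed in by hand from the results reported above rather than fetched from any API.

```python
def summarize_detector_scores(scores, threshold=0.9):
    """Return a cautious verdict from per-tool AI-likelihood scores (0.0 to 1.0).

    Hypothetical helper for illustration; the threshold is an assumption.
    """
    if not scores:
        raise ValueError("at least one detector score is required")
    for tool, score in scores.items():
        if not 0.0 <= score <= 1.0:
            raise ValueError(f"{tool}: score {score} is outside the range 0.0 to 1.0")

    # Tools whose score meets or exceeds the threshold count as flagging the image.
    flagged = [tool for tool, score in scores.items() if score >= threshold]

    if len(flagged) == len(scores):
        return "likely AI-generated"   # every tool agrees
    if flagged:
        return "inconclusive"          # tools disagree
    return "not flagged"               # no tool crossed the threshold


# Scores reported in this fact check, entered by hand as fractions.
reported = {"Hive Moderation": 0.999, "Sightengine": 0.99}
print(summarize_detector_scores(reported))
```

Requiring agreement between tools is a deliberately conservative choice. A "not flagged" result does not prove that an image is authentic, so detector scores should be read alongside the visual inspection described above.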

During further research, we found a report published by Navbharat Times on January 22, 2026, which confirmed that an Indian Army vehicle had indeed fallen into a deep gorge in Doda district. According to officials, 10 soldiers were killed and 7 others were injured, and rescue operations were launched immediately.

However, it is important to note that the image circulating on social media is not an actual photograph from the incident.

Conclusion

CyberPeace research confirms that the viral image linked to the Doda Army vehicle accident has been created using Artificial Intelligence. It is not a real photograph from the incident, and therefore, the viral post is misleading.