#FactCheck - AI-Cloned Audio in Viral Anup Soni Video Promoting Betting Channel Revealed as Fake

Executive Summary:

A morphed video, widely shared on social media, that shows actor Anup Soni promoting an IPL betting Telegram channel has been found to be fake. The audio in the video was produced through AI voice cloning, and the manipulation was identified using AI detection and deepfake analysis tools. In the original footage, Mr Soni narrates a crime case as part of the popular show Crime Patrol, which is unrelated to betting. It is therefore clear that Anup Soni is in no way associated with the betting channel.

Claims:

A Facebook post claims that actor Anup Soni is promoting an IPL betting Telegram channel belonging to Rohit Khattar.

Fact Check:

Upon receiving the post, the CyberPeace Research Team closely analyzed the video and found major discrepancies of the kind commonly seen in AI-manipulated videos: the lip movements in the video do not match the audio. Taking a cue from this, we analyzed the video using True Media's deepfake detection tool, which found the voice in the video to be 100% AI-generated.

We then extracted the audio and checked it with an audio deepfake detection tool named Hive Moderation, which found the audio to be 99.9% AI-generated.

We then divided the video into keyframes, ran a reverse image search on one of them, and found the original video uploaded by the YouTube channel LIV Crime.

On analysis, we found that the video had been edited at the 3:18 timestamp and altered with an AI-generated voice.

Hence, the viral video is AI-manipulated and not real. We have previously debunked similar AI voice manipulations involving other celebrities and politicians, used to misrepresent the actual context. Netizens must be careful before believing such AI-manipulated videos.

Conclusion:

In conclusion, the viral video claiming that actor Anup Soni promoted an IPL betting Telegram channel is false. The video has been manipulated using AI voice cloning technology, as confirmed by both the Hive Moderation AI detector and the True Media AI detection tool. Therefore, the claim is baseless and misleading.

- Claim: An IPL betting Telegram channel belonging to Rohit Khattar is being promoted by actor Anup Soni.

- Claimed on: Facebook

- Fact Check: Fake & Misleading


Introduction

In today’s digital world, data has emerged as the new currency that influences global politics, markets, and societies. Companies, governments, and tech behemoths seek to control data because it gives them influence and power. However, this growing reliance on data raises a fundamental challenge: how to balance privacy protection against innovation and data utility.

In recognition of the dangers posed by artificial intelligence, more than 200 Nobel laureates, scientists, and world leaders have recently signed the Global Call for AI Red Lines. The initiative urges governments to create legally binding international regulations on artificial intelligence by 2026. Its goal is to prevent AI from crossing ethical and security boundaries, particularly in areas such as political manipulation, mass surveillance, cyberattacks, and threats to democratic institutions.

One way to address the threat to privacy is pseudonymisation, which keeps data usable for research and innovation by replacing personal identifiers with artificial ones. Pseudonymisation thus directly advances the AI Red Lines initiative's mission of facilitating technological advancement while lowering the risks of data misuse and privacy violations.
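As an illustration of replacing identifiers with artificial ones, here is a minimal Python sketch using keyed hashing (HMAC-SHA256), one common pseudonymisation technique; it is an assumption chosen for illustration, not a method prescribed by the initiative or by any law. The function name `pseudonymise`, the key, and the sample record are hypothetical.

```python
import hashlib
import hmac

def pseudonymise(identifier: str, secret_key: bytes) -> str:
    """Replace a personal identifier with a deterministic keyed pseudonym.

    HMAC-SHA256 always maps the same identifier to the same pseudonym under a
    given key, so records can still be linked for analysis. Without the key,
    nobody can recompute pseudonyms by hashing guessed names or IDs.
    """
    return hmac.new(secret_key, identifier.encode("utf-8"), hashlib.sha256).hexdigest()

# Hypothetical key and record, for illustration only. In practice the key
# would be held in a separate, access-controlled store, never alongside the data.
KEY = b"example-secret-key-kept-elsewhere"
record = {"name": "Asha Rao", "age": 42, "diagnosis": "asthma"}

# Only the direct identifier is replaced; analytically useful fields are kept.
pseudonymised = {**record, "name": pseudonymise(record["name"], KEY)}
print(pseudonymised)
```

A keyed hash is used rather than a plain hash because common identifiers such as names or phone numbers could otherwise be re-identified simply by hashing a list of likely values.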

The Red Lines of AI: Why do they matter?

The Global Call for AI Red Lines represents a collective attempt to impose precaution before catastrophe, with the objective of identifying the red lines in the use of AI tools. What unites the risks of AI use is the absence of global safeguards. Some of these risks can be understood as follows:

- Cybersecurity breaches that expose financial and personal data through AI-driven hacking and surveillance.

- Privacy invasions resulting from pervasive tracking.

- Realistic fake content created with generative AI, which spreads misinformation and undermines trust in public discourse.

- Algorithmic amplification of polarising content, which can threaten civic stability and lead to democratic disruption.

Legal Frameworks and Regulatory Landscape

AI regulation remains fragmented across jurisdictions, leaving significant loopholes. Some existing frameworks provide partial guidance. The European Union’s Artificial Intelligence Act 2024 bans “unacceptable” AI practices, while the US-China agreement affirms that control over nuclear weapons should remain with humans rather than machines. The UN General Assembly has adopted resolutions urging safe and ethical AI use, though a binding global treaty remains elusive.

On the data protection front, the EU’s General Data Protection Regulation (GDPR) offers a clear definition of pseudonymisation under Article 4(5). It describes a process in which personal data is altered so that it can no longer be attributed to a specific individual without the use of additional information, which must be kept separately and securely. Importantly, pseudonymised data still qualifies as “personal data” under the GDPR. India’s Digital Personal Data Protection Act, 2023 (DPDP Act) takes a similar but less explicit stance: it does not define pseudonymisation, but it defines “personal data” broadly enough to include potentially reversible identifiers. Section 8(4) of the Act requires data fiduciaries to implement appropriate technical and organisational measures. International bodies and instruments such as the OECD Principles on AI and the Council of Europe’s Convention 108+ emphasise accountability, transparency, and data minimisation. Collectively, these instruments point towards pseudonymisation as a best practice, though interpretations of its scope differ.
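To make the GDPR's "additional information kept separately" concrete, here is a minimal Python sketch of reversible pseudonymisation by tokenisation, assuming a simple in-memory lookup table. The `TokenVault` class, its methods, and the sample records are hypothetical and purely illustrative; a real deployment would keep the mapping in a separately secured system. The mapping is the additional information: whoever holds it can re-identify the data, which is why pseudonymised data remains personal data.

```python
import secrets

class TokenVault:
    """Hypothetical reversible pseudonymisation store (illustration only).

    The token-to-identity mapping held here is the "additional information"
    that must be stored separately from the pseudonymised dataset.
    """

    def __init__(self) -> None:
        self._token_for: dict[str, str] = {}
        self._identity_for: dict[str, str] = {}

    def tokenise(self, identifier: str) -> str:
        # Reuse the existing token so the same person always gets the same pseudonym.
        if identifier not in self._token_for:
            token = "P-" + secrets.token_hex(8)
            while token in self._identity_for:  # guard against a (rare) collision
                token = "P-" + secrets.token_hex(8)
            self._token_for[identifier] = token
            self._identity_for[token] = identifier
        return self._token_for[identifier]

    def reidentify(self, token: str) -> str:
        # Only possible for whoever has access to the separately stored mapping.
        return self._identity_for[token]

vault = TokenVault()
rows = [
    {"customer": "alice@example.com", "amount": 120},
    {"customer": "bob@example.com", "amount": 75},
    {"customer": "alice@example.com", "amount": 30},
]
# The copy that leaves the organisation contains tokens, not e-mail addresses.
shared = [{**row, "customer": vault.tokenise(row["customer"])} for row in rows]
print(shared)
print(vault.reidentify(shared[0]["customer"]))
```

Because the same customer always receives the same token, the shared rows can still be grouped per person, yet a recipient without access to the vault cannot tell who that person is.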

Strategies for Corporate Implementation

For a company, pseudonymisation is not just about compliance; it is also a practical measure that offers tangible benefits. By pseudonymising data, businesses can gain benefits such as:

- Enhancing privacy protection: masking identifiers such as names or IDs reduces the impact of data breaches.

- Preserving data utility: unlike full anonymisation, pseudonymisation retains the patterns essential for analytics and innovation.

- Facilitating data sharing: organisations can collaborate with partners and researchers while maintaining trust.

These benefits also translate into competitive advantages: customers are more likely to trust organisations that prioritise data protection, and pseudonymisation helps firms engage in cross-border collaboration without violating local data laws.

Balancing Privacy Rights and Data Utility

Striking this balance is a central dilemma. On one side lies the need for data utility, as companies, researchers and governments rely on large datasets to scale AI innovation. On the other lies the right to privacy, a non-negotiable principle protected under international human rights law.

Pseudonymisation offers a practical compromise by enabling the use of sensitive data while reducing privacy risks. In healthcare, for example, it allows researchers to work with patient information without exposing identities; in finance, it supports fraud detection without revealing customer details.
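The finance case can be sketched in Python, assuming keyed HMAC-SHA256 pseudonyms and a deliberately simple rule (flag any customer with at least two transactions above a threshold). The key, transactions, threshold, and helper names are hypothetical, and real fraud-detection models are far more sophisticated. The point is that the analysis runs entirely on pseudonyms, and only the party holding the key can link a flagged pseudonym back to a customer.

```python
import hashlib
import hmac
from collections import defaultdict

# Hypothetical key held only by the data controller, never shared with analysts.
KEY = b"controller-only-secret"

def pseudonymise(customer_id: str) -> str:
    return hmac.new(KEY, customer_id.encode("utf-8"), hashlib.sha256).hexdigest()

# Hypothetical transactions; analysts only ever see the pseudonymised copy.
raw = [("cust-001", 250.0), ("cust-002", 40.0), ("cust-001", 9800.0),
       ("cust-003", 15.0), ("cust-001", 9900.0)]
shared = [(pseudonymise(cid), amount) for cid, amount in raw]

def flag_high_value(transactions: list[tuple[str, float]],
                    threshold: float, min_count: int) -> set[str]:
    """Return pseudonyms with at least min_count transactions above threshold."""
    counts: dict[str, int] = defaultdict(int)
    for pseudonym, amount in transactions:
        if amount > threshold:
            counts[pseudonym] += 1
    return {p for p, n in counts.items() if n >= min_count}

flagged = flag_high_value(shared, threshold=5000.0, min_count=2)

# The controller, holding KEY, can recompute pseudonyms for its own customer
# list to find out which customer a flagged pseudonym belongs to.
print(flagged == {pseudonymise("cust-001")})  # → True
```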

Conclusion

The rapid rise of artificial intelligence has outpaced regulation, raising urgent questions about safety, fairness and accountability. The Global Call for AI Red Lines is a bold step towards setting universal boundaries. Yet, while a binding global treaty remains elusive, practical safeguards are also needed. Pseudonymisation exemplifies such a safeguard: it is legally recognised under the GDPR and increasingly relevant under India’s DPDP Act. It balances the twin imperatives of privacy protection and data utility. For organisations, adopting pseudonymisation is not only about regulatory compliance; it is also about building trust, ensuring resilience, and aligning with broader ethical responsibilities in the digital age. While the future of AI remains uncertain, its guiding principles need to be clear. By embedding privacy-preserving techniques such as pseudonymisation into AI systems, we can take a significant step towards a sustainable, ethical and innovation-driven digital ecosystem.

Executive Summary:

A viral picture on social media showing UK police officers bowing to a group of Muslims has sparked debate and discussion. An investigation by the CyberPeace Research Team found that the image is AI-generated. The viral claim is false and misleading.

Claims:

A viral image on social media depicts UK police officers bowing to a group of Muslim people on the street.

Fact Check:

A reverse image search was conducted on the viral image, but it did not lead to any credible news source or original post confirming the image's authenticity. In our analysis of the image, we found a number of anomalies typical of AI-generated images, including in the officers' uniforms and facial expressions. The shadows and reflections on the officers' uniforms did not match the lighting of the scene, and the facial features of the individuals appeared unnaturally smooth, lacking the detail expected in real photographs.

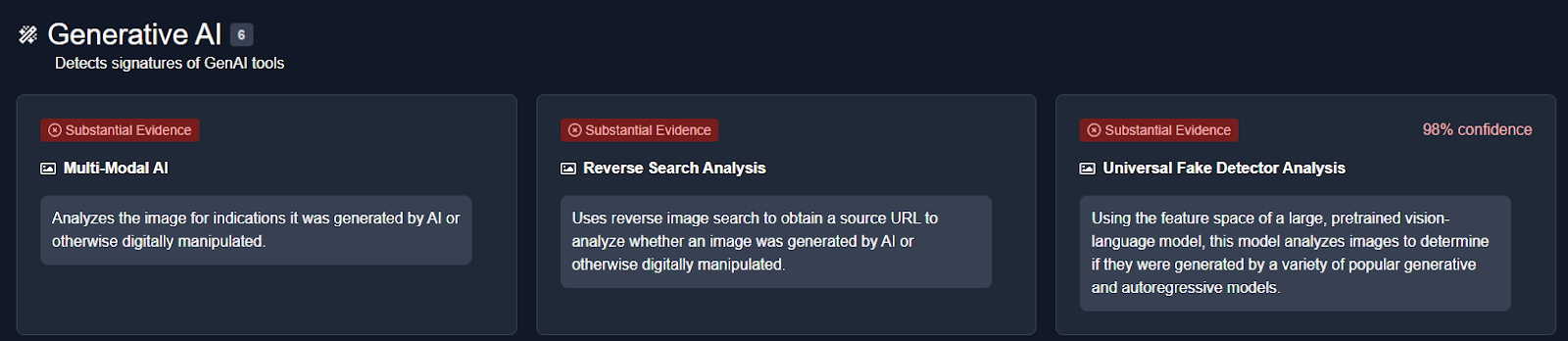

We then analysed the image using an AI detection tool named True Media. The tool indicated that the image was highly likely to have been generated by AI.

We also checked official UK police channels and news outlets for any records or reports of such an event. No credible sources reported or documented any instance of UK police officers bowing to a group of Muslims, further confirming that the image is not based on a real event.

Conclusion:

The viral image of UK police officers bowing to a group of Muslims is AI-generated. The CyberPeace Research Team confirms that the picture was artificially created and that the viral claim is false and misleading.

- Claim: UK police officers were photographed bowing to a group of Muslims.

- Claimed on: X, Website

- Fact Check: Fake & Misleading

Introduction

Freedom of speech and expression is fundamental to democracy and is constitutionally entrenched in Article 19(1)(a) of the Indian Constitution. The explosion of online spaces brought about by the digital age, in the form of social media, blogs, and messaging apps, has transformed how information is created, disseminated, and consumed. This digital revolution has enabled more people to engage in public debate, but it has also dramatically magnified the risks of misinformation, hate speech, and threats to public order. Against this background, the judiciary is increasingly called upon to determine the limits of free speech, particularly where state regulation encroaches on constitutionally protected expression.

Constitutional and Statutory Framework related to Freedom of Speech

The judiciary plays an integral role in balancing the fundamental right to freedom of speech with the regulation of online content, especially amid the fast-paced evolution of the digital world. In India, where Article 19(1)(a) of the Constitution guarantees freedom of speech, the courts bear the critical responsibility of protecting this liberty while recognising the State's legitimate interest in restricting harmful or unlawful content at a digital scale. This adjudicatory dilemma is made harder by the fact that the Supreme Court has held the right not to be absolute: it is subject to "reasonable restrictions" under Article 19(2), which permits restrictions in the interests of, among others, sovereignty, security, public order, decency, and morality. Freedom of speech, the cornerstone of Indian democracy, thus operates within an umbrella of reasonable restrictions under which the State can regulate speech that infringes upon other equally compelling societal interests.

With the advent of the internet and other digital communication platforms, new statutory instruments were needed, namely the Information Technology Act, 2000 (IT Act) and the Rules made thereunder, including the Information Technology (Intermediary Guidelines and Digital Media Ethics Code) Rules, 2021. These enactments attempt to regulate digital content, confronting issues such as hate speech, misinformation, and content that threatens public order. The judiciary's mandate is to interpret them within constitutional limits, ensuring that State action is not arbitrary and that regulation is not overbroad.

Judicial Landmark Decisions Affirming Balance

The judiciary has played a leading role in developing a jurisprudence that protects free speech while delineating the bounds of legitimate regulation. The Supreme Court's judgment in Shreya Singhal v. Union of India (2015) is seminal. In that case, the Court struck down Section 66A of the IT Act as vague and overly broad, holding that it had a chilling effect on online speech. The Court emphasised that any limitation on speech must be precise and fall strictly within the parameters laid down in Article 19(2). While the Court recognised that harmful online content needs to be addressed, it held that the remedy must not encroach upon the political debate, satire, and criticism vital to democracy.

Following this, in Anuradha Bhasin v. Union of India (2020), the Supreme Court clarified the relationship between free speech and online access. The Court held that the internet is a vital medium for exercising the right to free speech, and that any internet shutdown must be necessary, proportionate, and temporary, with orders made public so that they can be challenged before the judiciary. This reaffirmed that restrictions on online speech must be rigorously tested.

Subsequent cases have challenged provisions of the IT Rules that allow government bodies to demand the removal of “fake” or “misleading” material from the internet. Courts have proceeded with circumspection, recognising the government's interest in combating false information while remaining vigilant against over-regulation that could amount to pre-emptive censorship and threaten healthy discourse.

The virtual world raises particular and deeper questions: the viral nature of online speech multiplies its impact, spreading both democratic ideas and abusive material instantaneously. The courts recognise this duality. While pressing the legislature and executive to frame clearer, more precise rules, courts simultaneously act as constitutional guardians, preventing violations of the right through executive excess or vague laws. There is a tension between judicial activism, which aggressively promotes constitutional rights, and the fear of judicial paternalism, in which courts overreach into policy arenas. Nevertheless, the rapidly changing nature of digital technologies and the threats they pose to democratic freedoms call for judicial vigilance.

The judiciary continues to shape the contours of free speech and online regulation. Enforcement problems persist, such as the continued invocation of struck-down provisions like Section 66A, which the courts counter by reaffirming constitutional directives. The evolving jurisprudence strikes a delicate balance, upholding India's democratic spirit by protecting speech in online spaces while permitting reasonable, transparent moderation of harmful speech.

Conclusion

The Indian judiciary's leadership in balancing online content regulation with freedom of speech is central and nuanced. The courts continually emphasise that speech in the digital medium enjoys strong constitutional protection and that restrictions must be legally valid, specific, necessary, and proportionate. Through landmark decisions and constant review of new regulatory measures, courts safeguard democratic participation in the digital public sphere from unwarranted censorship. At the same time, the courts recognise the State's responsibility to address digital ills such as misinformation and hate speech, while insisting on safeguards that uphold constitutional freedoms and due process. The judiciary's balancing act remains fundamental to defining India's digital democracy, so that free speech can thrive even as the State upholds public order and human dignity in the digital communication age.