#FactCheck-Air Taxi is a prototype and has not been launched for commercial public use

Executive Summary:

Recent reports circulating on various social media platforms have falsely claimed that an air taxi prototype is operational and providing services between Amritsar, Chandigarh, Delhi, and Jaipur. These claims, accompanied by images and videos, have been widely shared and have drawn significant public attention. However, an examination using reverse image search determined that the information is misleading and inaccurate. These assertions do not reflect the current reality and are not substantiated by credible sources.

Claim:

The claim suggests that an air taxi prototype is already operational, servicing routes between Amritsar, Chandigarh, Delhi, and Jaipur. This assertion is accompanied by images of a futuristic aircraft, implying that such technology is currently being used to transport commercial passengers.

Fact Check:

The claim that an air taxi is operating on routes between Amritsar, Chandigarh, Delhi, and Jaipur has been found to be misleading. So far, neither the Indian government nor the aviation authorities have made any public declaration of an air taxi service launch, and no industry insiders have claimed one. A keyword-based search led us to a news report published in The Times of India on January 20, 2025, which carried an image similar to the one in the viral post. The report stated that Bengaluru-based aerospace startup Sarla Aviation launched its prototype air taxi, called “Shunya”, during the Bharat Mobility Global Expo, and that the company plans to introduce electric flying taxis in Bengaluru by 2028. The viral posts appear to draw on this planned urban air transport programme rather than on any operating service.

Conclusion:

The viral claim that an air taxi service operates in India between Amritsar, Chandigarh, Delhi, and Jaipur is entirely false. The pictures and information going viral are misleading and do not show any operational air taxi service in India. To date, there is no official confirmation or credible evidence that supports such a service. Information must be verified from reliable sources before it is believed or shared in order to prevent the spread of misinformation.

- Claim: A viral post claims an air taxi is operational between Amritsar, Chandigarh, Delhi, and Jaipur.

- Claimed On: Social Media

- Fact Check: False and Misleading

Related Blogs

Introduction

In September 2025, social media feeds were flooded with striking vintage-style saree portraits. These images were not taken by professional photographers; they were AI-generated. More than a million people turned to the "Nano Banana" AI tool of Google Gemini, uploading ordinary selfies and watching them transform into cinematic, Bollywood-style 1990s posters. The popularity of this trend is evident, as are the concerns of law enforcement agencies and cybersecurity experts about privacy infringement, unauthorised data sharing, and deepfake misuse.

What is the Trend?

The AI saree trend is created using Google Gemini's Nano Banana image-editing tool, which morphs uploaded selfies into glitzy vintage portraits in traditional Indian attire. A user uploads a clear photograph of a solo subject and enters prompts to generate cinematic backgrounds, flowing chiffon sarees, golden-hour ambience, and grainy film texture reminiscent of classic Bollywood imagery. Since its launch, the tool has processed over 500 million images, with the saree trend marking one of its most popular uses. The photographs are uploaded to an AI system, which uses machine learning to alter them according to the prompt. Users then share the transformed portraits on Instagram, WhatsApp, and other social media platforms, which contributes to the viral nature of the trend.

Law Enforcement Agency Warnings

- A few Indian police agencies have issued strong advisories against participation in such trends. IPS Officer VC Sajjanar warned the public: "The uploading of just one personal photograph can make greedy operators go from clicking their fingers to joining hands with criminals and emptying one's bank account." His advisory further warned that sharing personal information through trending apps can lead to scams and fraud.

- Jalandhar Rural Police issued a comprehensive warning stating that such applications put users at risk of identity theft and online fraud when personal pictures are uploaded. A senior police officer stated: "Once sensitive facial data is uploaded, it can be stored, analysed, and even potentially misused to open the way for cyber fraud, impersonation, and digital identity crimes."

- The Cyber Crime Police also put out warnings on social media platforms about how photo applications appear entertaining but can pose serious risks to user privacy. They specifically warned that uploaded selfies can lead to data misuse, deepfake creation, and the generation of fake profiles, which are punishable under Sections 66C and 66D of the IT Act 2000.

Consequences of Such Trends

The mass adoption of AI photo trends has several severe effects on individual users and on society as a whole. Identity fraud and theft are the main issues, as hackers can use uploaded biometric information to create fake identities, evade security measures, or commit financial fraud. The facial data shared through these trends remains a digital asset that could be abused years after the trend has passed. Deepfake production is another major threat, because personal images shared on AI platforms can be used to create non-consensual synthetic media. Studies have found that more than 95,000 deepfake videos circulated online in 2023 alone, a 550% increase from 2019. Uploaded images can be used to produce embarrassing or harmful content that damages personal reputation, relationships, and career prospects.

Financial exploitation also occurs when fake applications posing as genuine AI tools strip users of their personal data and financial details. Such malicious platforms tend to imitate well-known services in order to trick users into divulging sensitive information. Long-term privacy infringement arises from the permanent retention and possible commercial exploitation of personal biometric information by AI firms, even after users close their accounts.

Privacy Risks

A few months ago, the Ghibli trend went viral, and now this new trend has taken over. Such trends may subject users to several layers of privacy threats that go far beyond the instant gratification of taking pleasing images. Harvesting of biometric data is the most critical issue since facial recognition information posted on these sites becomes inextricably linked with user identities. Under Google's privacy policy for Gemini tools, uploaded images might be stored temporarily for processing and may be kept for longer periods if used for feedback purposes or feature development.

Illegal data sharing happens when AI platforms provide user-uploaded content to third parties without user consent. A Mozilla Foundation study in 2023 found that 80% of popular AI apps had either non-transparent data policies or obscured the ability of users to opt out of data gathering. This opens up opportunities for personal photographs to be shared with unknown entities for commercial use. Exploitation of training data involves using uploaded personal photos to improve AI models without notifying or compensating users. Although Google gives users options to turn off data sharing in its privacy settings, most users are unaware of them. Cross-platform data integration increases privacy threats when AI applications draw on data from interlinked social media profiles, building detailed user profiles that can be exploited for targeted manipulation or fraud. Lack of informed consent continues to be a major problem, with users joining trends without understanding the full context of the information they share. Studies show that 68% of individuals are concerned about the misuse of AI app data, but 42% use these apps without reading the terms and conditions.

CyberPeace Expert Recommendations

While the Google Gemini image trend feature operates under its own terms and conditions, it is important to remember that many other tools and applications allow users to generate similar content. Not every platform can be trusted without scrutiny, so users who engage in such trends should do so only on trustworthy platforms and make reliable, informed choices. Above all, following cybersecurity best practices and digital security principles remains essential.

Here are some best practices:

1. Immediate Protection Measures for Users

Protecting personal information can begin with not uploading high-resolution personal photos to AI-based applications, especially those trained for facial recognition. Instead, users can experiment with stock images or non-identifiable pictures, which exercise the application's creative features without compromising biometric security. Strong privacy settings should be enabled on every social media platform and AI app to limit access to personal data and content.

2. Organisational Safeguards

AI governance frameworks within organisations should set out policies on employees' use of AI tools, particularly the upload of personal data. Companies should carry out due diligence before adopting a commercially available AI product to ensure that its privacy and security levels meet the company's requirements. Training should educate employees about deepfake technology.

3. Technical Protection Strategies

Deepfake detection software should be used. These tools, which include Microsoft Video Authenticator, Intel FakeCatcher, and Sensity AI, allow real-time detection with an accuracy higher than 95%. Blockchain-based content verification can also create tamper-proof records of original digital assets, making it much harder to pass off deepfake content as original.
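The idea of a tamper-proof record of original assets can be illustrated with a small hash chain. This is a minimal sketch, not a real blockchain: there is no network, consensus, or signing, and the names `ContentLedger`, `register`, `is_registered`, and `chain_is_intact` are hypothetical, chosen only for illustration. It assumes that an "original" is identified by the SHA-256 digest of its bytes.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def digest(data: bytes) -> str:
    """Return the SHA-256 hex digest of some bytes."""
    return hashlib.sha256(data).hexdigest()


def _entry_hash(label: str, content_hash: str, prev_hash: str) -> str:
    """Hash the fields of an entry in a stable (sorted-key) JSON form."""
    body = {"label": label, "content_hash": content_hash, "prev_hash": prev_hash}
    return digest(json.dumps(body, sort_keys=True).encode("utf-8"))


class ContentLedger:
    """Append-only, hash-chained log of content digests (illustrative only)."""

    def __init__(self) -> None:
        self.entries = []

    def register(self, label: str, content: bytes) -> dict:
        """Record the digest of an original asset, linked to the previous entry."""
        prev_hash = self.entries[-1]["entry_hash"] if self.entries else GENESIS
        content_hash = digest(content)
        entry = {
            "label": label,
            "content_hash": content_hash,
            "prev_hash": prev_hash,
            "entry_hash": _entry_hash(label, content_hash, prev_hash),
        }
        self.entries.append(entry)
        return entry

    def is_registered(self, content: bytes) -> bool:
        """True only if these exact bytes were registered as an original."""
        h = digest(content)
        return any(e["content_hash"] == h for e in self.entries)

    def chain_is_intact(self) -> bool:
        """Recompute every link; any edited entry breaks the chain."""
        prev = GENESIS
        for e in self.entries:
            expected = _entry_hash(e["label"], e["content_hash"], e["prev_hash"])
            if e["prev_hash"] != prev or e["entry_hash"] != expected:
                return False
            prev = e["entry_hash"]
        return True


ledger = ContentLedger()
original = b"original photo bytes"
ledger.register("portrait-001", original)
ledger.register("portrait-002", b"another original photo")
```

In this sketch, `ledger.is_registered(original)` holds for the untouched bytes, while any altered copy produces a different digest and is not recognised. Changing a stored entry after the fact makes `chain_is_intact()` fail, which is the property the paragraph above relies on.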

4. Policy and Awareness Initiatives

For high-risk transactions, especially in banking and identity verification systems, authentication should include voice and face liveness checks to ensure the person is real and not using fake or manipulated media. Digital literacy programmes should equip users with knowledge about AI threats, deepfake detection techniques, and safe digital practices. Companies should also liaise with law enforcement and report suspected AI-related crimes, helping to combat malicious uses of synthetic media technology.

5. Addressing Data Transparency and Cross-Border AI Security

Regulation should require AI applications to have transparent data policies and should give users rights and choices over their biometric and other data. Indigenous AI development that addresses India-centric privacy concerns should be promoted, so that AI models are created in a secure, transparent, and accountable manner. Cross-border AI security concerns call for international cooperation on common standards for the ethical design, production, and use of AI.

Viral AI phenomena such as the saree editing trend show both the potential and the hazards of the current generation of artificial intelligence. While such tools offer new opportunities, they also pose grave privacy and security concerns that users, organisations, and policy-makers should have considered long ago. By setting up comprehensive protection mechanisms and staying vigilant about digital privacy, both individuals and institutions can reap the benefits of AI innovation without falling victim to malicious exploitation.

References

- https://www.hindustantimes.com/trending/amid-google-gemini-nano-banana-ai-trend-ips-officer-warns-people-about-online-scams-101757980904282.html

- https://www.moneycontrol.com/news/india/viral-banana-ai-saree-selfies-may-risk-fraud-warn-jalandhar-rural-police-13549443.html

- https://www.parliament.nsw.gov.au/researchpapers/Documents/Sexually%20explicit%20deepfakes.pdf

- https://www.mckinsey.com/capabilities/quantumblack/our-insights/the-state-of-ai-in-2023-generative-ais-breakout-year

- https://socradar.io/top-10-ai-deepfake-detection-tools-2025/

Introduction

The rapid adoption of artificial intelligence (AI) tools and applications in companies has been largely presented as a groundbreaking development for enterprises. The promise of higher productivity, efficient scaling, and the elimination of repetitive tasks builds a narrative that practically writes itself for executives. What has largely been ignored, however, is its effect on its users: the employees. Evidence from across the United States, United Kingdom, and continental Europe indicates that being forced to work with AI is increasing psychological disengagement from work, along with the number of people who actively sabotage the very systems that companies have invested millions of dollars to implement.

The Backdrop: Quiet Quitting

Quiet quitting is a form of employee disengagement wherein workers meet only the basic expectations of their job. Gallup puts global employee engagement at just 21%. Its State of the Global Workplace 2026 report, which analysed employee well-being across 160 countries, reports that in India, employee and manager engagement has declined. Around 62% of workers describe themselves as not engaged, and another 17% are actively disengaged: not just drifting, but potentially pulling in the opposite direction. What does this mean for productivity? Gallup estimates this costs the global economy roughly $8.9 trillion in lost productivity each year, around 9% of world GDP. This is the workplace AI has entered.
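As a quick consistency check on the figures quoted above, the short sketch below adds up the three engagement shares and derives the world GDP implied by the lost-productivity estimate. It assumes, which the text does not state outright, that the 21%, 62%, and 17% shares describe the same population. The variable names are illustrative only.

```python
# Engagement shares quoted in the paragraph above (percent of workers).
# Assumption: all three figures describe the same surveyed population.
engaged = 21
not_engaged = 62
actively_disengaged = 17
total_share = engaged + not_engaged + actively_disengaged

# Lost productivity estimate and its stated share of world GDP.
lost_productivity_trillion_usd = 8.9
share_of_world_gdp = 0.09
implied_world_gdp_trillion_usd = lost_productivity_trillion_usd / share_of_world_gdp

print(total_share)                               # 100
print(round(implied_world_gdp_trillion_usd, 1))  # 98.9
```

The shares sum to 100%, and the estimate implies a world GDP of a little under $100 trillion, so the quoted numbers are internally consistent under that assumption.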

How AI Is Changing the Nature of Work

The promise was simpler work, but employees report that the reality is often more of it. AI raises output expectations without necessarily reducing effort. Workers now lose the equivalent of 51 working days per year to technology friction, nearly two full months and up 42% from 2025. Poorly integrated systems force employees to spend hours troubleshooting or correcting AI-generated outputs, adding cognitive load rather than removing it. Focus efficiency dropped to a three-year low of 60%, as collaboration time surged 34% and multitasking climbed 12%. AI is not eliminating work. It is transforming it into something more demanding and more fragmented.

The psychological dimension is equally well documented. TalentLMS research found that 54% of employees report persistent workplace unhappiness, with one in five experiencing it frequently or constantly. 29% report unmanageable workloads during this transition, and 15% do not clearly understand their role expectations in an AI-transformed workplace. When workers cannot see where they fit, withdrawal is a rational response.

Then there is the fear. IBM announced it would not replace roughly 7,800 back-office positions that could be handled by AI, framing it as natural attrition. Klarna said its AI assistant was doing the work of 700 full-time customer service agents. Dropbox laid off 16% of its workforce, with its CEO explicitly citing the need to “make room for AI.” AI was the leading cause of job cuts in March 2026, the first time that has happened since tracking began.

The Causal Link: AI Anxiety to Quiet Quitting

A peer-reviewed study published in March 2025 establishes the causal mechanism between forced AI adoption and employee disengagement. Conducted across 457 employees in Turkish SMEs, it found that AI anxiety does not directly compel people to resign. Instead, it triggers quiet quitting, a form of progressive disengagement that functions as a precursor to departure. Drawing on Withdrawal Progression Theory, the study frames quiet quitting as a preliminary stage of turnover intention, where withdrawal progresses from mild detachment toward eventual exit. The integrated causal chain runs as follows: forced AI adoption creates work intensification and job anxiety, which produce burnout and loss of autonomy, which trigger psychological withdrawal, which precedes turnover. DHR Global’s Workforce Trends Report for 2026 found that overall employee engagement dropped from 88% to 64% in a single year. Crucially, 69% of C-suite leaders say their company has communicated clearly about AI’s impact on jobs, but only 12% of entry-level staff agree. When the people most exposed to displacement are also the least informed about what is happening to their roles, disengagement is not a mystery. It is a response to a vacuum of information.

From Disengagement to Active Withdrawal

Quiet quitting is then a natural response. But what has emerged alongside it is something more active, and it is where the disengagement crisis tips into something organisations are unprepared for. The Writer and Workplace Intelligence survey of 2,400 knowledge workers found that 29% of employees admit to willfully withdrawing from their company’s AI strategy. Among Gen Z workers, that figure jumps to 44%. Active withdrawal takes several forms: entering proprietary data into public AI chatbots, using unapproved tools, outright refusing to engage with mandated platforms, and in some cases deliberately generating low-quality outputs to make the technology look ineffective. For Gen Z, the resistance has a structural logic. Junior roles in finance, law, and tech, the traditional “learning by doing” rungs of the career ladder, have declined by 32% since 2022. For a 22-year-old, AI is not a tool; it is a competitor that has already taken their first job. Yet workers who resist AI out of fear for their jobs are making themselves more vulnerable to the outcome they dread. 77% of executives say employees who refuse to become proficient in AI will not be considered for promotions or leadership roles, and 60% are considering cutting those who refuse to adopt it entirely.

Meanwhile, 75% of executives admit their company’s AI strategy is “more for show” than a meaningful guide to outcomes. Only 29% report significant ROI from generative AI, despite 97% claiming to have already deployed agents across their organisation. 39% of business leaders admit they made employees redundant as a result of deploying AI, of whom 55% concede they made the wrong decisions about those redundancies. Organisations are moving fast and getting it wrong, and the cost is being absorbed by the workforce.

Conclusion

AI is not directly causing quiet quitting. However, poorly executed AI adoption is changing working relationships and producing a predictable progression from passive to active disengagement. It is radically changing the way we work, primarily by increasing job demands, reducing autonomy, and raising worker anxiety, without offering transparency about how AI will be used in future. If AI continues to create a challenging work environment, it may lead to greater psychological detachment from work and ultimately to productivity losses, possibly cancelling out the very gains expected from AI integration. This globally rising disengagement raises the question: is the technology being deployed responsibly?

References

- https://www.gallup.com/workplace/349484/state-of-the-global-workplace.aspx

- https://www.walkme.com/news-releases/enterprises-lose-51-workdays-per-employee-to-technology-friction-annually-despite-record-ai-investment-walkme-global-study-of-3750-finds/

- https://www.activtrak.com/resources/state-of-the-workplace/

- https://peoplemanagingpeople.com/employee-retention/quiet-cracking/

- https://www.webpronews.com/the-quiet-revolt-gen-z-workers-are-deliberately-undermining-ai-deployments-from-the-inside/

- https://www.uctoday.com/productivity-automation/44-of-gen-z-workers-are-sabotaging-your-enterprise-ai-rollout-the-problem-isnt-gen-z/

- https://pmc.ncbi.nlm.nih.gov/articles/PMC11939379/

- https://huntscanlon.com/workforce-trends-2026-leaders-confront-burnout-disengagement-and-ai-driven-change/

- https://fortune.com/2026/04/08/gen-z-workers-sabotage-ai-rollout-backlash/

- https://www.hrgrapevine.com/us/content/article/2026-04-09-ai-adoption-is-tearing-companies-apart-says-new-report

- https://economictimes.indiatimes.com/news/new-updates/india-leads-in-workplace-disengagement-as-quiet-quitting-trend-rises-why-are-indians-mentally-checking-out-at-jobs/articleshow/130104773.cms?from=mdr

Executive Summary:

After the reported attacks by Israel and the United States on Iran, a video allegedly showing footballer Cristiano Ronaldo has been widely circulated on social media. In the clip, Ronaldo appears to be holding a Palestinian flag and chanting “Free Palestine.” Several users are sharing the video with the claim that Ronaldo waved the Palestinian flag and raised “Free Palestine” slogans after the death of Iran’s Supreme Leader Ali Khamenei. However, research by CyberPeace found that the claim is false. The viral clip does not depict a real event and has been generated using artificial intelligence. The fabricated video is being shared online with misleading claims.

Claim:

An Instagram user “ham_313_ka_admi” shared the viral video on March 2, 2026. The text on the video reads: “Cristiano Ronaldo waved the Palestinian flag after Khamenei’s death. Mashallah. Free Palestine.”

Fact Check:

To verify the claim, we searched Google using relevant keywords but found no credible news reports supporting the viral claim. We also reviewed the official social media accounts of Cristiano Ronaldo, where no such video or statement was posted. This raised suspicion that the clip might be AI-generated.

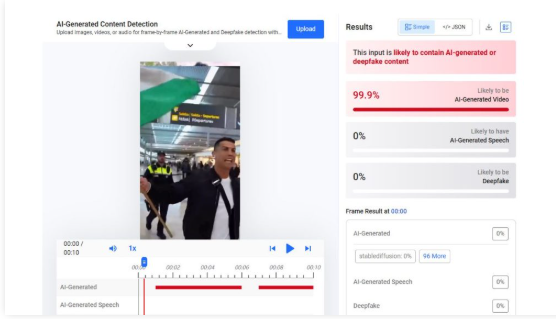

To further examine the video, we analyzed it using AI detection tools. The tool Hive Moderation indicated a 99.9% probability that the video was created using artificial intelligence.

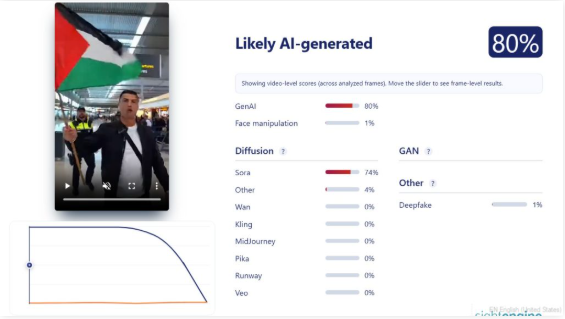

We also analyzed the footage using the Sightengine AI detection tool. The results suggested an 80% likelihood that the video was AI-generated. The tool also indicated that the clip may have been created using Sora, an AI video-generation tool.

Conclusion:

The viral video claiming that Cristiano Ronaldo waved the Palestinian flag and chanted “Free Palestine” after the death of Ali Khamenei is AI-generated. It does not depict a real incident and is being shared with a misleading claim.