#FactCheck: AI-Generated Audio Falsely Claims COAS Admitted to Loss of 6 Jets and 250 Soldiers

Executive Summary:

A viral video (archive link) claims General Upendra Dwivedi, Chief of Army Staff (COAS), admitted to losing six Air Force jets and 250 soldiers during clashes with Pakistan. Verification revealed the footage is from an IIT Madras speech, with no such statement made. AI detection confirmed parts of the audio were artificially generated.

Claim:

The claim in question is that General Upendra Dwivedi, Chief of Army Staff (COAS), admitted to losing six Indian Air Force jets and 250 soldiers during recent clashes with Pakistan.

Fact Check:

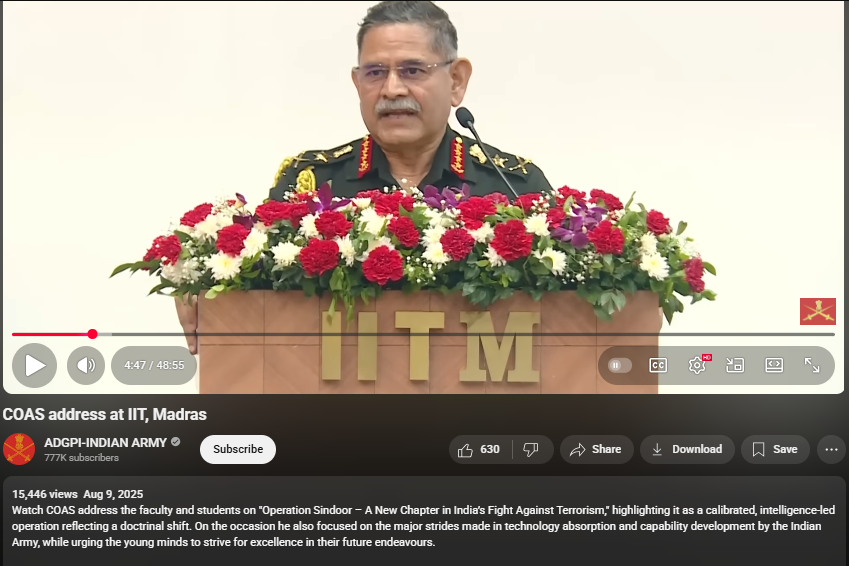

Upon conducting a reverse image search on key frames from the video, it was found that the original footage is from IIT Madras, where the Chief of Army Staff (COAS) was delivering a speech. The video is available on the official YouTube channel of ADGPI – Indian Army, published on 9 August 2025, with the description:

“Watch COAS address the faculty and students on ‘Operation Sindoor – A New Chapter in India’s Fight Against Terrorism,’ highlighting it as a calibrated, intelligence-led operation reflecting a doctrinal shift. On the occasion, he also focused on the major strides made in technology absorption and capability development by the Indian Army, while urging young minds to strive for excellence in their future endeavours.”

A review of the full speech revealed no reference to the destruction of six jets or the loss of 250 Army personnel. This indicates that the circulating claim is not supported by the original source and may contribute to the spread of misinformation.

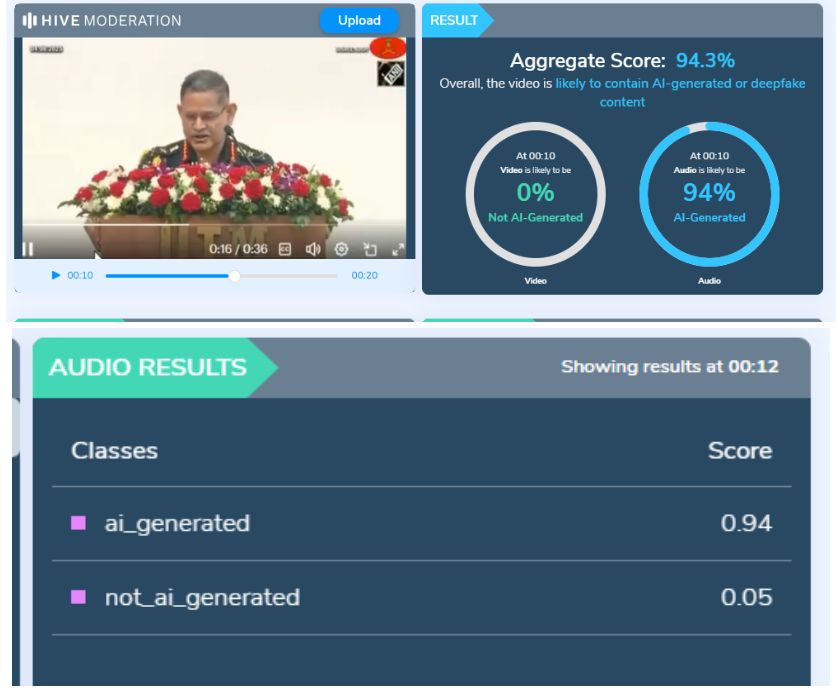

Further analysis with AI detection tools such as Hive Moderation indicated that portions of the audio in the clip are AI-generated.

Conclusion:

The claim is baseless. The video is a manipulated creation that combines genuine footage of General Dwivedi’s IIT Madras address with AI-generated audio to fabricate a false narrative. No credible source corroborates the alleged military losses.

- Claim: COAS General Upendra Dwivedi admitted to the loss of 6 jets and 250 soldiers

- Claimed On: Social Media

- Fact Check: False and Misleading

Related Blogs


Introduction

In India, children's rights over their personal data are protected under the Digital Personal Data Protection Act, 2023 (DPDP Act), the country's newly enacted data protection law. The DPDP Act requires verifiable consent from a parent or legal guardian before a child's personal data is processed; processing without such consent is a violation of the Act. Under section 2(f) of the DPDP Act, a “child” means an individual who has not completed the age of eighteen years.

Section 9 under the DPDP Act, 2023

Section 9 of the DPDP Act, 2023 governs the processing of children's data. It provides that a Data Fiduciary shall, before processing any personal data of a child (a person below 18 years of age) or of a person with a disability who has a lawful guardian, obtain verifiable consent from the parent or the lawful guardian. Section 9 aims to create a safer online environment for children by limiting the exploitation of their data for commercial purposes or otherwise. By virtue of this section, parents and guardians will have more control over their children's data and privacy, and are empowered to decide how they manage their children's online activities and the permissions they grant to various online services.

Section 9 sub-section (3) specifies that a Data Fiduciary shall not undertake tracking or behavioural monitoring of children or targeted advertising directed at children. However, section 9 sub-section (5) leaves room for exemption from this prohibition: it empowers the Central Government to notify exemptions for specific data fiduciaries or data processors from the ban on behavioural tracking and targeted advertising under the future DPDP Rules, which are yet to be released.
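The rules described above can be read as a small decision procedure. The following is a minimal illustrative sketch in Python, assuming a simplified model of section 9; the class and function names (`DataPrincipal`, `needs_guardian_consent`, `section9_consent_satisfied`, `tracking_ban_applies`) are hypothetical and do not come from the Act or any library, and the `exempted` flag merely stands in for an exemption that the Central Government may notify.

```python
from dataclasses import dataclass

# Section 2(f): a child is an individual who has not completed eighteen years.
CHILD_AGE_LIMIT = 18


@dataclass
class DataPrincipal:
    """Hypothetical, simplified record of the person whose data is processed."""
    age: int
    has_lawful_guardian: bool = False        # person with a disability who has a lawful guardian
    guardian_consent_verified: bool = False  # verifiable parent/guardian consent obtained


def is_child(person: DataPrincipal) -> bool:
    return person.age < CHILD_AGE_LIMIT


def needs_guardian_consent(person: DataPrincipal) -> bool:
    # Section 9 consent covers children and persons with a lawful guardian.
    return is_child(person) or person.has_lawful_guardian


def section9_consent_satisfied(person: DataPrincipal) -> bool:
    # Only models the section 9 condition; other consent rules are out of scope.
    return (not needs_guardian_consent(person)) or person.guardian_consent_verified


def tracking_ban_applies(person: DataPrincipal, exempted: bool = False) -> bool:
    # Section 9(3) bars tracking, behavioural monitoring and targeted ads
    # directed at children, unless an exemption has been notified (assumption:
    # modelled here as a simple boolean flag).
    return is_child(person) and not exempted
```

For example, a 15-year-old user without verified parental consent fails `section9_consent_satisfied`, and `tracking_ban_applies` returns `True` for that user unless the platform is treated as exempted.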

Impact on social media platforms

Social media companies are raising concerns about Section 9 of the DPDP Act and the upcoming DPDP Rules. Section 9 prohibits behavioural tracking and targeted advertising directed at children on digital platforms. By prohibiting intermediaries from tracking a child's internet activities and from targeting advertising at children, the law aims to preserve children's privacy. However, social media corporations contend that this limitation undermines the safety measures intended to protect young users, since those measures depend on monitoring specific user signals, including signals from minors.

Social media companies assert that tracking teenagers' behaviour is essential for safeguarding them from predators and harmful interactions. They believe that a complete ban on behavioural tracking is counterproductive to the government's objective of protecting children. The scope to grant exemptions leaves the door open for further advocacy on this issue. Finding a balanced approach that maintains both privacy and safety for young users will therefore require coordination with the concerned ministry and relevant stakeholders.

Furthermore, the impact on social media platforms extends to the user experience and to the operational costs of implementing the changes the regulations require. Compliance involves significant changes to platforms' algorithms and data-handling processes. Implementing robust age verification systems to identify young users and protect their data will be technically challenging for platforms of every scale. Ensuring that children’s data is not used for targeted advertising or behavioural monitoring also requires sophisticated data management systems. The blanket ban on targeted advertising and behavioural tracking may also affect the personalisation of content for young users, which may reduce their engagement with the platform.

For globally operating platforms, aligning their practices with the DPDP Act in India while also complying with data protection laws in other countries (such as GDPR in Europe or COPPA in the US) can be complex and resource-intensive. Platforms might choose to implement uniform global policies for simplicity, which could impact their operations in regions not governed by similar laws. Competitive dynamics may also shift: smaller or niche platforms that cater specifically to children and comply with these regulations may gain a competitive edge. There may also be a drive towards developing new, compliant ways of monetizing user interactions that do not rely on behavioural tracking.

CyberPeace Policy Recommendations

A balanced strategy is needed, one that gives weight both to the contentions of social media companies and to the protection of children's personal information. Instead of a blanket ban, platforms can be obliged to follow and encourage openness in advertising practices, ensuring that children are not exposed to misleading or manipulative marketing techniques. Self-regulation can be used to support ethical behaviour, responsibility, and the safety of young users’ personal information in the platform’s practices. Additionally, verifiable consent should be implemented in a practical manner, with platforms having a say in designing the verification process. Ultimately, the aim should be that behavioural tracking and targeted advertising do not affect children's well-being, safety and data protection in any way.

Final Words

Under section 9 of the DPDP Act, the prohibition on behavioural tracking and targeted advertising when processing children's personal data will compel social media platforms to overhaul their data collection and advertising practices to comply with stricter privacy regulations. The legislative intent behind this provision is to strengthen the security and privacy of children's digital personal data. As children are particularly vulnerable to digital threats due to their still-evolving maturity and cognitive capacities, protecting their privacy is a priority. Children's innocence is a major cause for concern when it comes to digital access, because they do not yet possess the discernment and caution required to navigate the Internet safely. A balanced approach is therefore needed, one that maintains both ‘privacy’ and ‘safety’ for young users.

References

- https://www.meity.gov.in/writereaddata/files/Digital%20Personal%20Data%20Protection%20Act%202023.pdf

- https://www.firstpost.com/tech/as-govt-of-india-starts-preparing-rules-for-dpdp-act-social-media-platforms-worried-13789134.html#google_vignette

- https://www.business-standard.com/industry/news/social-media-platforms-worry-new-data-law-could-affect-child-safety-ads-124070400673_1.html

A video clip of journalist Palki Sharma is being widely shared on social media. Along with the video, it is being claimed that during Prime Minister Narendra Modi’s recent Middle East visit, she questioned Jordan’s diplomatic protocol.

In the viral clip, Palki Sharma is allegedly seen asking why Jordan’s King Abdullah II did not come to the airport to receive Prime Minister Modi, and whether this indicated a downgrade in the level of welcome.

However, an investigation by the Cyber Peace Foundation found this claim to be misleading. The probe revealed that while the visuals in the viral video are genuine, the audio has been altered using Artificial Intelligence (AI).

On the social media platform ‘X’, a user named “Ammar Solangi” shared this video on 18 December. The post claimed that the video was related to questions raised about Jordan’s diplomatic protocol during Prime Minister Modi’s visit. According to the post, Palki Sharma questioned why King Abdullah II did not receive Prime Minister Modi at the airport. The archive link of the viral post can be seen here: https://ghostarchive.org/archive/26aK0

Verification

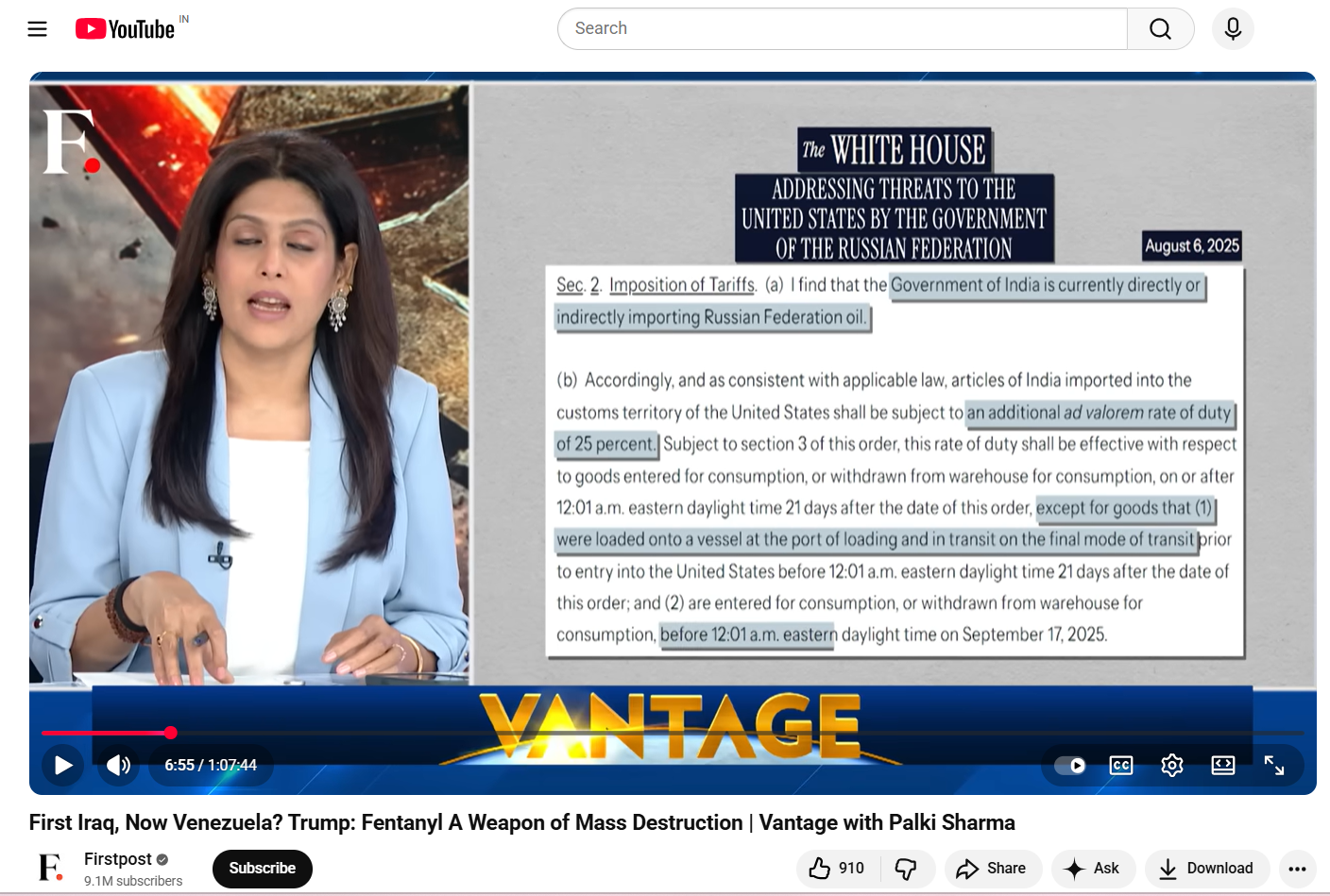

During the investigation, the fact-check desk noticed the ‘Firstpost’ logo in the top-left corner of the viral video. Based on this clue, a customized Google search was conducted, which led to the original news report.

The investigation revealed that the viral video was taken from an episode of journalist Palki Sharma’s show “Vantage with Palki Sharma”, which aired on 17 December.

Analysis of the video showed that the visuals appearing at the 33 minutes 30 seconds timestamp in the original report exactly match those used in the viral clip. However, in the original broadcast, Palki Sharma neither questioned Jordan’s protocol nor made any comment about King Abdullah II not being present at the airport.

In the original video, Palki Sharma says:

“Prime Minister Modi was on a diplomatic tour of Jordan, Ethiopia, and Oman, and in Jordan he was received at the airport by the country’s Prime Minister…” The link to the original report can be seen here: https://www.youtube.com/watch?v=-VYZYe9l6Bs

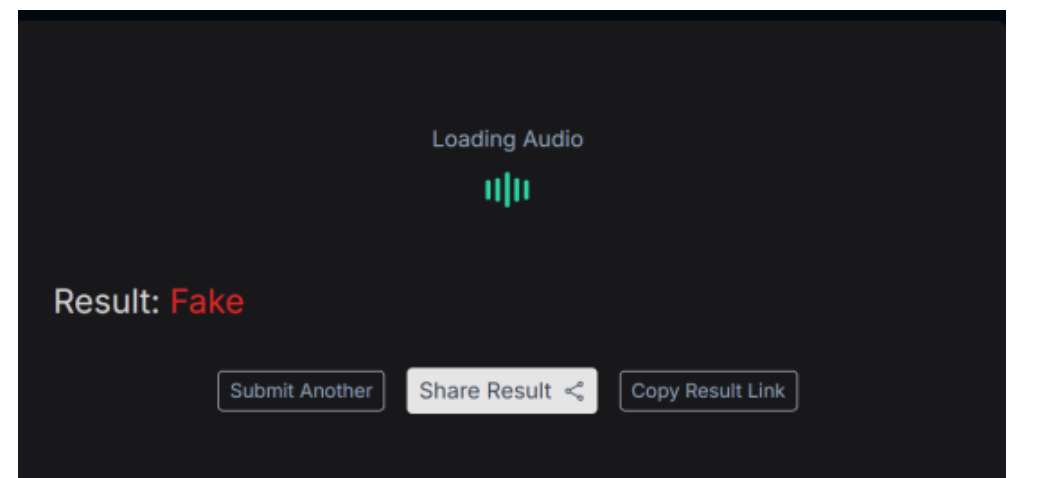

AI Audio Examination

Further investigation involved separating the audio from the viral video and analyzing it using the AI voice detection tool ‘Resemble AI’. The tool’s results confirmed that fake, AI-generated audio had been added over the real footage in the viral clip to spread a misleading claim. A screenshot of the results from this examination can be seen below.

Conclusion

The video being circulated in the name of journalist Palki Sharma has been tampered with. Her voice has been altered using AI technology, and the claim made regarding the Jordan visit is completely misleading.

Introduction

In recent years, the online gaming sector has seen tremendous growth and is one of the fastest-growing components of the creative economy, contributing significantly to innovation, employment generation and export earnings. India possesses a large pool of skilled young professionals, strong technological capabilities and a rapidly growing domestic market, which together provide an opportunity for the country to assume a leadership role in the global value chain of online gaming. At the same time, the online gaming industry has faced exploitation and abuse, with notable cases of fraud, money laundering, and other emerging cybercrimes. To protect the interests of players and to ensure fair play, competition, and a safe and secure online gaming environment, dedicated gaming regulation had become the need of the hour.

On 20 August 2025, the Union government introduced the ‘Promotion and Regulation of Online Gaming Bill, 2025’ in the Lok Sabha. The bill seeks to prohibit online money gaming, including advertisements and financial transactions related to such platforms. The Lok Sabha passed the bill at 5 PM on the day it was introduced, and the upper house of Parliament (Rajya Sabha) passed it on 21 August 2025. The bill can be seen as a progressive step towards building safer online gaming spaces for everyone, especially for our youth, and towards combating the emerging cybercrime threats present in the online gaming landscape.

Key Highlights of the Bill

The Bill extends to the whole of India. It also applies to any online money gaming service offered within India or operated from outside the country but accessible in India.

- Definition of E-sports:

Section 2(1)(c) of the Bill defines an e-sport as an online game which:

(i) is played as part of multi-sports events;

(ii) involves organised competitive events between individuals or teams, conducted in multiplayer formats governed by predefined rules;

(iii) is duly recognised under the National Sports Governance Act, 2025, and registered with the Authority or agency under section 3;

(iv) has outcome determined solely by factors such as physical dexterity, mental agility, strategic thinking or other similar skills of users as players;

(v) may include payment of registration or participation fees solely for the purpose of entering the competition or covering administrative costs and may include performance-based prize money by the player; and

(vi) shall not involve the placing of bets, wagers or any other stakes by any person, whether or not such person is a participant, including any winning out of such bets, wagers or any other stakes;

- Prohibition of Online Money Gaming and Advertisement thereof

The Bill prohibits the offering of online money games and online money gaming services. It also bans all forms of advertisements or promotions connected to online money games. This includes endorsements by individuals or entities.

- Financial Transactions

Banks, financial institutions, and other intermediaries are barred from facilitating transactions related to online money gaming services.

- Criminal Liability

Violation of the provisions on online money gaming can result in imprisonment for up to three years, or a fine of up to ₹1 crore, or both. Repeat offenders face stricter punishment with higher fines and longer jail terms.

- Cognizable and Non-Bailable Offences

Offences relating to offering online money gaming services and facilitating financial transactions for such games are categorised as cognizable and non-bailable. This gives law enforcement agencies greater power to act without requiring prior approval.

In conversation with CyberPeace ~

Shailendra Vikram Singh, Former Deputy Secretary (Cyber & Information Security), Ministry of Home Affairs, GOI, highlighted that:

“The passage of the Promotion and Regulation of Online Gaming Bill, 2025 in the Lok Sabha highlights the government’s growing priority on national security, public safety, and health in digital regulation. Unfortunately, the real money gaming industry, despite its growth and promise, did not take proactive steps to address these concerns. The absence of safeguards and engagement left the government with no choice but to adopt a blanket ban. Having worked on this issue from both the government and industry side, the clear lesson is that in sensitive digital sectors, early regulatory alignment and constructive dialogue are not optional but essential. Going forward, collaboration is the only way to achieve a balance between innovation and responsibility.”

CyberPeace Outlook

The Promotion and Regulation of Online Gaming Bill, 2025, marks a decisive policy shift by simultaneously fostering the growth of e-sports, educational and social gaming, and imposing an absolute prohibition on online money games. By recognising e-sports as legitimate, skill-based competitive sports under the National Sports Governance Act, 2025, and establishing a central Authority for oversight, registration, and regulation, the Bill creates an institutional framework for safe and responsible development of the sector. The Bill completely bans real money games (RMGs), regardless of whether they are skill-based, chance-based or both. It therefore raises significant questions about the legal standing of RMG companies, a concern the gaming industry has voiced. Further, it addresses urgent threats such as cybercrime, gaming addiction, online betting, money laundering, and the misuse of gaming platforms for illicit activities. The move reflects a balanced approach, encouraging innovation and digital skill-building, while safeguarding public order, consumer interests, and financial integrity.

References

- https://prsindia.org/files/bills_acts/bills_parliament/2025/Bill_Text-Online_Gaming_Bill_2025.pdf

- https://prsindia.org/billtrack/the-promotion-and-regulation-of-online-gaming-bill-2025

- https://www.hindustantimes.com/india-news/rajya-sabha-clears-online-gaming-bill-a-day-after-lok-sabha-approval-101755766847840.html