#FactCheck - Viral video of Yogi Adityanath and Ravi Kishan’s march is not a UGC protest

Executive Summary

A video circulating on social media shows Uttar Pradesh Chief Minister Yogi Adityanath and Gorakhpur MP Ravi Kishan walking with a group of people. Users are claiming that the two leaders were participating in a protest against the University Grants Commission (UGC). Research by CyberPeace has found the viral claim to be misleading. Our research revealed that the video is from September 2025 and is being shared out of context with recent events. The video was recorded when Chief Minister Yogi Adityanath undertook a foot march in Gorakhpur on a Monday. Ravi Kishan, MP from Gorakhpur, was also present. During the march, the Chief Minister visited local markets, malls, and shops, interacting with traders and gathering information on the implementation of GST rate cuts.

Claim Details:

On Instagram, a user shared the viral video on 27 January 2026. The video shows the Chief Minister and the MP walking with a group of people. The text “UGC protest” appears on the video, suggesting that it is connected to a protest against the University Grants Commission.

Fact Check:

To verify the claim, we searched Google using relevant keywords, but found no credible media reports confirming it. Next, we extracted key frames from the video and searched them using Google Lens. The video was traced to NBT Uttar Pradesh’s X (formerly Twitter) account, where it was posted on 22 September 2025.

According to NBT Uttar Pradesh, CM Yogi Adityanath undertook a foot march in Gorakhpur, visiting malls and shops to interact with traders and check the implementation of GST rate cuts.

Conclusion:

The viral video is not related to any recent UGC guidelines. It dates back to September 2025, showing CM Yogi Adityanath and MP Ravi Kishan on a foot march in Gorakhpur, interacting with traders about GST rate cuts. The claim that the video depicts a protest against the University Grants Commission is therefore false and misleading.

Related Blogs

Introduction

In 2025, the internet is entering a new paradigm, and it is hard not to notice. Thanks to rapid advances in artificial intelligence, the internet as we know it is quickly turning into a treasure trove of hyper-optimised material over which vast bot armies battle to the death. All of that advancement, however, has a price, primarily in human lives. It turns out that releasing highly personalised chatbots on a populace that is already struggling with economic stagnation, terminal loneliness, and the ongoing destruction of our planet isn’t exactly a formula for improved mental health. This is the reality for the 75% of kids and teens who have had chats with chatbot-generated fictitious characters. Artificial intelligence (AI) chatbots are becoming more and more integrated into our daily lives, assisting us with customer service, entertainment, healthcare, and education. But as the impact of these tools grows, accountability and ethical behaviour become more important. An investigation of the internal policies of a major international tech firm last year exposed alarming gaps: AI chatbots were allowed to create content involving romantic roleplay with children, racially discriminatory reasoning, and spurious medical claims. Although the firm has since amended aspects of these rules, the exposé underscores an underlying global dilemma: how can we regulate AI to maintain child safety, guard against misinformation, and adhere to ethical considerations without suppressing innovation?

The Guidelines and Their Gaps

Tech giants like Meta and Google are often criticised for overlooking child safety and the overall increase in mental health issues among children and adolescents. According to reports, Google introduced Gemini AI Kids, a kid-friendly version of its Gemini AI chatbot, which represents a major advancement in the incorporation of generative artificial intelligence (Gen-AI) into early schooling. Users under the age of thirteen can access this version through supervised accounts on the Family Link app.

AI operates on the premise of data collection and analysis. To safeguard children’s personal information in the digital world, the Digital Personal Data Protection Act, 2023 (DPDP Act) introduces particular safeguards. Under Section 9, Data Fiduciaries (the entities that decide the purposes and means of processing personal data) must obtain verifiable consent from a parent or legal guardian before processing the data of children, who are defined as people under the age of 18. Furthermore, the Act expressly forbids processing activities that could endanger a child’s welfare, such as behavioural surveillance and child-targeted advertising. According to court interpretations, a child's well-being includes not just medical care but also their moral, ethical, and emotional growth.

While the DPDP Act is a big step in the right direction, there are still important lacunae in how it addresses AI and child safety. Age-gating systems, thorough risk rating, and limitations specific to AI-driven platforms are absent from the Act, which largely concentrates on consent and harm prevention in data protection. Furthermore, it does not address threats to children’s emotional safety or the long-term psychological effects of interacting with generative AI models. Current safeguards are self-regulatory in nature and dispersed across several laws, such as the Bharatiya Nyaya Sanhita, 2023. These include platform disclaimers, technology-based detection of child sexual abuse content, and measures under the IT (Intermediary Guidelines and Digital Media Ethics Code) Rules, 2021.

Child Safety and AI

- The Risks of Romantic Roleplay - Enabling chatbots to engage in romantic roleplay with youngsters is among the most concerning discoveries. These interactions can result in grooming, psychological trauma, and desensitisation to inappropriate behaviour, even if they are not explicitly sexual. Having illicit or sexual conversations with kids in cyberspace is unacceptable, according to child protection experts. Permitting even "flirtatious" conversation, however, could normalise risky boundaries.

- International Standards and Best Practices - The concept of "safety by design" is highly valued in child online safety guidelines from around the world, including UNICEF's Child Online Protection Guidelines and the UK's Online Safety Act. Requiring platforms and developers to remove risks proactively, rather than respond to harms reactively, is the bare minimum standard that any AI guidelines must meet, and guidelines that leave loopholes for child-directed roleplay fall short of it.

Misinformation and Racism in AI Outputs

- The Disinformation Dilemma - The regulations also allowed AI to create fictional narratives with disclaimers. For example, chatbots were able to write articles promulgating false health claims or smears against public officials, as long as they were labelled as "untrue." While disclaimers might give thin legal cover, they add to the proliferation of misleading information. Indeed, misinformation tends to spread extensively because users disregard caveat labels in favour of provocative assertions.

- Ethical Lines and Discriminatory Content - It is ethically questionable to allow AI systems to generate racist arguments, even when requested. Though scholarly research into prejudice and bias may necessitate such examples, unregulated generation has the potential to normalise damaging stereotypes. Researchers warn that such practice turns platforms from passive hosts of offensive speech into active generators of discriminatory content. That distinction matters, as it places responsibility squarely on developers and corporations.

The Broader Governance Challenge

- Corporate Responsibility and AI - Material generated by AI is not equivalent to user speech; it is a direct reflection of corporate training, policy decisions, and system engineering. This fact requires a greater level of accountability. Although companies can update guidelines following public criticism, the fact that such allowances existed in the first place indicates a lack of strong ethical regulation.

- Regulatory Gaps - Regulatory regimes for AI are currently in disarray. The EU AI Act, the OECD AI Principles, and national policies all emphasise human rights, transparency, and accountability. Few, though, specify clear guidelines for content risks such as child roleplay or hate narratives. This absence of harmonised international rules leaves companies acting in the shadows, establishing their own limits until they are challenged.

An active way forward would include:

- Express Child Protection Requirements: AI systems must categorically prohibit interactions with children involving flirting or romance.

- Misinformation Protections: Generative AI must not be allowed to generate knowingly false material, regardless of any disclaimers attached.

- Bias Reduction: Developers need to proactively train systems against generating discriminatory accounts, not merely tag them as optional outputs.

- Independent Regulation: External audit and ethics review boards can supply transparency and accountability independent of internal company regulations.

Conclusion

These contentious guidelines are more than the internal folly of just one firm; they point to a deeper systemic issue in AI regulation. The stakes rise as generative AI becomes more and more integrated into politics, healthcare, education, and social interaction. Racism, false information, and inadequate child safety measures are severe issues that require quick resolution. Corporate self-regulation is only one part of the way forward; other elements include multi-stakeholder participation, stronger global systems, and ethical standards. In the end, trust in AI systems will rest not on corporate interests but on their ability to preserve the truth, protect the vulnerable, and represent universal human values.

References

- https://www.esafety.gov.au/newsroom/blogs/ai-chatbots-and-companions-risks-to-children-and-young-people

- https://www.lakshmisri.com/insights/articles/ai-for-children/#

- https://the420.in/meta-ai-chatbot-guidelines-child-safety-racism-misinformation/

- https://www.unicef.org/documents/guidelines-industry-online-child-protection

- https://www.oecd.org/en/topics/sub-issues/ai-principles.html

- https://artificialintelligenceact.eu/

Introduction

In a hyperconnected world, cyber incidents can no longer be treated as sporadic disruptions; they have become an everyday occurrence. Today's attacks are highly consequential and are multiplying in frequency, whether ransomware incapacitating a health system, phishing hitting a financial institution, or state-sponsored attacks on critical infrastructure. Traditional methods alone are not enough to counteract such threats, because they rely heavily on manual research and human intellect. Attackers exercise speed, scale, and stealth, and defenders are always several steps behind. With such a widening gap, incident response and crisis management need the support of automation and artificial intelligence (AI) for faster detection, context-driven decision-making, and collaborative response beyond human capabilities.

Incident Response and Crisis Management

Incident response is the structured way in which organisations detect, contain, and recover from security incidents. Crisis management takes this even further, dealing not only with the technical fallout of a breach but also its business, reputational, and regulatory implications. Organisations used to depend on manual teams of people sorting through logs, cross-correlating alarms, and generating responses, a paradigm effective for small volumes but quickly inadequate in today's threat climate. Today's adversaries operate at machine speed, employing automation to launch attacks. Under such circumstances, responding with slow, manual methods means delay and severe consequences. The introduction of AI and automation is a paradigm change that allows organisations to match the pace and precision of attackers when responding to incidents.

How Automation Reinvents Response

Security automation frees analysts from boring, repetitive tasks that consume time. Where an analyst would have to pick out genuine threats manually from a list of hundreds of alerts each day, automated systems sift through the noise and focus only on genuine threats. Automated systems can disconnect malware-infected computers from the network to stop the infection from spreading, or remove the permissions of a suspicious account, without human intervention. Security orchestration systems go further by introducing playbooks, predefined steps describing how incidents of a certain type (e.g., phishing attempts or malware infections) should be handled. This ensures fast containment while maintaining consistency and minimising human error amid the urgency of dealing with thousands of alerts.
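The playbook idea described above can be illustrated with a minimal Python sketch. It is not taken from any particular orchestration product: the `Incident` fields, the step functions, and the `PLAYBOOKS` mapping are all hypothetical names, and the steps only record what a real system would do rather than calling any network or identity API.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class Incident:
    kind: str      # incident type, e.g. "phishing" or "malware"
    host: str      # affected machine (hypothetical field)
    account: str   # affected user account (hypothetical field)
    actions_taken: List[str] = field(default_factory=list)


def isolate_host(incident: Incident) -> None:
    # Placeholder: a real system would call a network or endpoint API here.
    incident.actions_taken.append(f"isolated host {incident.host}")


def revoke_permissions(incident: Incident) -> None:
    # Placeholder: a real system would call an identity provider here.
    incident.actions_taken.append(f"revoked permissions for {incident.account}")


def notify_analyst(incident: Incident) -> None:
    incident.actions_taken.append("notified on-call analyst")


# Each playbook is an ordered list of predefined steps for one incident type.
PLAYBOOKS: Dict[str, List[Callable[[Incident], None]]] = {
    "malware": [isolate_host, notify_analyst],
    "phishing": [revoke_permissions, notify_analyst],
}


def run_playbook(incident: Incident) -> List[str]:
    """Run the steps for the incident type; unknown types go to a human."""
    for step in PLAYBOOKS.get(incident.kind, [notify_analyst]):
        step(incident)
    return incident.actions_taken
```

Keeping the steps in a plain mapping means the same incident type is always handled in the same order, which is the consistency benefit playbooks are meant to provide. Anything without a matching playbook falls back to a human analyst.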

Automation takes care of threat detection, prioritisation, and containment, allowing human analysts to refocus on more complex decision-making. Instead of drowning in the sea of trivial alerts, security teams can now devote their efforts to more strategic areas: threat hunting and longer-term resilience. Automation is a strong tool of defence, cutting response times down from hours to minutes.

The Intelligence Layer: AI in Action

If automation provides speed, then AI provides the brain: intelligence and flexibility. Unlike old, fixed-rule systems, AI-enabled solutions learn from experience, adapt to changes in threats, and discover hidden patterns that human analysts themselves might miss. For instance, machine learning algorithms learn what normal behaviour looks like on a corporate network and raise alerts on any anomalies that could indicate an insider attack or an advanced persistent threat. Similarly, AI systems sift through global threat intelligence to predict likely attack vectors so organisations can fix their vulnerabilities before they are exploited.
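As a rough illustration of the "learn normal behaviour, then flag anomalies" idea, the sketch below uses a simple z-score over a baseline of past measurements. This is a deliberately basic statistical stand-in, not the machine learning method any specific product uses; the function name, the threshold of 3 standard deviations, and the sample numbers are assumptions for illustration.

```python
from statistics import mean, stdev
from typing import List


def find_anomalies(baseline: List[float], observed: List[float],
                   threshold: float = 3.0) -> List[int]:
    """Return indices of observed values far from the baseline behaviour.

    baseline: past measurements taken to represent normal activity
              (e.g. logins per hour); needs at least two values.
    observed: new measurements to check against that baseline.
    threshold: how many standard deviations count as anomalous (assumed).
    """
    mu = mean(baseline)
    sigma = stdev(baseline)
    if sigma == 0:
        # A perfectly flat baseline: any deviation at all is unusual.
        return [i for i, value in enumerate(observed) if value != mu]
    return [i for i, value in enumerate(observed)
            if abs(value - mu) / sigma > threshold]


# Hypothetical hourly login counts for one account.
normal_hours = [100, 102, 98, 101, 99, 100, 103, 97]
new_hours = [101, 99, 140, 104]
suspicious = find_anomalies(normal_hours, new_hours)  # indices worth a look
```

With these sample numbers the baseline has a mean of 100 and a standard deviation of 2, so only the spike to 140 crosses the threshold. Real deployments would typically model many signals at once and update the baseline over time.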

AI also boosts forensic analysis. Instead of searching endlessly for clues, analysts let AI-driven systems trace back to the origin of an event, identify vulnerabilities exploited by attackers, and flag systems that are still under attack. During a crisis, AI acts as decision support, predicting the outcomes of different scenarios and recommending the best response. In response to a ransomware attack, for example, AI might, depending on context, advise isolating a single network segment, restoring from backup, or alerting law enforcement.

Real-World Applications and Case Studies

Automation and AI are already delivering these benefits in real-world applications. Consider, for example, IBM Watson for Cybersecurity, which has been applied to analysing unstructured threat intelligence and providing analysts with actionable results in minutes rather than days. Similarly, AI-driven systems in DARPA's Cyber Grand Challenge demonstrated the ability to identify and patch vulnerabilities automatically, revealing the potential of self-healing systems. AI-powered fraud detection systems stop suspicious transactions mid-execution and work around the clock to prevent losses. What all these examples have in common is that automation and AI lessen human effort, increase accuracy, and, in the event of a cyberattack, buy precious time.

Challenges and Limitations

While promising, the technology is still not fully mature. The quality of an AI system is highly dependent on its training data; poor training can generate false positives that overwhelm teams or, worse, false negatives that allow attackers to proceed unnoticed. Attackers have also started targeting AI itself by poisoning datasets or designing malware that evades detection. Beyond these technical risks, the operational and financial costs of implementing advanced AI-based systems are a significant burden for any company. Organisations will have to spend not only on technology but also on training staff to make the best use of these tools. There are ethical and privacy issues to consider as well: because these systems may process sensitive personal data, they could come into conflict with data protection laws such as the GDPR or India's DPDP Act.
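To make the false positive and false negative trade-off concrete, here is a small worked example in Python. The alert counts are invented purely for illustration and do not come from any real deployment; the function name is likewise hypothetical.

```python
from typing import Dict


def alert_error_rates(true_pos: int, false_pos: int,
                      true_neg: int, false_neg: int) -> Dict[str, float]:
    """Summarise how often a detector is wrong, from confusion-matrix counts."""

    def ratio(numerator: int, denominator: int) -> float:
        # Avoid division by zero when a category is empty.
        return numerator / denominator if denominator else 0.0

    return {
        # Share of benign events that wrongly raised an alert.
        "false_positive_rate": ratio(false_pos, false_pos + true_neg),
        # Share of real attacks that raised no alert at all.
        "false_negative_rate": ratio(false_neg, false_neg + true_pos),
        # Share of raised alerts that were real attacks.
        "precision": ratio(true_pos, true_pos + false_pos),
    }


# Invented daily figures: 10,000 events, of which 50 are real attacks.
rates = alert_error_rates(true_pos=40, false_pos=160, true_neg=9790, false_neg=10)
```

With these invented figures, fewer than 2% of benign events trigger an alert, yet only one alert in five is a real attack and one attack in five is missed. This shows how a detector that looks accurate overall can still flood analysts with noise while letting attackers through.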

Creating a Human-AI Collaboration

The future is not going to be one of substitution by machines but of human-AI synergy. Automation can do the drudgery, AI can provide the intelligence, and human professionals can contribute judgment, imagination, and ethical decision-making. The goal should be AI-powered Security Operations Centres where technology and human experts work in tandem. AI models must be continuously retrained to reduce false alarms and make them more resistant to adversarial attacks. Regular crisis drills that combine AI tools and human teams can ensure preparedness for real-time events. Likewise, it is worth integrating ethical AI guidelines into security frameworks to ensure a stronger defence while respecting privacy and regulatory compliance.

Conclusion

Cyber-attacks are an inevitability in modern times, but their impact need not be so severe. By systematically integrating automation and AI into incident response and crisis management, organisations can shift from reactive firefighting to proactive resilience. Automation gives speed and efficiency while AI gives intelligence and foresight, putting defenders on par with, and possibly ahead of, the speed and sophistication of attackers. But even the most advanced system would remain imperfect without human inquisitiveness, ethical reasoning, and strategic foresight. The best defence lies in a symbiotic human-machine relationship in which automation and AI handle the speed and volume of cyber threats, while human intellect ensures that every response is aligned with larger organisational goals. This synergy is where cybersecurity resilience will reside in the future: defenders won't just be reacting to emergencies but will be leading the way.

References

- https://www.sisainfosec.com/blogs/incident-response-automation/

- https://stratpilot.ai/role-of-ai-in-crisis-management-and-its-critical-importance/

- https://www.juvare.com/integrating-artificial-intelligence-into-crisis-management/

- https://www.motadata.com/blog/role-of-automation-in-incident-management/

Executive Summary

A video circulating on social media is being linked to the ongoing tensions in West Asia involving the United States, Israel, and Iran. The clip shows an aircraft crashing into a residential area, with users claiming that a Dubai-bound plane carrying Israeli soldiers crashed near Tel Aviv airport, killing everyone on board. However, research by CyberPeace has found the claim to be false. The viral video is AI-generated, and no such incident has taken place in Israel.

Claim

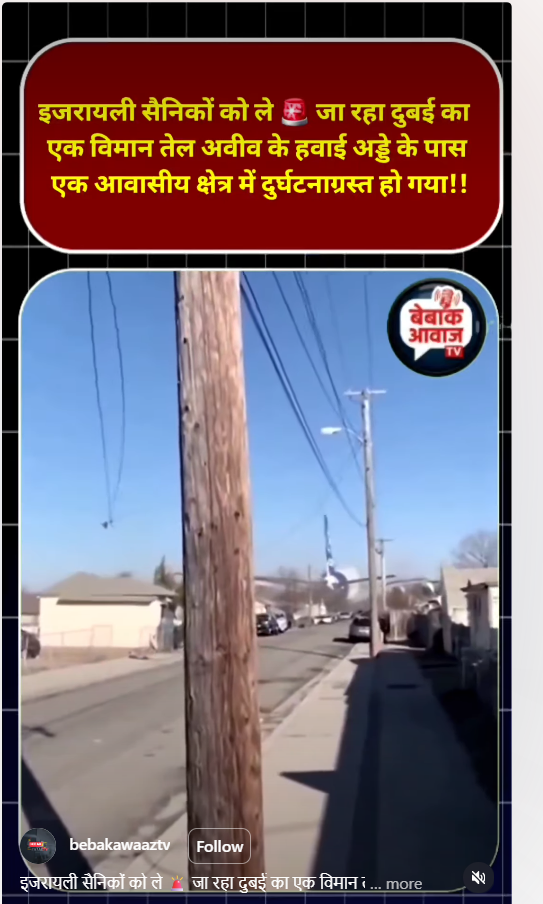

An Instagram user “bebakawaaztv” shared the video on April 7, 2026, claiming that a Dubai aircraft carrying Israeli soldiers crashed near Tel Aviv airport in a residential area, allegedly after being hit by debris from an Iranian hypersonic missile.

Fact Check

To verify the claim, we closely examined the viral video. Several visual inconsistencies indicated that it was not real. The aircraft appears to be flying unusually low over a residential area—something that is highly improbable under normal aviation conditions. Its landing gear seems to touch rooftops without causing any visible damage. Additionally, the wings of the aircraft pass through structures like poles without any collision impact, which is physically impossible. These anomalies strongly suggested that the video was artificially created.

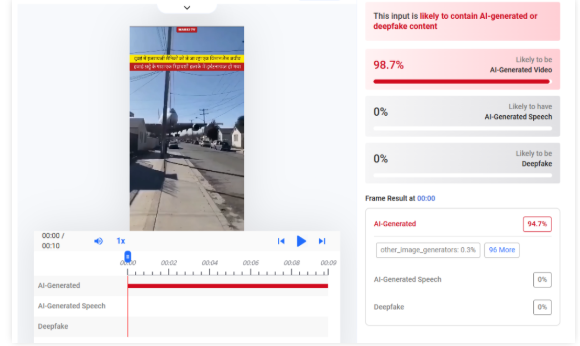

We further analyzed the video using the AI detection tool HIVE Moderation, which indicated a 99% probability that the content is AI-generated.

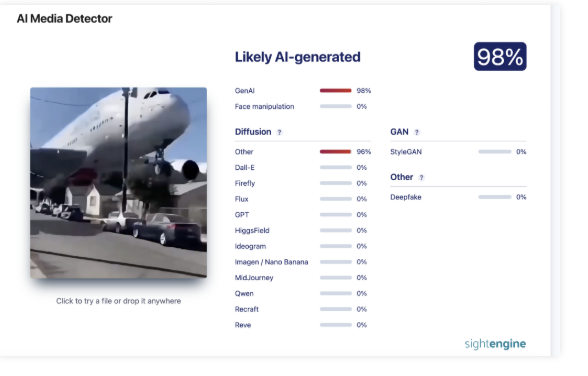

Another analysis using Sightengine also flagged the video as likely AI-generated.

Conclusion

The viral claim is false and misleading. There is no credible evidence or verified report confirming that any Dubai aircraft carrying Israeli soldiers crashed near Tel Aviv airport. No such incident has been reported by any reliable international or local media outlets. The video in question is digitally fabricated using AI technology, and the visual inconsistencies within the clip clearly indicate manipulation. Such content is often designed to exploit ongoing geopolitical tensions and spread misinformation at scale.