#FactCheck- Viral ‘Army Jump Accident’ Video Is AI-Generated

Executive Summary

A video is being widely shared on social media showing a man in an army uniform jumping from a height, losing balance mid-air, and appearing to meet with an accident. The clip is being circulated as a real-life incident. However, research by CyberPeace found the claim to be false. The viral video is not real but AI-generated.

Claim

On social media platform Facebook, a user shared the video with a caption suggesting it shows a real accident, warning against risky stunts.

- https://archive.ph/BH6dl#selection-347.0-347.122

- https://www.facebook.com/ashok.yadav.9041083/posts/1593460528549619/

Fact Check

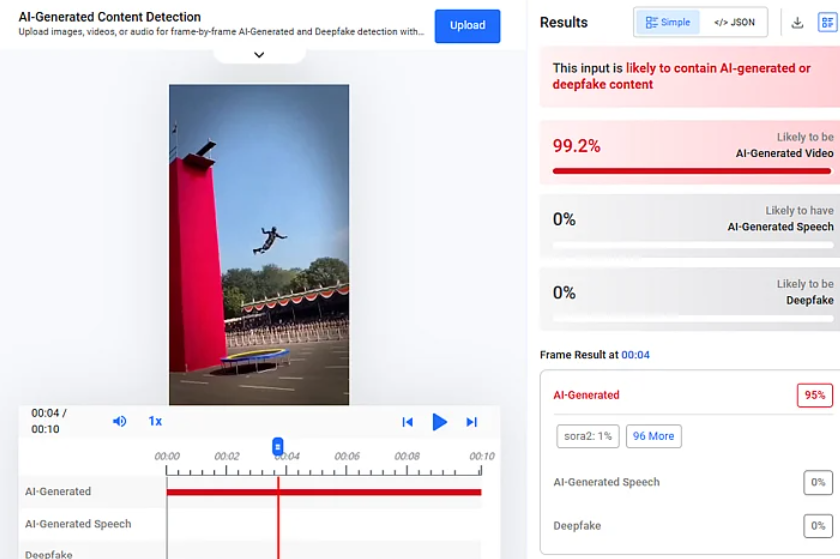

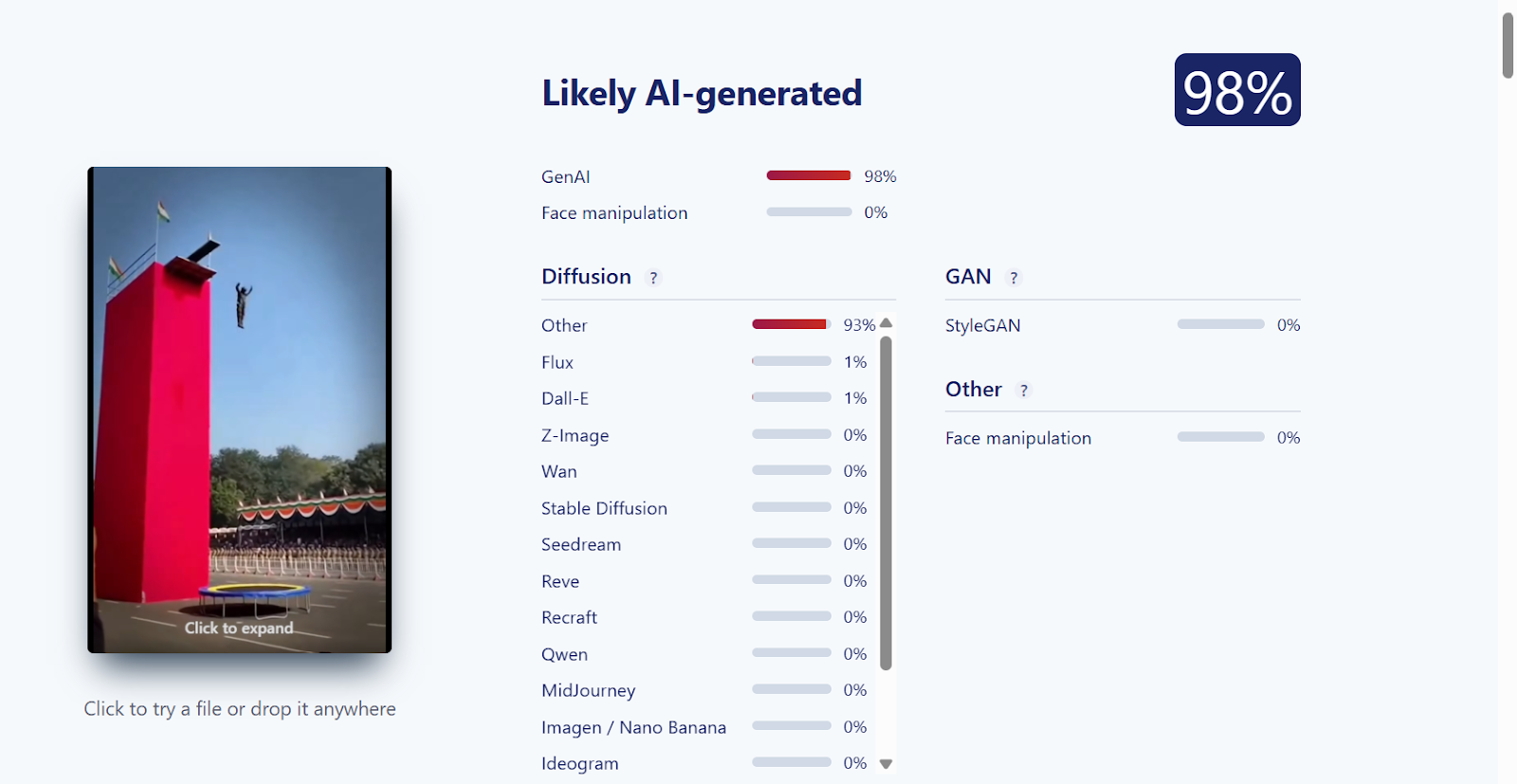

To verify the claim, we conducted a reverse image search using Google Lens but found no credible news reports or official sources mentioning such an incident. A closer look at the video revealed several inconsistencies commonly associated with AI-generated content. For instance, the person appears to disappear momentarily while falling, the head is not clearly visible after impact, and the background audio seems unnatural. We further analyzed the video using AI detection tools. On Hive Moderation, the video showed a 99.2% probability of being AI-generated.

Additionally, analysis using Sightengine indicated a 98% likelihood that the video was synthetically created.

Conclusion

The viral claim is false. The video does not depict a real incident but is an AI-generated clip. It has been shared with a misleading narrative, and there is no evidence to support the claim that it shows an actual accident.

Related Blogs

Executive Summary:

A photo that allegedly shows an Israeli Army dog attacking an elderly Palestinian woman has been circulating on social media. However, the image is misleading: it was created using Artificial Intelligence (AI), as indicated by its graphical elements, its watermark ("IN.VISUALART"), and basic anomalies. Although several news channels have reported on the real incident, the viral image was not taken during the actual event. This emphasizes the need to carefully verify photos and information shared on social media.

Claims:

A photo circulating in the media depicts an Israeli Army dog attacking an elderly Palestinian woman.

Fact Check:

Upon receiving the posts, we closely analyzed the image and found certain discrepancies that are commonly seen in AI-generated images. The watermark “IN.VISUALART” is clearly visible, and the elderly woman’s hand looks unnatural.

We then checked the image with two AI-image detection tools, True Media and the contentatscale AI detector. Both found potential AI manipulation in the image.

We then ran a keyword search for news related to the viral photo. Although we found relevant news reports, we could not find any credible source for the image.

The photograph that was shared around the internet has no credible source. Hence the viral image is AI-generated and fake.

Conclusion:

The circulating photo of an Israeli Army dog attacking an elderly Palestinian woman is misleading. The incident did occur, according to several news channels, but the photo depicting it is AI-generated and not real.

- Claim: A photo being shared online shows an elderly Palestinian woman being attacked by an Israeli Army dog.

- Claimed on: X, Facebook, LinkedIn

- Fact Check: Fake & Misleading


Overview:

WazirX, a cryptocurrency platform based in India, was hacked and lost more than $230 million in cryptocurrency. The incident involved an unauthorized transaction from a multisignature (multisig) wallet controlled through Liminal’s digital asset management platform. Such attacks have since raised further questions about the security of cryptocurrency exchanges and the effectiveness of existing policies and laws.

Wallet Configuration and Security Measures

The breached wallet had a multisig configuration, meaning that more than one signature was needed to authorize a transaction. Specifically, it had six signatories: five belonging to WazirX and one to Liminal. Every transaction needed the approval of at least three WazirX signatories, all of whom used Ledger hardware wallets for added security, as well as the approval of Liminal’s signatory.

To further secure transactions, a whitelisting policy was in place, so that only a limited set of addresses was authorized to receive funds. The system nevertheless proved vulnerable: the attackers exploited a discrepancy between the information shown in Liminal’s interface and the actual content of the transaction to seize unauthorized control over the wallet and carry out the theft.
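The approval rule described above can be sketched in a few lines of Python. This is only an illustration of the stated policy (at least three WazirX approvals, Liminal's approval, and a whitelisted destination); the signer names, the `Transaction` type, and the `is_authorized` function are hypothetical and do not reflect how WazirX or Liminal actually implemented it.

```python
from dataclasses import dataclass

# Hypothetical identifiers: the source names no actual signers or addresses.
WAZIRX_SIGNERS = frozenset(
    {"wazirx-1", "wazirx-2", "wazirx-3", "wazirx-4", "wazirx-5"}
)
LIMINAL_SIGNER = "liminal-1"
REQUIRED_WAZIRX_APPROVALS = 3


@dataclass(frozen=True)
class Transaction:
    destination: str
    amount: float


def is_authorized(tx, approvals, whitelist):
    """Return True only if the destination is whitelisted and the quorum is met."""
    if tx.destination not in whitelist:
        return False
    # Count only approvals that come from recognised WazirX signatories.
    wazirx_count = len(WAZIRX_SIGNERS & set(approvals))
    return wazirx_count >= REQUIRED_WAZIRX_APPROVALS and LIMINAL_SIGNER in approvals


whitelist = {"whitelisted-address"}
tx = Transaction(destination="whitelisted-address", amount=10.0)
approvals = {"wazirx-1", "wazirx-2", "wazirx-3", "liminal-1"}
print(is_authorized(tx, approvals, whitelist))
```

A check like this only protects funds if the transaction being approved is the one the signatories believe they are approving, which is the assumption the attack appears to have broken.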

Modus Operandi: Attack Mechanics

The cyber attack appears to have been carefully carried out, with preliminary investigations suggesting the following tactics:

- Payload Manipulation: The attackers apparently substituted the transaction’s payload during signing, which allowed them to reroute the funds to an unrelated wallet.

- Chain Hopping: To make their movements much harder to track, the attackers split large amounts of money across multiple blockchains and broke tens of thousands of dollars into thousands of transactions involving different cryptocurrencies. This technique makes it difficult to trace both the funds and the people behind them.

- Zero Balance Transactions: In some instances, wallets with no Ethereum (ETH) balance were also used to further anonymize the transactions.

- Analysis of the blockchain data suggested that the attackers may have been preparing for several days before the attack, which points to a high degree of planning.
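To illustrate why payload manipulation is dangerous, the sketch below compares a digest of the details shown to a signatory with a digest of the payload actually submitted for signing. It is a generic illustration, not the mechanism used by Liminal or Ledger; the field names and the `digest` and `safe_to_sign` helpers are assumptions made for this example.

```python
import hashlib
import json


def digest(fields: dict) -> str:
    """Hash a transaction's fields using a canonical (sorted-key) JSON encoding."""
    canonical = json.dumps(fields, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def safe_to_sign(displayed: dict, payload: dict) -> bool:
    """Refuse to sign when the payload differs from what the interface displayed."""
    return digest(displayed) == digest(payload)


# Hypothetical transaction details, for illustration only.
shown = {"to": "whitelisted-address", "amount": "10"}
swapped = {"to": "attacker-address", "amount": "10"}

print(safe_to_sign(shown, dict(shown)))  # identical content
print(safe_to_sign(shown, swapped))      # destination was substituted
```

If the signing device checks only the payload it receives, and the signatory sees only the interface, a substituted payload can gather valid signatures without anyone noticing the mismatch.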

Actions taken by WazirX:

Following the attack, WazirX implemented a series of immediate actions:

- User Notifications: The users were immediately notified of the occurrence of the breach and the possible risk it posed to them.

- Law Enforcement Engagement: The matter was reported to the National Cyber Crime Reporting Portal and to specific authorities, including the Financial Intelligence Unit (FIU) and the Computer Emergency Response Team (CERT-In).

- Service Suspension: WazirX suspended all trading operations, as well as user deposits and withdrawals, to limit further losses and allow an investigation.

- Global Outreach: The exchange contacted more than 500 cryptocurrency exchanges and asked them to blacklist the wallet addresses linked to the theft.

- Bounty Program: A bounty program was announced to encourage people to share information that could help the authorities retrieve the stolen money. The bounty was capped at 23 million dollars.

Further Investigations

WazirX stated that it has engaged cybersecurity professionals to help with identifying and compensating for the losses. The exchange is still analyzing the forensic data and working with the police to track the stolen assets. Nevertheless, the prospects of a full recovery remain doubtful, primarily because of the complexity of the attack and the methods used by the attackers.

Precautionary measures:

The WazirX cyber attack clearly shows the need to improve security and regulation in the cryptocurrency industry. As exchanges become increasingly targeted by hackers, there is a pressing need for:

- Stricter Security Protocols: A commitment to technical safeguards, such as multi-factor authentication (MFA), along with constant monitoring of activity in users’ wallets.

- Regulatory Oversight: Formal laws that require cryptocurrency exchange platforms to maintain proper security in order to safeguard their users and their investments.

- Community Awareness: To avoid such predicaments, emerging attack techniques need to be studied and awareness spread, particularly about the scams and phishing attempts that are likely to follow such breaches.

Conclusion:

The cyber attack on WazirX exposes weaknesses in the cryptocurrency market and offers valuable lessons for strengthening security. It highlights critical vulnerabilities in cryptocurrency exchanges, even those employing advanced security measures like multisignature wallets and whitelisting policies. The attack's complexity, involving payload manipulation, chain hopping, and zero balance transactions, underscores the attackers' meticulous planning and the challenges in tracing stolen assets. This case sends a strong message about the need for solid security measures and constant attention to security in the rapidly growing world of digital assets. Furthermore, the incident highlights the importance of community awareness and education on emerging threats like scams and phishing attempts, which usually follow such breaches. By fostering a culture of vigilance and knowledge, the cryptocurrency community can better defend against future attacks.

Reference:

https://wazirx.com/blog/important-update-cyber-attack-incident-and-measures-to-protect-your-assets/

https://www.linkedin.com/pulse/wazirx-cyberattack-in-depth-analysis-jyqxf

Introduction

India plans to draft its first AI regulation framework. The draft will be discussed and debated in June-July this year, as stated by Union Minister of Skill Development and Entrepreneurship Rajeev Chandrasekhar. The Indian government aims to fully utilise AI for economic growth, with a focus on healthcare, drug discovery, agriculture, and farmer productivity.

Government Approach to Regulating AI

Chandrasekhar stated that the government's approach to AI regulation involves establishing principles and a comprehensive list of harms and criminalities. The government prefers clear platform standards that address bias and misuse during model training over regulating AI at specific stages of its development. Union Minister Chandrasekhar also highlighted the importance of legal compliance and the risks faced by entrepreneurs who disregard regulations in the digital economy. He warned of "severe consequences" for non-compliance.

Addressing the opening session of the two-day Nasscom leadership summit in Mumbai, the Union minister added that the intention is to harness AI for economic growth and address potential risks and harms. Mr. Chandrasekhar stated that the government is committed to developing AI-skilled individuals. He also highlighted the importance of a global governance framework that deals with the safety and trust of AI.

Union Minister Chandrasekhar also said that, with 900 million Indians online and 1.3 billion people soon to be connected to the global internet, India has both an opportunity and a responsibility to collaborate on regulations that establish legal safeguards to protect consumers and citizens. He further added that the framework is being retrofitted to address the complexity and impact of AI in safety infrastructure. The goal is to ensure legal guardrails for AI, a kinetic enabler of the digital economy, along with safety and trust, and accountability for those using the AI platform.

Prioritizing Safety and Trust in AI Development

Union minister Chandrasekhar announced that the framework will be discussed at the upcoming Global Partnership on Artificial Intelligence (GPAI) event, a multi-stakeholder initiative with 29 member countries aiming to bridge the gap between theory and practice on AI by supporting research on AI-related priorities. Chandrasekhar emphasises the importance of safety and trust in generative AI development. He believes that every platform must be legally accountable for any harm it causes or enables and should not enable criminality. He advocated for safe and trustworthy AI.

Conclusion

India is drafting its first AI regulation framework, as highlighted by Union Minister Rajeev Chandrasekhar. This framework aims to harness the potential of AI while ensuring safety, trust, and accountability. The framework will focus on principles, comprehensive standards, and legal compliance to navigate the complexities of AI's impact on sectors like healthcare, agriculture, and the digital economy. India recognises the need for robust legal safeguards that protect citizens while fostering innovation, economic growth, and a culture of trustworthy AI development.

References:

- https://www.livemint.com/ai/artificial-intelligence/india-to-come-up-with-ai-regulations-framework-by-june-july-this-year-rajeev-chandrasekhar-msde-11708409300377.html

- https://timesofindia.indiatimes.com/business/india-business/india-to-develop-draft-ai-framework-by-june-july-chandrasekhar/articleshow/107865548.cms

- https://newsonair.gov.in/News?title=Government-to-come-out-with-draft-regulatory-framework-for-Artificial-Intelligence-by-July-2024&id=477637