#FactCheck - Truth Behind the Viral Snake Rain Video: AI-Generated, Not Real

Executive Summary

A shocking video claiming to show snakes raining down from the sky is going viral on social media. The clip shows what appear to be cobras and pythons falling in large numbers instead of rain, while people are seen running in panic through a marketplace. The video is being shared with the claim that it is the result of “tampering with nature” and that sudden snake rainfall occurred in an unidentified country. (Links and archived versions provided)

CyberPeace researched the viral claim and found it to be false. The video does not depict a real incident. Instead, it has been generated using artificial intelligence (AI).

Fact Check

To verify the authenticity of the video, we extracted keyframes and conducted a reverse image search using Google Lens. We did not find any credible media report linked to the viral footage. We also searched relevant keywords on Google but found no reliable national or international news coverage supporting the claim. If snakes had genuinely rained from the sky in any country, the incident would almost certainly have received widespread media attention globally.

A frame-by-frame analysis of the video revealed multiple inconsistencies and visual anomalies:

- In the first two seconds, a massive snake appears to fall onto electric wires, yet its body passes straight through the wires, which is physically impossible.
- The snakes falling from the sky and crawling on the ground move in an unnatural manner. Instead of falling under gravity, they appear to float mid-air.
- Around the 9–10 second mark, a person lying on the ground has a visibly distorted hand structure, a common artifact seen in AI-generated videos.

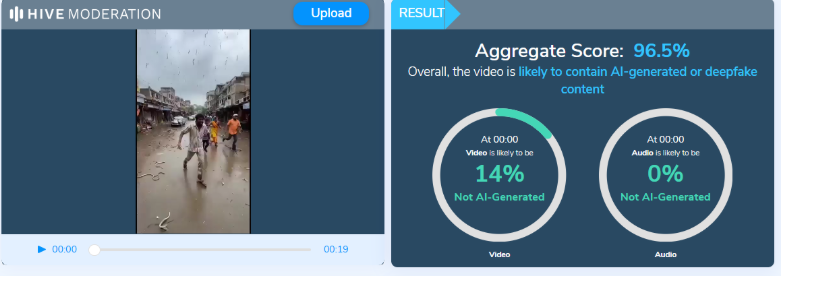

Such irregularities are typical indicators of AI-generated content. The viral video was further analyzed using the AI detection tool Hive Moderation, which indicated a 96.5% probability that the video was AI-generated.

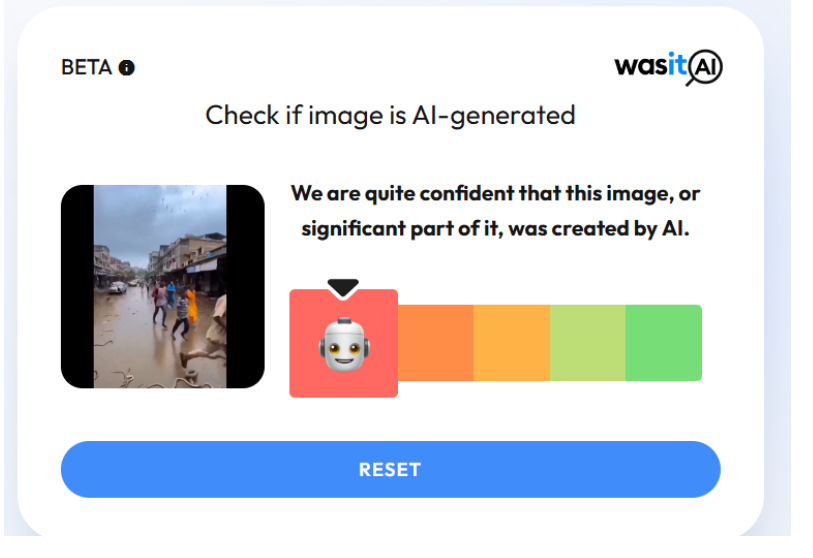

The image detection tool WasitAI also classified the visuals in the viral clip as highly likely to be AI-generated.
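For readers curious about the keyframe extraction step mentioned at the start of this section, the idea can be sketched in a few lines of Python. This is only an illustration, not the tool or method used in this fact check, which is not specified here. It assumes the video has already been decoded into grayscale frames by some other program, and the names `Frame`, `mean_abs_diff`, `select_keyframes` and the `threshold` value are hypothetical. A frame is kept as a keyframe when it differs enough from the last kept frame.

```python
from typing import List, Sequence

# Hypothetical representation: one flattened grayscale frame, values 0-255.
Frame = Sequence[int]


def mean_abs_diff(a: Frame, b: Frame) -> float:
    """Average per-pixel absolute difference between two same-sized frames."""
    if len(a) != len(b):
        raise ValueError("frames must have the same number of pixels")
    if not a:
        return 0.0
    return sum(abs(x - y) for x, y in zip(a, b)) / len(a)


def select_keyframes(frames: Sequence[Frame], threshold: float = 20.0) -> List[int]:
    """Return indices of frames that differ enough from the last kept frame.

    The threshold is an assumed, illustrative value, not a recommended setting.
    """
    if not frames:
        return []
    kept = [0]  # always keep the first frame
    for i in range(1, len(frames)):
        if mean_abs_diff(frames[i], frames[kept[-1]]) > threshold:
            kept.append(i)
    return kept


# Toy input: four 4-pixel "frames"; only the third changes substantially.
frames = [
    [10, 10, 10, 10],
    [12, 11, 10, 9],
    [200, 190, 210, 205],
    [201, 191, 209, 204],
]
print(select_keyframes(frames))  # prints [0, 2]
```

Each selected frame could then be saved as an image and submitted to a reverse image search. Comparing against the last kept frame, rather than the immediately preceding one, avoids missing a slow change that builds up over many frames.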

Conclusion

CyberPeace’s research confirms that the viral video claiming to show snakes raining from the sky is not authentic. The footage was created using artificial intelligence and does not depict a real event.