#FactCheck: AI-Generated Image Falsely Shows SRH Team Seeking Blessings

Executive Summary

A post is rapidly going viral on social media claiming to show Sunrisers Hyderabad (SRH) captain Ishan Kishan, CEO Kavya Maran, and the team seeking blessings in front of a portrait of Jesus Christ at the Rajiv Gandhi International Cricket Stadium before a match. The image is being shared as a genuine pre-match moment. However, research by CyberPeace found that the viral image is not real but was generated using artificial intelligence (AI). There are no credible media reports or official updates from Sunrisers Hyderabad confirming any such pre-match activity. Further analysis using AI detection tools also indicated that the image is likely synthetic. Therefore, the claim made in the viral post is false.

Claim

A Facebook user shared the image with the caption: “Preparation starts from within. Before taking the field at the Rajiv Gandhi Stadium, Ishan Kishan, Abhishek Sharma, and the SRH squad seek blessings. With Kavya Maran and the team united in faith, the Orange Army is ready for battle!”

- https://archive.ph/wip/dtbZ0

- https://www.facebook.com/13CricketNews/posts/preparation-starts-from-within-before-taking-the-field-at-the-rajiv-gandhi-stadi/1790225659038036/

Fact Check

A close inspection of the viral image revealed several inconsistencies. A cooler box in the image bears a Mumbai Indians sticker, even though Mumbai Indians and Sunrisers Hyderabad had not played each other in IPL 2026 at the time implied by the claim. Their scheduled match is set for April 29, 2026, at Wankhede Stadium, not at the Hyderabad venue shown in the image.

- https://www.iplt20.com/teams/sunrisers-hyderabad/schedule

Additionally, the image incorrectly displays Dream11 as SRH's title sponsor, whereas Shree Cement is the team's official title sponsor for the IPL 2026 season.

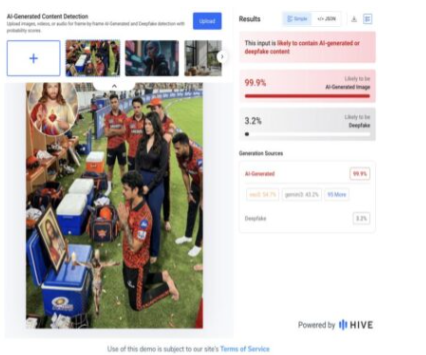

To further verify authenticity, the image was analysed using AI detection tools. Hive Moderation assigned it a 99.9% probability of being AI-generated, strongly indicating that it is not genuine.

Conclusion

The viral claim is false. The image showing Sunrisers Hyderabad players and their CEO praying before a match is AI-generated and does not depict a real event. It has been circulated with a misleading narrative and lacks any factual basis.

Related Blogs

Executive Summary:

A viral claim circulating on social media suggests that the Indian government is offering a 50% subsidy on tractor purchases under the so-called "Kisan Tractor Yojana." However, our research reveals that the website promoting this scheme, which claims to operate under the Ministry of Agriculture and Farmers Welfare, is misleading. This report aims to inform readers about the deceptive nature of this claim and to emphasize the importance of safeguarding personal information against fraudulent schemes.

Claim:

A website has been circulating misleading information, claiming that the Indian government is offering a 50% subsidy on tractor purchases under the so-called "Kisan Tractor Yojana." Additionally, a YouTube video promoting this scheme suggests that individuals can apply by submitting certain documents and paying a small, supposedly refundable application fee.

Fact Check:

Our research has confirmed that the Government of India has no scheme named 'PM Kisan Tractor Yojana.' The circulating announcement is false and appears to be an attempt to defraud farmers.

While the government does provide various agricultural subsidies under recognized schemes such as the PM Kisan Samman Nidhi and the Sub-Mission on Agricultural Mechanization (SMAM), no initiative named 'PM Kisan Tractor Yojana' exists. The claim therefore appears to be a phishing attempt aimed at deceiving farmers and unlawfully collecting their personal or financial information.

Farmers and stakeholders are advised to rely only on official government sources for scheme-related information and to exercise caution against such deceptive practices.

To assess the authenticity of the “PM Kisan Tractor Yojana” claim, we reviewed the websites farmertractoryojana.in and tractoryojana.in. Our analysis revealed several inconsistencies, indicating that these websites are fraudulent.

As part of our verification process, we evaluated tractoryojana.in using Scam Detector to determine its trustworthiness. The results showed a low trust score, raising concerns about its legitimacy. Similarly, we conducted the same check for farmertractoryojana.in, which also appeared untrustworthy and risky. The detailed results of these assessments are attached below.

Given that these websites falsely present themselves as government-backed initiatives, our findings strongly suggest that they are part of a fraudulent scheme designed to mislead and exploit individuals seeking genuine agricultural subsidies.

During our research, we examined the "How it Works" section of the website, which outlines the application process for the alleged “PM Kisan Tractor Yojana.” Notably, applicants are required to pay a refundable application fee to proceed with their registration. It is important to emphasize that no legitimate government subsidy program requires applicants to pay a refundable application fee.

Our research found that the address listed on the website, “69A, Hanuman Road, Vile Parle East, Mumbai 400057,” is not associated with any government office or agricultural subsidy program. This further confirms the website’s fraudulent nature. Farmers should verify subsidy programs through official government sources to avoid scams.

A key inconsistency is the absence of a verified social media presence. Most legitimate government programs maintain official social media accounts for updates and communication. However, these websites fail to provide any such official handles, further casting doubt on their authenticity.

Upon attempting to log in, both websites redirect to the same page, suggesting they may be operated by the same entity or individual. This further raises concerns about their legitimacy and reinforces the likelihood of fraudulent activity.

Conclusion:

Our research confirms that the "PM Kisan Tractor Yojana" claim is fraudulent. No such government scheme exists, and the websites promoting it exhibit multiple red flags, including low trust scores, a misleading application process requiring a refundable fee, a false address, and the absence of an official social media presence. Additionally, both websites redirect to the same page, suggesting they are operated by the same entity. Farmers are advised to rely on official government sources to avoid falling victim to such scams.

- Claim: The government is offering a subsidy on tractors under the PM Kisan Tractor Yojana.

- Claimed On: Social Media

- Fact Check: False and Misleading

Introduction

Today, almost everything is online, and the world is deeply interconnected. Data breaches and cyberattacks have become a reality for organisations across many industries. In a recent case, Scandinavian Airlines (SAS) experienced a cyberattack that exposed customer details, highlighting the critical importance of protecting customer privacy. The incident is a wake-up call for airlines and other businesses to evaluate their cybersecurity measures and learn valuable lessons about safeguarding customer data. In this blog, we explore the incident and discuss strategies for protecting customer privacy in the digital age.

Analysing the backdrop

The incident came as a shock to the aviation industry: SAS Scandinavian Airlines was the victim of a cyberattack that compromised consumer data. Let’s look at the possible motives of the attackers and the techniques they may have used:

Motive Behind the Attack: Understanding what may have driven the attackers is essential to understanding the context of the Scandinavian Airlines cyberattack. Financial gain, geopolitical conflict, activism, and personal vendettas are common motivators for cybercriminals. Identifying the purpose of an attack can provide insight into the attacker’s aims and its possible impact on both the targeted organisation and its customers. Likewise, understanding the attack vector and the strategies used reveals the attackers’ level of sophistication and possible weaknesses in an organisation’s cybersecurity defences. The Scandinavian Airlines attack might have involved phishing, spyware, ransomware, or the exploitation of software vulnerabilities. Analysing these tactics allows organisations to strengthen their defences against similar attacks.

Impact on Victims: The victims of the Scandinavian Airlines (SAS) cyberattack, including customers and individuals associated with the company, have suffered substantial consequences. Data breaches and cyberattacks can be serious because they leak personal information.

1) Financial Losses and Fraudulent Activities: One of the most immediate and upsetting consequences of a cyberattack is the possibility of financial loss. Exposed personal information, such as credit card numbers, can be used by hackers to carry out illegal activities such as unauthorised transactions and identity theft. Victims may face financial difficulties and need to spend time and money resolving these problems.

2) Concerns about Privacy and Personal Security: A breach of personal data can significantly affect the privacy and personal security of victims. The disclosed information, including names, addresses, and contact details, might be exploited for malicious purposes such as targeted phishing or physical harassment. Victims may feel heightened anxiety about their safety and privacy, which can disrupt their everyday lives and cause distress.

3) Reputational Damage and Trust Issues: The cyberattack may cause reputational harm to people linked with Scandinavian Airlines, such as employees or partners. The breach may erode consumers’ and stakeholders’ faith in the organisation, creating a negative perception of its ability to protect personal information. This loss of trust might have long-term consequences for the affected people’s professional and personal relationships.

4) Emotional Stress and Psychological Impact: The psychological impact of a cyberattack can be severe. The fear, worry, and sense of violation caused by having personal information exposed can lead to emotional stress and psychological suffering. Victims may experience feelings of vulnerability, loss of control, and distrust of digital platforms, potentially harming their overall quality of life.

5) Time and Effort Required for Remediation: Dealing with the repercussions of a cyberattack demands significant time and effort from victims. They may need to call financial institutions, reset passwords, monitor accounts for unusual activity, and use credit monitoring services. Resolving the consequences of a data breach can be a difficult and time-consuming process that adds stress and inconvenience to victims’ lives.

6) Secondary Impacts: The effects of a cyberattack can continue well beyond the immediate implications. Future repercussions for victims may include trouble obtaining credit or insurance, difficulty finding work, and ongoing worry that their personal information will be misused. These secondary effects can seriously affect victims’ financial and general well-being.

Beyond these effects, lost trust takes time to rebuild.

Takeaways from this attack

The cyberattack on Scandinavian Airlines (SAS) is a sharp reminder of the ever-present and growing menace of cybercrime. The incident provides crucial insights that businesses and individuals can use to strengthen their cybersecurity defences. Below, we examine the lessons learned from the Scandinavian Airlines cyberattack and the steps that can be taken to improve cybersecurity and reduce future risks. Key points to consider include:

Proactive Risk Assessment and Vulnerability Management: The cyberattack on Scandinavian Airlines emphasises the significance of regular risk assessments and vulnerability management. Organisations must proactively identify and fix possible system and network vulnerabilities. Regular security audits, penetration testing, and vulnerability assessments can help identify flaws before malicious actors exploit them.

Strong Security Measures and Best Practices: To guard against cyberattacks, organisations need to implement effective security measures and follow cybersecurity best practices. The Scandinavian Airlines attack underlines the importance of effective firewalls, up-to-date antivirus software, secure configurations, frequent software patching, and strong password policies. Using multi-factor authentication and encrypting sensitive data can also considerably improve security.

Employee Training and Awareness: Human error is frequently a major factor in cyberattacks. Organisations should prioritise training and awareness programmes that educate employees about phishing schemes, social engineering methods, and safe internet practices. By cultivating a culture of cybersecurity awareness, organisations can make employees the first line of defence against attacks.

Data Protection and Privacy Measures: Protecting consumer data should be a key priority for businesses. The Scandinavian Airlines attack emphasises the significance of effective data protection measures, such as encryption and access controls. Adhering to data privacy standards and ensuring secure data storage and transfer can reduce the risks associated with data breaches.

Collaboration and Information Sharing: The Scandinavian Airlines cyberattack emphasises the need for collaboration and information sharing within the cybersecurity community. Organisations should actively share threat intelligence, cooperate with industry partners, and stay current on emerging cyber threats. Sharing information and experiences helps build a collective defence against cybercrime.

Conclusion

The Scandinavian Airlines cyberattack is a reminder that cybersecurity must be a key concern for organisations and individuals alike. By learning from this incident and adopting its lessons, organisations can improve their cybersecurity safeguards, proactively discover vulnerabilities, and respond effectively to future attacks. Building a strong cybersecurity culture, regularly upgrading security practices, and encouraging cooperation within the cybersecurity community are all critical steps toward a more resilient digital world. Through continuous monitoring and proactive action, we can aim to stay one step ahead of cybercriminals and protect valuable information assets.

Executive Summary:

A circulating picture said to show United States President Joe Biden wearing a military uniform during a meeting with military officials has been found to be AI-generated. The viral image is being shared with the false claim that it shows President Biden authorizing US military action in the Middle East. The CyberPeace Research Team has determined that the photo was created with generative AI and is not real. Multiple visual discrepancies in the picture mark it as a product of AI.

Claims:

A viral image, created using artificial intelligence, purports to show US President Joe Biden wearing a military outfit during a meeting with military officials. The picture is being shared on social media with the false claim that it shows President Biden convening to authorize the use of the US military in the Middle East.


Fact Check:

The CyberPeace Research Team found that the photo of US President Joe Biden in a military uniform at a meeting with military officials was made using generative AI and is not authentic. Several obvious visual discrepancies indicate that it is an AI-generated image.

First, President Biden's eyes are completely black; second, the faces of the military officials appear blended; and third, a phone stands upright without any support.

We then ran the image through the Image AI Detection tool.

The tool rated the image 4% human and 96% AI-generated, indicating that it is deepfake content.

We then checked the image with another tool, Hive Detector.

Hive Detector rated the image as 100% AI-generated, further indicating that it is deepfake content.

Conclusion:

The growth of AI-generated content makes it harder to tell fact from fiction, particularly on social media. The fake photo supposedly showing President Joe Biden underscores the need for critical thinking and for verifying information online. As technology continues to evolve, people must stay watchful and rely on verified sources to counter the spread of disinformation. Initiatives that raise awareness of the existence and impact of AI-generated content should also be undertaken to promote a more informed and digitally literate society.

- Claim: A circulating picture shows United States President Joe Biden wearing a military uniform during a meeting with military officials.

- Claimed on: X

- Fact Check: Fake