Introduction

Smartphones have revolutionised human connectivity. In 2023, it was estimated that almost 96% of the global digital population accessed the internet via mobile phones, with India alone accounting for 1.05 billion users. Information consumption has grown exponentially thanks to the accessibility these devices provide. They put information within reach wherever one is, and have transformed how we engage with the world around us, whether that means skimming work emails while commuting, streaming video during breaks, reading an ebook at our convenience or catching up on the news at any time or place. Mobile phones grant us instant access to the web and are always within reach.

But this instant connection has its downsides too, and one of the most worrying is the rampant rise of misinformation. These small screens, and our constant, on-the-go dependence on them, can be linked to the spread of “fake news,” as people consume more and more content in rapid bursts without taking the time to process it or think deeply about its authenticity. There is an underlying cultural shift in how we approach information and learning: the onslaught of “bite-sized information” discourages people from researching what they are being told or shown. The focus has shifted from learning deeply to consuming more and sharing faster. This change in audience behaviour is making us vulnerable to misinformation, disinformation and unchecked foreign influence.

The Growth of Mobile Internet Access

More than 5 billion people are connected to the internet, and web traffic is increasing rapidly. In the developed countries of North America and Europe, mobile internet penetration is close to universal. In contrast, the developing countries of Africa, Asia, and Latin America are seeing rapid growth in penetration. The introduction of affordable smartphones and low-cost mobile data plans has expanded access to internet connectivity, and the development of 4G and 5G infrastructure has further narrowed connectivity gaps. This widespread access to the mobile internet has democratised information, allowing millions of users to participate in the digital economy. Being able to access educational resources while also engaging in global conversations is one example of this democratisation. It reduces the digital divide between diverse groups and empowers communities with unprecedented access to knowledge and opportunities.

The Nature of Misinformation in the Mobile Era

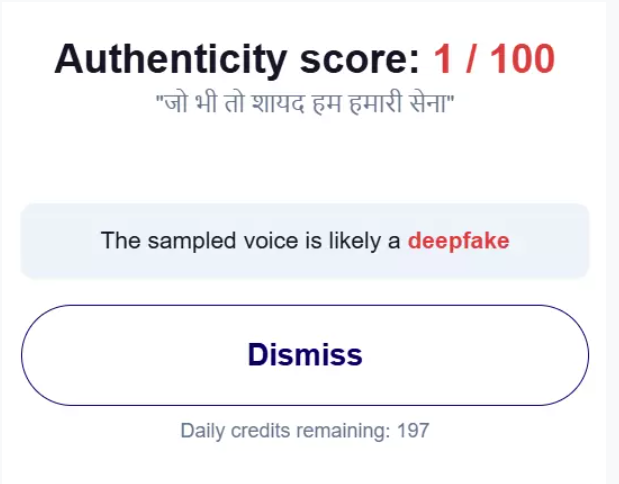

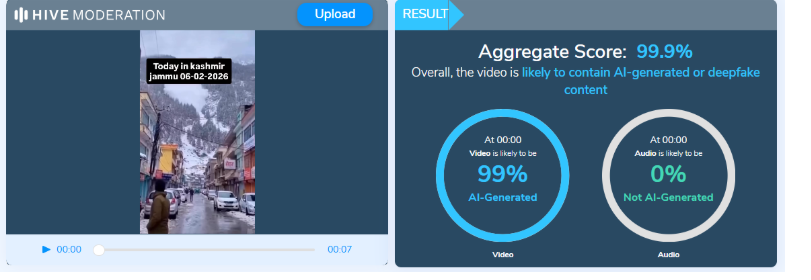

The spread of misinformation has become more prominent in recent times, and one of the contributing factors is the rise of mobile internet. Instant connectivity has put social media platforms like Facebook, WhatsApp, and X (formerly Twitter) on a single compact, portable device. These platforms enable users to share content instantly with a wide user base, often without verifying its accuracy. The virality of social media sharing, where posts can reach thousands of users in seconds, accelerates the spread of false information. This ease of sharing, combined with algorithms that prioritise engagement, creates fertile ground for misinformation to flourish, misleading vast numbers of people before corrections or factual information can be disseminated.

Several factors amplify the sharing of misinformation through mobile internet: algorithmic amplification that prioritises engagement; the ease of sharing content, driven by instant access and user-generated content; the limited media literacy of users; and echo chambers that reinforce existing biases and spread false information.

Gaps and Challenges Due to the Increased Accessibility of Mobile Internet

Despite growing concerns about the spread of misinformation through mobile internet, policy responses remain inadequate, particularly in developing countries. One gap is the lack of algorithm regulation: social media platforms prioritise engaging content, which often fuels misinformation. Inadequate international cooperation further complicates enforcement, as national regulations struggle to address the cross-border nature of misinformation.

Additionally, balancing content moderation with free speech remains challenging, with efforts to curb misinformation sometimes leading to concerns over censorship.

Finally, a deficit in media literacy leaves many vulnerable to false information. Governments and international organisations must prioritise public education to equip users with the required skills to evaluate online content, especially in low-literacy regions.

CyberPeace Recommendations

Addressing misinformation via mobile internet requires a collaborative, multi-stakeholder approach.

- Governments should mandate algorithm transparency, ensuring social media platforms disclose how content is prioritised and give users more control.

- Collaborative fact-checking initiatives between governments, platforms, and civil society could help flag or correct false information before it spreads, especially during crises like elections or public health emergencies.

- International organisations should lead efforts to create standardised global regulations to hold platforms accountable across borders.

- Large-scale digital literacy campaigns are crucial, teaching the public how to assess online content and avoid misinformation traps.

Conclusion

Mobile internet access has transformed information consumption and helped bridge the digital divide. At the same time, it has accelerated the spread of misinformation. The global reach and instant nature of mobile platforms, combined with algorithmic amplification, have created significant challenges in controlling the flow of false information. Addressing this issue requires a collective effort from governments, tech companies, and civil society to make algorithms transparent, promote fact-checking, and establish international regulatory standards. Digital literacy should also be strengthened so that users can assess online content and counter the risks it poses.
