#FactCheck - AI-Generated Clip of Lion Carrying Woman Shared as Real Incident

Executive Summary

A video circulating on social media shows a lion carrying away a woman who was washing clothes near a pond. Users are sharing the clip claiming it depicts a real incident. However, research by CyberPeace found the viral claim to be false. The research revealed that the video is not real but AI-generated.

Claim

A user on Facebook shared the viral video claiming that a lion attacked and carried away a woman from a pond while she was washing clothes. The link to the post and its archived version are provided below.

Fact Check:

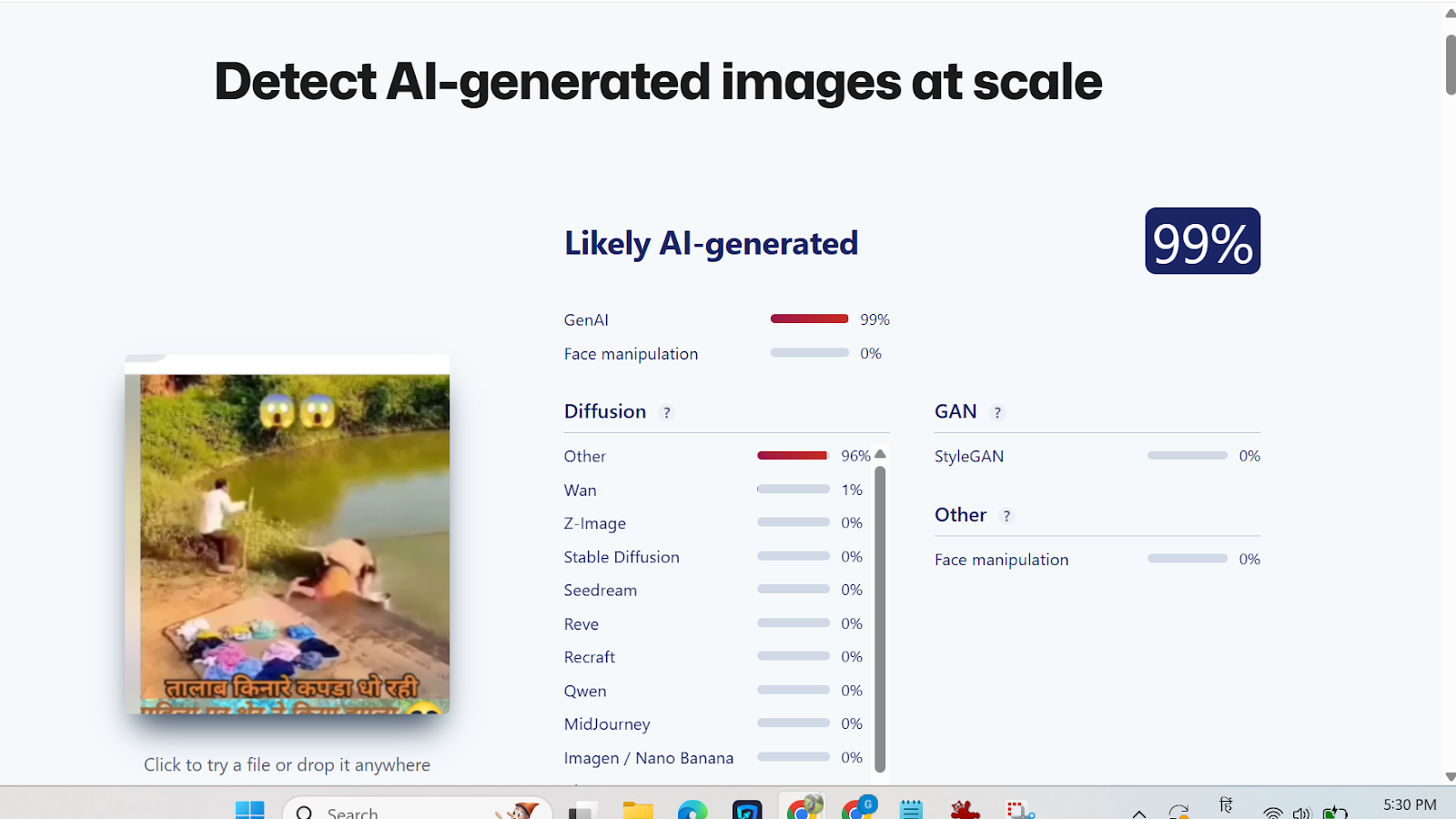

Upon closely examining the viral clip, we noticed several visual inconsistencies that raised suspicion about its authenticity. The video was then analyzed using the AI-detection tool Sightengine. According to the analysis results, the viral video was identified as AI-generated.

Conclusion

The research confirms that the viral video does not depict a real incident. The clip is digitally created using artificial intelligence and is being falsely shared as a genuine event.

Related Blogs

Introduction

The link between social media and misinformation is undeniable. Misinformation, particularly the kind that evokes emotion, spreads like wildfire on social media and has serious consequences, like undermining democratic processes, discrediting science, and promulgating hateful discourses which may incite physical violence. If left unchecked, misinformation propagated through social media has the potential to incite social disorder, as seen in countless ethnic clashes worldwide. This is why social media platforms have been under growing pressure to combat misinformation and have been developing models such as fact-checking services and community notes to check its spread. This article explores the pros and cons of the models and evaluates their broader implications for online information integrity.

How the Models Work

- Third-Party Fact-Checking Model (formerly used by Meta): Meta initiated this program in 2016 after claims of foreign election interference through dis/misinformation on its platforms. It entered partnerships with third-party organizations like AFP and specialist sites like Lead Stories and PolitiFact, which are certified by the International Fact-Checking Network (IFCN) for meeting neutrality, independence, and editorial quality standards. These fact-checkers identify misleading claims that go viral on platforms and publish verified articles on their websites, providing correct information. They also submit these articles to Meta through an interface, which may link the fact-checked article to the social media post that contains factually incorrect claims. The post is then flagged as false or misleading, and a link to the article appears under the post for users to refer to. Such content is demoted by the platform's algorithm, though not removed entirely unless it violates Community Standards. However, in January 2025, Meta announced it was scrapping this program and beginning to test X’s Community Notes Model in the USA, before rolling it out in the rest of the world. Meta alleges that the independent fact-checking model is riddled with personal biases, lacks transparency in decision-making, and has evolved into a censoring tool.

- Community Notes Model (used by X and being tested by Meta): This model relies on crowdsourced contributors who sign up for the program, write contextual notes on posts, and rate the notes written by other users on X. The platform uses a bridging algorithm to publicly display only those notes that receive cross-ideological consensus from raters across the political spectrum. It does this by boosting notes that receive support from raters of differing political leanings, which it infers from their engagement with previous notes. The benefit of this system is that biases are less likely to creep into the flagging mechanism. Further, the process is relatively more transparent than an independent fact-checking mechanism, since all Community Notes contributions are publicly available for inspection and the ranking algorithm is open to anyone, allowing for external evaluation of the system.
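The bridging idea described above can be illustrated with a deliberately simplified sketch. This is not X's actual ranking algorithm, which is more involved; it only shows the core rule that a note is displayed when raters from different groups independently find it helpful. The function name, the group labels, and both thresholds are hypothetical, and the grouping of raters is assumed to have been inferred elsewhere from their past ratings.

```python
from collections import defaultdict

# Hypothetical thresholds, chosen for illustration only.
MIN_RATINGS_PER_GROUP = 2
MIN_HELPFUL_SHARE = 0.6


def note_is_shown(ratings, rater_group):
    """Return True only if every rater group independently finds the note helpful.

    ratings: list of (rater_id, is_helpful) pairs for a single note.
    rater_group: dict mapping rater_id to a group label (assumed to be
        inferred elsewhere from each rater's past rating behaviour).
    """
    helpful = defaultdict(int)
    total = defaultdict(int)
    for rater_id, is_helpful in ratings:
        group = rater_group[rater_id]
        total[group] += 1
        helpful[group] += int(is_helpful)

    for group in set(rater_group.values()):
        # A group that has barely rated the note cannot confirm consensus.
        if total[group] < MIN_RATINGS_PER_GROUP:
            return False
        # Support from one side alone is not enough to display the note.
        if helpful[group] / total[group] < MIN_HELPFUL_SHARE:
            return False
    return True


rater_group = {"a": "group_1", "b": "group_1", "c": "group_2", "d": "group_2"}
one_sided = [("a", True), ("b", True), ("c", False), ("d", False)]
bridging = [("a", True), ("b", True), ("c", True), ("d", True)]

print(note_is_shown(one_sided, rater_group))
print(note_is_shown(bridging, rater_group))
```

Requiring agreement within each group, rather than a simple majority overall, is what makes a note on a divisive topic hard to publish: strong support from one side cannot outvote the other.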

CyberPeace Insights

Meta’s uptake of a crowdsourced model signals social media’s shift toward decentralized content moderation, giving users more influence over what gets flagged and why. However, the model’s reliance on agreement among raters with diverse views can make it slow. A study (by Wirtschafter & Majumder, 2023) shows that only about 12.5 per cent of all submitted notes are seen by the public, leaving most misleading content unchecked. Further, many notes on divisive issues like politics and elections may never see the light of day, since reaching a consensus on such topics is hard. This means that many misleading posts may not be publicly flagged at all, thereby hindering risk mitigation efforts. This casts doubt on the model’s ability to check the virality of posts that can have adverse societal impacts, especially on vulnerable communities. On the other hand, the fact-checking model suffers from a lack of transparency, which has damaged user trust and led to allegations of bias.

Since both models have their advantages and disadvantages, the future of misinformation control will require a hybrid approach. Data accuracy and polarization on social media are issues bigger than any single tool or model can effectively handle. Thus, platforms can combine expert validation with crowdsourced input to achieve accuracy, transparency, and scalability.

Conclusion

Meta’s shift to a crowdsourced model of fact-checking is likely to have bigger implications for public discourse, since social media platforms hold immense power in terms of how their policies affect politics, the economy, and societal relations at large. This change comes against the background of sweeping cost-cutting in the tech industry, political changes in the USA and abroad, and increasing attempts to make Big Tech platforms more accountable in jurisdictions like the EU and Australia, which are known for their welfare-oriented policies. These co-occurring contestations are likely to shape how misinformation-countering tactics develop. For now, the crowdsourcing model is still in development, and its efficacy is yet to be seen, especially regarding polarizing topics.

References

- https://www.cyberpeace.org/resources/blogs/new-youtube-notes-feature-to-help-users-add-context-to-videos

- https://en-gb.facebook.com/business/help/315131736305613?id=673052479947730

- http://techxplore.com/news/2025-01-meta-fact.html

- https://about.fb.com/news/2025/01/meta-more-speech-fewer-mistakes/

- https://communitynotes.x.com/guide/en/about/introduction

- https://blogs.lse.ac.uk/impactofsocialsciences/2025/01/14/do-community-notes-work/?utm_source=chatgpt.com

- https://www.techpolicy.press/community-notes-and-its-narrow-understanding-of-disinformation/

- https://www.rstreet.org/commentary/metas-shift-to-community-notes-model-proves-that-we-can-fix-big-problems-without-big-government/

- https://tsjournal.org/index.php/jots/article/view/139/57

Introduction

On the precipice of a new domain of existence, the metaverse emerges as a digital cosmos, an expanse where the horizon is not sky, but a limitless scope for innovation and imagination. It is a sophisticated fabric woven from the threads of social interaction, leisure, and an accelerated pace of technological progression. This new reality, a virtual landscape stretching beyond the mundane encumbrances of terrestrial life, heralds an evolutionary leap where the laws of physics yield to the boundless potential inherent in our creativity. Yet, the dawn of such a frontier does not escape the spectre of an age-old adversary—financial crime—the shadow that grows in tandem with newfound opportunity, seeping into the metaverse, where crypto-assets are no longer just an alternative but the currency du jour, dazzling beacons for both legitimate pioneers and shades of illicit intent.

The metaverse, by virtue of its design, is a canvas for the digital repaint of society—a three-dimensional realm where the lines between immersive experiences and entertainment blur, intertwining with surreal intimacy within this virtual microcosm. Donning headsets like armor against the banal, individuals become avatars; digital proxies that acquire the ability to move, speak, and perform an array of actions with an ease unattainable in the physical world. Within this alternative reality, users navigate digital topographies, with experiences ranging from shopping in pixelated arcades to collaborating in virtual offices; from witnessing concerts that defy sensory limitations to constructing abodes and palaces from mere codes and clicks—an act of creation no longer beholden to physicality but to the breadth of one's ingenuity.

The Crypto Assets

The lifeblood of this virtual economy pulsates through crypto-assets. These digital tokens represent value or rights held on distributed ledgers, a technology like blockchain, which serves as both a vault and a transparent tapestry, chronicling the pathways of each digital asset. To hop onto the carousel of this economy requires a digital wallet, which is both a storeroom and a gateway for the acquisition and trade of these virtual valuables. Cryptocurrencies, along with NFTs (non-fungible tokens), have accelerated from obscure digital curios to precious artifacts. According to blockchain analytics firm Elliptic, more than US$100 million worth of NFTs were stolen between July 2021 and July 2022. This rampant theft underlines the captivating allure of these virtual certificates, which do not just capture art, music, and gaming, but embody their very soul.

Yet, as the metaverse burgeons, so do the complexity and diversity of financial transgressions. From phishing to sophisticated fraud schemes, criminals craft insidious simulacrums of legitimate havens, aiming to drain the crypto-assets of the unwary. In the preceding year, a daunting figure rose to prominence: US$14 billion worth of crypto-assets vanished, lost to the abyss of deception and duplicity. Social engineering, too, emerges from the shadows, a sort of digital chicanery that preys not upon weaknesses of the system, but upon the psychological vulnerabilities of its users, with scammers adorned in the guise of authenticity extracting trust and assets with Machiavellian precision.

The New Wave of Fincrimes

Extending their tentacles further, perpetrators of cybercrime exploit code vulnerabilities, engage in wash trading, obscure the trails of money laundering, evade sanctions, and even dare to fund activities that send ripples of terror across the physical and virtual divide. The intricacies of smart contracts and the decentralized nature of these worlds, designed to be bastions of innovation, morph into paths paved for misuse and exploitation. The openness of blockchain transactions, the transparency that should act as a deterrent, becomes a paradox, a double-edged sword for the law enforcement agencies tasked with delineating the networks of faceless adversaries.

Addressing financial crime in the metaverse is Herculean labour, requiring an orchestra of efforts—harmonious, synchronised—from individual users to mammoth corporations, from astute policymakers to vigilant law enforcement bodies. Users must furnish themselves with critical awareness, fortifying their minds against the siren calls that beckon impetuous decisions, spurred by the anxiety of falling behind. Enterprises, the architects and custodians of this digital realm, are impelled to collaborate with security specialists, to probe their constructs for weak seams, and to reinforce their bulwarks against the sieges of cyber onslaughts. Policymakers venture onto the tightrope walk, balancing the impetus for innovation against the gravitas of robust safeguards—a conundrum played out on the global stage, as epitomised by the European Union's strides to forge cohesive frameworks to safeguard this new vessel of human endeavour.

The Austrian Example

Consider the case of Austria, where the tapestry of laws entwining crypto-assets spans a gamut of criminal offences, from data breaches to the complex webs of money laundering and the financing of dark enterprises. Users and corporations alike must become cartographers of local legislation, charting their ventures and vigilances within the volatile seas of the metaverse.

Upon the sands of this virtual frontier, we must not forget that the metaverse is more than a hive of bits and bandwidth. It crystallises our collective dreams, echoes our unspoken fears, and reflects the range of our ambitions and failings. It stands as a citadel where the ever-evolving quest for progress should never stray from the compass of ethical pursuit. The cross-pollination of best practices and the solidarity of international collaboration are not simply tactics; they are imperatives engraved with the moral codes of stewardship, guiding us to preserve the unblemished spirit of the metaverse.

Conclusion

The clarion call of the metaverse invites us to venture into its boundless expanse, to savour its gifts of connection and innovation. Yet, on this odyssey through the pixelated constellations, we harness vigilance as our star chart, mindful of the mirage of morality that can obfuscate and lead astray. In our collective pursuit to curtail financial crime, we deploy our most formidable resource—our unity—conjuring a bastion for human ingenuity and integrity. In this, we ensure that the metaverse remains a beacon of awe, safeguarded against the shadows of transgression, and celebrated as a testament to our shared aspiration to venture beyond the realm of the possible, into the extraordinary.

References

- https://www.wolftheiss.com/insights/financial-crime-in-the-metaverse-is-real/

- https://gnet-research.org/2023/08/16/meta-terror-the-threats-and-challenges-of-the-metaverse/

- https://shuftipro.com/blog/the-rising-concern-of-financial-crimes-in-the-metaverse-aml-screening-as-a-solution/

Introduction

In the rapidly evolving landscape of cyber threats, a novel menace has surfaced: the concept of Digital Arrest. Impostors impersonating law enforcement officers deceive victims into believing that their bank account, SIM card, Aadhaar card, or bank card has been used unlawfully, and then coerce them into paying money. Digital Arrest involves the virtual restraint of individuals. These restraints can range from restricted access to accounts and digital platforms, to measures that prevent further digital activity, to being confined to or monitored through a video call. In the era of digitisation, where technology is growing at an exponential pace, wrongdoers are exploiting various existing loopholes, which has given rise to this sinister trend known as “digital arrest fraud”. In this scam, the fraudster, impersonating law enforcement officials, manipulates victims and traps them in a web of deception involving threats of imminent digital restraint and coerced financial transactions.

Recognizing the Danger of Digital Arrest

A recent case involving an interactive voice response (IVR) call that targeted a victim sheds light on the complexities of the "digital arrest" cybercrime. The scammers, who were pretending to be law enforcement officers, notified the victim that a SIM card in her name had apparently been used in a criminal incident in Mumbai. The call then moved to a video conversation with a purported FBI agent, who falsely accused her of being involved in money laundering. The victim was drawn into a web of deception because she now believed she was involved in a criminal case, underscoring the psychological manipulation the scammers were using.

Recent incidents of digital arrest fraud

- Recently, a complaint was registered at the Noida Cyber Crime Police Station by a 50-year-old victim who was defrauded of over Rs 11 lakh and subjected to "digital arrest". Using the identities of an IPS officer in the CBI and the founder of a grounded airline, the attackers, masquerading as law enforcement officers, falsely accused the victim of being involved in a fake money-laundering case. She was told that another SIM card in her name had been used for fraudulent activities in Mumbai. According to the complaint, the victim's call was transferred to a person who identified himself as a Mumbai Police officer, who conducted the initial interrogation over the call and then on a Skype video call, where she stayed from 9:30 AM to around 7 in the evening. The woman ended up transferring around ₹11.11 lakh. The scammers then ended contact with her, after which she realised she had been scammed.

- Another recent case of digital arrest fraud came from Faridabad, where a 23-year-old woman got a call from a fraudster posing as a Lucknow customs officer. The caller said that a package being shipped to Cambodia contained cards and passports associated with the victim's Aadhaar number. The victim was led to believe that she was part of illegal activity, which included human trafficking. Under the guise of police officials, the fraudsters made up allegations before extorting money from the victim. After that, she was told by a man acting as a CBI official that she needed to pay five per cent of the total, which was Rs 15 lakh. She said the cybercriminals instructed her not to log off Skype. In the meantime, she ended up transferring Rs 2.5 lakh to a bank account shared by the cybercriminals.

Measures to protect oneself from digital arrest

Maintaining a practical and observant approach towards cybersecurity is the key to lowering the risk of being targeted and experiencing digital arrest. The following are some best practices:

- Cyber Hygiene: This includes regularly updating passwords and software and enabling two-factor authentication to reduce the chances of unauthorized access.

- Phishing Attempts: These can be evaded by refraining from clicking on dubious links or downloading attachments from unknown sources and also authenticating the legitimacy of emails and messages before sharing any personal information.

- Secured devices: Devices can be secured by installing reputable antivirus and anti-malware solutions and keeping operating systems and applications up to date with the latest security patches.

- Virtual Private Networks (VPNs): VPNs can be employed to encrypt internet connections, thus enhancing privacy and security. However, one must be cautious of free VPN services and opt only for trustworthy providers.

- Monitor online services: A regular review of online accounts for any unauthorized or unlawful activities and setting up alerts for any changes to account settings or login attempts may help in the early detection of cybercrime and coping with it.

- Secure communication channels: Secure communication techniques such as encryption can be used to protect sensitive information. Passwords and other sensitive information must be shared cautiously, especially in public forums.

- Awareness: The increasing prevalence of the cybercrime known as "digital arrest" underscores the need for preventive measures and increased public awareness. Educational initiatives that draw attention to prevalent cyber threats, especially those that involve law enforcement impersonation, can enable people to identify and fend off scams of this kind. Collaboration between law enforcement agencies and telecommunication companies can effectively limit the access points used by fraudsters by identifying and blocking suspicious calls.

Conclusion

The rise of Digital Arrest presents a noteworthy and novel threat to cybersecurity by taking advantage of people's weaknesses through deceitful impersonation and coercive measures. The case in Noida is a prime example of the boldness and skill of cybercriminals who use fear and false information to trick victims into thinking they are in danger of suffering harsh legal repercussions, and then extract large amounts of money from them. In order to combat this increasing cybercrime, people need to take a proactive and watchful stance when it comes to cybersecurity. Cyber hygiene techniques, such as two-factor authentication and frequent password changes, are essential for lowering the possibility of unauthorized access. Important precautions include being aware of phishing attempts, protecting devices with reliable antivirus software, and using Virtual Private Networks (VPNs) to increase privacy. Cybercriminals and fraudsters often use fear as a powerful tool to manipulate people and exploit their vulnerabilities for illicit gains. To protect themselves against the sneaky threat of Digital Arrest, netizens must navigate the constantly changing cyber threat landscape with collective knowledge, informed practices, and strong cybersecurity measures.

References:

- https://www.business-standard.com/india-news/new-cyber-crime-trend-unravelled-in-up-woman-held-under-digital-arrest-123120200485_1.html

- https://www.businessinsider.in/india/news/noida-woman-scammed-11-lakh-in-digital-arrest-scam-everything-you-need-to-know/articleshow/105727970.cms

- https://m.timesofindia.com/life-style/parenting/moments/23-year-old-faridabad-girl-on-digital-arrest-for-17-days-how-to-protect-your-children-from-cyber-crime/photostory/105442556.cms