#FactCheck: Fake Claim on Delhi Authority Culling Dogs After Supreme Court Stray Dog Ban Directive 11 Aug 2025

Executive Summary:

A viral claim alleges that following the Supreme Court of India’s August 11, 2025 order on relocating stray dogs, authorities in Delhi NCR have begun mass culling. However, verification reveals the claim to be false and misleading. A reverse image search of the viral video traced it to older posts from outside India, probably linked to Haiti or Vietnam, as indicated by the use of Haitian Creole and Vietnamese language respectively. While the exact location cannot be independently verified, it is confirmed that the video is not from Delhi NCR and has no connection to the Supreme Court’s directive. Therefore, the claim lacks authenticity and is misleading

Claim:

There have been several claims circulating after the Supreme Court of India on 11th August 2025 ordered the relocation of stray dogs to shelters. The primary claim suggests that authorities, following the order, have begun mass killing or culling of stray dogs, particularly in areas like Delhi and the National Capital Region. This narrative intensified after several videos purporting to show dead or mistreated dogs allegedly linked to the Supreme Court’s directive—began circulating online.

Fact Check:

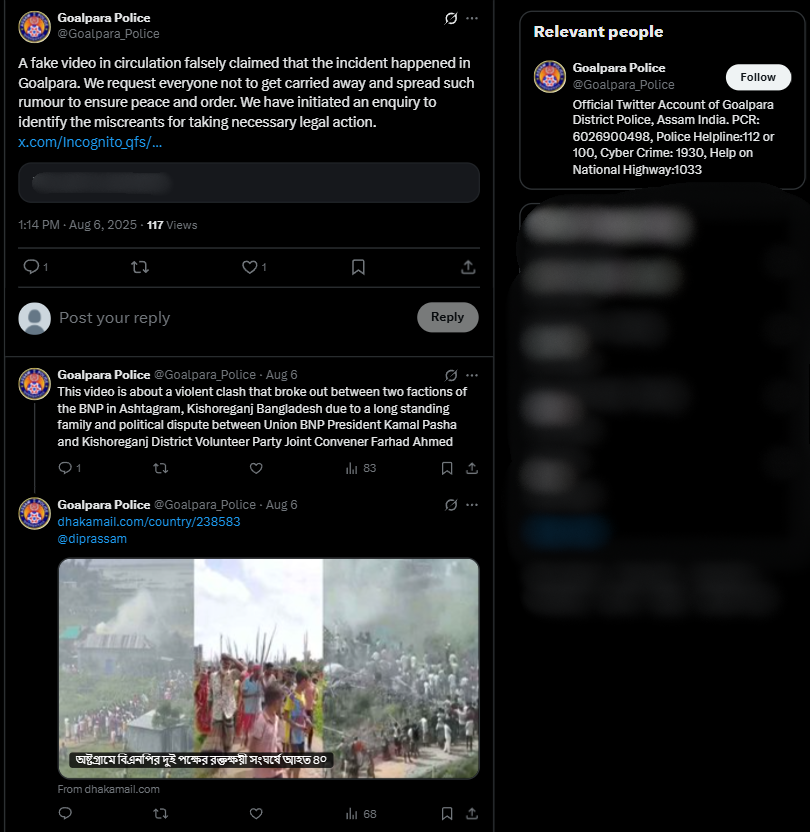

After conducting a reverse image search using a keyframe from the viral video, we found similar videos circulating on Facebook. Upon analyzing the language used in one of the posts, it appears to be Haitian Creole (Kreyòl Ayisyen), which is primarily spoken in Haiti. Another similar video was also found on Facebook, where the language used is Vietnamese, suggesting that the post associates the incident with Vietnam.

However, it is important to note that while these posts point towards different locations, the exact origin of the video cannot be independently verified. What can be established with certainty is that the video is not from Delhi NCR, India, as is being claimed. Therefore, the viral claim is misleading and lacks authenticity.

Conclusion:

The viral claim linking the Supreme Court’s August 11, 2025 order on stray dogs to mass culling in Delhi NCR is false and misleading. Reverse image search confirms the video originated outside India, with evidence of Haitian Creole and Vietnamese captions. While the exact source remains unverified, it is clear the video is not from Delhi NCR and has no relation to the Court’s directive. Hence, the claim lacks credibility and authenticity.

Claim: Viral fake claim of Delhi Authority culling dogs after the Supreme Court directive on the ban of stray dogs as on 11th August 2025

Claimed On: Social Media

Fact Check: False and Misleading