#FactCheck - Viral Video Showing Man Frying Bhature on His Stomach Is AI-Generated

A video circulating on social media shows a man allegedly rolling out bhature on his stomach and then frying them in a pan. The clip is being shared with a communal narrative, with users making derogatory remarks while falsely linking the act to a particular community.

CyberPeace Foundation’s research found the viral claim to be false. Our probe confirms that the video is not real but has been created using artificial intelligence (AI) tools and is being shared online with a misleading and communal angle.

Claim

On January 5, 2025, several users shared the viral video on social media platform X (formerly Twitter). One such post carried a communal caption suggesting that the person shown in the video does not belong to a particular community and making offensive remarks about hygiene and food practices.

- The post link and archived version can be viewed here: https://x.com/RightsForMuslim/status/2008035811804291381

- Archive Link: https://archive.ph/lKnX5

Fact Check:

A close examination of the viral video revealed several visual inconsistencies and unnatural movements, raising suspicion about its authenticity. These anomalies are commonly associated with AI-generated or digitally manipulated content.

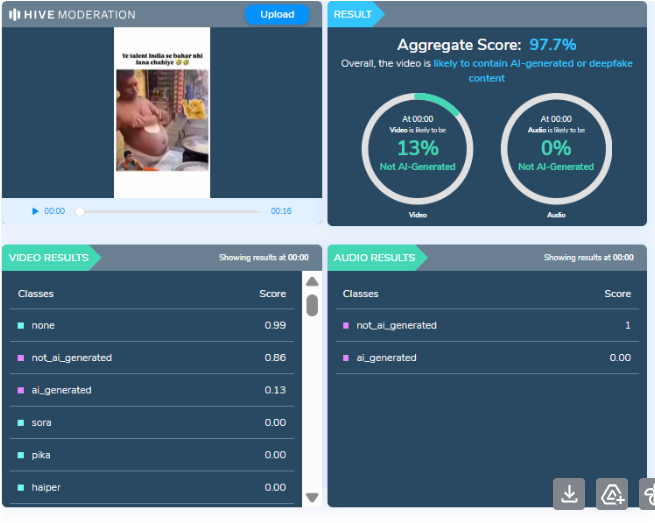

To verify this, the video was analysed using the AI detection tool HIVE Moderation. According to the tool’s results, the video was found to be 97 percent AI-generated, strongly indicating that it was not recorded in real life but synthetically created.

Conclusion

CyberPeace Foundation’s research clearly establishes that the viral video is AI-generated and does not depict a real incident. The clip is being deliberately shared with a false and communal narrative to mislead users and spread misinformation on social media. Users are advised to exercise caution and verify content before sharing such sensational and divisive material online.

Related Blogs

Executive Summary

Following the reported box office success of ‘Dhurandhar 2: The Revenge’, released on March 19, 2026, a video of Ranveer Singh visiting a temple is being widely shared on social media. Users claim that the actor visited the Kashi Vishwanath Temple to offer prayers after the film’s success. Research by CyberPeace found that the viral claim is misleading. The video of Ranveer Singh visiting the Kashi Vishwanath Temple is not recent. It dates back to 2024, when he visited the temple with Kriti Sanon, and is unrelated to the release or success of ‘Dhurandhar 2: The Revenge’.

Claim

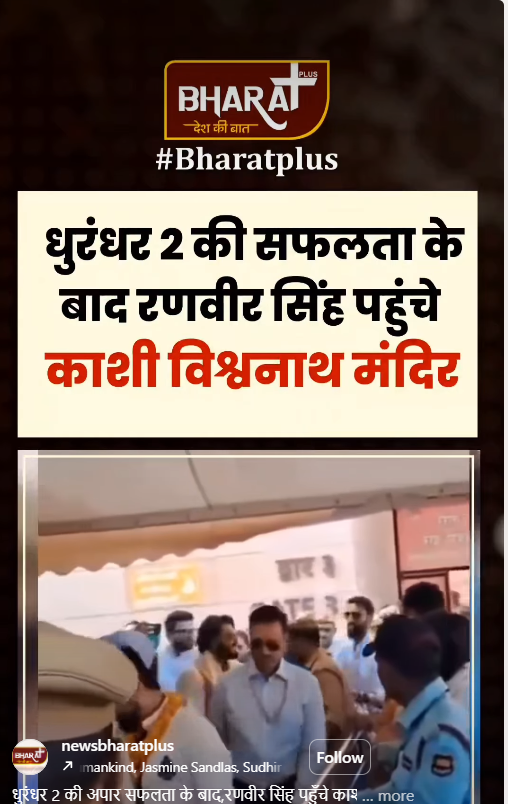

An Instagram user “newsbharatplus” shared the video on March 26, 2026, with a caption stating that after the massive success of Dhurandhar 2, Ranveer Singh visited the temple and performed rituals.

Fact Check

To verify the claim, we extracted keyframes from the viral video and conducted a reverse image search. This led us to a report published by Dainik Jagran on April 14, 2024. According to the report, Ranveer Singh had visited the Kashi Vishwanath Temple along with Kriti Sanon and noted fashion designer Manish Malhotra. During the visit, the trio was seen offering prayers, wearing traditional attire, and applying sandalwood tilak.

https://www.jagran.com/entertainment/bollywood-ranveer-singh-and-kriti-sanon-visits-kashi-vishwanath-temple-with-manish-malhotra-see-photos-here-23696781.html

We also found a video report on the official YouTube channel of Times Now Navbharat, uploaded on April 15, 2024, showing Ranveer Singh and Kriti Sanon at the temple. The report also featured visuals from a fashion event held in Varanasi.

- https://www.youtube.com/watch?v=OMuW_SVbfb4

Conclusion

The viral claim is misleading. The video of Ranveer Singh visiting the Kashi Vishwanath Temple is not recent. It dates back to 2024, when he visited the temple with Kriti Sanon, and is unrelated to the release or success of ‘Dhurandhar 2: The Revenge’.

Executive Summary

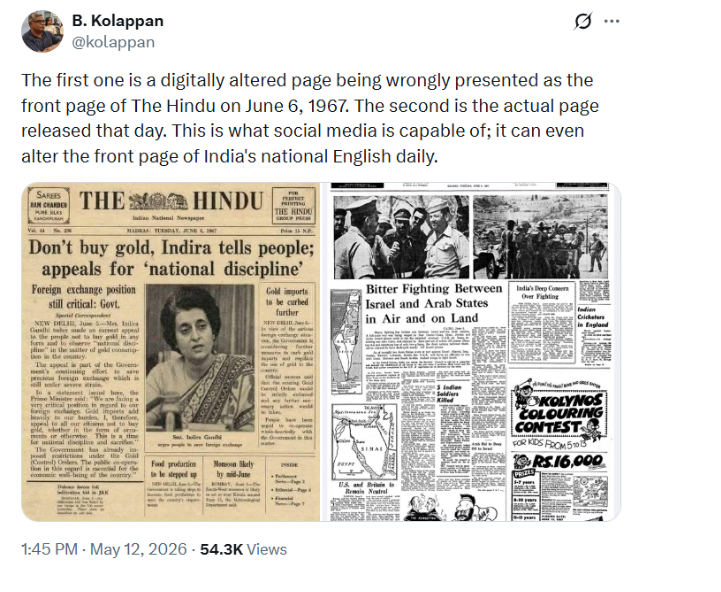

A purported front page of The Hindu dated June 6, 1967, is being widely circulated on social media with the claim that then Prime Minister Indira Gandhi had urged Indians not to buy gold in any form and to follow “national discipline” by restricting gold consumption. The viral claim suggests that the appeal was part of the government’s efforts to conserve foreign exchange reserves, which were allegedly under severe pressure at the time. However, research conducted by CyberPeace Research Wing found the claim to be false. Our research revealed that the front page being circulated online is not authentic and has been digitally edited.

Claim

An X (formerly Twitter) user shared the viral newspaper clipping on May 12, 2026, and wrote: “In 1967, during a severe foreign exchange crisis, Indira Gandhi appealed to Indians not to buy gold. From ‘skip one meal’ to ‘don’t buy gold,’ Congress-era governance normalized shortages, restrictions, and sacrifice, while ordinary citizens paid the price for failed economic policies.”

Research

To verify the claim, we examined the official social media accounts of The Hindu. During the research, we found a post published on the publication’s official X account on May 12, 2026, clarifying that the viral image claiming to be the June 6, 1967 front page of The Hindu was digitally altered and not part of its official archives. The newspaper also urged readers to verify information carefully before sharing it online.

We also found an X post by B Kolappan, a journalist with The Hindu, who shared the original front page of the newspaper dated June 6, 1967, further exposing the viral image as fake.

For context, Prime Minister Narendra Modi, while addressing a public gathering on May 10, 2026, spoke about the possible economic impact of the ongoing conflict in the Middle East and advised people to avoid buying gold for a year, even during weddings or family functions. The viral claim appears to have surfaced against this backdrop.

Conclusion

Our research found that the alleged 1967 front page of The Hindu circulating on social media is digitally edited and fake. There is no evidence that the viral newspaper page is authentic or part of The Hindu’s archival records.

Executive Summary:

Threat actors are weaponizing proof-of-concept (PoC) exploits faster than ever: where they once took an average of one hour and seven minutes to leverage a PoC after it went public, the time has now fallen to a record low of 22 minutes. Such rapid exploitation leaves organizations’ IT departments very little time to address these issues and close the gaps before they are exploited. Cloudflare’s Application Security report shows that attacks often arrive faster than people can create and deploy security countermeasures such as WAF rules and software patches. In one case, Cloudflare observed an attacker using a PoC-based exploit a mere 22 minutes after it was released, leaving almost no remediation window.

Despite the constant growth in the number of vulnerabilities across applications and systems, the share of exploited vulnerabilities that are accompanied by some form of public exploit or PoC code has remained relatively stable over the past several years, fluctuating around 50%. Of the vulnerabilities with publicly known exploit code, 41% were first attacked as zero-days, while of those with no known exploit code, 84% were first attacked in the same mode.
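The shrinking remediation window described above can be made concrete with a small calculation. The sketch below is illustrative only: the function name `exposure_window` and all timestamps are hypothetical, chosen so that the first attack lands 22 minutes after PoC publication, and the four-hour mitigation time is an assumption rather than a figure from the Cloudflare report.

```python
from datetime import datetime, timedelta


def exposure_window(poc_published: datetime,
                    first_attack: datetime,
                    mitigated: datetime) -> dict:
    """Summarize how long a system stayed exposed after a PoC release."""
    # Time attackers needed to start using the PoC after it went public.
    time_to_exploit = first_attack - poc_published
    # Time during which attacks could land before a WAF rule or patch
    # was in place; zero if the mitigation arrived before the first attack.
    unprotected = max(mitigated - first_attack, timedelta(0))
    return {"time_to_exploit": time_to_exploit, "unprotected": unprotected}


# Hypothetical timeline: PoC published at noon, first attack 22 minutes
# later, mitigation deployed four hours after publication (assumed).
poc = datetime(2024, 1, 1, 12, 0)
attack = poc + timedelta(minutes=22)
patched = poc + timedelta(hours=4)

window = exposure_window(poc, attack, patched)
print(window["time_to_exploit"], window["unprotected"])
```

Under these assumed timestamps the system remains unprotected for well over three hours, which is why a response process measured in hours cannot keep pace with exploitation measured in minutes.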

Modus Operandi:

The modus operandi of the attack involving the rapid weaponization of proof-of-concept (PoC) exploits is characterized by the following steps:

- Vulnerability Identification: Threat actors identify a vulnerability in the software or hardware of a system; this may be a code error, a design flaw, or a misconfiguration. This is normally done using vulnerability scanners and manual testing.

- Vulnerability Analysis: After the vulnerability is identified, the attackers study how it works to determine when and how it can be triggered and what the consequences will be. This involves analyzing the details of the PoC code or the system to find the sequence of steps that leads to exploitation.

- Exploit Code Development: Knowing the weakness, the attackers develop a small program or script, the PoC, that targets only the identified vulnerability and exploits it in a controlled manner. This code is meant to demonstrate a specific impact, such as unauthorized access or alteration of data.

- Public Disclosure and Weaponization: The PoC exploit is released, frequently shortly after the vulnerability has been announced to the public. This makes it easier for attackers to exploit the flaw while users are still waiting for the software developer to release a patch. To illustrate, Cloudflare spotted an attacker using a PoC-based exploit only 22 minutes after its publication.

- Attack Execution: The attackers then use the weaponized PoC exploit against systems known to be vulnerable to it. Typical actions include attempts at remote code execution and unauthorized access. This often happens much faster than people can put proper defenses in place, such as WAF rules or software fixes.

- Targeted Operations: Sometimes the exploitation is part of a planned operation in which the attackers are selective about the system or organization they target. For example, CVE-2022-47966 in ManageEngine software was exploited in an espionage operation, in which the attackers used the vulnerability to install espionage-related tools and malware.

Precautions: Mitigation

The following measures help mitigate the risk from PoC exploits:

1. Fast Patching and New Vulnerability Handling

- Introduce proper patching procedures so that released security updates and disclosed vulnerabilities are addressed quickly.

- Prioritize patching vulnerabilities that have publicly available PoC exploits, since these risk being exploited almost immediately.

- Check frequently for new vulnerability disclosures and PoC releases, and keep an incident response plan ready for them.

2. Leverage AI-Powered Security Tools

- Employ intelligent security applications that can quickly generate protection rules and signatures as attackers ramp up the weaponization of PoC exploits.

- Increase the use of AI-powered endpoint detection and response (EDR) tools to quickly detect and mitigate exploitation attempts.

- Integrate AI-based SIEM tools to detect and analyze indicators of compromise for a faster response.

3. Network Segmentation and Hardening

- Use strong network segmentation to prevent attackers from moving across the network and to limit the effects of successful attacks.

- Secure any systems that are accessible from the internet, along with services and protocols such as RDP, CIFS, and Active Directory.

- Limit the use of native scripting applications as much as possible, because attackers may exploit them.

4. Vulnerability Disclosure and PoC Management

- Inform vendors of bugs and PoC exploits, and agree on how and when they are reported, to ensure fast response and mitigation.

- Use mechanisms such as digital signing and encryption when managing and distributing PoC exploits, to prevent access by unauthorized persons.

- PoC exploits should be simple and self-contained, with clear and meaningful variable and function names, to reduce the time spent on triage and remediation.

5. Risk Assessment and Response to Incidents

- Continuously monitor the environment to identify signs of compromise and exploitation attempts.

- Support frequent detection, analysis, and countering of threats that use PoC exploits against the system and its components.

- Regularly communicate with security researchers and vendors to understand the existing threats and how to prevent them.
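The advice above to patch vulnerabilities with available PoC exploits first can be sketched as a simple sort over a patch backlog. This is a minimal illustration, not a recommended scoring model: the `Vulnerability` fields, the ordering rule (public PoC first, then internet exposure, then severity), and the placeholder CVE identifiers are all assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass
class Vulnerability:
    cve_id: str            # placeholder identifiers below, not real CVEs
    severity: float        # assumed CVSS-style base score, 0.0 to 10.0
    public_poc: bool       # True if exploit or PoC code is publicly available
    internet_facing: bool  # True if the affected system is reachable from the internet


def patch_priority(v: Vulnerability) -> tuple:
    # Public PoC outranks everything else, then exposure, then severity.
    return (v.public_poc, v.internet_facing, v.severity)


def prioritize(vulns: list) -> list:
    """Return the backlog ordered from most to least urgent."""
    return sorted(vulns, key=patch_priority, reverse=True)


backlog = [
    Vulnerability("CVE-0000-0001", 9.8, public_poc=False, internet_facing=False),
    Vulnerability("CVE-0000-0002", 7.5, public_poc=True, internet_facing=True),
    Vulnerability("CVE-0000-0003", 8.1, public_poc=True, internet_facing=False),
]

ordered = [v.cve_id for v in prioritize(backlog)]
print(ordered)
```

With this rule, a lower-severity flaw that has a public PoC and sits on an internet-facing system is patched before a higher-severity flaw with no known exploit code. Real programs would likely weigh more signals, so the ordering here should be read as one possible policy.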

Conclusion:

The rapid weaponization of proof-of-concept (PoC) exploits is one of the fastest-growing global threats to cybersecurity today. Cybersecurity teams must patch quickly, incorporate AI into their security tools, segment their networks effectively, and keep track of vulnerability announcements. A stronger incident response plan also helps in handling these threats. By applying the measures above, organizations can counter the accelerating weaponization of PoC exploits and reduce the likelihood of damaging cyberattacks.

Reference:

https://www.mayrhofer.eu.org/post/vulnerability-disclosure-is-positive/

https://www.uptycs.com/blog/new-poc-exploit-backdoor-malware

https://www.balbix.com/insights/attack-vectors-and-breach-methods/

https://blog.cloudflare.com/application-security-report-2024-update