#FactCheck - “Deepfake Falsely Claimed as a Photo of Arvind Kejriwal Welcoming Elon Musk When He Visited India to Discuss Delhi’s Administrative Policies”

Executive Summary:

A viral image circulating online claims to show Arvind Kejriwal, Chief Minister of Delhi, welcoming Elon Musk during Musk’s visit to India to discuss Delhi’s administrative policies. However, the CyberPeace Research Team has confirmed that the image is a deepfake created using AI technology. The assertion that Elon Musk visited India to discuss Delhi’s administrative policies is false and misleading.

Claim:

A viral image claims that Arvind Kejriwal welcomed Elon Musk during his visit to India to discuss Delhi’s administrative policies.

Fact Check:

Upon receiving the viral posts, we conducted a reverse image search using the InVID reverse image search tool. The search traced the image back to different, unrelated sources featuring either Arvind Kejriwal or Elon Musk, but none of the sources depicted them together or involved any such event. The viral image displayed visible inconsistencies, such as lighting disparities and unnatural blending, which prompted further investigation.

We then analyzed the image using AI detection tools, namely TrueMedia.org and the Hive AI Detection tool. The analysis indicated with 97.5% confidence that the image was a deepfake. The tools identified “substantial evidence of manipulation,” particularly in the merging of facial features and in the alignment of clothes and background, which were artificially generated.

Moreover, a review of official statements and credible reports revealed no record of Elon Musk visiting India to discuss Delhi’s administrative policies. Arvind Kejriwal’s office, Tesla, and SpaceX made no announcement regarding such an event, further debunking the viral claim.

Conclusion:

The viral image claiming that Arvind Kejriwal welcomed Elon Musk during his visit to India to discuss Delhi’s administrative policies is a deepfake. Reverse image search and AI detection tools confirm that the image was manipulated using AI technology. Additionally, no credible source supports the claim. The CyberPeace Research Team confirms the claim is false and misleading.

- Claim: Arvind Kejriwal welcomed Elon Musk to India to discuss Delhi’s administrative policies, viral on social media.

- Claimed on: Facebook and X (formerly Twitter)

- Fact Check: False & Misleading

Related Blogs


Introduction

Social media platforms have begun to shape the public understanding of history in today’s digital landscape. You may have encountered videos, images, and posts that claim to reveal an untold story about our past. For example, you might have seen a post on your feed with a painted or black-and-white image of a princess, labelled “the most beautiful princess of Rajasthan who fought countless wars but has been erased from history.” Such emotionally charged narratives spread quickly, without any academic scrutiny or citation. Unfortunately, the originator believes it to be true.

Such unverified content may look harmless, but it contributes profoundly to the systematic distortion of historical information. Such misinformation recurs on feeds and becomes embedded in popular memory. It misguides public discourse and undermines scholarly research on the relevant topic. Sometimes, it also contributes to communal outrage and social tensions. It is time to recognise that protecting the integrity of our cultural and historical narratives is not only an academic concern but a legal and institutional responsibility. This is where the role of the Ministry of Culture becomes critical.

Pseudo-Historical Misinformation in India

Fake news and misinformation are frequently disseminated as images and videos on messaging applications, a phenomenon derisively referred to as “WhatsApp University”. WhatsApp has become India’s favourite method of communication, so users must stay alert to what they consume from forwarded messages. Academic historians strive to understand the past in its own context and to differentiate it from the present, whereas pseudo-historians manipulate history to serve their political agendas. Unfortunately, this wave of pseudo-history is expanding rapidly, with “WhatsApp University” playing a significant role in amplifying its spread. This has led to an increase in fake historical news and paid journalism. Unlike pseudo-history, academic history is produced by professional historians in academic contexts, adhering to strict disciplinary guidelines, including peer review and expert examination of justifications, assertions, and publications.

How to Identify Pseudo-Historic Misinformation

1. Lack of Credible Sources: There is a lack of reliable primary and secondary sources. Instead, pseudohistorical works depend on hearsay and unreliable eyewitness accounts.

2. Selective Use of Evidence: Misinformative posts present only the facts that support their argument and downplay the facts that contradict their assertions.

3. Incorporation of Conspiracy Theories: They often include conspiracy theories that postulate secret groups or suppressed knowledge, and may claim that evil powers influenced historical events. Such hypotheses frequently lack any supporting data.

4. Extravagant Claims: Pseudo-historic tales sometimes present unbelievable assertions about historic persons or events.

5. Lack of Peer Review: Such work is rarely published on authentic academic platforms. It tends to appear on platforms like Instagram and Facebook rather than LinkedIn, since its authors do not submit to academic publications. Authentic historical research, by contrast, is examined by subject-matter authorities.

6. Neglect of Established Historiographical Methods: Such posts show no grounding in recognised methodology and procedures, such as the critical study of sources.

7. Ideologically Driven Narratives: Political, communal, ideological, and personal opinions are prioritised in such posts. The author starts from a predetermined goal rather than a search for the truth.

8. Exploitation of Gaps in the Historical Record: Pseudo-historians often use missing or unclear parts of history to suggest that regular historians are hiding important secrets. They make the story sound more mysterious than it is.

9. Rejection of Scholarly Consensus: Pseudo-historians often reject the views of experts and historians, choosing instead to believe and promote their own fringe ideas.

10. Emphasis on Sensationalism: Pseudo-historical works may put more emphasis on sensationalism than academic rigour to pique public interest rather than offer a fair and thorough account of the history.

Legal and Institutional Responsibility

Public opinion is the heart of democracy. It should not be distorted by misinformation or disinformation, and vested interests cannot be allowed to sabotage it. Historical claims in particular should not be shared without verification or fact-checking. Unverified claims can be called out, and action taken, only if the authorities take charge. In India, the Indian Council of Historical Research (ICHR) regulates academic history. As per its official website, its stated aim is to “take all such measures as may be found necessary from time to time to promote historical research and its utilisation in the country”. However, it is now essential to modernise the functioning of the ICHR to meet the demands of the digital era. Concerned authorities can run campaigns and awareness programmes that question the validity and research behind such misinformative posts. Just as there are fact-checking mechanisms for news, there must also be an institutional push to fact-check and regulate historical content online. Authorities can take the following measures to counter such misinformation online:

- Launch a nationwide awareness campaign about historical misinformation.

- Work with scholars, historians, and digital platforms to promote verified content.

- Encourage social media platforms to introduce fact-check labels for historical posts.

- Consider legal frameworks that penalise the deliberate spread of false historical narratives.

History is part of our national heritage, and preserving its accuracy is a matter of public interest. Misinformation and pseudo-history are a combination that misleads the public and weakens the foundation of shared cultural identity. In this digital era, false narratives spread rapidly, and it is important to promote critical thinking, encourage responsible academic work, and ensure that the public has access to accurate and well-researched historical information. Protecting the integrity of history is not just the work of historians — it is a collective responsibility that serves the future of our democracy.

References:

- https://kuey.net/index.php/kuey/article/view/4091

- https://www.drishtiias.com/daily-news-editorials/social-media-and-the-menace-of-false-information

Executive Summary:

A video circulating on social media claims to show a live elephant falling from a moving truck due to improper transportation, followed by the animal quickly standing up and reacting on a public road. The content may raise concerns related to animal cruelty, public safety, and improper transport practices. A detailed examination using AI content detection tools and visual anomaly analysis indicates that the video is not authentic and is likely AI-generated or digitally manipulated.

Claim:

The viral video (archive link) shows a disturbing scene where a large elephant is allegedly being transported in an open blue truck with barriers for support. As the truck moves along the road, the elephant shifts its weight and the weak side barrier breaks. This causes the elephant to fall onto the road, where it lands heavily on its side. Shortly after, the animal is seen getting back on its feet and reacting in distress, facing the vehicle that is recording the incident. The footage may raise serious concerns about safety, as elephants are normally transported in reinforced containers, and such an incident on a public road could endanger both the animal and people nearby.

Fact Check:

After receiving the video, we closely examined the visuals and noticed some inconsistencies that raised doubts about its authenticity. In particular, the elephant is seen recovering and standing up unnaturally quickly after a severe fall, which does not align with realistic animal behavior or physical response to such impact.

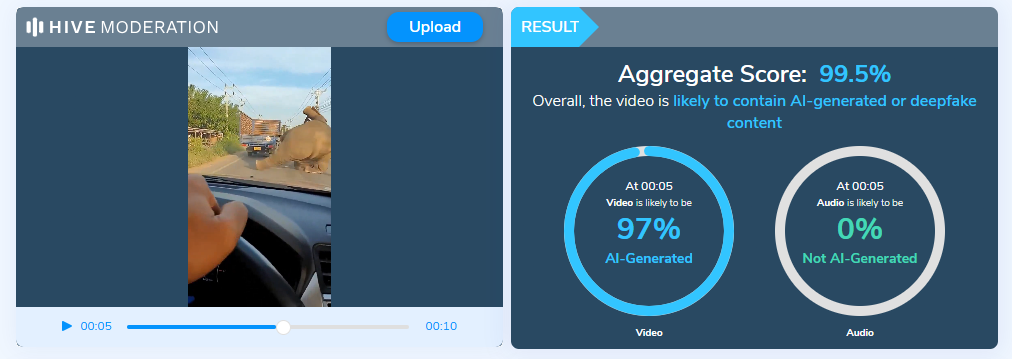

To further verify our observations, the video was analyzed using the Hive Moderation AI Detection tool, which indicated that the content is likely AI generated or digitally manipulated.

Additionally, no credible news reports or official sources were found to corroborate the incident, reinforcing the conclusion that the video is misleading.

Conclusion:

The claim that the video shows a real elephant transport accident is false and misleading. Based on AI detection results, observable visual anomalies, and the absence of credible reporting, the video is highly likely to be AI generated or digitally manipulated. Viewers are advised to exercise caution and verify such sensational content through trusted and authoritative sources before sharing.

- Claim: The viral video shows an elephant allegedly being transported, where a barrier breaks as it moves, causing the animal to fall onto the road before quickly getting back on its feet.

- Claimed On: X (formerly Twitter)

- Fact Check: False and Misleading

Introduction

In the digital realm of social media, Meta Platforms, the driving force behind Facebook and Instagram, faces intense scrutiny following The Wall Street Journal's investigative report. This exploration delves deeper into critical issues surrounding child safety on these widespread platforms, unravelling algorithmic intricacies, enforcement dilemmas, and the ethical maze surrounding monetisation features. Instances of "parent-managed minor accounts" leveraging Meta's subscription tools to monetise content featuring young individuals have raised eyebrows. While skirting the line of legality, this practice prompts concerns due to its potential appeal to adults and the associated inappropriate interactions. It's a nuanced issue demanding nuanced solutions.

Failed Algorithms

The very heartbeat of Meta's digital ecosystem, its algorithms, has come under intense scrutiny. These algorithms, designed to curate and deliver content, were found to be actively promoting accounts featuring explicit content to users with known pedophilic interests. The revelation sparks a crucial conversation about the ethical responsibilities tied to the algorithms shaping our digital experiences. Striking the right balance between personalised content delivery and safeguarding users is a delicate task.

While algorithms play a pivotal role in tailoring content to users' preferences, Meta needs to reevaluate the algorithms to ensure they don't inadvertently promote inappropriate content. Stricter checks and balances within the algorithmic framework can help prevent the inadvertent amplification of content that may exploit or endanger minors.

Major Enforcement Challenges

Meta's enforcement challenges have come to light as previously banned parent-run accounts resurface, gain official verification, and accumulate large followings. The struggle to remove associated backup profiles adds to concerns about the effectiveness of Meta's enforcement mechanisms. It underscores the need for a robust system capable of swift and thorough action against policy violators.

To enhance enforcement mechanisms, Meta should invest in advanced content detection tools and employ a dedicated team for consistent monitoring. This proactive approach can mitigate the risks associated with inappropriate content and reinforce a safer online environment for all users.

The financial dynamics of Meta's ecosystem expose concerns about the exploitation of videos that are eligible for cash gifts from followers. The decision to expand the subscription feature before implementing adequate safety measures poses ethical questions. Prioritising financial gains over user safety risks tarnishing the platform's reputation and trustworthiness. A re-evaluation of this strategy is crucial for maintaining a healthy and secure online environment.

To address safety concerns tied to monetisation features, Meta should consider implementing stricter eligibility criteria for content creators. Verifying the legitimacy and appropriateness of content before allowing it to be monetised can act as a preventive measure against the exploitation of the system.

Meta's Response

In the aftermath of the revelations, Meta's spokesperson, Andy Stone, took centre stage to defend the company's actions. Stone emphasised ongoing efforts to enhance safety measures, asserting Meta's commitment to rectifying the situation. However, critics argue that Meta's response lacks the decisive actions required to align with industry standards observed on other platforms. The debate continues over the delicate balance between user safety and the pursuit of financial gain. A more transparent and accountable approach to addressing these concerns is imperative.

To rebuild trust and credibility, Meta needs to implement concrete and visible changes. This includes transparent communication about the steps taken to address the identified issues, continuous updates on progress, and a commitment to a user-centric approach that prioritises safety over financial interests.

The formation of a task force in June 2023 was a commendable step to tackle child sexualisation on the platform. However, the effectiveness of these efforts remains limited. Persistent challenges in detecting and preventing potential child safety hazards underscore the need for continuous improvement. Legislative scrutiny adds an extra layer of pressure, emphasising the urgency for Meta to enhance its strategies for user protection.

To overcome ongoing challenges, Meta should collaborate with external child safety organisations, experts, and regulators. Open dialogues and partnerships can provide valuable insights and recommendations, fostering a collaborative approach to creating a safer online environment.

Drawing a parallel with competitors such as Patreon and OnlyFans reveals stark differences in child safety practices. While Meta grapples with its challenges, these platforms maintain stringent policies against certain content involving minors. This comparison underscores the need for universal industry standards to safeguard minors effectively. Collaborative efforts within the industry to establish and adhere to such standards can contribute to a safer digital environment for all.

To align with industry standards, Meta should actively participate in cross-industry collaborations and adopt best practices from platforms with successful child safety measures. This collaborative approach ensures a unified effort to protect users across various digital platforms.

Conclusion

Navigating the intricate landscape of child safety concerns on Meta Platforms demands a nuanced and comprehensive approach. The identified algorithmic failures, enforcement challenges, and controversies surrounding monetisation features underscore the urgency for Meta to reassess and fortify its commitment to being a responsible digital space. As the platform faces this critical examination, it has an opportunity to not only rectify the existing issues but to set a precedent for ethical and secure social media engagement.

This comprehensive exploration aims not only to shed light on the existing issues but also to provide a roadmap for Meta Platforms to evolve into a safer and more responsible digital space. The responsibility lies not just in acknowledging shortcomings but in actively working towards solutions that prioritise the well-being of its users.

References

- https://timesofindia.indiatimes.com/gadgets-news/instagram-facebook-prioritised-money-over-child-safety-claims-report/articleshow/107952778.cms

- https://www.adweek.com/blognetwork/meta-staff-found-instagram-tool-enabled-child-exploitation-the-company-pressed-ahead-anyway/107604/

- https://www.tbsnews.net/tech/meta-staff-found-instagram-subscription-tool-facilitated-child-exploitation-yet-company