Encrypted & Closed-Network Spread: The Hidden Pathways of Misinformation

Introduction

In today’s hyper-connected world, information spreads faster than ever before. While much attention is focused on public platforms like Facebook and Twitter, a different challenge lurks in the shadows: misinformation circulating on encrypted and closed-network platforms such as WhatsApp and Telegram. Unlike open platforms, where harmful content can be flagged in public, private groups operate behind a digital curtain. Here, falsehoods often spread unchecked, gaining legitimacy because they are shared by trusted contacts. This makes encrypted platforms a double-edged sword: they are essential for privacy and free expression, yet uniquely vulnerable to misuse.

Prime Minister Narendra Modi has made the same point:

“Think 10 times before forwarding anything,” he urged, warning that even a “single fake news has the capability to snowball into a matter of national concern.”

The Moderation Challenge with End-to-End Encryption

Encrypted messaging platforms were built to protect personal communication. Yet, the same end-to-end encryption that shields users’ privacy also creates a blind spot for moderation. Authorities, researchers, and even the platforms themselves cannot view content circulating in private groups, making fact-checking nearly impossible.

Trust within closed groups makes the problem worse. When a message comes from family, friends, or community leaders, people tend to believe it without questioning and quickly pass it along. Features like large group chats, broadcast lists, and “forward to many” options further speed up its spread. Unlike open networks, there is no public scrutiny, no visible counter-narrative, and no opportunity for timely correction.

During the COVID-19 pandemic, false claims about vaccines spread widely through WhatsApp groups, undermining public health campaigns. Even more alarming, WhatsApp rumours in India about child kidnappers and cow meat triggered mob lynchings, leading to tragic loss of life.

Encrypted platforms, therefore, represent a unique challenge: they are designed to protect privacy, but, unintentionally, they also protect the spread of dangerous misinformation.

Approaches to Curbing Misinformation on End-to-End Platforms

- Regulatory Interventions: Governments worldwide are exploring ways to access encrypted data on messaging platforms, creating tension between users’ right to privacy and crime prevention. Options under consideration include traceability requirements for WhatsApp, data-sharing mandates for platforms in serious cases, and stronger obligations to act against harmful viral content.

- Technological Interventions: Platforms like WhatsApp have introduced features such as “forwarded many times” labels and limits on mass forwarding. These tools could be extended with AI-driven link-checking and warnings for suspicious content.

- Community-Based Interventions: Ultimately, no regulation or technology can succeed without public awareness. People need to be inoculated against misinformation through pre-bunking efforts and digital literacy campaigns, and they need to be taught how to use fact-checking websites and tools.
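The forwarding label and forwarding limit mentioned above can work without anyone reading the message text. Here is a minimal sketch of the idea, assuming a forward counter that travels with each message. The names `Message`, `label_for`, and `forward` and the threshold values are hypothetical, chosen for illustration, and do not describe any platform's actual implementation.

```python
from dataclasses import dataclass, replace

# Illustrative thresholds: assumptions for this sketch, not confirmed platform values.
MANY_TIMES_THRESHOLD = 5   # forward hops after which the stronger label is shown
NORMAL_FORWARD_LIMIT = 5   # chats per forward action for ordinary messages
VIRAL_FORWARD_LIMIT = 1    # chats per forward action for heavily forwarded messages


@dataclass(frozen=True)
class Message:
    text: str
    forward_count: int = 0  # metadata carried with the message; the text is never inspected


def label_for(msg: Message) -> str:
    """Return the label a client could display, based only on the counter."""
    if msg.forward_count >= MANY_TIMES_THRESHOLD:
        return "Forwarded many times"
    if msg.forward_count > 0:
        return "Forwarded"
    return ""


def forward(msg: Message, recipients: list[str]) -> dict[str, Message]:
    """Forward a message to several chats, enforcing the per-action limit."""
    is_viral = msg.forward_count >= MANY_TIMES_THRESHOLD
    limit = VIRAL_FORWARD_LIMIT if is_viral else NORMAL_FORWARD_LIMIT
    if len(recipients) > limit:
        raise ValueError(f"can forward to at most {limit} chat(s) at once")
    # Each hop increments the counter on the copy that is sent onward.
    copy = replace(msg, forward_count=msg.forward_count + 1)
    return {chat: copy for chat in recipients}
```

The design point is that both checks rely on a counter rather than on content, which is why this kind of friction is compatible with end-to-end encryption.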

Best Practices for Netizens

Experts recommend simple yet powerful habits that every user can adopt to protect themselves and others. By adopting these, ordinary users can become the first line of defence against misinformation in their own communities:

- Cross-Check Before Forwarding: Verify claims against trusted platforms and official sources.

- Beware of Sensational Content: Headlines that sound too shocking or dramatic usually need checking. Consult multiple sources for any piece of news. If only one platform or channel is carrying a sensational story, it is likely to be clickbait or outright false.

- Stick to Trusted News Sources: Verify news through national newspapers and expert commentary. Remember, not everything on the internet or television is true.

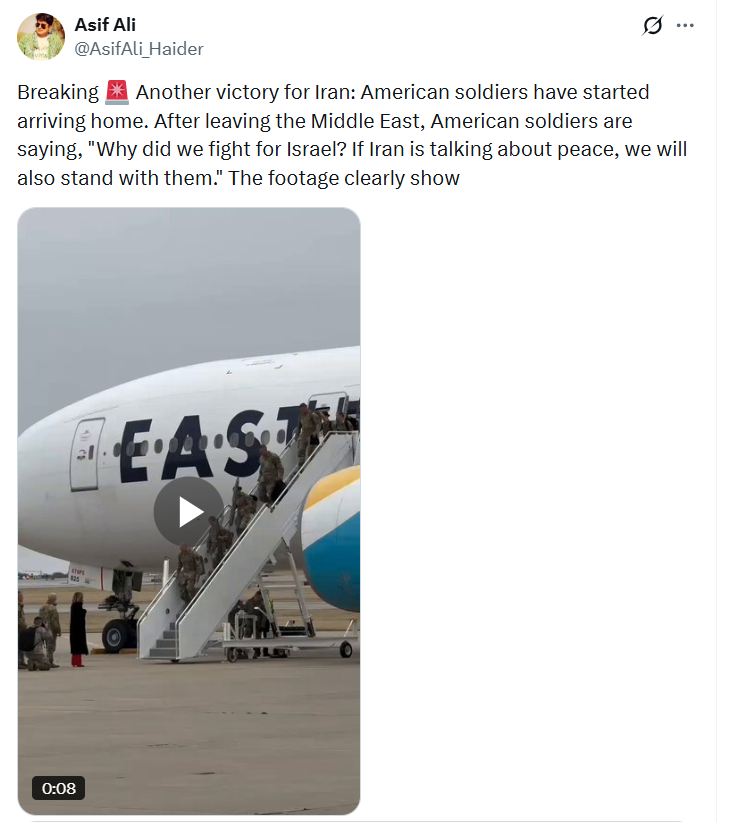

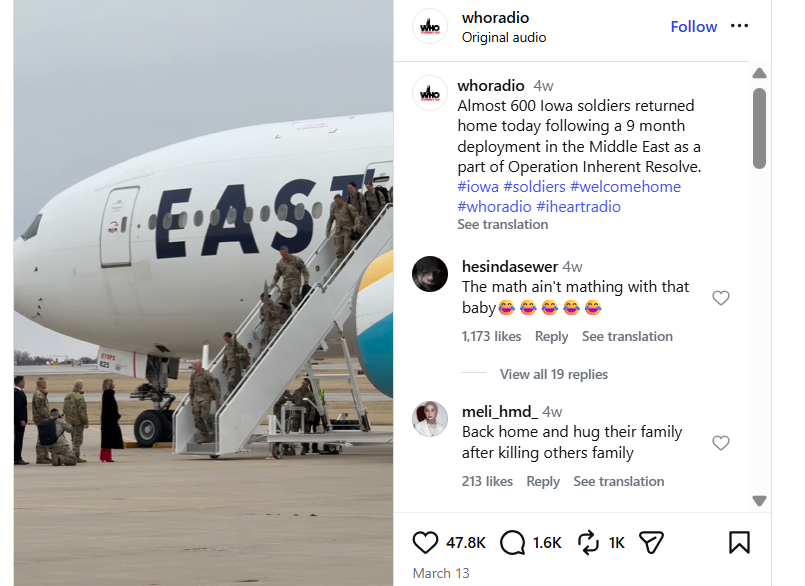

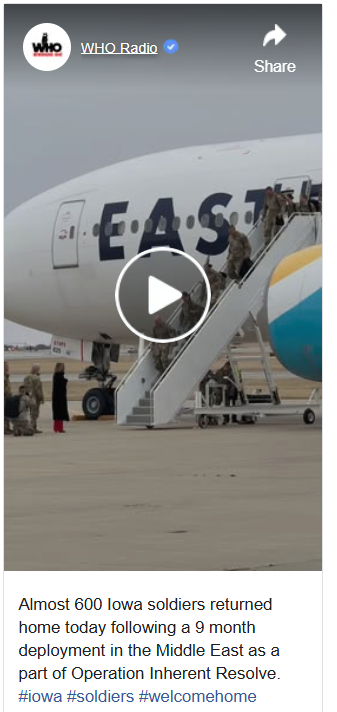

- Look Out for Manipulated Media: AI-generated deepfakes have made it harder to tell original media from manipulated media. Check for edited images, cropped videos, or voice messages without source information. Always cross-verify any media you receive.

- Report Harmful Content: Report misinformation to the platform on which it is circulating and to PIB’s Fact Check Unit.

Conclusion

Closed, unmonitored groups on platforms like WhatsApp and Telegram often become safe havens for misinformation, because people trust and forward messages from friends and family without question. Once misinformation takes root, it becomes extremely difficult to challenge or correct, and over time it can snowball into serious social, economic and national concerns.

Preventing this is a matter of shared responsibility. Governments can frame balanced regulations, but individuals must also take initiative: pause, think, and verify before sharing. Ultimately, the right to privacy must be upheld, but with reasonable safeguards to ensure it is not misused at the cost of societal trust and safety.

References

- BBC – India WhatsApp ‘child kidnap’ rumours claim two more victims

- BBC – The people trying to fight fake news in India

- Press Information Bureau – PIB Fact Check

- Brookings Institution – Encryption and Misinformation Report (2021)

- Curtis, T. L., Touzel, M. P., Garneau, W., Gruaz, M., Pinder, M., Wang, L. W., Krishna, S., Cohen, L., Godbout, J.-F., Rabbany, R., & Pelrine, K. (2024). Veracity: An Open-Source AI Fact-Checking System. arXiv.

- NDTV – PM Modi cautions against fake news (2022)

- Times of India – Govt may insist on WhatsApp traceability (2019)

- Medianama – Telegram refused to share ISIS channel data (2019)
