#FactCheck - An edited video of Bollywood actor Ranveer Singh criticizing the PM goes viral

Executive Summary:

A video circulating on the internet purports to show Ranveer Singh criticizing Prime Minister Narendra Modi and his government. A close examination, however, revealed that its audio has been tampered with. The original videos posted by different media outlets show Ranveer Singh praising Varanasi, professing his love for Lord Shiva, and acknowledging Modiji’s role in enhancing the city's cultural charm and infrastructure. The lip-synchronization mismatches, together with the absence of any criticism of PM Modi in the original video, indicate that the viral clip was manipulated to spread misinformation.

Claims:

A viral video shows Bollywood actor Ranveer Singh criticizing Prime Minister Narendra Modi.

Fact Check:

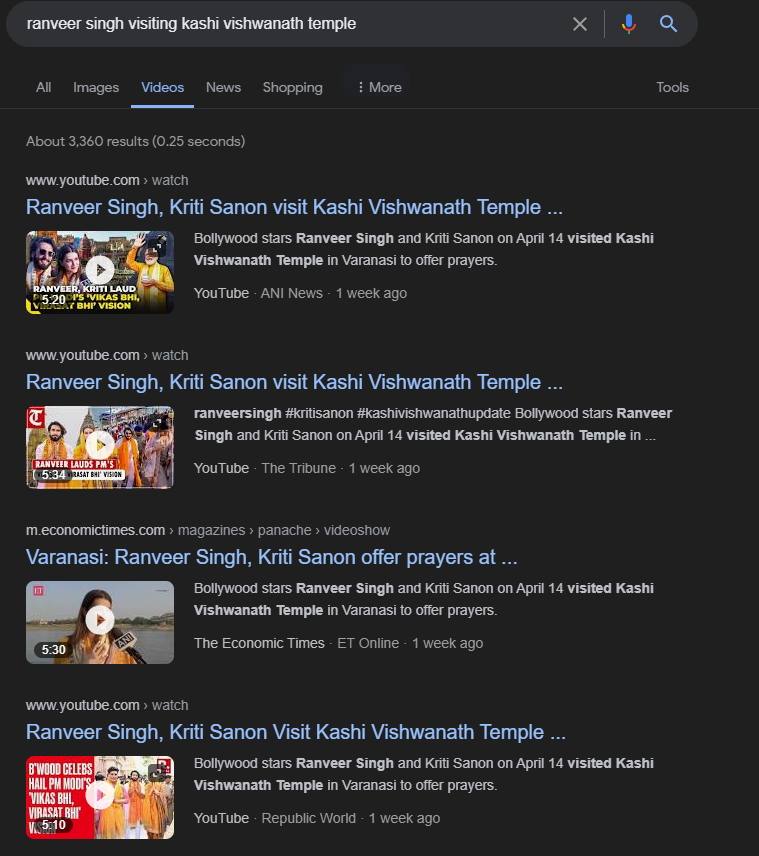

Upon receiving the video, we divided it into keyframes and reverse-searched one of the images. This led us to another video of Ranveer Singh, in which he has a similar appearance, posted by an Instagram account named “The Indian Opinion News”. In that video, Ranveer Singh talks about his experience of visiting the Kashi Vishwanath Temple with Bollywood actress Kriti Sanon. When we watched the full video, we found no indication of him criticizing PM Modi.
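The keyframe step described above can be approximated in several ways. As a minimal sketch (not the tool used for this fact check), the helper below picks frame indices at fixed time intervals, which is a simpler stand-in for true keyframe or scene-change detection. The function names, the two-second interval, and the example frame counts are assumptions for illustration; extracting the actual images would require a video library, which is left out here.

```python
def sample_frame_indices(total_frames: int, fps: float,
                         every_seconds: float = 2.0) -> list:
    """Return frame indices spaced roughly `every_seconds` apart.

    This is fixed-interval sampling, an assumed simplification of
    keyframe extraction, not codec-level keyframe detection.
    """
    if total_frames <= 0 or fps <= 0 or every_seconds <= 0:
        return []
    # At least one frame per step, so the range always advances.
    step = max(1, round(fps * every_seconds))
    return list(range(0, total_frames, step))


def index_to_timestamp(index: int, fps: float) -> str:
    """Convert a frame index to an MM:SS.ss timestamp for manual review."""
    seconds = index / fps
    minutes, secs = divmod(seconds, 60)
    return f"{int(minutes):02d}:{secs:05.2f}"
```

For a hypothetical 10-second clip at 30 frames per second, `sample_frame_indices(300, 30.0)` selects one frame every two seconds. Each selected frame could then be exported as an image and submitted to a reverse image search by hand.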

Taking a cue from this, we ran a keyword search to find the full video of the interview. We found many videos uploaded by media outlets, but none of them shows him criticizing PM Modi as claimed in the viral video.

In the interview, Ranveer Singh shared his feelings about Lord Shiva, his opinions on the city, and his view of Prime Minister Modi's efforts to keep the history and heritage of Varanasi alive, as well as the city's ongoing development projects. The discrepancy in the viral clip is evident on close inspection: the lip movements are not synchronized with the words we hear. In the original video, by contrast, the lips are in perfect synchronization with the audio. The lack of evidence for the claim and the discrepancies in the video show that it was edited to misrepresent the original interview of Bollywood actor Ranveer Singh. Hence, the claim is misleading and false.

Conclusion:

The video that claims to show Ranveer Singh criticizing PM Narendra Modi is not genuine. Our investigation shows that it was edited by changing the audio. The original footage shows Singh speaking positively about Varanasi and Modi's work. The lip-sync mismatches and the lack of supporting evidence highlight the danger of misinformation created through simple editing. Ultimately, the claim is false and misleading.

- Claim: A viral video featuring Ranveer Singh criticizing Prime Minister Narendra Modi and his government.

- Claimed on: X (formerly known as Twitter)

- Fact Check: Fake & Misleading

Related Blogs

In the rich history of humanity, the advent of artificial intelligence (AI) has added a new, delicate aspect. This promising technological advancement has the potential to either enrich the nest of our society or destroy it entirely. The latest straw in this complex nest is generative AI, a frontier teeming with both potential and perils. It is a realm where the ethereal concepts of cyber peace and resilience are not just theoretical constructs but tangible necessities.

The spectre of generative AI looms large over the digital landscape, casting a long shadow on the sanctity of data privacy and the integrity of political processes. The seeds of this threat were sown in the fertile soil of the Cambridge Analytica scandal of 2018, a watershed moment that unveiled the extent to which personal data could be harvested and utilized to influence electoral outcomes. However, despite the indignation, the scandal resulted in only meagre alterations to the modus operandi of digital platforms.

Fast forward to the present day, and the spectre has only grown more ominous. A recent report by Human Rights Watch has shed light on the continued exploitation of data-driven campaigning in Hungary's re-election of Viktor Orbán. The report paints a chilling picture of political parties leveraging voter databases for targeted social media advertising, with the ruling Fidesz party even resorting to the unethical use of public service data to bolster its voter database.

The Looming Threat of Disinformation

As we stand on the precipice of 2024, a year that will witness over 50 countries holding elections, the advancements in generative AI could exponentially amplify the ability of political campaigns to manipulate electoral outcomes. This is particularly concerning in countries where information disparities are stark, providing fertile ground for the seeds of disinformation to take root and flourish.

The media, the traditional watchdog of democracy, has already begun to sound the alarm about the potential threats posed by deepfakes and manipulative content in the upcoming elections. The limited use of generative AI in disinformation campaigns has raised concerns about the enforcement of policies against generating targeted political materials, such as those designed to sway specific demographic groups towards a particular candidate.

Yet, while the threat of bad actors using AI to generate and disseminate disinformation is real and present, there is another dimension that has largely remained unexplored: the intimate interactions with chatbots. These digital interlocutors, when armed with advanced generative AI, have the potential to manipulate individuals without any intermediaries. The more data they have about a person, the better they can tailor their manipulations.

Root of the Cause

To fully grasp the potential risks, we must journey back 30 years to the birth of online banner ads. The success of the first-ever banner ad for AT&T, which boasted an astounding 44% click rate, birthed a new era of digital advertising. This was followed by the advent of mobile advertising in the early 2000s. Since then, companies have been engaged in a perpetual quest to harness technology for manipulation, blurring the lines between commercial and political advertising in cyberspace.

Regrettably, the safeguards currently in place are woefully inadequate to prevent the rise of manipulative chatbots. Consider the case of Snapchat's My AI generative chatbot, which ostensibly assists users with trivia questions and gift suggestions. Unbeknownst to most users, their interactions with the chatbot are algorithmically harvested for targeted advertising. While this may not seem harmful in its current form, the profit motive could drive it towards more manipulative purposes.

If companies deploying chatbots like My AI face pressure to increase profitability, they may be tempted to subtly steer conversations to extract more user information, providing more fuel for advertising and higher earnings. This kind of nudging is not clearly illegal in the U.S. or the EU, even after the AI Act comes into effect. The market size of AI in India is projected to touch US$4.11bn in 2023.

Taking this further, chatbots may be inclined to guide users towards purchasing specific products or even influencing significant life decisions, such as religious conversions or voting choices. The legal boundaries here remain unclear, especially when manipulation is not detectable by the user.

The Crucial Dos and Don'ts

It is crucial to set rules and safeguards in order to manage the possible threats related to manipulative chatbots in the context of the general election in 2024.

First and foremost, candor and transparency are essential. Chatbots, particularly when employed for political or electoral matters, ought to make it clear to users what they are for and that they are automated. Transparency ensures that people know they are interacting with automated processes.

Second, getting user consent is crucial. Before collecting user data for any reason, including advertising or political profiling, users should be asked for their informed consent. Giving consumers easy ways to opt-in and opt-out gives them control over their data.

Furthermore, moral use is essential. It's crucial to create an ethics code for chatbot interactions that forbids manipulation, disseminating false information, and trying to sway users' political opinions. This guarantees that chatbots follow moral guidelines.

In order to preserve transparency and accountability, independent audits need to be carried out. Users might feel more confident knowing that chatbot behavior and data collection procedures are regularly audited by impartial third parties to ensure compliance with legal and ethical norms.

There are also important "don'ts" to take into account. Coercion and manipulation ought to be outlawed completely. Chatbots should refrain from using misleading or manipulative approaches to sway users' political opinions or religious convictions.

Another hazard to watch out for is unlawful data collection. Businesses must obtain consumers' express agreement before collecting personal information, and they must not sell or share this information for political reasons.

At all costs, one should steer clear of fake identities. Impersonating people or political figures is not something chatbots should do because it can result in manipulation and false information.

It is essential to be impartial. Bots shouldn't advocate for or take part in political activities that give preference to one political party over another. In encounters, impartiality and equity are crucial.

Finally, one should refrain from using invasive advertising techniques. Chatbots should ensure that advertising tactics comply with legal norms by refraining from displaying political advertisements or messaging without explicit user agreement.

Present Scenario

As we approach the critical 2024 elections and generative AI tools proliferate faster than regulatory measures can keep pace, companies must take an active role in building user trust, transparency, and accountability. This includes comprehensive disclosure about a chatbot's programmed business goals in conversations, ensuring users are fully aware of the chatbot's intended purposes.

To address the regulatory gap, stronger laws are needed. Both the EU AI Act and analogous laws across jurisdictions should be expanded to address the potential for manipulation in various forms. This effort should be driven by public demand, as the interests of lawmakers have been influenced by intensive Big Tech lobbying campaigns.

At present, India doesn’t have any specific laws pertaining to AI regulation. The Ministry of Electronics and Information Technology (MeitY) is the executive body responsible for AI strategies and is working towards a policy framework for AI. NITI Aayog has presented seven principles for responsible AI, which include equality, inclusivity, safety and dependability, privacy, transparency, accountability, and protection of positive human values.

Conclusion

We are at a pivotal juncture in history. As generative AI gains more power, we must proactively establish effective strategies to protect our privacy, rights and democracy. The public's waning confidence in Big Tech and the lessons learned from the techlash underscore the need for stronger regulations that hold tech companies accountable. Let's ensure that the power of generative AI is harnessed for the betterment of society and not exploited for manipulation.

References

McCallum, S. (2022, December 23). Meta settles Cambridge Analytica scandal case for $725m. BBC News. https://www.bbc.com/news/technology-64075067

Hungary: Data misused for political campaigns. (2022, December 1). Human Rights Watch. https://www.hrw.org/news/2022/12/01/hungary-data-misused-political-campaigns

Statista. (n.d.). Artificial Intelligence - India | Statista Market forecast. https://www.statista.com/outlook/tmo/artificial-intelligence/india

Introduction

In the recent swirl of misinformation surrounding the 'Delhi Chalo' farmers' protest, a photo circulating on social media that depicts modified tractors is being misrepresented as part of the protest narrative. The photo, ostensibly showing a phalanx of modified tractors, has been making the rounds on social media platforms, falsely tethered to the ongoing protests. The image, accompanied by a headline suggesting a mechanical metamorphosis to resist police barricades, was allegedly published by a news agency. However, beneath the surface of this viral phenomenon lies a more complex and fabricated reality.

The Movement

The 'Delhi Chalo' movement, a clarion call that resonated with thousands of farmers from the fertile plains of Punjab, the verdant fields of Haryana, and the sprawling expanses of Uttar Pradesh, has been a testament to the agrarian community's demand for assured crop prices and legal guarantees for the Minimum Support Price (MSP). The protest, which has seen the fortification of borders and the chaos at the Punjab-Haryana border on February 13, 2024, has become a crucible for the farmers' unyielding spirit.

Yet, amidst this backdrop of civil demonstration and discourse, a nefarious narrative of misinformation has taken root. The viral image, which has been shared with the fervour of wildfire, was accompanied by a screenshot of an article allegedly published by a news agency. This article, dated February 11, 2024, quoted an anonymous official who claimed that intelligence agencies had alerted the police to the protesters' plans to outfit tractors with hydraulic tools. The implication was clear: these machines had been transformed into battering rams against the bulwark of law enforcement.

The Pursuit of Truth

However, the India TV Fact Check team, in their relentless pursuit of truth, unearthed that the viral photo of these so-called modified tractors is nothing but a chimerical creation, a figment of artificial intelligence. Visual discrepancies betrayed its AI-generated nature.

This is not the first time that misinformation has loomed over the farmers' protest. Previous instances, including a viral video of a modified tractor, have been debunked by the same fact-checking team. These efforts are a bulwark against the tide of false narratives that seek to muddy the waters of public understanding.

The claim that the photo depicted modified tractors intended for use in the ‘Delhi Chalo’ farmers' protest rally in Delhi on February 13, 2024, was a mirage.

The Fact Check

OpIndia, in their article, clarified that the photo used was a representative image created by AI and not a real photograph. To further scrutinize this viral photo, the HIVE AI detector tool was employed, indicating a 99.4% likelihood of the image being AI-generated. Thus, the claim made in the post was misleading.

The viral photo claiming that farmers had modified their tractors to avoid tear gas shells and remove barricades put up by the police during the rally was a digital illusion. The internet has become a fertile ground for the rapid spread of misinformation, reaching millions in an instant. Social media, with its complex algorithms, amplifies this spread, as any interaction, even those intended to debunk false information, inadvertently increases its reach. This phenomenon is exacerbated by 'echo chambers,' where users are exposed to a homogenous stream of content that reinforces their pre-existing beliefs, making it difficult to encounter and consider alternative perspectives.

Conclusion

The viral image depicting modified tractors for the ‘Delhi Chalo’ farmers' protest rally was a digital fabrication, a testament to the power of AI in creating convincing yet false narratives. As we navigate the labyrinth of information in the digital era, it is imperative to remain vigilant, to question the veracity of what we see and hear, and to rely on the diligent work of fact-checkers in discerning the truth. The mirage of modified machines serves as a stark reminder of the potency of misinformation and the importance of critical thinking in the age of artificial intelligence.

References

- https://www.indiatvnews.com/fact-check/fact-check-ai-generated-tractor-photo-misrepresented-delhi-chalo-farmers-protest-narrative-msp-police-barricades-punjab-haryana-uttar-pradesh-2024-02-15-917010

- https://factly.in/this-viral-image-depicting-modified-tractors-for-the-delhi-chalo-farmers-protest-rally-is-created-using-ai/

Introduction

In the digital realm of social media, Meta Platforms, the driving force behind Facebook and Instagram, faces intense scrutiny following The Wall Street Journal's investigative report. This exploration delves deeper into critical issues surrounding child safety on these widespread platforms, unravelling algorithmic intricacies, enforcement dilemmas, and the ethical maze surrounding monetisation features. Instances of "parent-managed minor accounts" leveraging Meta's subscription tools to monetise content featuring young individuals have raised eyebrows. While skirting the line of legality, this practice prompts concerns due to its potential appeal to adults and the associated inappropriate interactions. It's a nuanced issue demanding nuanced solutions.

Failed Algorithms

The very heartbeat of Meta's digital ecosystem, its algorithms, has come under intense scrutiny. These algorithms, designed to curate and deliver content, were found to be actively promoting accounts featuring explicit content to users with known pedophilic interests. The revelation sparks a crucial conversation about the ethical responsibilities tied to the algorithms shaping our digital experiences. Striking the right balance between personalised content delivery and safeguarding users is a delicate task.

While algorithms play a pivotal role in tailoring content to users' preferences, Meta needs to reevaluate the algorithms to ensure they don't inadvertently promote inappropriate content. Stricter checks and balances within the algorithmic framework can help prevent the inadvertent amplification of content that may exploit or endanger minors.

Major Enforcement Challenges

Meta's enforcement challenges have come to light as previously banned parent-run accounts resurface, gaining official verification and accumulating large followings. The struggle to remove associated backup profiles adds layers to concerns about the effectiveness of Meta's enforcement mechanisms. It underscores the need for a robust system capable of swift and thorough action against policy violators.

To enhance enforcement mechanisms, Meta should invest in advanced content detection tools and employ a dedicated team for consistent monitoring. This proactive approach can mitigate the risks associated with inappropriate content and reinforce a safer online environment for all users.

The financial dynamics of Meta's ecosystem expose concerns about the exploitation of videos that are eligible for cash gifts from followers. The decision to expand the subscription feature before implementing adequate safety measures poses ethical questions. Prioritising financial gains over user safety risks tarnishing the platform's reputation and trustworthiness. A re-evaluation of this strategy is crucial for maintaining a healthy and secure online environment.

To address safety concerns tied to monetisation features, Meta should consider implementing stricter eligibility criteria for content creators. Verifying the legitimacy and appropriateness of content before allowing it to be monetised can act as a preventive measure against the exploitation of the system.

Meta's Response

In the aftermath of the revelations, Meta's spokesperson, Andy Stone, took centre stage to defend the company's actions. Stone emphasised ongoing efforts to enhance safety measures, asserting Meta's commitment to rectifying the situation. However, critics argue that Meta's response lacks the decisive actions required to align with industry standards observed on other platforms. The debate continues over the delicate balance between user safety and the pursuit of financial gain. A more transparent and accountable approach to addressing these concerns is imperative.

To rebuild trust and credibility, Meta needs to implement concrete and visible changes. This includes transparent communication about the steps taken to address the identified issues, continuous updates on progress, and a commitment to a user-centric approach that prioritises safety over financial interests.

The formation of a task force in June 2023 was a commendable step to tackle child sexualisation on the platform. However, the effectiveness of these efforts remains limited. Persistent challenges in detecting and preventing potential child safety hazards underscore the need for continuous improvement. Legislative scrutiny adds an extra layer of pressure, emphasising the urgency for Meta to enhance its strategies for user protection.

To overcome ongoing challenges, Meta should collaborate with external child safety organisations, experts, and regulators. Open dialogues and partnerships can provide valuable insights and recommendations, fostering a collaborative approach to creating a safer online environment.

Drawing a parallel with competitors such as Patreon and OnlyFans reveals stark differences in child safety practices. While Meta grapples with its challenges, these platforms maintain stringent policies against certain content involving minors. This comparison underscores the need for universal industry standards to safeguard minors effectively. Collaborative efforts within the industry to establish and adhere to such standards can contribute to a safer digital environment for all.

To align with industry standards, Meta should actively participate in cross-industry collaborations and adopt best practices from platforms with successful child safety measures. This collaborative approach ensures a unified effort to protect users across various digital platforms.

Conclusion

Navigating the intricate landscape of child safety concerns on Meta Platforms demands a nuanced and comprehensive approach. The identified algorithmic failures, enforcement challenges, and controversies surrounding monetisation features underscore the urgency for Meta to reassess and fortify its commitment to being a responsible digital space. As the platform faces this critical examination, it has an opportunity to not only rectify the existing issues but to set a precedent for ethical and secure social media engagement.

This comprehensive exploration aims not only to shed light on the existing issues but also to provide a roadmap for Meta Platforms to evolve into a safer and more responsible digital space. The responsibility lies not just in acknowledging shortcomings but in actively working towards solutions that prioritise the well-being of its users.

References

- https://timesofindia.indiatimes.com/gadgets-news/instagram-facebook-prioritised-money-over-child-safety-claims-report/articleshow/107952778.cms

- https://www.adweek.com/blognetwork/meta-staff-found-instagram-tool-enabled-child-exploitation-the-company-pressed-ahead-anyway/107604/

- https://www.tbsnews.net/tech/meta-staff-found-instagram-subscription-tool-facilitated-child-exploitation-yet-company